According to a report shared by AI commentator Rohan Pandey, Anthropic is in the process of establishing a corporate Political Action Committee (PAC). The reported aim is to influence the development of artificial intelligence policy in the United States, with a focus on the legislative landscape ahead of the 2026 midterm elections.

What Happened

The report, based on sources cited by Pandey, indicates that Anthropic is taking a formal step into electoral politics by creating a corporate PAC. A PAC is a legal vehicle that lets corporations, unions, or other groups pool voluntary contributions from their members or employees and use those funds to support or oppose candidates and ballot measures.

For Anthropic, a leading AI safety and research company, this marks a strategic shift from technical advocacy and white papers to direct financial involvement in the political process. The timing is explicitly tied to the upcoming midterm elections, a period of intense political fundraising and policy positioning.

Context: The AI Lobbying Landscape

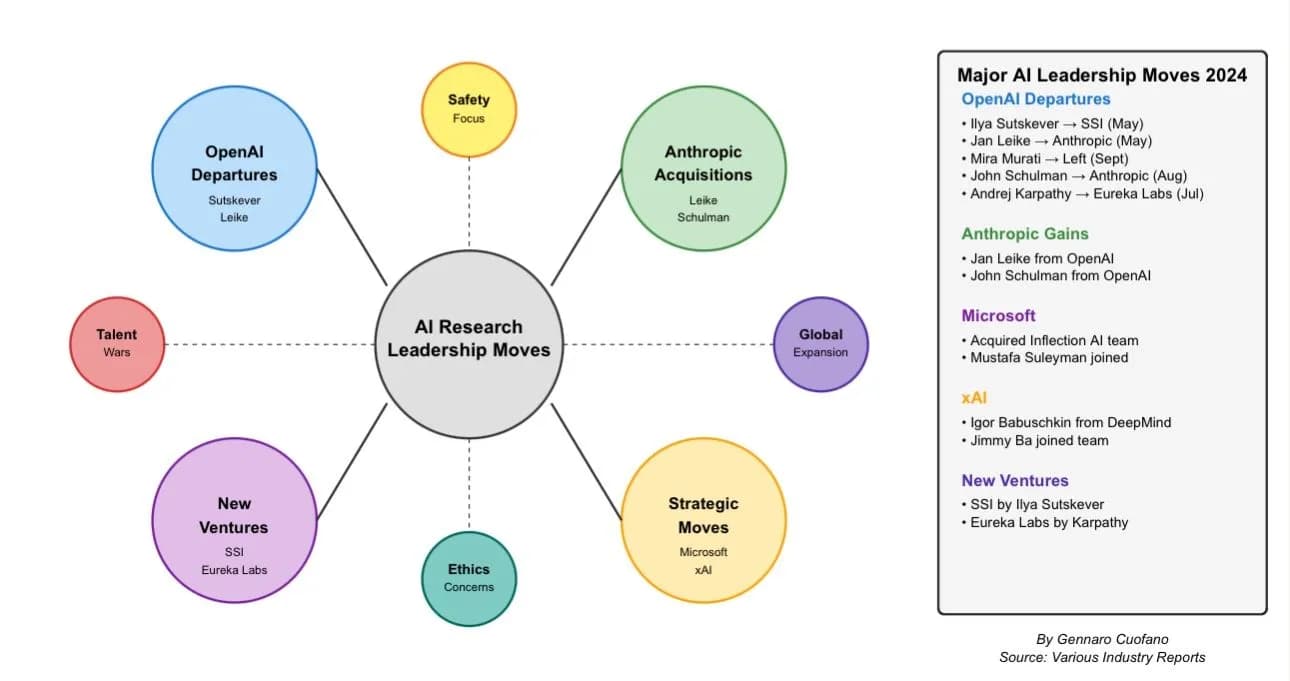

The formation of an Anthropic PAC would place it among a growing cohort of major technology firms actively seeking to shape AI governance. OpenAI launched its own PAC in late 2024, and tech giants like Google, Microsoft, and Meta have long maintained sophisticated lobbying operations in Washington, D.C.

The core battleground is the evolving framework for AI regulation. Key issues include safety standards for advanced models, liability frameworks, copyright and data usage, export controls on AI chips, and funding for public AI research. With comprehensive federal legislation like the proposed AI Safety and Innovation Act still under debate, companies are investing heavily to ensure their perspectives are heard by lawmakers who will draft the rules.

Anthropic’s stated corporate mission centers on building reliable, interpretable, and steerable AI systems. Its move into direct political spending suggests a recognition that its long-term operating environment will be determined as much in congressional hearings as in research labs.

What This Means in Practice

- Direct Advocacy: A PAC allows Anthropic to financially support candidates who align with its policy views on AI, potentially giving it more direct access and influence.

- Policy Specifics: While not detailed in the initial report, Anthropic’s lobbying priorities likely include advocating for risk-based regulatory approaches that differentiate between frontier models and narrower AI applications, promoting public-private partnerships for safety research, and influencing definitions of key concepts like “AI safety” and “catastrophic risk.”

- Competitive Dynamics: This move institutionalizes Anthropic’s political efforts, putting it on a more level playing field with its well-resourced competitors in the policy arena.

gentic.news Analysis

This development is a logical, if significant, next step in the corporatization of AI safety advocacy. Anthropic has transitioned from a research collective focused on technical AI safety to a well-funded commercial entity backed by Amazon and Google. A corporate PAC is a standard tool for a company of its scale and regulatory exposure, particularly in a sector facing existential policy decisions.

The reported timing—"before the midterms"—is critical. The 2026 elections could reshape key congressional committees overseeing technology. By establishing a PAC now, Anthropic is building a war chest and relationships to engage in the 2025-2026 election cycle, seeking to influence not just current legislation but also the composition of the next Congress.

This aligns with a broader trend we identified in our October 2025 analysis, "The Beltway AI Boom: How Lobbying Spend Skyrocketed 300% in 18 Months." AI companies are no longer just talking to each other at conferences; they are engaged in a high-stakes battle for narrative and regulatory control in national capitals. Anthropic’s move suggests that even mission-driven AI labs now view direct political investment as essential to their survival and influence.

It also creates an interesting tension. Anthropic’s founding principles emphasize long-term benefit to humanity. Navigating the pragmatic, often transactional world of political campaigning through a PAC will test its ability to maintain that alignment in the face of short-term political necessities.

Frequently Asked Questions

What is a corporate PAC?

A corporate Political Action Committee (PAC) is a legal entity that a company can form to raise voluntary contributions from its employees, shareholders, or other connected individuals. The PAC can then donate those funds to political candidates or parties, subject to federal contribution limits. Because U.S. law bars corporations from contributing their own treasury funds directly to federal candidates, a PAC is a primary tool for corporations to take part in the U.S. political fundraising system.

Why would an AI company like Anthropic need a PAC?

As AI moves from research to widespread deployment, it faces increasing scrutiny and potential regulation from governments. A PAC allows Anthropic to support lawmakers who understand or are sympathetic to its technical and policy positions, giving it a seat at the table when laws are written. It is both a defensive and an offensive strategy for shaping the regulatory environment in which the company will operate.

How does this compare to what other AI companies are doing?

Anthropic would be following a path already taken by OpenAI, which formed a PAC in late 2024. Larger tech incumbents like Google, Microsoft, Amazon, and Meta have had established lobbying operations and PACs for years. The difference is that Anthropic’s entire public identity is built around AI safety and responsible development, so its political engagements will be scrutinized for consistency with those principles.

Does this mean Anthropic is moving away from technical research?

Not necessarily. This is better understood as an expansion of its strategy. Anthropic continues to be a leading publisher of AI safety research. The PAC represents a parallel track of engagement, acknowledging that the future of AI will be shaped by both technical breakthroughs and political decisions. Most large corporations in regulated industries (e.g., pharmaceuticals, finance) maintain both strong R&D divisions and government affairs teams.