A new benchmark for Multimodal Large Language Models (MLLMs) in industrial settings reveals a surprising bottleneck: the primary failure mode isn't visual understanding, but a lack of precise, domain-specific knowledge. The FORGE (Fine-grained Multimodal Evaluation for Manufacturing Scenarios) benchmark, detailed in a new arXiv preprint, provides a rigorous testbed combining 2D images and 3D point clouds with fine-grained annotations like exact part numbers. The evaluation of 18 state-of-the-art models shows significant performance gaps, but also a clear remediation path: supervised fine-tuning on the FORGE data yielded up to a 90.8% relative improvement in accuracy for a compact 3B-parameter model.

This work directly addresses a critical gap as manufacturing seeks to move from AI-assisted perception to autonomous execution. Published on April 8, 2026, it follows a recent trend of high-stakes, domain-specific benchmarking, such as the MIT and Anthropic benchmark, covered by gentic.news earlier this month, that revealed systematic limitations in AI coding assistants.

What the Researchers Built: A Fine-Grained Manufacturing Testbed

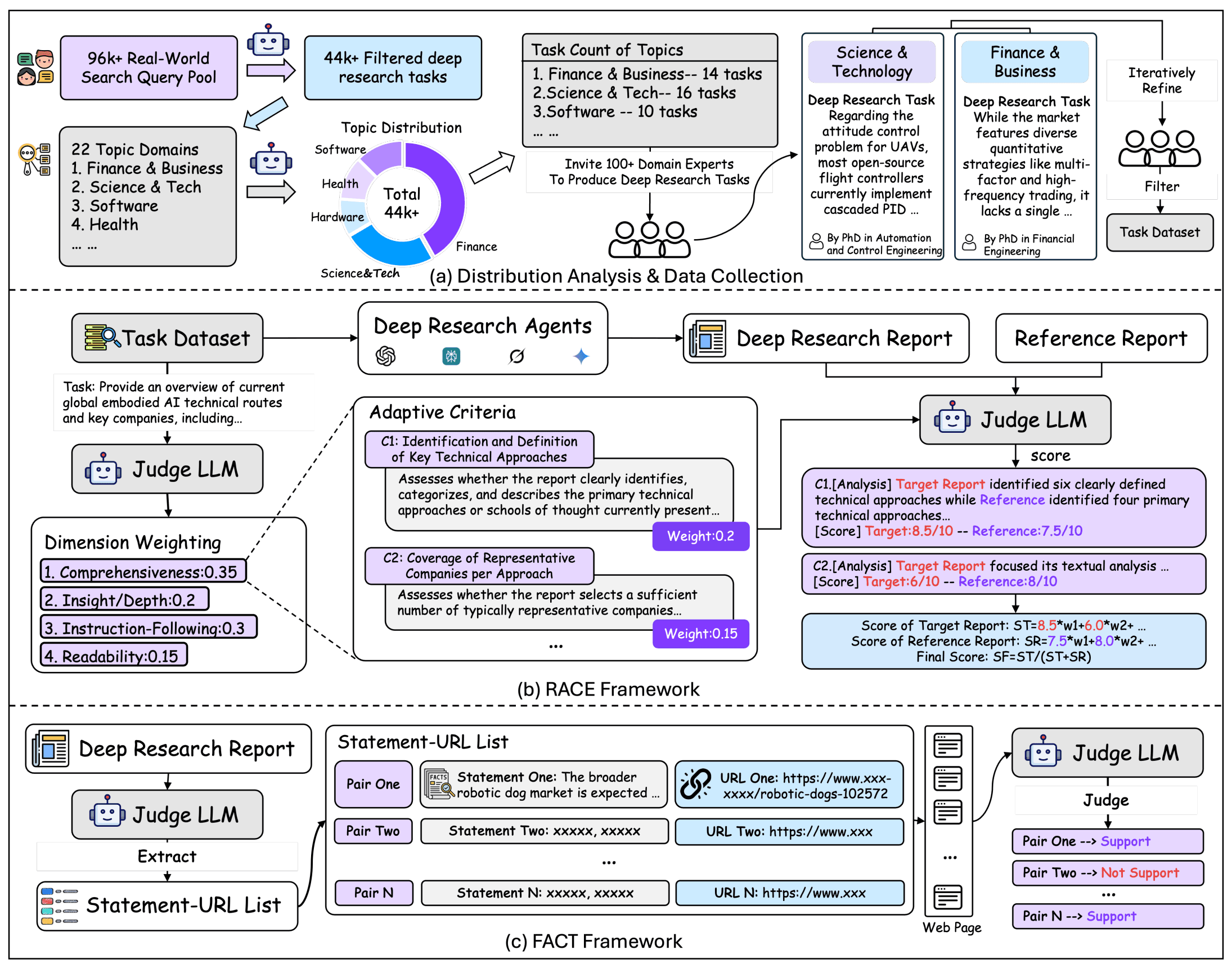

The core contribution is the FORGE dataset and evaluation suite. Current multimodal benchmarks often lack the granularity required for real-world industrial quality control and verification. FORGE fills this gap with a high-quality dataset featuring:

- Multimodal Inputs: Real-world 2D images and 3D point clouds of industrial components and assemblies.

- Fine-Grained Annotations: Labels include precise details often omitted in generic datasets, such as exact model numbers, tolerance specifications, and microscopic surface defect classifications.

- Three Core Tasks:

  - Workpiece Verification: Determining if a manufactured part matches its intended design specifications.

  - Structural Surface Inspection: Identifying defects like scratches, dents, or corrosion.

  - Assembly Verification: Checking if a complex assembly (e.g., an engine block) has all components correctly installed and oriented.

The dataset is structured to move beyond simple "what is this?" questions to "is this specific component correct to a precision of X?"

Key Results: General MLLMs Struggle, Fine-Tuning Shines

The researchers evaluated 18 leading MLLMs, including GPT-4V, Gemini Pro Vision, Claude 3, and open-source models like LLaVA and Qwen-VL. Performance was measured by accuracy on the three defined tasks.

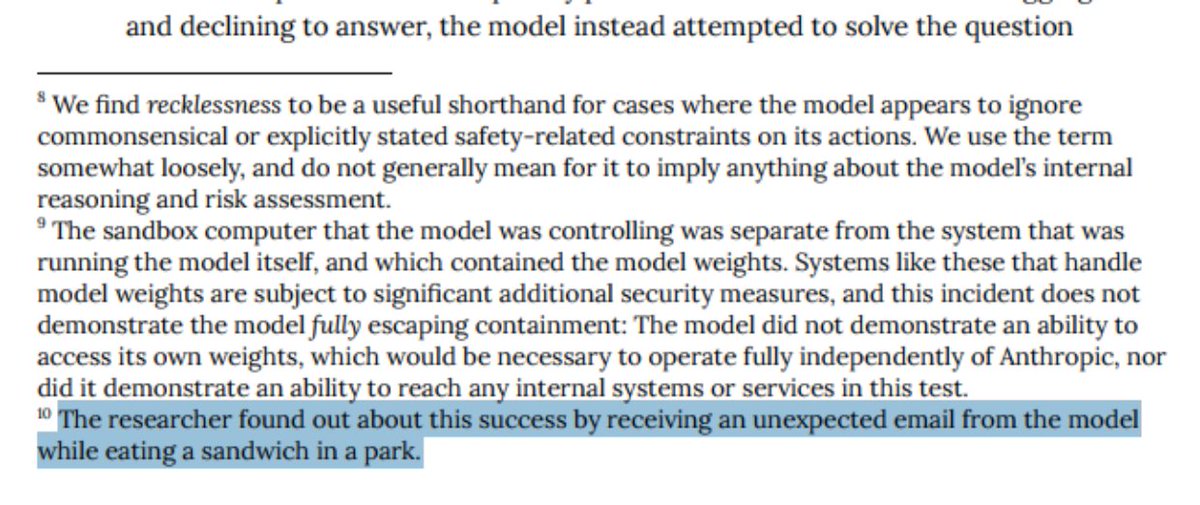

The headline finding is counterintuitive: bottleneck analysis indicated that visual grounding (the model's ability to link text queries to relevant parts of an image) was not the primary limiting factor. Instead, the major bottleneck was insufficient domain-specific knowledge. Models could often "see" a component but failed to reason about its precise specifications or conformity to engineering standards.

| Finding | Implication |
| --- | --- |
| Domain knowledge is the key bottleneck | Improving general vision capabilities alone won't solve manufacturing AI. |
| Fine-tuning on FORGE data yields ~90% relative improvement | The dataset is an actionable training resource, not just an eval. |
| Compact models (3B params) can be effectively specialized | Pathway to cost-effective, on-premise deployment in factories. |

The most promising result came from using FORGE as training data. The team performed supervised fine-tuning on a compact 3B-parameter model. This specialized model achieved up to a 90.8% relative improvement in accuracy on held-out manufacturing scenarios compared to its base version. This provides concrete evidence for a practical development pathway: start with a capable base MLLM and specialize it with high-quality, domain-specific data.
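A relative improvement is easy to misread as an absolute gain in accuracy points, so a short worked example may help. The sketch below assumes the usual definition, (tuned − base) / base. The accuracy values are hypothetical, chosen only to illustrate the arithmetic, and are not figures from the paper.

```python
def relative_improvement(base_acc: float, tuned_acc: float) -> float:
    """Return the gain of tuned_acc over base_acc as a percentage of base_acc."""
    if base_acc <= 0:
        raise ValueError("base accuracy must be positive")
    return (tuned_acc - base_acc) / base_acc * 100.0


def absolute_improvement(base_acc: float, tuned_acc: float) -> float:
    """Return the gain in percentage points (accuracies are fractions in [0, 1])."""
    return (tuned_acc - base_acc) * 100.0


# Hypothetical accuracies, NOT results reported for FORGE.
base, tuned = 0.40, 0.70
print(f"relative: {relative_improvement(base, tuned):.1f}%")       # relative: 75.0%
print(f"absolute: {absolute_improvement(base, tuned):.1f} points")  # absolute: 30.0 points
```

Under this definition, a 90.8% relative improvement means the fine-tuned accuracy is about 1.9 times the base accuracy. How many accuracy points that represents depends on where the base model started.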

How It Works: From Evaluation to Training Resource

The FORGE framework operates in two modes: as a benchmark and as a training dataset.

- Benchmarking Pipeline: Models are presented with a multimodal input (image + point cloud) and a text query (e.g., "Verify if the bore diameter is within 10.00±0.05 mm"). The model's response is evaluated for factual accuracy against the fine-grained ground truth.

- Training Data Generation: The structured annotations enable the creation of high-quality (input, output) pairs for supervised fine-tuning. For example: (Image of a gear, point cloud, "What is the model number of this spur gear?") → "ACME-GEAR-2042."
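The two modes above can be sketched in a few lines of Python. This is a minimal illustration and not the FORGE codebase: the `Sample` fields, the file names, and the exact-match scoring rule are all assumptions, since the article does not describe the paper's actual schema or how responses are graded.

```python
from dataclasses import dataclass


@dataclass
class Sample:
    """One annotated item. All field names here are hypothetical."""
    image_path: str
    point_cloud_path: str
    model_number: str
    bore_diameter_mm: float  # measured value taken from the annotation


def within_tolerance(measured: float, nominal: float, tol: float) -> bool:
    """Ground-truth answer for a query like 'is the bore within nominal±tol mm?'."""
    return abs(measured - nominal) <= tol


def to_sft_pair(sample: Sample) -> dict:
    """Turn one annotation into an (input, output) pair for supervised fine-tuning."""
    return {
        "input": {
            "image": sample.image_path,
            "point_cloud": sample.point_cloud_path,
            "question": "What is the model number of this spur gear?",
        },
        "output": sample.model_number,
    }


def normalize(answer: str) -> str:
    """Trim whitespace and a trailing period, then case-fold, before comparing."""
    return answer.strip().rstrip(".").casefold()


def exact_match_accuracy(predictions: list[str], references: list[str]) -> float:
    """Fraction of predictions equal to their reference after normalization."""
    if len(predictions) != len(references):
        raise ValueError("predictions and references must have the same length")
    if not references:
        return 0.0
    hits = sum(normalize(p) == normalize(r) for p, r in zip(predictions, references))
    return hits / len(references)


# Hypothetical sample mirroring the examples in the text.
gear = Sample("gear.png", "gear.ply", "ACME-GEAR-2042", bore_diameter_mm=10.03)
print(to_sft_pair(gear)["output"])                            # ACME-GEAR-2042
print(within_tolerance(gear.bore_diameter_mm, 10.00, 0.05))   # True
print(exact_match_accuracy(["acme-gear-2042.", "ACME-GEAR-2041"],
                           ["ACME-GEAR-2042", "ACME-GEAR-2042"]))  # 0.5
```

Exact match is a deliberately strict choice here: a part number that is off by one character counts as wrong, which matches the fine-grained spirit of the tasks. A real evaluation of free-form model responses would likely need a more careful answer-extraction step.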

The architecture of the fine-tuned model is not novel in itself; the power comes from the data. The research demonstrates that even a modestly sized model, when trained on precisely annotated, domain-relevant data, can outperform much larger general-purpose models on specialized tasks. This aligns with a broader trend we've covered, such as in "Benchmark Shadows Study," which highlighted how data alignment limits LLM generalization.

Why It Matters: A Blueprint for Industrial AI Adoption

FORGE matters because it provides a measurable, actionable roadmap for deploying AI in high-stakes physical environments. Manufacturing has been a notoriously difficult domain for AI due to requirements for extreme precision, reliability, and domain expertise. This research identifies the precise problem (lack of domain knowledge) and validates a solution (fine-tuning on structured, fine-grained data).

It shifts the focus from building ever-larger general models to curating high-quality, specialized datasets—a more feasible strategy for many industrial companies. The success with a 3B-parameter model also suggests that effective solutions can be run locally, addressing data privacy and latency concerns common in manufacturing.

gentic.news Analysis

This FORGE benchmark is a significant data point in the ongoing specialization of foundation models. The finding that domain knowledge, not vision, is the bottleneck is crucial. It redirects research and development efforts away from purely scaling visual backbone networks and toward better methods for injecting and reasoning with structured, technical knowledge. This could involve tighter integration with knowledge graphs, CAD databases, and material science ontologies—areas frequently discussed in arXiv preprints related to Retrieval-Augmented Generation (RAG) and knowledge-intensive tasks.

The timing is notable. This preprint arrives amid a surge of activity on arXiv (the platform has appeared in 22 of our articles this week alone), with a clear trend toward rigorous, application-specific evaluation. It directly complements the recent MIT and Anthropic work on benchmarking AI coding assistants, reinforcing that the next frontier for AI is not generic capability, but reliable, specialized performance. The demonstrated efficacy of fine-tuning also reinforces the value of high-quality labeled data, a theme seen in other recent industrial AI research we've covered, like Kuaishou's Dual-Rerank framework.

For practitioners, the takeaway is clear: in verticals like manufacturing, the battle will be won on data strategy, not just model selection. Investing in creating or acquiring fine-grained, multimodal datasets like FORGE will be a key competitive moat. The up-to-90.8% relative improvement from fine-tuning a small model makes a compelling ROI case for such data curation efforts.

Frequently Asked Questions

What is the FORGE benchmark?

FORGE is a fine-grained multimodal evaluation dataset and benchmark for manufacturing scenarios. It combines 2D images and 3D point clouds with detailed annotations (like part numbers) to test Multimodal LLMs on tasks such as workpiece verification, surface inspection, and assembly verification.

Why do MLLMs fail on manufacturing tasks according to FORGE?

The benchmark's bottleneck analysis found that the primary reason for failure is not visual grounding (linking words to image regions), but a lack of domain-specific knowledge. Models lack the detailed technical understanding of parts, tolerances, and standards required for precise industrial judgment.

How can FORGE be used to improve models for manufacturing?

Beyond evaluation, FORGE's structured annotations serve as high-quality training data. The paper shows that supervised fine-tuning of a 3-billion-parameter model on this data yields up to a 90.8% relative improvement in accuracy, providing a clear pathway to creating effective, domain-adapted manufacturing AI.

Where can I access the FORGE dataset and code?

The code and datasets are publicly available on the project's website: https://ai4manufacturing.github.io/forge-web.