Deploying large multimodal models at scale is bottlenecked by token-based pricing, where every text token—including those in system prompts, instructions, and context—adds to the bill. A new research paper, "Token-Efficient Multimodal Reasoning via Image Prompt Packaging," introduces a straightforward but systematic approach to this problem: take the text you'd normally send as tokens, render it into an image, and send that image instead.

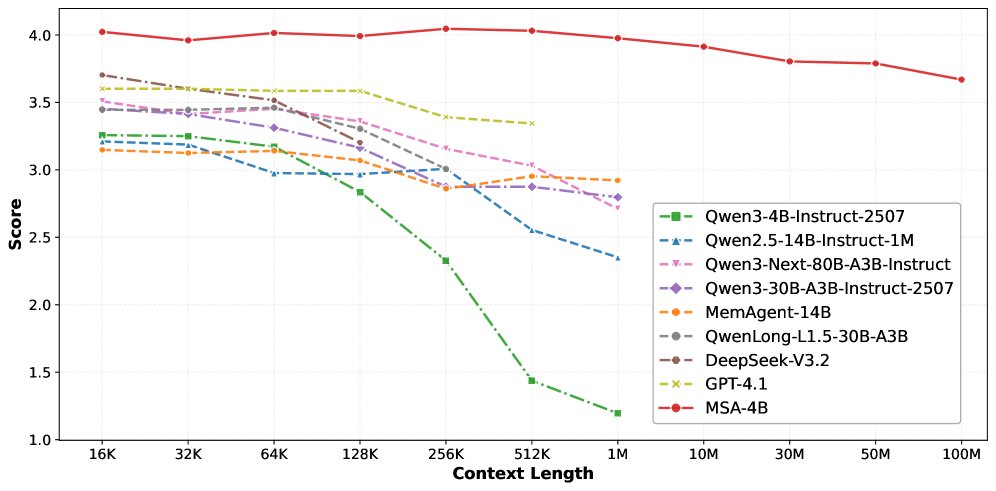

The method, called Image Prompt Packaging (IPPg), treats visual encoding as a first-class variable in system design. By benchmarking across five datasets, three frontier models (GPT-4.1, GPT-4o, Claude 3.5 Sonnet), and two task families (visual question answering and code generation), the researchers provide a rare, quantitative characterization of how visual prompting strategies actually affect both cost and accuracy in production-like settings.

What the Researchers Built

Image Prompt Packaging is a prompting paradigm, not a model architecture. The core idea is to convert structured textual information—like database schemas, long instructions, or code context—into a rasterized image that is then presented to a vision-language model alongside other visual inputs. This directly reduces the number of text tokens consumed per API call.

The process involves:

- Text Extraction & Structuring: Identifying the textual components of a prompt that are candidates for visual embedding (e.g., a SQL schema, a lengthy problem description).

- Visual Rendering: Using standard libraries (like PIL in Python) to render the text into an image. The paper explores a 125-configuration ablation study on rendering parameters like font, size, color, layout, and background.

- Multimodal Prompt Assembly: Combining the generated text-image with any other necessary images (e.g., charts, diagrams) and a minimal text instruction into a single multimodal prompt for the model.

The research is fundamentally an empirical cost-performance analysis. The team derived a cost formulation that decomposes savings by token type (input vs. output, text vs. image), providing a framework for engineers to estimate potential savings for their specific use cases.

Key Results: Cost Savings and Accuracy Trade-offs

The headline finding is significant cost reduction, but with a critical, model-dependent caveat on accuracy.

Cost Reduction:

IPPg achieved inference cost reductions of 35.8% to 91.0% across the evaluated benchmarks. Token compression reached up to 96%—meaning that 96% of the text tokens that would have been used in a standard prompt were eliminated by moving the information into the image channel.

Accuracy Outcomes (Model-Dependent):

Performance was not uniformly preserved. The results create a clear taxonomy of which models and tasks benefit.

Key Insight: For schema-structured tasks (like converting a natural language question into SQL given a table schema), IPPg was highly effective. The visual representation of the schema seemed to aid GPT-4.1's reasoning. Conversely, for tasks requiring precise optical character recognition (OCR) or spatial reasoning, accuracy degraded, especially for Claude 3.5.

The rendering ablation study proved that visual encoding choices are not trivial. Variations in font, layout, and style caused accuracy shifts of 10–30 percentage points, underscoring that "how you render the text" is a major engineering lever.

How It Works: The Technical Mechanism

From the model's perspective, IPPg changes the modality mix of the input. Instead of processing a long text sequence, the model uses its vision encoder to parse the text from the image. This transfers computational load from the language model's token-processing pathway to the vision encoder's image-processing pathway.

The cost savings arise because multimodal API pricing is typically structured as:Cost = (Text Tokens * Text Price) + (Image Tokens * Image Price)

Image tokens are usually counted as a fixed number based on resolution (e.g., a 1024x1024 image might be 765 tokens), regardless of how much text is packed into it. Therefore, embedding 10,000 characters of text into a single image can replace thousands of text tokens with a few hundred image tokens.

The paper's systematic error analysis identified primary failure modes:

- Spatial Reasoning: Models struggled when the task required understanding the relative position of text elements in the image.

- Non-English Inputs: Rendering and subsequent OCR accuracy fell for characters outside the typical Latin alphabet.

- Character-Sensitive Operations: Tasks like exact code generation or mathematical formula parsing, where a single misread character (e.g.,

lvs.1) causes failure, were vulnerable.

Why It Matters: A Practical Tool with Clear Boundaries

This work provides a immediately applicable, code-level technique for engineers building with multimodal models. It moves visual prompting from an ad-hoc trick to a characterized method with known trade-offs.

For practitioners, the takeaway is conditional:

- Use IPPg for: Transmitting large, structured reference text (schemas, documents, code context) to GPT-4.1, especially for code generation tasks. The cost savings are substantial and accuracy can improve.

- Avoid IPPg for: Tasks requiring fine-grained OCR, non-English text, or precise spatial reasoning with Claude 3.5 Sonnet, as the accuracy penalty may outweigh cost benefits.

The research also serves as a benchmark revealing model-specific strengths. GPT-4.1's robustness to this technique suggests superior visual text understanding in its vision encoder, while Claude 3.5's sensitivity highlights a potential area for improvement in future model iterations.

gentic.news Analysis

This paper, posted to arXiv on April 2, 2026, arrives amid intense focus on optimizing the cost of running large AI models in production. It directly addresses a pain point for developers using the very models—GPT-4.1, GPT-4o, Claude 3.5—that dominate our weekly coverage. The trend data is telling: Claude Code appeared in 65 articles this week, and a core challenge for tools like it is managing context cost effectively. This research provides a potential technique to reduce the token overhead of feeding large codebases or documentation to these coding agents.

The findings have intriguing competitive implications. The model-dependent results create a new, subtle dimension for comparison. While raw benchmark scores on standard vision tasks are one thing, a model's "token-efficiency robustness"—its ability to maintain accuracy under cost-saving prompt optimizations—is now a measurable operational metric. GPT-4.1's strong showing here could be leveraged as a practical advantage in developer marketing, contrasting with our April 5th article on locking in Claude Code access, which focused on pricing strategy.

Furthermore, the paper's deep dive into rendering parameters (font, layout, etc.) exposes a largely unexplored hyperparameter space for prompt engineering. This aligns with a broader shift we're seeing from simple text prompting toward engineered, multi-modal prompt chains, as hinted at in the recent integration of Claude with tools like Canva and Figma. The choice of how to visually represent information is now a legitimate system design decision, not just a cosmetic one.

Frequently Asked Questions

Can I use Image Prompt Packaging with the OpenAI API today?

Yes, technically. The method does not require any special API support. You can implement it by using an image generation library to render your text to a PNG or JPEG, then including that image in your API call to gpt-4-vision-preview or similar endpoints. The paper provides the empirical evidence for what savings and accuracy changes to expect.

Does this method work with open-source vision-language models?

The paper only tested proprietary frontier models (GPT-4.1, GPT-4o, Claude 3.5). The effectiveness on open-source models like LLaVA or Qwen-VL would depend entirely on the quality of their vision encoder's OCR capabilities and is an open question. It is likely less reliable given typical performance gaps in text understanding from images.

What's the main risk of using IPPg in production?

The primary risk is the introduction of silent errors. Because the model is reading text from an image, it may misread characters (e.g., confusing 'O' with '0') with high confidence. This is particularly dangerous for tasks like code generation or data extraction where exact correctness is required. The paper recommends rigorous validation on a task-specific basis before deployment.

How do I choose the best font and layout for rendering text?

The paper's ablation of 125 configurations found that simple, high-contrast, sans-serif fonts (like Arial) on a plain white background generally performed well. Avoiding stylized fonts, low contrast, or complex backgrounds minimized OCR errors. The researchers recommend running a small-scale ablation on your specific task and model to tune these parameters for optimal accuracy.