What Happened

Researchers at Kuaishou, the Chinese short-video platform with more than 400 million daily active users, have published a paper on arXiv proposing Dual-Rerank, a unified framework for industrial generative reranking. The work targets the challenges of deploying generative reranking at massive scale, where traditional score-and-sort methods fail to capture combinatorial dependencies between items.

The framework is built around what the authors call the "dual dilemma":

- Structural Trade-off: Autoregressive (AR) models capture sequential dependencies well but suffer from prohibitive latency in production environments. Non-autoregressive (NAR) models are efficient but lack dependency modeling capabilities.

- Optimization Gap: Supervised learning struggles to directly optimize whole-page utility metrics, while reinforcement learning (RL) faces instability issues in high-throughput data streams.
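The structural trade-off can be made concrete with a toy sketch. The scorer interfaces and numbers below are hypothetical and are not taken from the paper; they only illustrate why AR decoding needs one sequential step per slot while NAR decoding scores every slot in a single pass, at the cost of not seeing what was placed earlier.

```python
from typing import Callable, List, Sequence, Tuple

# Hypothetical interfaces (assumptions for illustration, not the paper's API).
ARScorer = Callable[[Sequence[str], str], float]           # (placed so far, candidate) -> score
NARScorer = Callable[[Sequence[str]], List[List[float]]]   # candidates -> scores[position][candidate]


def ar_decode(candidates: Sequence[str], score: ARScorer) -> Tuple[List[str], int]:
    """Greedy autoregressive decoding: each slot waits for the previous choice."""
    remaining = list(candidates)
    placed: List[str] = []
    sequential_steps = 0
    while remaining:
        sequential_steps += 1
        best = max(remaining, key=lambda item: score(placed, item))
        placed.append(best)
        remaining.remove(best)
    return placed, sequential_steps


def nar_decode(candidates: Sequence[str], score_all: NARScorer) -> Tuple[List[str], int]:
    """Non-autoregressive decoding: one scoring pass, then a cheap assignment."""
    scores = score_all(candidates)  # single model call for all positions
    used: set = set()
    placed: List[str] = []
    for position_scores in scores:
        free = [i for i in range(len(candidates)) if i not in used]
        best_idx = max(free, key=lambda i: position_scores[i])
        used.add(best_idx)
        placed.append(candidates[best_idx])
    return placed, 1


BASE = {"a": 0.9, "b": 0.8, "c": 0.5}  # toy relevance values


def toy_ar_score(placed: Sequence[str], item: str) -> float:
    # Toy dependency: "a" and "b" are near-duplicates, so showing them
    # back to back is penalised.
    penalty = 0.5 if placed and {placed[-1], item} == {"a", "b"} else 0.0
    return BASE[item] - penalty


def toy_nar_score(candidates: Sequence[str]) -> List[List[float]]:
    # Position-independent scores: the model cannot react to earlier slots.
    return [[BASE[c] for c in candidates] for _ in candidates]


print(ar_decode(["a", "b", "c"], toy_ar_score))    # (['a', 'c', 'b'], 3)
print(nar_decode(["a", "b", "c"], toy_nar_score))  # (['a', 'b', 'c'], 1)
```

In this toy setup the AR decoder separates the two near-duplicate items but needs three sequential steps, while the NAR decoder finishes in one pass and places them side by side.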

Technical Details

Dual-Rerank proposes two key technical solutions:

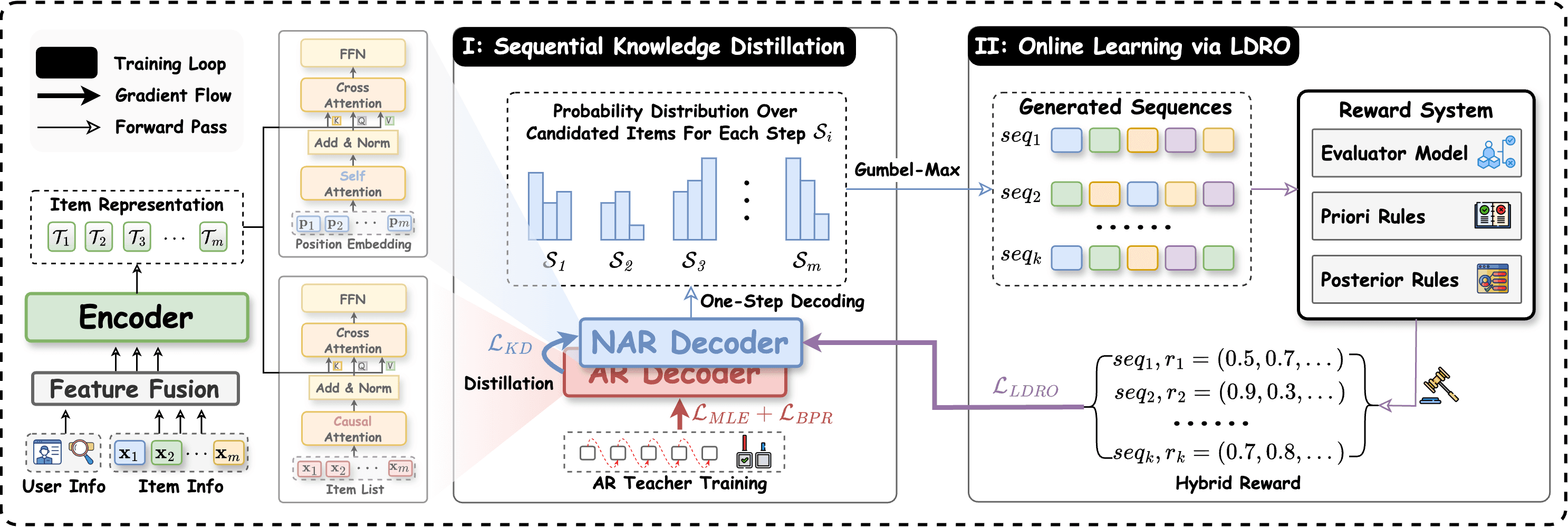

1. Sequential Knowledge Distillation (SKD)

This technique bridges the structural gap by distilling knowledge from a powerful but slow teacher AR model into a student NAR model. The distillation process preserves the teacher's ability to model item dependencies while retaining the student's inference efficiency. The approach focuses on capturing the causal relationships between items in a ranked list, that is, how the placement of one item affects user engagement with the items that follow.
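As a rough illustration of teacher-to-student distillation, the sketch below computes a generic per-position KL loss between softened teacher and student distributions. This is a standard distillation objective used here as a stand-in; the summary above does not give the paper's exact SKD loss, and the function names and the `temperature` parameter are assumptions.

```python
import math
from typing import List


def softmax(logits: List[float]) -> List[float]:
    """Numerically stable softmax over one slot's candidate logits."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def kl_divergence(p: List[float], q: List[float], eps: float = 1e-12) -> float:
    """KL(p || q) for two discrete distributions over the same candidates."""
    return sum(pi * math.log((pi + eps) / (qi + eps)) for pi, qi in zip(p, q))


def distillation_loss(teacher_logits: List[List[float]],
                      student_logits: List[List[float]],
                      temperature: float = 2.0) -> float:
    """Mean per-position KL(teacher || student) on temperature-softened logits.

    teacher_logits[k][i] is the AR teacher's logit for candidate i at slot k,
    conditioned on the slots it already filled; student_logits[k][i] is the
    NAR student's logit for the same slot, produced in a single pass.
    """
    if len(teacher_logits) != len(student_logits):
        raise ValueError("teacher and student must cover the same slots")
    total = 0.0
    for t_row, s_row in zip(teacher_logits, student_logits):
        p = softmax([x / temperature for x in t_row])
        q = softmax([x / temperature for x in s_row])
        total += kl_divergence(p, q)
    return total / len(teacher_logits)


# Toy usage: two slots, three candidates each (made-up numbers).
teacher = [[2.0, 0.5, 0.1], [0.2, 1.5, 0.3]]
student = [[1.0, 1.0, 1.0], [1.0, 1.0, 1.0]]
print(distillation_loss(teacher, student))
```

Minimizing a loss of this shape pushes the student's single-pass slot distributions toward the ones the teacher produced while conditioning on earlier slots, which is the intuition behind transferring dependency knowledge.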

2. List-wise Decoupled Reranking Optimization (LDRO)

To address the optimization gap, LDRO employs a two-stage approach:

- Stage 1: A supervised learning phase trains the model on historical data to learn basic ranking patterns.

- Stage 2: An online reinforcement learning phase fine-tunes the model using real-time user feedback, with specific stabilization techniques to handle the volatility of production traffic.

The decoupled approach allows for stable online RL optimization while maintaining the benefits of supervised pre-training. The framework directly optimizes for "whole-page utility": how the entire ranked list performs together, rather than how individual items score in isolation.
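The two stages can be sketched in miniature. Everything below is an illustrative assumption rather than the paper's algorithm: stage 1 fits per-item scores with logistic regression, stage 2 applies a REINFORCE-style update on a Plackett-Luce ranking policy using a list-level reward, and the moving-average baseline and per-step clipping stand in for the unspecified stabilization techniques.

```python
import math
import random
from typing import Callable, Dict, List, Tuple


def supervised_pretrain(logged: List[Tuple[str, int]], lr: float = 0.5,
                        epochs: int = 50) -> Dict[str, float]:
    """Stage 1 (sketch): fit one score per item to logged click labels."""
    scores = {item: 0.0 for item, _ in logged}
    for _ in range(epochs):
        for item, clicked in logged:
            pred = 1.0 / (1.0 + math.exp(-scores[item]))
            scores[item] += lr * (clicked - pred)  # logistic-loss gradient step
    return scores


def sample_ranking(scores: Dict[str, float], rng: random.Random) -> List[str]:
    """Sample a full ranking from a Plackett-Luce policy over item scores."""
    remaining = list(scores)
    ranking: List[str] = []
    while remaining:
        weights = [math.exp(scores[i]) for i in remaining]
        choice = rng.choices(remaining, weights=weights, k=1)[0]
        ranking.append(choice)
        remaining.remove(choice)
    return ranking


def log_prob_gradient(scores: Dict[str, float], ranking: List[str]) -> Dict[str, float]:
    """Gradient of log P(ranking) with respect to each item's score."""
    grad = {i: 0.0 for i in scores}
    remaining = list(ranking)
    for chosen in ranking:
        z = sum(math.exp(scores[i]) for i in remaining)
        for i in remaining:
            grad[i] -= math.exp(scores[i]) / z
        grad[chosen] += 1.0
        remaining.remove(chosen)
    return grad


def rl_finetune(scores: Dict[str, float], page_reward: Callable[[List[str]], float],
                steps: int = 500, lr: float = 0.05, max_step: float = 0.1,
                seed: int = 0) -> Dict[str, float]:
    """Stage 2 (sketch): policy-gradient updates driven by a whole-list reward."""
    rng = random.Random(seed)
    scores = dict(scores)
    baseline = 0.0
    for _ in range(steps):
        ranking = sample_ranking(scores, rng)
        reward = page_reward(ranking)           # one number for the whole page
        advantage = reward - baseline           # baseline reduces variance
        baseline += 0.05 * (reward - baseline)  # moving-average baseline
        grad = log_prob_gradient(scores, ranking)
        for i in scores:
            step = lr * advantage * grad[i]
            scores[i] += max(-max_step, min(max_step, step))  # clip each update
    return scores


# Toy usage: historical clicks favour "a", but the page reward favours "b" first.
pretrained = supervised_pretrain([("a", 1), ("b", 0), ("c", 0)])
tuned = rl_finetune(pretrained, lambda r: 1.0 if r[0] == "b" else 0.0)
print(pretrained, tuned)
```

The point of the split is visible even at this scale: stage 1 gives the policy a sensible starting point from logged data, and stage 2 only has to adjust it toward the list-level reward, with the baseline and clipping limiting how far any single noisy sample can move the scores.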

Retail & Luxury Implications

While the paper focuses on short-video recommendations for Kuaishou, the underlying technology has direct applications in luxury and retail e-commerce. Generative reranking represents the next evolution beyond traditional recommendation systems, particularly for:

1. Personalized Product Collections & Looks

Luxury retailers curating complete outfits or collections could use generative reranking to optimize the sequence in which products are presented. The system could learn that showing a handbag before shoes leads to higher engagement with both items, or that certain brand sequences maximize overall basket value.

2. Search Result Optimization

For luxury e-commerce sites with complex product catalogs (watches with multiple complications, handbags with various leathers and hardware), generative reranking could optimize search result pages by considering how products complement each other rather than just individual relevance scores.

3. Email & Campaign Sequencing

The same principles could apply to marketing campaign sequencing: determining the optimal order of product recommendations in personalized emails or push notifications to maximize overall campaign effectiveness.

4. Virtual Stylist & Concierge Services

AI-powered styling assistants could benefit from the dependency modeling capabilities, understanding that recommending a specific dress should influence subsequent accessory recommendations in a coherent, brand-consistent manner.
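The ordering effects in these use cases, such as a handbag shown before shoes, can be illustrated with a toy whole-page utility. The relevance scores, adjacency bonuses, and function names below are invented for illustration; the brute-force search over permutations is only feasible for a handful of items, which is one reason production systems turn to learned generative rerankers instead.

```python
from itertools import permutations
from typing import List, Sequence

# Hypothetical standalone relevance scores (made-up numbers, not real data).
RELEVANCE = {"shoes": 0.9, "handbag": 0.8, "scarf": 0.3}
# Hypothetical bonus when the first item appears immediately before the second.
ADJACENCY_BONUS = {("handbag", "shoes"): 0.5, ("shoes", "scarf"): 0.2}


def page_utility(ranking: Sequence[str]) -> float:
    """Position-discounted relevance plus bonuses for adjacent item pairs."""
    total = sum(RELEVANCE[item] / (pos + 1) for pos, item in enumerate(ranking))
    for first, second in zip(ranking, ranking[1:]):
        total += ADJACENCY_BONUS.get((first, second), 0.0)
    return total


def score_and_sort(items: Sequence[str]) -> List[str]:
    """Traditional approach: rank by individual score, ignoring neighbours."""
    return sorted(items, key=lambda item: RELEVANCE[item], reverse=True)


def best_list(items: Sequence[str]) -> List[str]:
    """List-wise approach: pick the ordering with the highest page utility."""
    return list(max(permutations(items), key=page_utility))


items = ["shoes", "handbag", "scarf"]
print(score_and_sort(items), page_utility(score_and_sort(items)))
print(best_list(items), page_utility(best_list(items)))
```

With these toy numbers, score-and-sort puts shoes first, while the list-wise search puts the handbag first because the pairing bonus outweighs the small loss in position-discounted relevance.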

The paper's emphasis on industrial deployment is particularly relevant for luxury retailers operating at scale. The latency reduction achieved through Sequential Knowledge Distillation (pairing NAR efficiency with AR quality) addresses a critical barrier to deploying sophisticated AI in customer-facing applications where milliseconds matter.

Implementation Considerations

For retail AI teams considering similar approaches:

Technical Requirements:

- Existing recommendation infrastructure with real-time user feedback loops

- Ability to run A/B tests at scale (the paper mentions "extensive A/B testing on production traffic")

- ML infrastructure capable of supporting both supervised learning and online reinforcement learning pipelines

- Monitoring systems for model stability in high-throughput environments

Complexity Level: High. This represents advanced ML engineering beyond typical recommendation systems, requiring expertise in knowledge distillation, reinforcement learning, and large-scale system optimization.

Data Requirements:

- Historical interaction data for supervised pre-training

- Real-time user engagement signals for RL fine-tuning

- Sufficient traffic volume to support stable online learning (Kuaishou's scale of "hundreds of millions of search queries daily" gives its experiments enough data for statistically meaningful results)

Risk Factors:

- Online RL systems can be unstable without proper safeguards

- The complexity increases system maintenance overhead

- Requires careful calibration between exploration (trying new rankings) and exploitation (using known good rankings)

- Privacy considerations when using detailed user interaction data for real-time optimization
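The exploration/exploitation and safeguard points above can be sketched as a guarded epsilon-greedy switch. The class name, thresholds, and kill-switch rule are hypothetical and are not described in the paper; a real deployment would likely need proper statistical tests and per-segment monitoring.

```python
import random
from typing import Dict, List


class GuardedExplorer:
    """Epsilon-greedy exploration with a simple kill switch (illustrative only)."""

    def __init__(self, epsilon: float = 0.1, min_ratio: float = 0.9,
                 min_samples: int = 100, seed: int = 0) -> None:
        self.epsilon = epsilon          # share of traffic given to new rankings
        self.min_ratio = min_ratio      # explored reward must stay above this share of baseline
        self.min_samples = min_samples  # wait for this many samples per arm before judging
        self.rng = random.Random(seed)
        self.rewards: Dict[bool, List[float]] = {True: [], False: []}

    def tripped(self) -> bool:
        """True once exploratory rankings clearly underperform the baseline."""
        explored, baseline = self.rewards[True], self.rewards[False]
        if len(explored) < self.min_samples or len(baseline) < self.min_samples:
            return False
        explored_mean = sum(explored) / len(explored)
        baseline_mean = sum(baseline) / len(baseline)
        return explored_mean < self.min_ratio * baseline_mean

    def explore(self) -> bool:
        """Decide whether this request gets an exploratory ranking."""
        if self.tripped():
            return False  # fall back to the known-good ranking
        return self.rng.random() < self.epsilon

    def record(self, explored: bool, reward: float) -> None:
        """Log the observed reward for the arm that served the request."""
        self.rewards[explored].append(reward)


# Toy usage: exploration is switched off after it clearly underperforms.
explorer = GuardedExplorer(epsilon=1.0, min_samples=3)
for _ in range(3):
    explorer.record(explored=True, reward=0.0)
    explorer.record(explored=False, reward=1.0)
print(explorer.explore())  # False
```

Keeping the exploration rate and the fallback rule outside the model makes it possible to throttle or disable online learning without redeploying the ranker.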