What Happened

A comprehensive survey paper, "Multi-Agent Video Recommenders: Evolution, Patterns, and Open Challenges," was posted to arXiv, providing a structured overview of a significant architectural shift in recommendation systems. The core thesis is that traditional, monolithic recommender models—which often optimize for a single, static engagement metric—are insufficient for the dynamic, multi-faceted demands of modern video platforms. In response, researchers and practitioners are turning to multi-agent architectures.

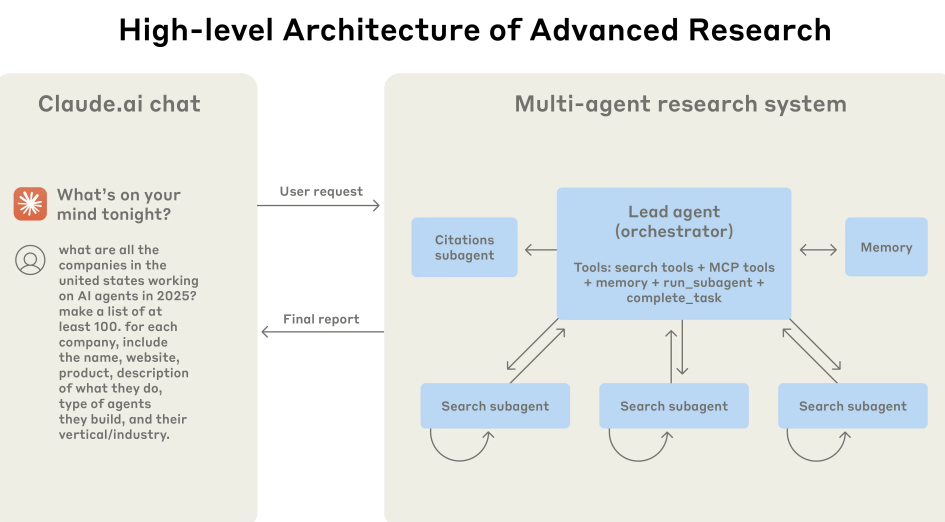

These systems decompose the recommendation task among specialized, collaborative AI agents. One agent might be responsible for deep video understanding (analyzing content, style, sentiment), another for user reasoning (interpreting intent from history and context), another for long-term memory (maintaining a persistent user profile), and another for feedback processing. The coordination of these agents aims to produce more precise, adaptive, and crucially, explainable recommendations.

Technical Details

The survey maps the evolution from early Multi-Agent Reinforcement Learning (MARL) systems, like MMRF, where agents learned to cooperate through reward signals, to the current frontier: LLM-powered MAVRS. Here, large language models act as the "brains" of the agents, enabling sophisticated reasoning, natural language understanding for query interpretation, and the generation of human-readable explanations for why a video was suggested.

Key architectures discussed include MACRec and Agent4Rec, which exemplify how LLMs can orchestrate specialist modules. The paper presents a taxonomy of collaborative patterns, such as:

- Hierarchical Coordination: A master LLM agent breaks down a user request and delegates sub-tasks to specialist agents.

- Peer-to-Peer Negotiation: Agents representing different aspects (e.g., content freshness, diversity, user preference) debate to reach a consensus recommendation.

- Market-Based Mechanisms: Agents "bid" on their proposed items based on their specialized view, with the highest aggregate bid winning.

The survey also rigorously outlines persistent open challenges:

- Scalability & Latency: The computational overhead of running multiple LLM-based agents is non-trivial for real-time serving.

- Multimodal Understanding: Effectively fusing visual, audio, textual, and temporal (watch patterns) signals remains complex.

- Incentive Alignment: Ensuring all specialized agents work towards a coherent, long-term user satisfaction goal, not just their own sub-task metric.

- Evaluation: Moving beyond simple click-through rate to measure nuanced qualities like satisfaction, trust, and diversity.

Retail & Luxury Implications

The direct application for retail and luxury is not in video, but in the architectural paradigm. The core problem—moving from a single, black-box model predicting "click" to a coordinated system that understands nuanced intent, rich product attributes, and long-term brand relationship goals—is identical.

Imagine a multi-agent recommender for a luxury fashion platform:

- A Product Understanding Agent: Powered by a vision-language model like CLIP, it deeply analyzes product imagery and descriptions for style, material, occasion, and designer ethos.

- A Client Reasoning Agent: An LLM-based agent that maintains a rich, narrative-style client profile, interpreting past purchases, saved items, customer service notes, and even the sentiment of product reviews they've written.

- A Context & Trend Agent: Monitors real-time data on emerging trends, regional weather, local event calendars, and inventory levels.

- A Conversational Interface Agent: Handles natural language queries ("I need an outfit for a garden wedding in Tuscany in June that feels modern but not trendy") and synthesizes the inputs from other agents into a coherent, explainable recommendation.

This agentic framework could power a next-generation conversational commerce experience, where the AI shopping assistant can reason across multiple constraints (style, inventory, budget, sustainability values) and justify its suggestions in a manner that builds trust—a critical factor for high-value purchases. The survey's identified challenge of incentive alignment is particularly relevant; you would need to ensure the "sales agent" doesn't overpower the "style authenticity agent" or the "long-term client value agent."

The technical hurdles, especially latency for real-time personalization on a product detail page, are substantial. However, for high-consideration purchases, appointment booking, or post-purchase styling advice, where interaction can be asynchronous (e.g., over chat or email), this multi-agent, LLM-driven approach could redefine digital clienteling.