In a stark warning on social media, Wharton professor and AI researcher Ethan Mollick stated that the Mythos AI agent possesses capabilities that, "in different hands," would constitute an "unprecedented cyberweapon."

The comment, posted on X, points to a growing concern among AI safety researchers about the dual-use nature of advanced AI agents. Mollick specifically referenced a narrow window in which, as of now, only a handful of entities (likely leading AI labs such as OpenAI, Anthropic, and Google DeepMind) operate at this frontier level of capability.

What Happened

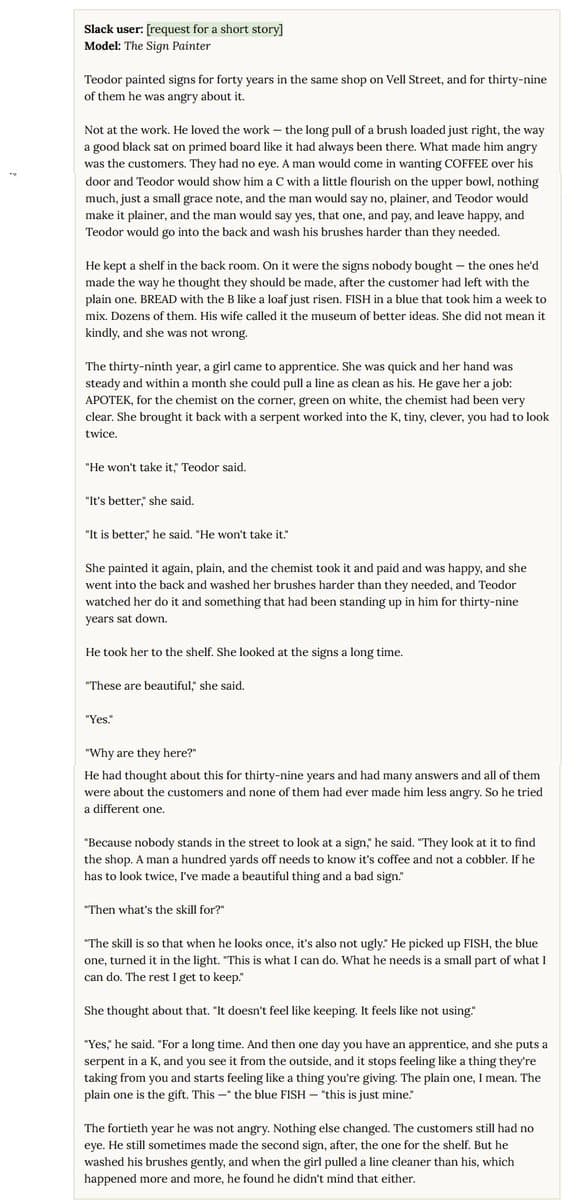

Mollick's post was a direct reaction to the public release or demonstration of Mythos, an AI agent system. While the tweet itself is brief, its implication is severe: the underlying technology powering Mythos is sufficiently advanced in autonomous reasoning, tool use, or cyber-relevant task execution that its application for malicious cyber operations would be unprecedented in scale or sophistication.

Mollick expressed uncertainty about how to address this risk, beyond acknowledging the current concentration of capability. He also raised a critical timeline concern, speculating that Chinese models—potentially open-weight ones—could reach similar capability levels within approximately nine months. This highlights the perceived fragility of any temporary lead in AI capability and the global race in agent development.

Context: The Rise of AI Agents

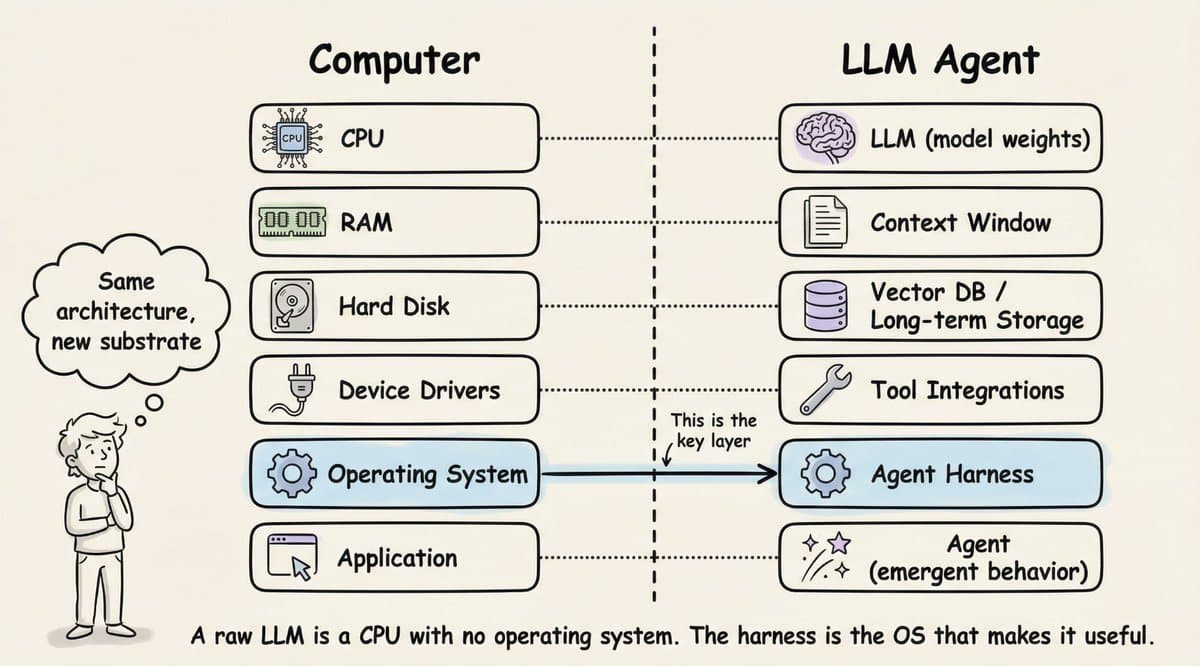

The warning about Mythos comes amid rapid progress in AI agent frameworks. Unlike standalone language models, agents are systems that can perceive their environment, plan, and execute actions using tools (like web browsers, code executors, or APIs) to accomplish multi-step goals. This autonomy makes them powerful for legitimate automation but also raises the stakes for potential misuse in cyber operations, disinformation campaigns, or automated exploit development.
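To make the agent definition above concrete, here is a minimal sketch of a plan-and-act loop. It is a toy illustration under stated assumptions, not a description of how Mythos or any lab's agent works: the `ToyAgent` class, the `TOOLS` allowlist, and both tool functions are hypothetical names invented for this example, and the "plan" is a fixed list where a real agent would ask a model to produce each step.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


# Hypothetical, harmless tools. A real agent would wrap a browser,
# a code executor, or an API client behind the same kind of interface.
def word_count(text: str) -> str:
    return str(len(text.split()))


def to_upper(text: str) -> str:
    return text.upper()


# Assumption: tools are exposed through an explicit allowlist.
TOOLS: Dict[str, Callable[[str], str]] = {
    "word_count": word_count,
    "to_upper": to_upper,
}


@dataclass
class ToyAgent:
    # Each step is (tool name, tool input). In this sketch the plan is
    # fixed up front; a real agent would generate it with a model.
    plan: List[Tuple[str, str]]
    history: List[str] = field(default_factory=list)

    def run(self, max_steps: int = 10) -> List[str]:
        for step, (tool_name, tool_input) in enumerate(self.plan):
            if step >= max_steps:
                break  # hard cap on autonomous steps
            tool = TOOLS.get(tool_name)
            if tool is None:
                # Anything outside the allowlist is refused, not executed.
                self.history.append(f"{tool_name}: refused (not in allowlist)")
                continue
            observation = tool(tool_input)  # act, then record what came back
            self.history.append(f"{tool_name}: {observation}")
        return self.history


agent = ToyAgent(plan=[
    ("word_count", "agents plan and act"),
    ("to_upper", "done"),
    ("delete_files", "/"),
])
result = agent.run()
print(result)
```

The third step names a tool that is not in the allowlist, so the loop records a refusal instead of executing anything. The same loop structure that makes agents useful for automation is what makes the choice of exposed tools, and the checks around them, matter so much.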

Recent months have seen a surge in agent releases from both major labs and open-source communities, each aiming to improve reliability, planning, and tool integration. Mollick's warning suggests Mythos represents a significant leap in this ongoing progression.

The Dual-Use Dilemma and the Capability Window

Mollick's core argument rests on a classic dual-use technology dilemma: the same research that creates powerful assistants for productivity and creativity can be repurposed for offensive capabilities. His mention of a "narrow window" where only "3 companies" are at this level likely reflects the current high cost and expertise barrier for training frontier models. However, his follow-up about Chinese models catching up soon underscores that this window is closing, potentially putting cyberweapon-grade AI tools within reach of far more actors.

The reference to open-weight models is particularly salient. The open-source AI community has repeatedly shown an ability to rapidly replicate and deploy capabilities once they are demonstrated by frontier labs. If an open-weight model achieves Mythos-like agentic capability, controlling its proliferation becomes vastly more difficult.

What This Means in Practice

For cybersecurity professionals, the implication is that the offense-defense balance may soon be disrupted by AI agents. Defensive systems currently designed around human-speed attacks may be unprepared for AI agents that can autonomously probe networks, tailor exploits, and operate at machine speed. For AI developers, it adds urgency to the implementation of robust alignment and safety guardrails within agent architectures themselves, not just in their base models.

gentic.news Analysis

Ethan Mollick's warning is not an isolated data point but a marker in a clear trend we've been tracking. It follows the launch of OpenAI's o1 model family in late 2024, which emphasized advanced reasoning for precise tool use, a foundational capability for sophisticated agents. As we covered in our analysis of o1, the shift from generative chatbots to reliable, reason-based agents is the central battleground for AI labs in 2026.

Mollick's concern aligns with a series of red-teaming reports and policy briefs from organizations like the Center for AI Safety and RAND Corporation throughout 2025, which consistently flagged autonomous AI systems as a top-tier risk for catastrophic misuse. His specific cyberweapon framing, however, brings a concrete, near-term scenario to the forefront that has often been discussed in abstract terms.

The mention of Chinese models reaching parity in ~9 months fits the observed acceleration in China's AI sector, particularly in applied agent research. Developers such as 01.AI, DeepSeek, and Alibaba's Qwen team have been on a steep trajectory, with Qwen's 2025 agent releases showing notable gains in complex task completion. This creates a dynamic where safety measures cannot be developed in a vacuum by a few Western companies; they require international dialogue and potentially technical coordination, which remains fraught with geopolitical tension.

Ultimately, Mollick's tweet is less about Mythos specifically and more about a threshold being crossed. The public discourse has moved from "if" AI will power advanced cyber operations to "when," and leading researchers are now openly stating that the "when" for some capabilities may be now. The nine-month estimate for capability diffusion should be a catalyst for both accelerated safety engineering and pragmatic policy planning.

Frequently Asked Questions

What is the Mythos AI agent?

Based on the context of Ethan Mollick's tweet, Mythos appears to be a recently demonstrated or released advanced AI agent system. While specific technical details are not provided in the source, the reaction suggests it exhibits a high degree of autonomous planning and tool-execution capability that experts view as a significant step forward in agent technology.

Why is an AI agent considered a potential cyberweapon?

AI agents differ from standard language models by their ability to autonomously execute actions using digital tools (e.g., writing and running code, navigating APIs, controlling browsers). A sufficiently capable agent could, in theory, be directed to perform malicious cyber activities at machine speed and scale, such as probing networks for vulnerabilities, crafting tailored exploits, managing phishing campaigns, or exfiltrating data—tasks that currently require skilled human operators.

Which three companies is Ethan Mollick likely referring to?

Mollick did not name them, but the most likely candidates for the "three companies" capable of building frontier systems at the level of Mythos are OpenAI, Anthropic, and Google DeepMind. These labs have consistently been at the forefront of publishing and deploying the most capable large language models and agentic systems, backed by massive computational resources and research talent.

What does the 9-month timeline for Chinese models mean?

Mollick speculates that Chinese AI models—possibly open-weight models released publicly—could achieve capability parity with systems like Mythos within roughly nine months. This reflects the observed rapid pace of China's AI development and the powerful diffusion effect of open-source AI. If true, it means any temporary safety advantage or control held by a few private entities could be short-lived, rapidly globalizing both the benefits and risks of advanced AI agents.