What Happened

A new preprint on arXiv, submitted on March 11, 2026, tackles a fundamental but often overlooked question in applied AI: do the scores we use to evaluate conversational AI agents actually predict the business outcomes they are supposed to drive? The study, titled "Criterion Validity of LLM-as-Judge for Business Outcomes in Conversational Commerce," was conducted on a major Chinese matchmaking platform and provides a rare, rigorous test linking multi-dimensional quality assessments to verified sales conversions.

The core finding is not about the accuracy of the LLM-as-Judge scoring method itself, but about the structural validity of the evaluation rubric. The researchers tested a 7-dimension rubric (e.g., Need Elicitation, Pacing Strategy, Contextual Memory) against whether a conversation led to a user purchasing a premium membership. In a controlled Phase 2 study of 60 human-agent conversations, they found severe dimension-level heterogeneity:

- Need Elicitation (D1) and Pacing Strategy (D3) showed statistically significant, moderate correlations with conversion (Spearman's ρ=0.368 and ρ=0.354, respectively).

- Contextual Memory (D5), often considered a hallmark of a sophisticated AI, showed no detectable association with conversion (ρ=0.018).

This heterogeneity creates a "composite dilution effect." When scores from all seven dimensions are averaged with equal weight, the composite score's correlation with conversion (ρ=0.272) is weaker than that of the best individual dimensions, because dimensions unrelated to conversion add noise to the average. The study shows that re-weighting the composite based on conversion data partially corrects this, raising the correlation to ρ=0.351, which is still slightly below the two strongest single dimensions (ρ=0.368 and ρ=0.354).
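The dilution effect can be reproduced on a toy example. The sketch below is illustrative only: the eight conversations, the 1-to-5 score scale, and the weighting scheme (weights proportional to each dimension's positive correlation with conversion) are assumptions made for this example, not the paper's data or method. Only two of the seven dimensions are modeled, and the variable names are hypothetical.

```python
import math

def average_ranks(values):
    """Rank values from 1..n, giving tied values the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        mean_rank = (start + end) / 2 + 1  # positions are 0-based, ranks are 1-based
        for k in range(start, end + 1):
            ranks[order[k]] = mean_rank
        start = end + 1
    return ranks

def spearman(x, y):
    """Spearman's rho, computed as the Pearson correlation of average ranks."""
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    var_x = sum((a - mx) ** 2 for a in rx)
    var_y = sum((b - my) ** 2 for b in ry)
    return cov / math.sqrt(var_x * var_y)

# Invented data: 1 = the conversation ended in a purchase.
converted = [0, 0, 0, 0, 1, 1, 1, 1]
scores = {
    "need_elicitation": [1, 2, 2, 3, 3, 4, 4, 5],   # tracks conversion
    "contextual_memory": [5, 1, 4, 2, 1, 5, 2, 4],  # unrelated to conversion
}

# Per-dimension correlation with the outcome.
rho = {name: spearman(vals, converted) for name, vals in scores.items()}

# Equal-weight composite: a plain average of the dimensions.
n = len(converted)
equal = [sum(scores[d][i] for d in scores) / len(scores) for i in range(n)]
rho_equal = spearman(equal, converted)

# Assumed re-weighting: weights proportional to max(rho, 0), normalized to 1.
raw = {d: max(r, 0.0) for d, r in rho.items()}
total = sum(raw.values())
weights = {d: w / total for d, w in raw.items()}
weighted = [sum(weights[d] * scores[d][i] for d in scores) for i in range(n)]
rho_weighted = spearman(weighted, converted)

print({d: round(r, 3) for d, r in rho.items()})
print("equal-weight composite:", round(rho_equal, 3))
print("re-weighted composite:", round(rho_weighted, 3))
```

Because the weights here are fitted and evaluated on the same conversations, the re-weighted correlation is optimistic; a held-out sample would be needed to estimate how well such weights generalize.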

The research also uncovered a critical methodological pitfall. An initial pilot study mixing human and AI conversations produced a misleading "evaluation-outcome paradox," where high-quality scores did not align with conversions. The Phase 2 study revealed this was an artifact of agent-type confounding: AI agents in the sample were executing sales behaviors without building user trust, a mechanism explored through a "Trust-Funnel" behavioral analysis of 130 conversations.

Technical Details

The researchers used a two-phase design to separate the rubric's predictive signal from confounding factors:

- Phase 1 (Pilot): A small-scale study (n=14) mixing human and AI conversations, which produced confusing results and highlighted the need for stricter controls.

- Phase 2 (Main): A stratified sample of 60 conversations conducted solely by human agents, with verified conversion labels. This design removed the agent-type confound and allowed for a clean test of the rubric's dimensions.
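The agent-type confound that the Phase 2 design removes can be illustrated with a small, entirely made-up sample. In this sketch, hypothetical AI agents receive high rubric scores but never convert, which drags the pooled correlation below zero even though score and conversion are positively related among human agents. The records, score values, and field layout are assumptions for illustration and do not come from the paper.

```python
import math

def average_ranks(values):
    """Rank values from 1..n, giving tied values the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        mean_rank = (start + end) / 2 + 1
        for k in range(start, end + 1):
            ranks[order[k]] = mean_rank
        start = end + 1
    return ranks

def spearman(x, y):
    """Spearman's rho via Pearson on average ranks; NaN if either side is constant."""
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    var_x = sum((a - mx) ** 2 for a in rx)
    var_y = sum((b - my) ** 2 for b in ry)
    if var_x == 0 or var_y == 0:
        return float("nan")
    return cov / math.sqrt(var_x * var_y)

# Invented records: (agent_type, rubric_score, converted).
records = [
    ("human", 2, 0), ("human", 3, 0), ("human", 4, 1), ("human", 5, 1),
    ("ai", 6, 0), ("ai", 7, 0), ("ai", 8, 0), ("ai", 9, 0),
]

def rho_for(rows):
    """Correlation between rubric score and conversion for the given rows."""
    return spearman([r[1] for r in rows], [r[2] for r in rows])

rho_pooled = rho_for(records)
rho_human = rho_for([r for r in records if r[0] == "human"])

print("pooled (human + AI):", round(rho_pooled, 3))
print("human agents only:", round(rho_human, 3))
```

Restricting the analysis to a single agent type, as Phase 2 does, is the simplest way to remove this kind of confound; with larger samples, stratifying by agent type and reporting each stratum separately would be an alternative.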

The evaluation rubric was implemented using an LLM-as-Judge, but the authors stress that their findings concern the rubric's design, not the LLM's scoring fidelity. Any judge, human or AI, applying the same rubric would face the same structural issue: dimensions unrelated to the outcome dilute the predictive signal.

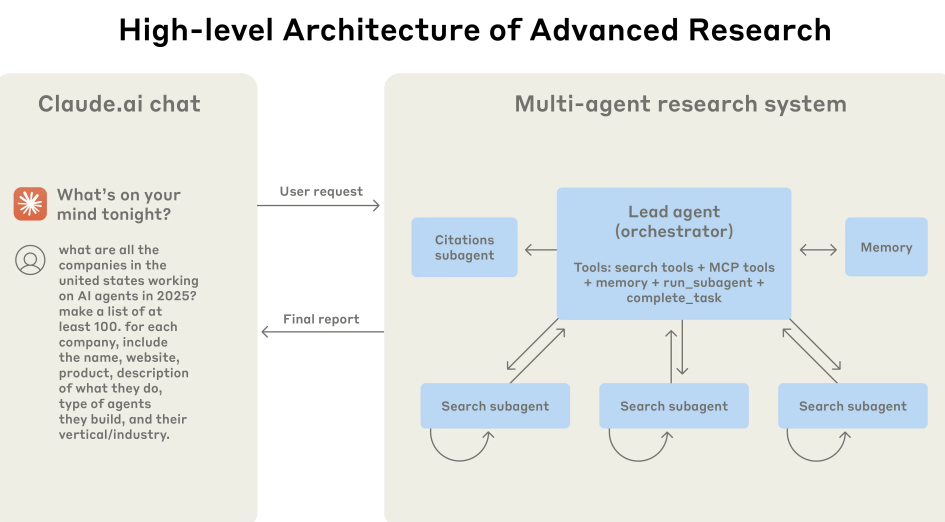

The paper concludes by operationalizing its findings into a three-layer evaluation architecture. It also argues that criterion validity testing, meaning a direct check of evaluation metrics against business outcomes, should become standard practice in applied dialogue evaluation.

Retail & Luxury Implications

For retail and luxury brands investing in conversational AI for sales, customer service, or personal shopping, this study is a direct caution. The common practice of deploying an AI agent, evaluating it on a generic set of quality metrics (fluency, coherence, memory), and assuming that high scores translate into business results rests on an assumption the study shows can fail.

The implications are concrete:

- Rubrics Must Be Business-Aligned: Your evaluation rubric cannot be designed in a vacuum. Dimensions must be empirically tested against your specific Key Performance Indicators (KPIs), such as conversion rate, average order value, or customer satisfaction (CSAT). What works for a matchmaking platform might differ for a luxury fashion concierge, but the principle is universal: test, don't assume.

- Not All "Quality" is Equal: The finding that Contextual Memory showed no detectable association with conversion in this sample is particularly striking. A chatbot that perfectly remembers every detail of a conversation is not necessarily a better salesperson. For high-touch luxury retail, dimensions like "Taste Discernment" or "Brand Narrative Alignment" might be far more predictive of a high-value sale than raw recall ability. This study provides a framework for discovering which dimensions matter.

- Beware the Composite Score: Relying on a single, averaged quality score can hide what is actually changing. A drop in your composite score might be due to a decline in an irrelevant dimension, while the dimensions that actually drive sales remain strong. Teams need to monitor dimension-level scores that have been validated against business outcomes.

- The Trust Mechanism: The behavioral analysis suggesting that AI agents attempt to sell before building trust is a crucial insight for luxury. The sector is built on relationships and perceived value. An AI that pushes for a sale without establishing rapport or demonstrating product expertise is likely to underperform, regardless of its technical scores on other dimensions. This points to the need for more nuanced, psychologically informed evaluation frameworks.
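The composite-score caveat in the list above comes down to simple arithmetic, sketched here with invented weekly averages. Under an equal-weight composite of seven dimensions, each dimension contributes one seventh of its own change, so a large drop in a single dimension shows up as a modest composite decline with no indication of its source. The labels D1 to D7, the score values, and the `composite_change` helper are hypothetical.

```python
def composite_change(before, after):
    """Split the change in an equal-weight composite into per-dimension parts."""
    dims = sorted(before)
    n = len(dims)
    # Each dimension contributes (its own change) / (number of dimensions).
    contributions = {d: (after[d] - before[d]) / n for d in dims}
    return sum(contributions.values()), contributions

# Invented mean scores per dimension for two consecutive weeks.
before = {f"D{i}": 4.0 for i in range(1, 8)}
after = dict(before, D5=2.6)  # only D5 (Contextual Memory) declines

total_change, contributions = composite_change(before, after)
driver = min(contributions, key=contributions.get)  # most negative contribution

print("composite change:", round(total_change, 3))
print("largest negative driver:", driver)
```

In this example the composite falls by about 0.2 points entirely because of D5, while D1 and D3, the dimensions the study found to correlate with conversion, are unchanged. A dashboard showing only the composite would not distinguish this case from a decline in the dimensions that matter.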

In short, this research argues that the next frontier in conversational AI for commerce is not just building more capable agents, but developing the diagnostic tools to measure what truly matters.