The Illusion of Simplicity and the Economic Reality

The question haunting CTOs and finance teams is no longer about AI's potential but about its price tag: "How can a text conversation cost this much?" As Generative AI initiatives graduate from controlled pilots to full-scale production, initial cost models are collapsing under real-world scale. The source article provides a stark economic analysis, arguing that the visible interface of a chatbot is merely the tip of a vast operational iceberg.

The core misalignment stems from a fundamental difference in economic models. Traditional enterprise software operates on predictable, largely flat costs for provisioned infrastructure and licensing. GenAI systems, however, introduce a variable cost tied directly to usage—every token processed, every vector searched, every inference run adds to the bill. This usage-based consumption, layered atop fixed infrastructure, creates a cost structure that appears sound in early estimates but becomes volatile at scale.
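To make the contrast concrete, here is a minimal sketch of a cost model with a flat component and a usage-driven component. The class name, the prices, and the token counts are illustrative assumptions, not figures from the source article or from any vendor's price list.

```python
from dataclasses import dataclass


@dataclass
class CostModel:
    fixed_monthly: float        # provisioned infrastructure and licensing (hypothetical)
    price_per_1k_tokens: float  # blended input/output token price (hypothetical)
    tokens_per_request: int     # prompt + retrieved context + completion (hypothetical)

    def monthly_cost(self, requests: int) -> float:
        """Fixed cost plus a usage term that grows with every request."""
        usage = requests * self.tokens_per_request / 1000 * self.price_per_1k_tokens
        return self.fixed_monthly + usage


model = CostModel(fixed_monthly=5_000.0, price_per_1k_tokens=0.01, tokens_per_request=3_000)

# Compare a pilot-sized workload with a production-sized one.
for requests in (10_000, 1_000_000):
    print(f"{requests:>9,} requests/month -> ${model.monthly_cost(requests):,.2f}")
```

Under these assumed numbers the fixed component dominates at pilot volume, while at production volume the usage term is several times larger than the fixed one. That shift is what makes an early estimate look sound and a scaled deployment look volatile.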

Deconstructing the Cost Layers: From Predictable to Hidden

The article breaks down GenAI costs into two broad categories: the predictable expenses organizations plan for, and the hidden operational costs that emerge post-deployment and often dominate the total cost of ownership.

Predictable Costs (The Budget Line Items)

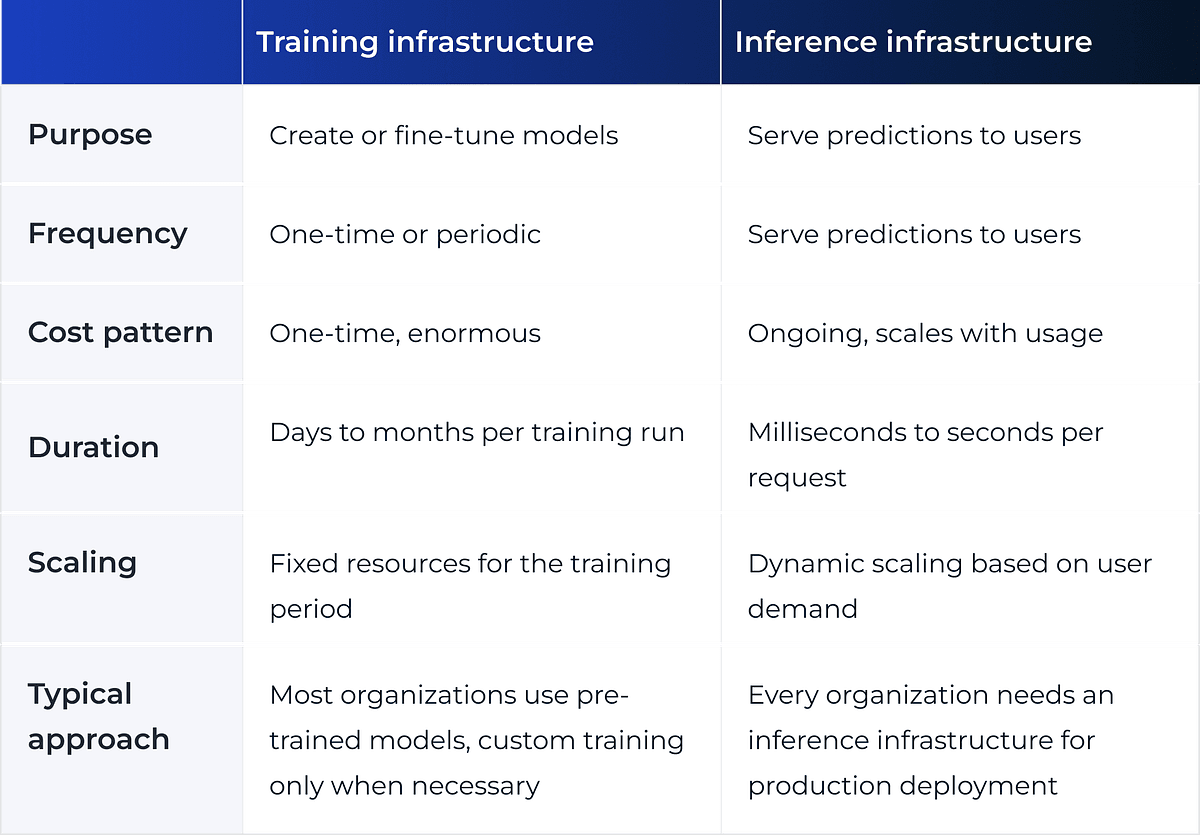

- Infrastructure & Compute: Running neural networks with billions of parameters requires specialized GPU/TPU hardware, whether owned or accessed via cloud APIs. Costs are split between the one-time (or periodic) burden of model training and the continuous expense of inference.

- API & Model Serving Fees: The most visible cost, typically priced per token (OpenAI, Anthropic) or in usage tiers (Google Gemini). Volume discounts exist, but they rarely offset the rapid growth in usage that consumer-facing applications see.

- Storage & Database Systems: This includes vector databases for embeddings, traditional relational databases for user data, and caching/backup systems. The article notes a critical nuance for Retrieval-Augmented Generation (RAG) architectures: documents are stored twice (original text and mathematical vectors), doubling storage needs.

- Initial Development & Integration: Costs for specialized talent (ML engineers, prompt engineers), system development, integration with existing ERP/CRM systems, testing, and deployment.
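The RAG storage point above can be checked with back-of-the-envelope arithmetic. The sketch below estimates raw bytes for the original text plus its embedding vectors. The chunk size and embedding dimensionality are assumptions chosen for illustration, and the function ignores chunk overlap, index overhead, metadata, and replication.

```python
def rag_storage_bytes(
    corpus_text_bytes: int,
    chunk_bytes: int = 4_000,     # assumed average chunk size in bytes
    embedding_dims: int = 1_024,  # assumed embedding dimensionality
    bytes_per_value: int = 4,     # float32 components
) -> dict[str, int]:
    """Estimate raw storage for original text plus one vector per chunk."""
    num_chunks = -(-corpus_text_bytes // chunk_bytes)  # ceiling division
    vector_bytes = num_chunks * embedding_dims * bytes_per_value
    return {
        "text_bytes": corpus_text_bytes,
        "vector_bytes": vector_bytes,
        "total_bytes": corpus_text_bytes + vector_bytes,
    }


corpus = 10 * 1024**3  # 10 GiB of source text (hypothetical)
for dims in (384, 1_024, 3_072):
    est = rag_storage_bytes(corpus, embedding_dims=dims)
    print(f"{dims} dims: total is {est['total_bytes'] / est['text_bytes']:.2f}x the text")
```

How close the total lands to twice the original text depends on the ratio of vector bytes per chunk to text bytes per chunk, so smaller chunks or wider embeddings push the multiple up.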

The Hidden Operational Costs (The Iceberg Beneath)

This is where financial projections are most likely to fail. The article asserts that these ongoing operational expenses can exceed visible costs by 200-300%.

- Continuous Data Pipeline: A GenAI system is not a "set-and-forget" tool. To remain accurate, it requires a live, maintained data pipeline. This involves:

  - Continuously extracting and normalizing content from enterprise sources.

  - Cleaning data, chunking documents, generating embeddings, and indexing vectors.

  - Regularly synchronizing the system with updates to product catalogs, policies, and knowledge bases. Stale data leads directly to misleading AI outputs.

- Monitoring & Observability Infrastructure: GenAI demands far deeper visibility than traditional software. Beyond uptime, teams must track cost-per-interaction, token consumption, response latency, and—critically—AI-specific quality metrics like hallucination rates, prompt effectiveness, and model performance drift. This requires specialized tooling not typically covered in initial budgets.

- Quality Assurance, Safety, & Maintenance: Each AI response may need to pass through validation layers, safety filters, and logging systems. Maintaining these guardrails and guarding against gradual performance degradation (often called "model drift") requires dedicated, ongoing human and computational effort.
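One common way to keep the continuous data pipeline affordable is to re-embed only what has changed. The following is a minimal sketch of that idea using content hashes and fixed-size chunking. The function names, document ids, and chunk parameters are hypothetical, and the embedding and indexing calls are deliberately left out because they depend on the vendor and vector database in use.

```python
import hashlib


def chunk_text(text: str, size: int = 500, overlap: int = 50) -> list[str]:
    """Split text into fixed-size character windows that overlap slightly."""
    if overlap >= size:
        raise ValueError("overlap must be smaller than size")
    if not text:
        return []
    step = size - overlap
    # Stop early enough that the last window contains some new text.
    return [text[i:i + size] for i in range(0, max(len(text) - overlap, 1), step)]


def content_hash(text: str) -> str:
    """Stable fingerprint used to detect changed documents."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def plan_sync(source_docs: dict[str, str], indexed_hashes: dict[str, str]) -> dict[str, list[str]]:
    """Compare current source documents with the hashes the index last saw.

    Returns the ids that need (re)embedding and the ids to delete.
    """
    to_embed = [
        doc_id for doc_id, text in source_docs.items()
        if indexed_hashes.get(doc_id) != content_hash(text)
    ]
    to_delete = [doc_id for doc_id in indexed_hashes if doc_id not in source_docs]
    return {"embed": sorted(to_embed), "delete": sorted(to_delete)}


# Hypothetical state: one policy changed, one product is new, one was removed.
source = {
    "returns-policy": "Returns accepted within 30 days.",
    "bag-001": "Calfskin tote, black.",
}
indexed = {
    "returns-policy": content_hash("Returns accepted within 14 days."),
    "bag-000": content_hash("Discontinued."),
}
plan = plan_sync(source, indexed)
print(plan)
```

Only the documents listed under `embed` would be chunked and sent for embedding, which keeps the recurring embedding spend proportional to change volume rather than to corpus size.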

The Retail & Luxury Implications: Scaling Beyond the Pilot

For luxury retail houses and fashion conglomerates, this analysis is not theoretical—it's a roadmap of the financial and operational challenges awaiting any successful AI pilot. The implications are profound for key use cases:

- Personal Shopping Assistants & High-Touch Customer Service: A conversational AI for VIP clients isn't just an API call. It's a system requiring real-time integration with inventory (ERP), clienteling profiles (CRM), and lookbooks. Each conversation incurs variable costs, and the data pipeline must update instantly with new collection drops or stock changes. The cost of a hallucination—suggesting an out-of-stock bag or misstyling a client—is a direct brand equity hit.

- RAG-Powered Knowledge Hubs for Staff: A tool for store associates to query policy, product details, or styling guides relies entirely on the health of its RAG system. As our Knowledge Graph data shows, RAG is a dominant enterprise trend, but its production reality is complex. The "hidden cost" of continuously updating this system with new product technical sheets, campaign narratives, and return policies is substantial. This echoes recent warnings from developers about RAG system failures at production scale.

- Creative & Marketing Content Generation: Scaling an AI copilot for marketing copy or visual concepting from one campaign to all regions means usage—and costs—will scale non-linearly. Monitoring for brand voice consistency and compliance across thousands of generated assets becomes a major operational lift.

The fundamental takeaway is that the business case for any GenAI application must be built on a total lifecycle cost model, not just prototype API fees. The ROI must justify not only the build but the perpetual, resource-intensive operation and evolution of the system.

Implementation & Governance: A Reality Check

Moving forward requires a shift in planning:

- Financial Modeling: Budget for a 2-3x multiplier on initial API/infrastructure estimates to account for hidden operational costs. Implement detailed cost-per-interaction tracking from day one.

- Platform Thinking: Don't build isolated AI apps. Invest in a centralized AI platform layer that can share data pipelines, monitoring tools, and governance frameworks across multiple use cases (clienteling, operations, design). This aligns with the trend toward platform engineering for production RAG.

- Own the Data Pipeline: The highest leverage—and most costly—component is the continuous data workflow. Prioritize automating the synchronization between core business systems (PLM, ERP, PIM) and the AI's knowledge base.

- Governance from the Start: Establish clear metrics for AI quality (hallucination rate, retrieval accuracy) and assign ownership for model monitoring and maintenance. Plan for regular review cycles to retrain or update systems as products and business rules evolve.
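The tracking and budgeting steps above can be sketched in a few lines. This is an illustrative example only: the `Interaction` fields, the per-1k-token prices, and the way a hallucination gets flagged are assumptions, and the 2-3x range simply applies the multiplier suggested in the list above to an initial estimate.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Interaction:
    input_tokens: int
    output_tokens: int
    latency_ms: float
    flagged_hallucination: bool  # hypothetical label from a reviewer or automated check


def interaction_cost(item, input_price_per_1k, output_price_per_1k):
    """Token cost of one interaction under per-1k-token pricing."""
    return (item.input_tokens / 1000 * input_price_per_1k
            + item.output_tokens / 1000 * output_price_per_1k)


def summarize(interactions, input_price_per_1k, output_price_per_1k):
    """Aggregate cost, volume, latency, and quality metrics for a batch."""
    if not interactions:
        raise ValueError("need at least one interaction")
    costs = [interaction_cost(i, input_price_per_1k, output_price_per_1k) for i in interactions]
    return {
        "cost_per_interaction": mean(costs),
        "total_tokens": sum(i.input_tokens + i.output_tokens for i in interactions),
        "mean_latency_ms": mean(i.latency_ms for i in interactions),
        "hallucination_rate": sum(i.flagged_hallucination for i in interactions) / len(interactions),
    }


def budget_range(initial_estimate, low=2.0, high=3.0):
    """Apply a low/high multiplier to an initial API and infrastructure estimate."""
    return initial_estimate * low, initial_estimate * high


# Hypothetical log of two interactions and hypothetical prices.
log = [
    Interaction(input_tokens=1_000, output_tokens=500, latency_ms=800.0, flagged_hallucination=False),
    Interaction(input_tokens=3_000, output_tokens=500, latency_ms=1_200.0, flagged_hallucination=True),
]
summary = summarize(log, input_price_per_1k=0.002, output_price_per_1k=0.006)
print(summary)
print(budget_range(100_000))
```

Recording these fields per interaction from the first day gives finance and engineering a shared unit (cost per interaction) and gives the governance owner a baseline against which drift in quality can be measured.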

gentic.news Analysis

This cost analysis arrives at a pivotal moment for enterprise AI, particularly in retail. It directly contextualizes and validates several trends we've been tracking via our Knowledge Graph Intelligence.

First, it explains the economic pressure behind the recent industry shift away from consumer-facing AI chat and toward AI as a core utility for rewiring product data supply chains. The hidden costs of consumer-facing chat are prohibitive without a clear, high-value ROI. Using AI to streamline internal data flows, however, can have a more defensible and measurable cost-saving impact.

Second, it provides the financial rationale for the intense focus on production-grade RAG architectures. With RAG being mentioned in 92 prior articles and appearing in 5 articles this week alone, the community is grappling with moving it from prototype to platform. The article's warning about the dual storage cost and continuous pipeline maintenance for RAG is a critical, practical footnote to that trend. It adds a cost dimension to the recent framework for moving RAG systems from proof-of-concept to production and the cautionary tales of RAG failure at scale.

Finally, this analysis subtly supports the provocative thesis from Ethan Mollick on the 'end of the RAG era' as the dominant paradigm. If the operational overhead of maintaining accurate, large-scale RAG systems is this high, it creates a strong incentive for the industry to explore more efficient or alternative architectures for knowledge integration, such as more capable foundation models or managed agentic systems. The search for cost-effective, sustainable AI operations is now a primary driver of architectural innovation.