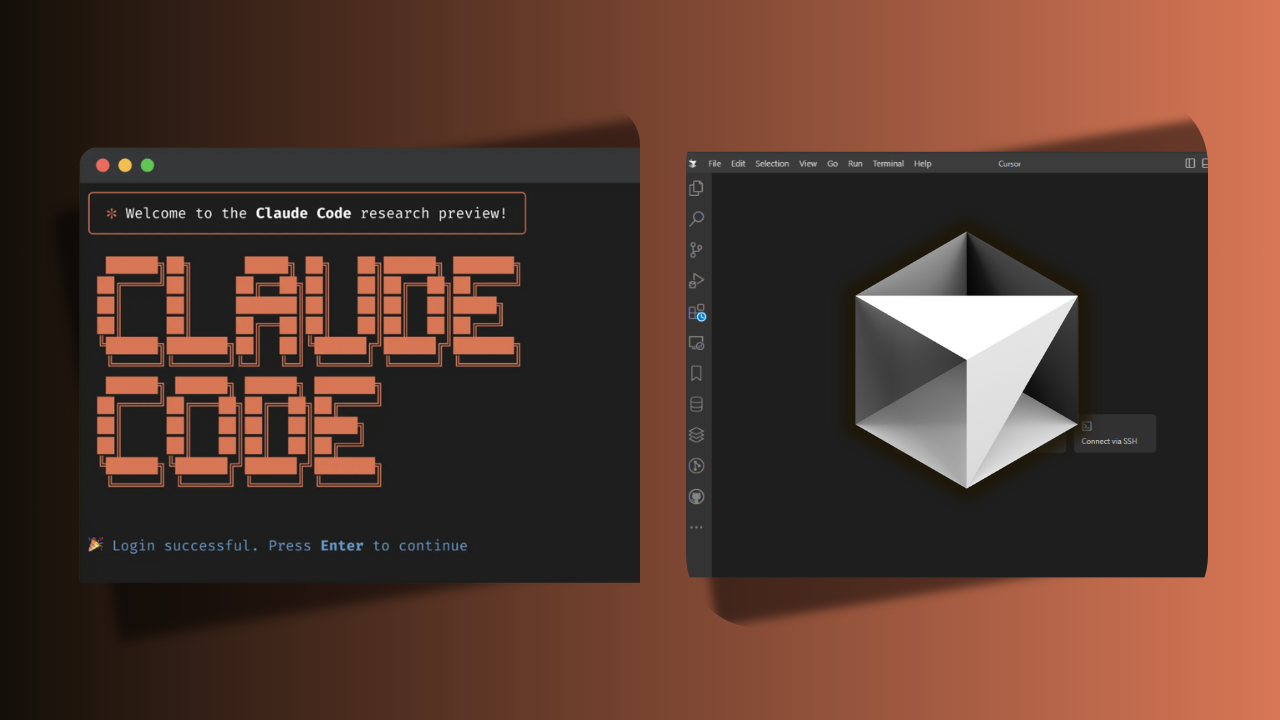

Unsloth AI has released a free, streamlined workflow for fine-tuning Google's latest Gemma 4 model. According to the company's social media announcement, the process requires only a web browser and a Google Colab notebook, which significantly lowers the technical and financial barriers to customizing a powerful, open-weight large language model.

What's New

Unsloth, a company specializing in optimization libraries for faster and more memory-efficient LLM training, has packaged its technology into a user-friendly Colab notebook. The key offering is the ability to fine-tune Google Gemma 4, the successor to the Gemma 3 family, at no cost. The notebook interface reportedly provides access to over 500 models, with Gemma 4 as the highlighted option.

The advertised process is a three-step sequence:

- Open the provided Unsloth Colab notebook.

- Select a model (e.g., Gemma 4) and a dataset.

- Initiate the training run.

This eliminates the traditional setup hurdles of provisioning cloud GPUs, managing dependencies, and writing training scripts, and compresses the whole process into a browser-based task.

Technical Details & Context

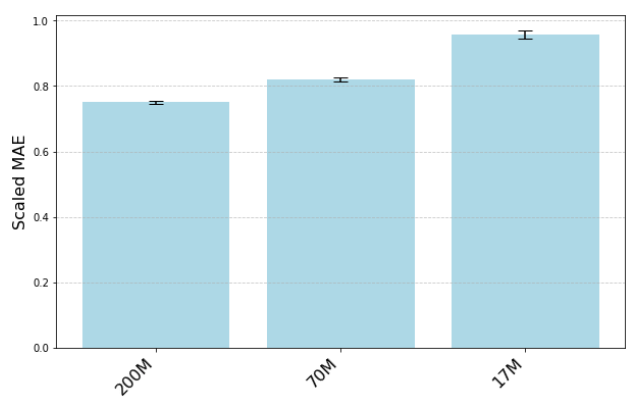

While the announcement is light on specific technical benchmarks for the fine-tuned outputs, the value proposition rests on Unsloth's core technology. According to the company, its libraries accelerate LoRA (Low-Rank Adaptation) and full fine-tuning by up to 5x while reducing memory usage by up to 70%. This efficiency is what makes free Colab-based fine-tuning feasible, because Colab's free tier provides limited GPU resources (typically a single NVIDIA T4 with 16 GB of memory) and limited session time.
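To see why memory efficiency matters on a free Colab GPU, the following back-of-the-envelope sketch estimates the memory needed just to hold a model's weights at 16-bit and 4-bit precision. The parameter counts are hypothetical placeholders, not confirmed Gemma 4 variants, and the estimate ignores activations, optimizer state, and framework overhead, so real usage will be higher.

```python
def weight_memory_gib(num_params: float, bits_per_param: int) -> float:
    """Memory needed to hold the model weights alone, in GiB."""
    return num_params * bits_per_param / 8 / 2**30

# An NVIDIA T4 ships with 16 GB of memory; convert to GiB for comparison.
T4_VRAM_GIB = 16e9 / 2**30

# Hypothetical model sizes for illustration only.
for billions in (4, 7, 14):
    params = billions * 1e9
    fp16 = weight_memory_gib(params, 16)
    int4 = weight_memory_gib(params, 4)
    print(
        f"{billions}B params: 16-bit {fp16:.1f} GiB, 4-bit {int4:.1f} GiB, "
        f"16-bit weights fit on T4: {fp16 < T4_VRAM_GIB}"
    )
```

Under these assumptions, 4-bit weights for all three sizes fit comfortably, while 16-bit weights for the largest size do not fit at all, which is the gap that quantization and adapter-based training are meant to close.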

Google's Gemma 4, released in early 2026, represents a significant step up from its predecessors. It is a more capable, efficient model family that has been benchmarked competitively against other leading open and closed models. Fine-tuning allows developers to specialize this general-purpose model for specific tasks like coding, creative writing, or domain-specific Q&A.

The Unsloth notebook likely uses QLoRA (Quantized LoRA) techniques, which combine model quantization with low-rank adapters. This is the standard method for fine-tuning large models on consumer-grade or limited cloud hardware, as it keeps the base model weights frozen and only trains a small set of additional parameters.
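The low-rank adapter idea can be illustrated with a minimal pure-Python sketch. This is not Unsloth's implementation: the class name `LoRALinear`, the dimensions, and the initialization values are assumptions chosen for illustration, and the 4-bit quantization of the frozen weights (the "Q" in QLoRA) is omitted. It shows only that the base matrix `W` stays fixed while two small matrices `A` and `B` carry the trainable update.

```python
import random

def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]

class LoRALinear:
    """Linear layer computing y = W x + (alpha / rank) * B (A x)."""

    def __init__(self, d_in, d_out, rank, alpha, seed=0):
        rng = random.Random(seed)
        # Frozen base weights, shape d_out x d_in (never updated in training).
        self.W = [[rng.gauss(0, 0.02) for _ in range(d_in)] for _ in range(d_out)]
        # Trainable adapter: A is rank x d_in (small random), B is d_out x rank (zeros).
        self.A = [[rng.gauss(0, 0.02) for _ in range(d_in)] for _ in range(rank)]
        self.B = [[0.0] * rank for _ in range(d_out)]
        self.scale = alpha / rank

    def forward(self, x):
        base = matvec(self.W, x)
        update = matvec(self.B, matvec(self.A, x))
        return [b + self.scale * u for b, u in zip(base, update)]

    def trainable_fraction(self):
        frozen = len(self.W) * len(self.W[0])
        trainable = len(self.A) * len(self.A[0]) + len(self.B) * len(self.B[0])
        return trainable / (frozen + trainable)

layer = LoRALinear(d_in=64, d_out=64, rank=4, alpha=8)
x = [1.0] * 64
print(f"trainable share of parameters: {layer.trainable_fraction():.1%}")
print("output equals base output before training:", layer.forward(x) == matvec(layer.W, x))
```

Because `B` starts at zero, the adapted layer initially behaves exactly like the base layer, and the share of trainable parameters shrinks further as the layer dimensions grow relative to the rank.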

How It Compares

This move continues the trend of democratizing LLM customization. Other platforms offer fine-tuning, but often with costs or complexity:

| Platform | Models | Cost | Interface |
| --- | --- | --- | --- |
| Unsloth Colab | Gemma 4, 500+ others | Free (Colab limits) | Browser/Notebook |
| Google Vertex AI | Gemma, PaLM | Pay-as-you-go | Cloud Console/API |
| Hugging Face AutoTrain | Community models | Credits required | Web UI/API |
| OpenAI Fine-tuning | GPT-3.5/4 | Per token cost | API |
| Together AI | Llama, Mistral | Compute credits | API |

Unsloth's differentiator is the combination of a free tier, a simplified Colab-based workflow, and its underlying optimization software that makes training on limited hardware practical.

Limitations & Considerations

Practitioners should be aware of the constraints inherent to this free offering:

- Colab Limitations: Google Colab's free sessions are time-limited (typically ~12 hours) and may disconnect. For fine-tuning larger datasets or models, this may be insufficient, requiring a Colab Pro subscription or managed cloud credits.

- Dataset Size: The "free" aspect is contingent on Colab's resources. Very large datasets may not be processable within the memory and time constraints.

- Model Variants: The announcement mentions "Gemma 4" but does not specify which parameter size (e.g., 7B, 14B) is available for free fine-tuning. The larger the model, the more resource-intensive the process.

- Serving: The notebook produces fine-tuned model weights (likely adapters). Deploying these fine-tuned models for inference still requires hosting infrastructure, which incurs cost.
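Whether a job fits inside a free Colab session can be estimated before starting it. The sketch below is a rough planning aid, not a measurement: the dataset size, batch size, and seconds-per-step values are hypothetical, and the 12-hour default mirrors the typical session limit mentioned above rather than a guaranteed allowance.

```python
import math

def fits_in_session(num_examples, epochs, batch_size, seconds_per_step,
                    session_hours=12.0):
    """Return (total_steps, estimated_hours, fits) for a training run."""
    steps = math.ceil(num_examples / batch_size) * epochs
    hours = steps * seconds_per_step / 3600
    return steps, hours, hours <= session_hours

# Hypothetical workload: 50,000 examples, batch size 8, 3 seconds per step.
for epochs in (1, 3):
    steps, hours, fits = fits_in_session(50_000, epochs, 8, 3.0)
    print(f"{epochs} epoch(s): {steps} steps, about {hours:.1f} h, fits: {fits}")
```

In practice the seconds-per-step figure should come from timing the first few steps of the actual run, and saving checkpoints periodically guards against an unexpected disconnect.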

gentic.news Analysis

This development is a tactical move by Unsloth that aligns with several broader industry trends we've been tracking. First, it directly leverages the momentum behind Google's Gemma family, which has seen rapid adoption since Gemma 2's release in mid-2024. By featuring Gemma 4, a model that is gaining developer mindshare, Unsloth is ensuring its tool meets immediate market demand.

Second, this continues the fierce competition in the LLM optimization stack. Unsloth is competing with libraries like Axolotl, LLaMA-Factory, and Hugging Face's PEFT and TRL. By offering a zero-friction, free entry point, Unsloth is effectively running a top-of-funnel user acquisition strategy. Developers who start with the free Colab notebook may later purchase Unsloth's paid licenses for faster on-premise training or use its upcoming managed cloud service, a pattern we observed when Replicate and Banana Dev initially offered free credits.

Finally, this underscores the strategic importance of fine-tuning as a service. As closed-model providers like OpenAI and Anthropic monetize their fine-tuning APIs, there's a parallel market for tools that empower developers to fine-tune open-weight models efficiently. Unsloth's play here is to own the developer workflow for open-model customization, a space where Hugging Face is also aggressively investing with AutoTrain and Spaces. This announcement can be seen as a counter to Hugging Face's integrated offering, emphasizing simplicity and a specific, popular model (Gemma 4) over a broader platform.

Frequently Asked Questions

Is fine-tuning Google Gemma 4 really free?

Yes, the fine-tuning process itself is free using Unsloth's Colab notebook and Google Colab's free GPU tier. However, you are subject to Google Colab's usage limits on compute time and memory. For larger jobs, you may hit these limits and need to use paid Colab tiers or alternative cloud credits.

What do I need to start fine-tuning Gemma 4 with Unsloth?

You only need a Google account to access Google Colab and a web browser. The Unsloth notebook contains all the necessary code and instructions. You will also need your training dataset prepared in a supported format (like JSONL).
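As a minimal sketch of preparing a dataset in JSONL (one JSON object per line), the snippet below writes and re-reads a small file using only the Python standard library. The field names `instruction` and `response` are hypothetical; the schema the notebook actually expects is not specified in the announcement, so adjust the keys to match it.

```python
import json
import os
import tempfile

def write_jsonl(path, records):
    """Write one JSON object per line."""
    with open(path, "w", encoding="utf-8") as f:
        for record in records:
            f.write(json.dumps(record, ensure_ascii=False) + "\n")

def read_jsonl(path):
    """Read a JSONL file back into a list of dicts, skipping blank lines."""
    with open(path, encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]

# Hypothetical field names; match whatever the notebook's dataset cell expects.
examples = [
    {"instruction": "Summarize: The cat sat on the mat.", "response": "A cat sat on a mat."},
    {"instruction": "Translate to French: Good morning.", "response": "Bonjour."},
]

path = os.path.join(tempfile.mkdtemp(), "train.jsonl")
write_jsonl(path, examples)
loaded = read_jsonl(path)
print(f"wrote {len(loaded)} records to {path}")
```

Reading the file back before uploading it is a cheap way to catch malformed lines that would otherwise fail partway through a training run.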

How does Unsloth's free offering compare to Hugging Face AutoTrain?

Hugging Face AutoTrain is a more comprehensive web UI that supports many models and tasks but requires purchasing compute credits once you exceed very small free tiers. Unsloth's Colab notebook is a more bare-bones, code-first approach that is completely free but relies on you managing the Colab environment. Unsloth may be faster due to its optimized kernels, while AutoTrain offers more hand-holding.

Can I use the fine-tuned Gemma 4 model commercially?

This depends on the license of the base Gemma 4 model from Google and your use case. Earlier Gemma releases were distributed under terms that permit commercial use subject to Google's usage restrictions, so check the terms published for Gemma 4 specifically. The fine-tuned adapters you create are your own, but they remain subject to the base model's terms. You must also ensure your training data carries appropriate rights for commercial use.