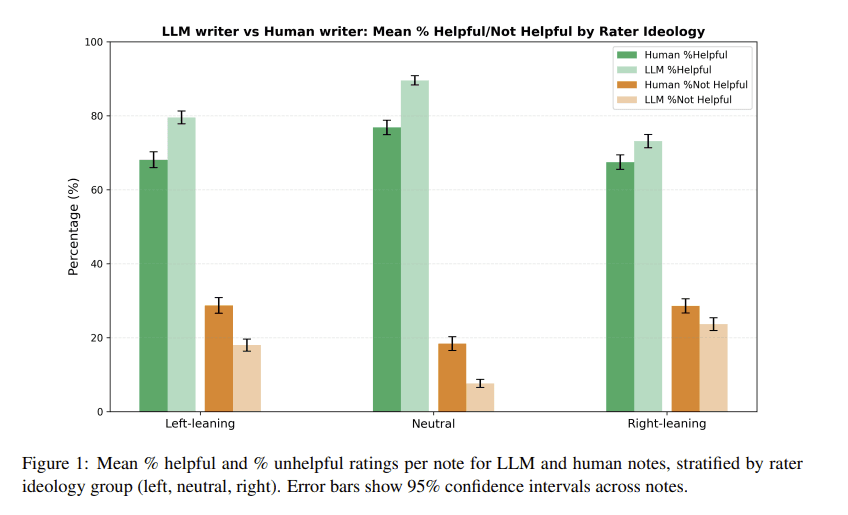

A recent experiment has found that AI-generated fact-checking notes are consistently rated as more helpful, and perceived as less ideologically biased, than those written by humans. The research, highlighted by Wharton professor Ethan Mollick, suggests large language models (LLMs) might offer a path to more broadly accepted information verification in polarized online environments.

What the Experiment Found

The study, conducted by researchers from Stanford University and the Swiss Federal Institute of Technology Lausanne (EPFL), tested whether LLM-generated "Community Notes"—the fact-checking system used on platforms like X (formerly Twitter)—could achieve broader acceptance than human-written notes.

Key findings from the paper include:

- Higher Helpfulness Ratings: AI-generated notes received significantly higher helpfulness ratings from users across the political spectrum.

- Reduced Ideological Perception: Raters perceived the AI notes as less ideological than human-written notes addressing the same claims.

- Cross-Ideological Acceptance: The AI notes achieved what the researchers call "broad cross-ideological acceptance," meaning they received positive ratings from both liberal and conservative raters more consistently than human-written notes did.

The researchers tested multiple LLMs (GPT-4, Claude 2, and Llama 2) and found that all three produced notes rated as more helpful and less ideological than the human-written ones.
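To make the idea of cross-ideological acceptance concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not the metric used in the paper or the scoring that X runs in production: the function names, the 0-to-1 score scale, the two group labels, and the 0.5 threshold are all hypothetical. A note counts as broadly accepted only if every group's average rating clears the threshold, and the gap between group averages serves as a rough polarization signal.

```python
from statistics import mean


def group_means(ratings):
    """Average score per rater group.

    `ratings` is a list of (rater_group, score) pairs, with score in [0, 1].
    """
    by_group = {}
    for group, score in ratings:
        by_group.setdefault(group, []).append(score)
    return {group: mean(scores) for group, scores in by_group.items()}


def is_broadly_accepted(ratings, threshold=0.5,
                        groups=("liberal", "conservative")):
    """True only if every listed group rates the note at or above threshold."""
    means = group_means(ratings)
    return all(g in means and means[g] >= threshold for g in groups)


def polarization_gap(ratings, groups=("liberal", "conservative")):
    """Absolute difference between the two groups' average scores."""
    means = group_means(ratings)
    return abs(means[groups[0]] - means[groups[1]])


# Hypothetical ratings: one note that splits raters, one that bridges them.
polarized = [("liberal", 1.0), ("liberal", 1.0),
             ("conservative", 0.0), ("conservative", 0.0)]
bridging = [("liberal", 1.0), ("liberal", 0.5),
            ("conservative", 1.0), ("conservative", 0.5)]

print(is_broadly_accepted(polarized), polarization_gap(polarized))
print(is_broadly_accepted(bridging), polarization_gap(bridging))
```

Requiring agreement from each group, rather than a high overall average, is what separates a bridging note from one that is simply popular with a single side.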

How the Study Worked

The experiment used a methodology that mirrors real-world fact-checking scenarios:

- Claim Selection: Researchers selected 100 claims from Twitter that had previously received human-written Community Notes.

- AI Note Generation: For each claim, they prompted LLMs to generate fact-checking notes following the same guidelines used by human Community Notes contributors.

- Rating Process: A diverse group of raters (balanced across political ideology) evaluated both human and AI notes for helpfulness and perceived ideology.

- Blind Evaluation: Raters were not told which notes were AI-generated versus human-written.

The AI models were prompted to write notes that were informative, neutral in tone, and focused on verifiable facts rather than opinion. The researchers found that even simple prompts like "Write a helpful note that explains relevant context" produced notes that outperformed human-written ones in cross-ideological acceptance.
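The blind evaluation step described above can be sketched roughly as follows. This is a hypothetical illustration rather than the researchers' actual tooling: the `Note` type, the function names, the item ID format, and the placeholder note texts are all assumptions. Only the short prompt string is taken from the article. The sketch shuffles human and AI notes together and keeps the source labels in a separate key that raters never see.

```python
import random
from dataclasses import dataclass

# The short prompt quoted in the article; everything else here is hypothetical.
SIMPLE_PROMPT = "Write a helpful note that explains relevant context"


@dataclass(frozen=True)
class Note:
    claim_id: int
    source: str  # "human" or "ai"; never shown to raters
    text: str


def build_prompt(claim_text: str) -> str:
    """Combine the simple instruction with the post to be checked."""
    return f"{SIMPLE_PROMPT}.\n\nPost: {claim_text}"


def blind_rating_sheet(notes, seed=0):
    """Shuffle notes and hide their source behind opaque item IDs.

    Returns (sheet, key): `sheet` is what raters see, while `key` maps each
    item ID back to its source and stays with the researchers.
    """
    rng = random.Random(seed)  # seeded so the ordering is reproducible
    shuffled = list(notes)
    rng.shuffle(shuffled)
    sheet, key = [], {}
    for i, note in enumerate(shuffled):
        item_id = f"item-{i:03d}"
        sheet.append(
            {"item_id": item_id, "claim_id": note.claim_id, "text": note.text}
        )
        key[item_id] = note.source
    return sheet, key


notes = [
    Note(1, "human", "Placeholder human-written note for claim 1."),
    Note(1, "ai", "Placeholder model-written note for claim 1."),
]
sheet, key = blind_rating_sheet(notes, seed=42)
```

Keeping the key apart from the rating sheet means ratings can be joined back to their source only at analysis time.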

Why This Matters for Online Platforms

Community Notes and similar crowd-sourced fact-checking systems face significant challenges with polarization. Human-written notes often receive polarized ratings—highly rated by one political group and poorly rated by another—which limits their effectiveness and reach.

This research suggests AI could help overcome this limitation by:

- Reducing Perceived Bias: AI notes are perceived as less ideological, making them more acceptable across political divides.

- Improving Scale: AI can generate fact-checks quickly and consistently, potentially addressing the volume problem in content moderation.

- Maintaining Quality: The study found AI notes were factually accurate and followed Community Notes guidelines effectively.

However, the researchers caution that AI systems aren't perfect and require careful design to avoid their own biases. The study also notes that human oversight remains crucial for complex or nuanced claims.

Technical Implementation Considerations

For platforms considering implementing AI-assisted fact-checking, several practical considerations emerge:

- Prompt Engineering Matters: The study found that simple, neutral prompts worked best. Overly complex instructions, or explicit attempts to force "neutrality," often backfired.

- Model Choice: While all tested LLMs performed well, GPT-4 generated notes with the highest cross-ideological acceptance scores.

- Hybrid Approaches: The most effective system might combine AI generation with human review, using AI to draft notes that humans then refine or approve.

- Transparency Questions: The study didn't test whether disclosure of AI authorship affects ratings—an important consideration for platform implementation.
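One way to picture the hybrid approach from the list above is a small review gate in which an AI draft is published only after a human approves it, optionally with edits. The sketch below is a hypothetical design, not something described in the study: `DraftNote`, `Status`, `review`, and `publishable` are illustrative names, and a real platform would need persistence, permissions, and an appeals path.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class Status(Enum):
    DRAFT = "draft"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class DraftNote:
    claim_id: int
    text: str  # the AI-generated draft, possibly edited by a reviewer
    status: Status = Status.DRAFT
    history: list = field(default_factory=list)  # audit trail of decisions


def review(note: DraftNote, reviewer: str, approve: bool,
           edited_text: Optional[str] = None) -> DraftNote:
    """Record a human decision on a draft; each draft is reviewed once."""
    if note.status is not Status.DRAFT:
        raise ValueError("note has already been reviewed")
    if edited_text is not None:
        note.text = edited_text
        note.history.append(f"{reviewer}: edited")
    note.status = Status.APPROVED if approve else Status.REJECTED
    note.history.append(f"{reviewer}: {note.status.value}")
    return note


def publishable(notes):
    """Only human-approved notes are eligible to be shown."""
    return [n for n in notes if n.status is Status.APPROVED]


drafts = [
    DraftNote(1, "Placeholder AI draft for claim 1."),
    DraftNote(2, "Placeholder AI draft for claim 2."),
]
review(drafts[0], "reviewer_a", approve=True, edited_text="Refined note for claim 1.")
review(drafts[1], "reviewer_b", approve=False)
approved = publishable(drafts)
```

The `history` list is a simple stand-in for an audit trail, which could also help answer the open transparency question by recording who changed or approved each note.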

Limitations and Future Research

The study acknowledges several limitations:

- The claims tested were relatively straightforward factual claims, not complex political narratives.

- The rating pool, while politically balanced, may not represent all user demographics.

- Long-term effects of AI fact-checking on user trust and behavior remain unknown.

Future research should explore whether these findings hold for more complex claims, how different disclosure policies affect acceptance, and whether AI fact-checking can scale effectively across languages and cultural contexts.

gentic.news Analysis

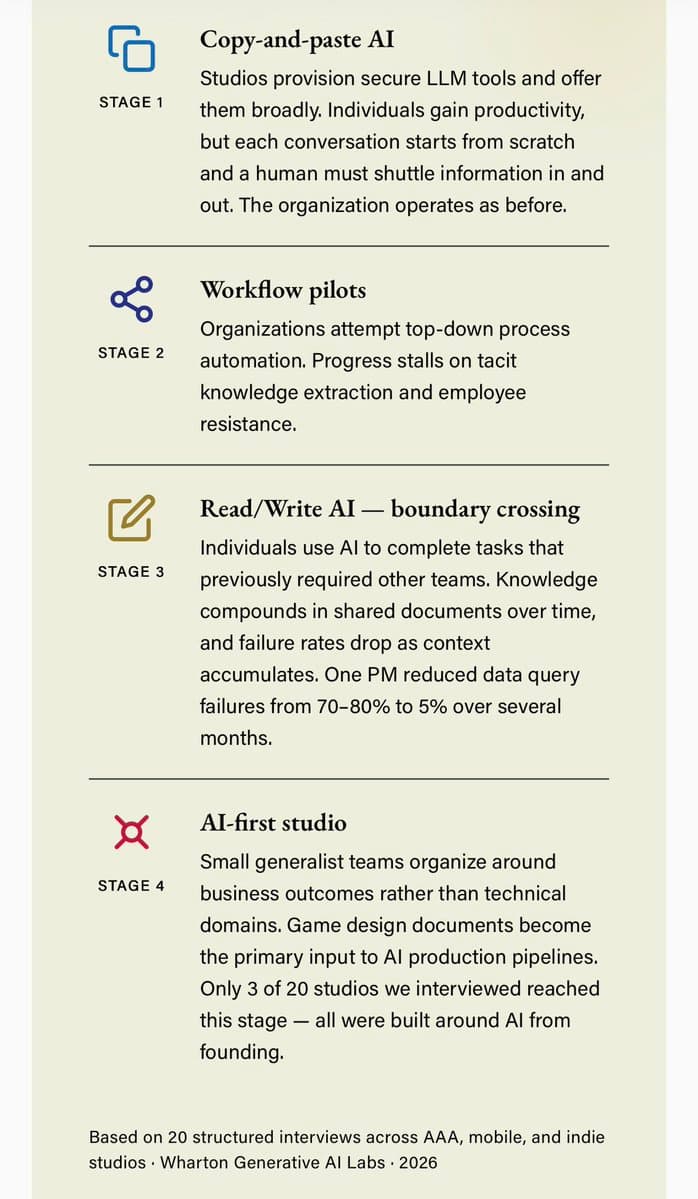

This research arrives at a critical juncture in the evolution of online information ecosystems. Following our coverage of Meta's AI fact-checking partnerships in late 2025, we're seeing a clear trend toward AI-assisted content moderation. What's particularly significant here is the focus on perceived neutrality rather than just accuracy—a recognition that in polarized environments, how information is presented matters as much as its factual content.

This aligns with broader industry movements toward AI-mediated communication. As we noted in our analysis of Anthropic's Constitutional AI approach, there's growing recognition that AI systems can sometimes navigate ideological minefields more deftly than humans precisely because they lack personal political commitments. However, this doesn't mean AI is inherently neutral—training data and model design inevitably embed certain perspectives.

The cross-ideological acceptance finding is particularly noteworthy given current trends in AI policy. With the EU's AI Act being phased in and the U.S. developing its own regulatory framework, platforms face increasing pressure to demonstrate effective content moderation. AI-assisted fact-checking that achieves broader acceptance could help platforms meet these requirements while potentially reducing moderation costs.

Looking forward, the most interesting development will be whether platforms actually implement these findings. X's Community Notes system has been controversial but influential, and if Elon Musk's platform adopts AI-generated notes at scale, it could set a precedent for the entire industry. The key question is whether users will maintain trust in fact-checking systems as they become more automated—a concern that echoes debates we covered about Google's AI-generated search summaries.

Frequently Asked Questions

Can AI fact-checking eliminate political bias completely?

No. While this study found AI-generated fact-checks were perceived as less ideological, AI systems themselves have biases embedded in their training data and design. The researchers emphasize that AI isn't inherently neutral—it reflects the data it was trained on. However, well-designed AI systems might present information in ways that are more acceptable across political divides than human-written content.

Will platforms replace human fact-checkers with AI?

The research suggests a hybrid approach is most likely. AI can generate initial drafts quickly and consistently, but human oversight remains crucial for complex claims, nuanced context, and ethical judgments. The study's authors explicitly caution against fully automated fact-checking systems without human review mechanisms.

How does this affect user trust in online information?

This remains an open question. The study measured immediate perceptions of helpfulness and ideology but didn't examine long-term trust dynamics. There's a risk that if users discover fact-checks are AI-generated, they might distrust them regardless of quality—similar to skepticism about AI-generated news articles. Platforms will need to carefully consider transparency policies.

What are the biggest barriers to implementing AI fact-checking?

Technical challenges include handling complex or ambiguous claims, avoiding hallucination (AI inventing facts), and maintaining consistency across languages and cultures. Perhaps more significant are governance challenges: who controls the AI systems, what guidelines they follow, and how appeals are handled when users dispute AI-generated fact-checks.