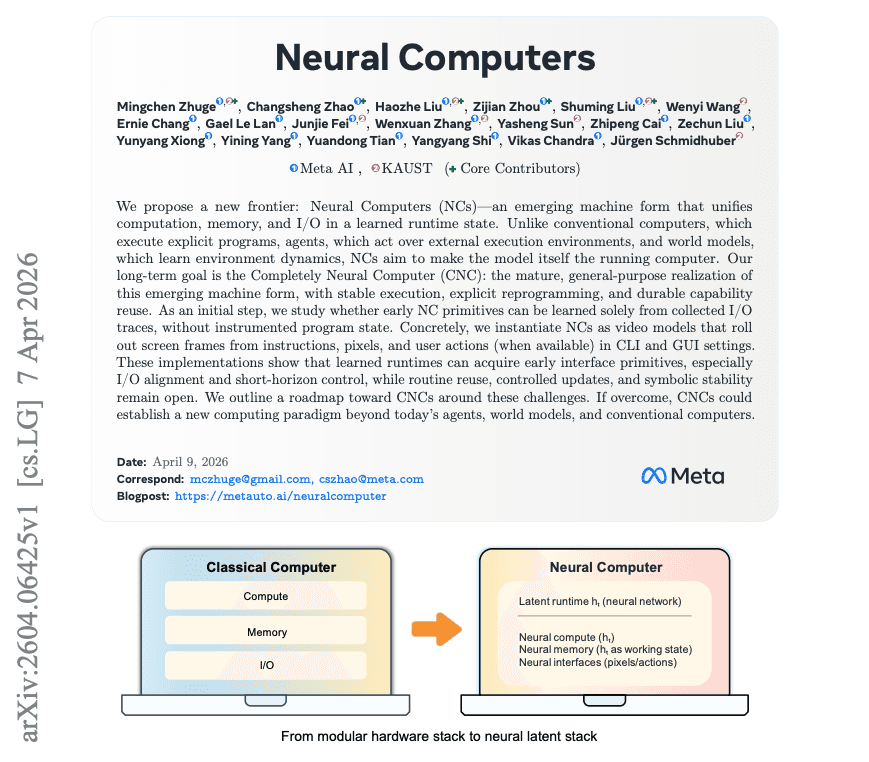

A new research paper from Meta AI and KAUST (King Abdullah University of Science and Technology) proposes a fundamental shift in how AI agents interact with computing environments. Instead of an agent using an external computer—relying on its operating system for memory, state, and execution—the vision is for the model to become the computer. The researchers term this a Neural Computer (NC): a learned runtime where computation, memory, and I/O all live inside a single, evolving latent state.

This work, shared via a paper titled "Neural Computers," moves beyond incremental improvements to agent tool-use. It questions the foundational assumption that AI must depend on external, symbolic systems (like a Python interpreter or a GUI) to perform complex, stateful tasks. The early prototypes are built on video models that learn to roll out terminal and GUI interfaces directly from prompts, pixel inputs, and user actions.

Key Takeaways

- Meta AI and KAUST research introduces Neural Computers, a paradigm where AI models internalize computation, memory, and I/O.

- Early prototypes show 98.7% GUI cursor control and arithmetic accuracy rising from 4% to 83% via reprompting.

What the Researchers Built: From External Tools to Internalized Runtime

Today's AI agents operate as clients to a host computer. They send commands (like ls or a mouse click) to an external runtime (the OS), which executes the action, manages state, and returns the result. This creates a brittle loop: every step pays a round-trip latency cost, and every exchange is a point where the agent can misread the system's state.
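As a rough illustration of this client/host loop, the sketch below uses a toy in-memory runtime. `ToyRuntime`, `scripted_agent`, and the two supported commands are hypothetical stand-ins, not anything from the paper. The point is only that all state lives outside the agent and every step is a round trip.

```python
from dataclasses import dataclass, field


@dataclass
class ToyRuntime:
    """Hypothetical stand-in for an external OS: it owns all state."""
    files: list[str] = field(default_factory=list)

    def execute(self, command: str) -> str:
        # The runtime, not the agent, interprets the command and mutates state.
        parts = command.split()
        if not parts:
            return "error: empty command"
        if parts[0] == "touch" and len(parts) == 2:
            if parts[1] not in self.files:
                self.files.append(parts[1])
            return ""
        if parts[0] == "ls" and len(parts) == 1:
            return "\n".join(sorted(self.files))
        return f"error: unknown command {command!r}"


def scripted_agent(observation: str, step: int) -> str:
    """Hypothetical agent policy: it only sees text returned by the runtime."""
    plan = ["touch notes.txt", "touch data.csv", "ls"]
    return plan[step]


def run_episode(runtime: ToyRuntime, steps: int) -> list[str]:
    observations = []
    observation = ""
    for step in range(steps):
        command = scripted_agent(observation, step)  # agent -> runtime
        observation = runtime.execute(command)       # runtime -> agent
        observations.append(observation)
    return observations


if __name__ == "__main__":
    # The final observation is the directory listing held by the runtime.
    print(run_episode(ToyRuntime(), steps=3)[-1])
```

The agent never holds the file list itself. If it loses track of what the runtime contains, its only recourse is another round trip.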

The Neural Computer inverts this relationship. The model itself maintains a persistent, internal "machine state." This latent state is updated through a learned computational process that simulates the effects of actions on an interface. In their initial implementation, the researchers use a video-prediction architecture. Given a starting screen (pixels) and a user command (text or action), the model predicts the next screen state, effectively rendering the outcome of the computation.

Think of it as the AI dreaming up the entire computer interface and its evolution in response to interaction, all within its own neural network. The "computer" is not a separate piece of silicon and software, but a set of learned dynamics inside the model.

Key Results: Promising but Grounded Prototypes

The paper presents results from three probing experiments designed to test the core capabilities of a Neural Computer:

| Experiment | Task | Result |
| --- | --- | --- |
| CLI Rendering | Generating terminal output from commands | Measurable improvement in output fidelity over baselines. |
| GUI Cursor Control | Moving a cursor to a target on a screen | 98.7% success rate when provided with explicit visual supervision. |
| Arithmetic Reasoning | Performing multi-step calculations internally | Accuracy on a probe task jumped from 4% to 83% using a "reprompting" technique that refreshes the latent state. |

The 98.7% cursor control demonstrates that the model can learn precise, goal-directed visual motor control within its generated interface. The dramatic leap in arithmetic accuracy, from near-random to highly competent, is particularly telling. The reprompting technique acts as an internal "reset" or state refresh, suggesting that managing the stability of the latent computational state is critical for reliable operation.
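To put the arithmetic result in perspective, the short calculation below separates the absolute gain from the relative one. The helper name `accuracy_gain` is ours, not the paper's; the 4% and 83% figures are the ones reported in this article.

```python
def accuracy_gain(before: float, after: float) -> tuple[float, float]:
    """Return (absolute gain in percentage points, relative multiplier)."""
    if before <= 0:
        raise ValueError("before must be positive to compute a ratio")
    return after - before, after / before


if __name__ == "__main__":
    points, ratio = accuracy_gain(4.0, 83.0)
    # prints "+79 percentage points, 20.75x the baseline"
    print(f"+{points:.0f} percentage points, {ratio:.2f}x the baseline")
```

In other words, the improvement is 79 percentage points, or roughly a twentyfold increase over the baseline, which is not the same as an "83% boost".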

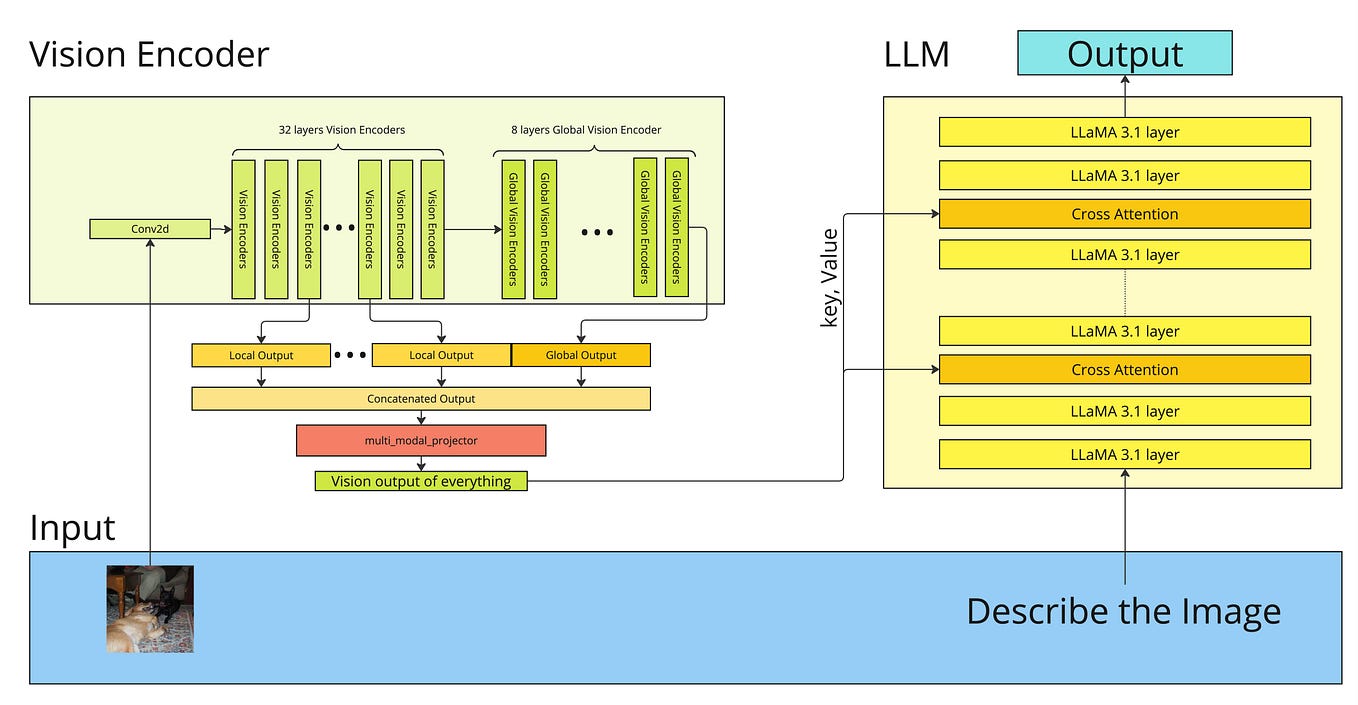

How It Works: Video Models as Learned Simulators

The technical approach builds on modern video prediction models. The Neural Computer is framed as a latent video model that generates the sequence of screen states (s_1, s_2, ...) constituting the human-computer interaction.

- Input: The model takes a concatenated input of the current screen pixels and an embedding of the user's action (e.g., a keystroke, a mouse command, or a natural language prompt).

- Core Computation: Instead of just predicting the next raw pixel frame, the model operates on a compressed latent representation. The transformation in this latent space constitutes the "computation." Memory is implemented as persistence in this latent state across prediction steps.

- Output: The model decodes the updated latent state into the next predicted screen of the interface (a CLI output, a GUI with a moved cursor, etc.).

- I/O: The screen serves as the primary output channel. User actions, provided as input for the next step, form the input channel. This creates a closed loop.
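The four roles above can be sketched as a toy closed loop. This is a minimal illustration under loud assumptions: `ToyNeuralComputer`, its hand-written update rule, and the one-number action embedding are all hypothetical, and fixed arithmetic stands in for what would be learned weights in a real model. It is not the paper's architecture.

```python
from dataclasses import dataclass

Screen = list[float]  # flattened pixel intensities (toy stand-in)
Latent = list[float]


@dataclass
class ToyNeuralComputer:
    """Hypothetical stand-in: fixed arithmetic replaces learned weights."""
    latent: Latent

    def encode_action(self, action: str) -> float:
        # Assumed embedding: map an action string to a single number.
        return (sum(ord(c) for c in action) % 10) / 10.0

    def step(self, screen: Screen, action: str) -> Screen:
        # Input: current screen pixels plus an action embedding.
        a = self.encode_action(action)
        pooled = sum(screen) / len(screen)
        # Core computation: update the latent from its previous value,
        # the screen, and the action. Because self.latent persists
        # across calls, it plays the role of memory.
        self.latent = [0.5 * z + 0.25 * pooled + 0.25 * a for z in self.latent]
        # Output: decode the latent into the next predicted screen.
        value = sum(self.latent) / len(self.latent)
        return [value] * len(screen)


def rollout(nc: ToyNeuralComputer, first_screen: Screen,
            actions: list[str]) -> list[Screen]:
    # I/O loop: each predicted screen is fed back in with the next action.
    screens = [first_screen]
    for action in actions:
        screens.append(nc.step(screens[-1], action))
    return screens


if __name__ == "__main__":
    nc = ToyNeuralComputer(latent=[0.0, 0.0])
    for screen in rollout(nc, [0.0] * 4, ["ls", "click"]):
        print(screen)
```

Two instances given the same screen and action but different starting latents produce different next screens, which is the sense in which the latent state, rather than an external OS, carries the history.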

The training data consists of screen recordings of human-computer interactions (like terminal sessions or GUI usage). The model learns the dynamics of how the screen changes in response to actions, internalizing the rules of the operating system and applications.
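One plausible way to turn such a recording into supervised examples is to pair each action with the frames immediately before and after it. The sketch below assumes that alignment and uses strings in place of pixel frames; the `Transition` type and `make_transitions` helper are hypothetical, since this article does not describe the paper's actual data format.

```python
from typing import NamedTuple


class Transition(NamedTuple):
    screen: str       # frame shown before the action
    action: str       # what the user did
    next_screen: str  # frame shown after the action (prediction target)


def make_transitions(frames: list[str], actions: list[str]) -> list[Transition]:
    """Pair each action with the frames before and after it.

    Assumes the recording is aligned so that actions[i] happens between
    frames[i] and frames[i + 1].
    """
    if len(frames) != len(actions) + 1:
        raise ValueError("expected exactly one more frame than actions")
    return [
        Transition(frames[i], actions[i], frames[i + 1])
        for i in range(len(actions))
    ]


if __name__ == "__main__":
    # Strings stand in for pixel frames of a terminal session.
    frames = ["$ ", "$ ls", "$ ls\nnotes.txt\n$ "]
    actions = ["type:ls", "key:Enter"]
    for t in make_transitions(frames, actions):
        print(repr(t.screen), "+", t.action, "->", repr(t.next_screen))
```

A model trained on triples like these would be asked to predict `next_screen` from `screen` and `action`, which is how the rules of the underlying system could end up encoded in its weights.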

Why It Matters: A New Substrate for AI Computation

This research is not about building a better agent for today's computers. It's an early exploration of a different machine form. The long-term implication is a move toward AI-native computing substrates where the traditional, rigid stack of hardware, kernel, OS, and application is replaced or deeply integrated with a learned, adaptive runtime.

Potential advantages include:

- Lower Latency: Eliminating the round-trip between agent and external runtime could make interactions feel instantaneous.

- Greater Robustness: The model learns a unified, probabilistic understanding of the entire system, potentially making it more forgiving of ambiguous commands.

- Novel Abilities: Neural Computers could learn to perform computations or manage state in ways that are impossible or inefficient in symbolic systems.

However, the authors are clear about the significant open challenges: achieving symbolic reliability (consistently correct arithmetic, for example), enabling stable reuse of learned computational subroutines, and establishing runtime governance and safety for a system whose entire state is a learned latent representation.

gentic.news Analysis

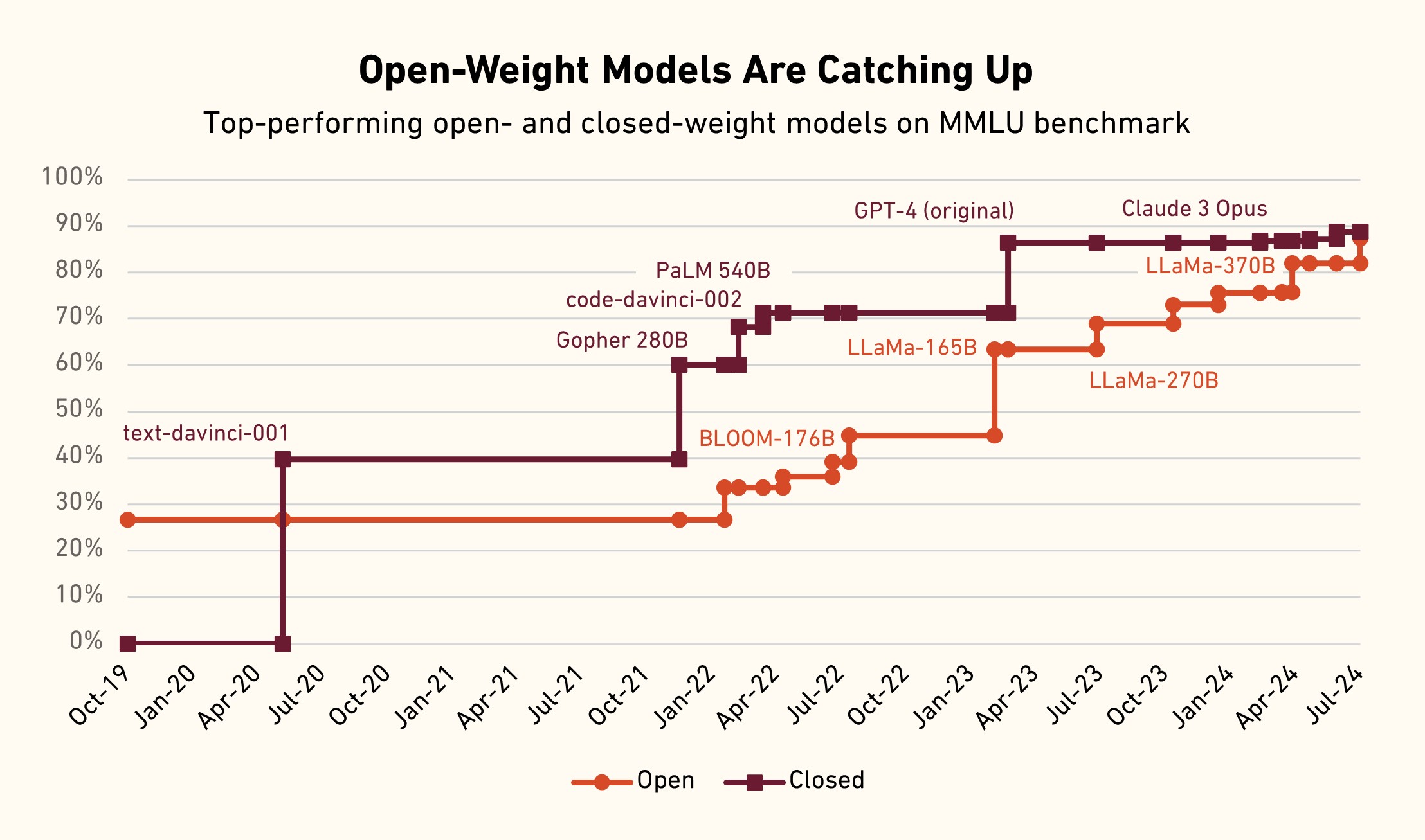

This paper from Meta AI and KAUST fits into a clear and accelerating trend we've been tracking: the push to move AI from a user of computational tools to the source of computation itself. This aligns with other research threads like Google DeepMind's SIMA, an agent trained to understand general gaming environments, and the broader industry investment in AI agents. However, Neural Computers take a more radical, integrative approach. While SIMA learns to act within a given game engine, the NC aims to become the engine.

Meta's research organization has been particularly active in foundational agent research. This work on Neural Computers can be seen as a conceptual follow-up to previous projects like Cicero (a Diplomacy-playing agent) and the Toolformer line of work, which taught models to call external APIs. Those projects accepted the external runtime as a given. Neural Computers question that premise entirely.

The partnership with KAUST is also notable. KAUST has emerged as a significant hub for ambitious, long-term AI research, often collaborating with major tech labs on projects that are more exploratory than product-driven. This paper is a classic example of that dynamic.

The central technical challenge highlighted here—maintaining a stable, reliable latent state for computation—is the same fundamental problem plaguing long-context LLMs and stateful agents. If the field can solve this "state decay" issue for Neural Computers, the breakthroughs would likely transfer back and improve today's more conventional AI systems. Practitioners should watch for future work on latent state management and internal computation verification as key indicators of progress in this paradigm.

Frequently Asked Questions

What is a Neural Computer?

A Neural Computer is a proposed AI architecture where a machine learning model internalizes the functions of a computer runtime—computation, memory, and input/output—within a single, evolving latent state. Instead of relying on an external operating system (like Windows or Linux) to execute commands and manage state, the model itself learns to simulate these dynamics, generating the resulting interface (e.g., a terminal or GUI) as an output.

How is a Neural Computer different from an AI agent?

A traditional AI agent is a program that runs on a computer. It uses the computer's OS and tools (like a calculator app or a database) to complete tasks. A Neural Computer aims to be the computer. The agent and the runtime are fused into one learned system. The goal is to eliminate the dependency on and latency of communicating with an external, symbolic machine.

What were the key results from the Meta AI and KAUST paper?

The key experimental results include a 98.7% success rate for GUI cursor control within the generated interface and a dramatic improvement on an internal arithmetic reasoning task, where accuracy rose from 4% to 83% using a reprompting technique to refresh the model's internal state. These demonstrate early feasibility for precise control and internal computation.

What are the main challenges facing Neural Computers?

The researchers identify three major open challenges: 1) Symbolic Reliability: ensuring the learned computation is as consistently accurate as a traditional computer for logic and math, 2) Stable Reuse: the ability to reliably call and re-use learned "subroutines" within the latent state, and 3) Runtime Governance: how to control, debug, and ensure the safety of a system whose entire operation is a learned black-box dynamic.