A brief but pointed observation from AI researcher and professor Ethan Mollick has ignited discussion about the strategic direction of Meta's AI releases. Based on what appears to be leaked information, Meta's upcoming model, internally referred to as "Spark," is expected to be a closed-weight release. That would be a sea change for a company that has built immense goodwill and industry influence by releasing the weights of its most significant AI models, from Llama 2 to the recent Llama 3 series.

What Happened

On X (formerly Twitter), Ethan Mollick commented on what seems to be pre-release information about a new Meta AI model called "Spark." His central observation was not about benchmark scores or capabilities, but about licensing: "The most important thing to note is that it is not open weights."

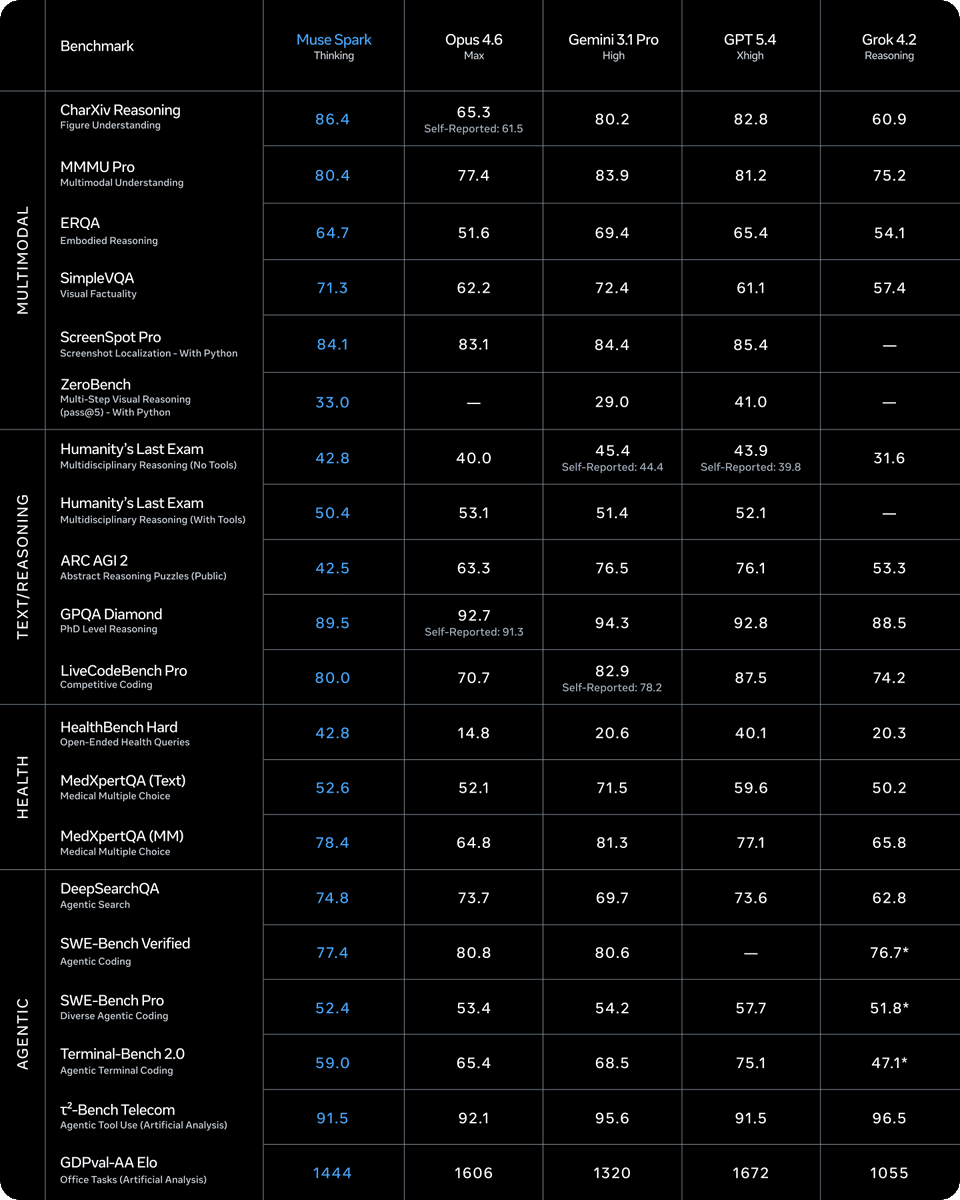

He noted that while Spark seems to be "a good model," it is "still trailing the current series of releases" from leading frontier labs like OpenAI, Anthropic, and Google DeepMind. The defining characteristic—and the core of the discussion—is the apparent departure from Meta's established open-weight strategy. Mollick concluded that without open weights, "it is a lot harder to predict the value of Spark," highlighting how the model's impact is now tied to proprietary performance rather than open ecosystem effects.

Context: Meta's Open-Weight Legacy

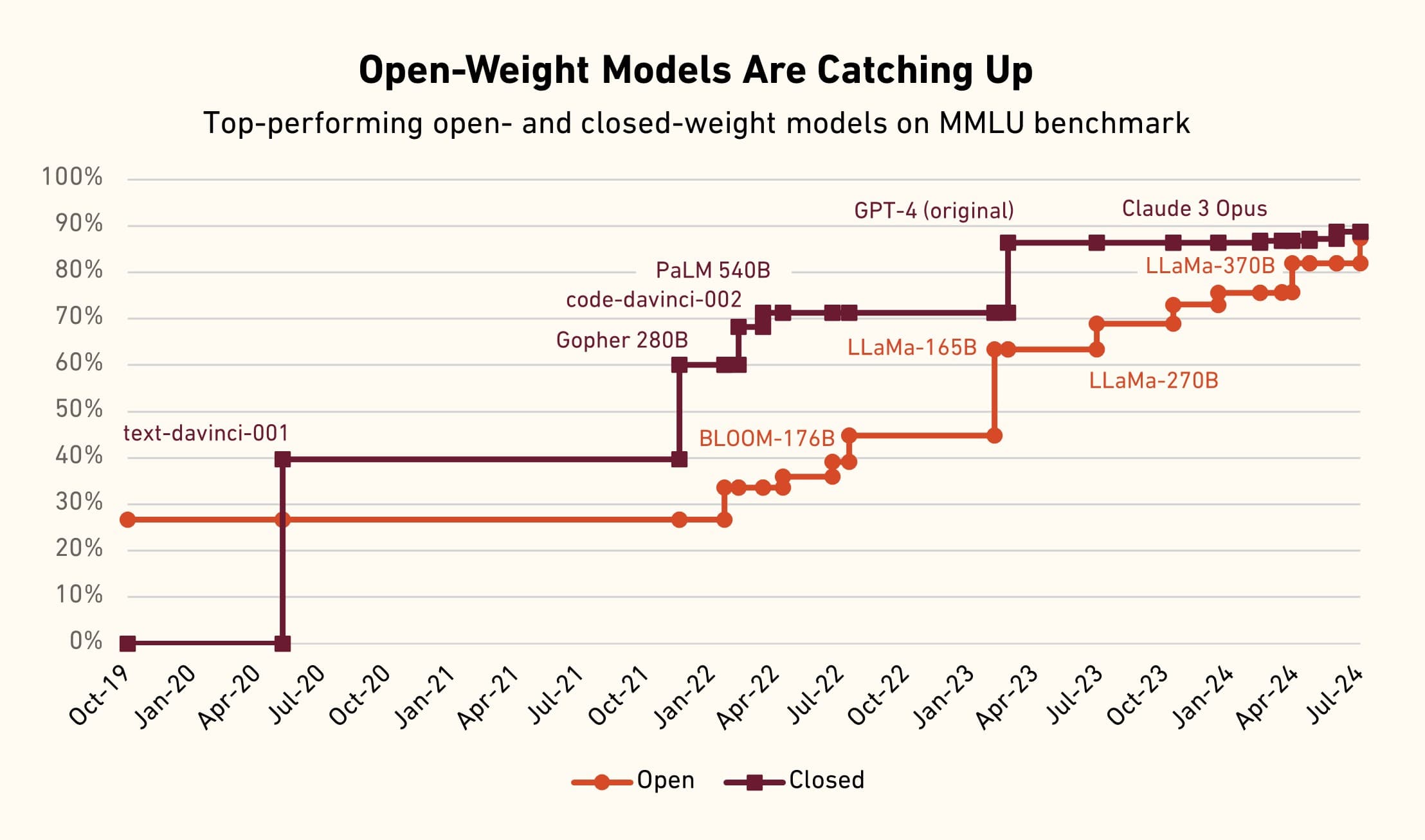

Meta's commitment to open-weight AI has been a defining pillar of the current AI landscape. The release of Llama 2 in July 2023 provided one of the first broadly capable open-weight large language models licensed for commercial use, catalyzing a wave of innovation, fine-tunes, and deployments that would have been far harder with a closed model. Its successor, Llama 3, released in April 2024, solidified this position, offering performance at the top of the open-weight field in the 8B and 70B parameter classes, which became the backbone for countless startups, researchers, and developers.

This strategy provided Meta with significant benefits: it established the company as a de facto standard in the open model space, fostered a massive developer ecosystem, and applied competitive pressure on rivals whose models were entirely closed. The value of a Meta model release was previously twofold: its raw capability and its open accessibility.

The Strategic Implications of a Closed 'Spark'

If the leak is accurate, Spark signifies a strategic pivot. The model's value proposition shifts from being a public good and ecosystem play to a more traditional, competitive product. Its success would be measured purely on its performance against closed models like GPT-4o, Claude 3.5 Sonnet, and Gemini 1.5 Pro, rather than on its ability to empower an open-source community.

This move could indicate several strategic realities for Meta AI:

- Competitive Pressure: Staying at the frontier of AI capability is increasingly expensive and difficult. Meta may believe that to compete at the very top tier, it needs to keep its most advanced models proprietary to protect its R&D investment and create a differentiated product for its own services (Facebook, Instagram, WhatsApp, Ray-Ban Meta smart glasses).

- A Two-Tier Strategy: Meta could be adopting a portfolio approach, continuing to release powerful but not frontier-leading models as open-weight (the Llama series) while keeping its most advanced models (like Spark) closed. This would allow it to maintain its open-source leadership while still competing directly for the high-end market.

- Commercialization: The open-weight strategy, while influential, does not directly generate revenue from the model itself. A closed, top-tier model like Spark could be the foundation for a new, premium API service or advanced features locked within Meta's consumer products.

What We Don't Know

The leak is sparse on critical details:

- Spark's Specifications: Model size, architecture, training compute, and key benchmark results are unknown.

- Official Release Plans: It is unclear whether Spark is intended for a public API, internal use only, or a specific product integration.

- The Future of Open Weights at Meta: It is unclear whether this is a one-off experiment or a permanent new direction for the company's frontier research.

If Spark ships without open weights, the burden of proof shifts entirely to its demonstrated capabilities. The community would not be able to validate its performance independently, audit its behavior, or build upon its foundation. Its "value," as Mollick notes, becomes much harder to assess pre-release and would depend on Meta's own benchmarking and the experience of early API users.

gentic.news Analysis

This potential pivot by Meta must be viewed through the lens of the intensifying AI arms race of 2025-2026. As we covered in our analysis of Google's Gemini 2.0 launch last quarter, the pressure to monetize massive AI R&D investments is leading to increased productization and tighter control over flagship models. Meta's open-weight strategy was always an anomaly among the trillion-dollar tech giants, and its partial retreat from it aligns with a broader industry trend where openness is being strategically scaled back at the frontier.

The move also directly relates to the competitive dynamics with OpenAI, which has never open-sourced a frontier model, and Anthropic, which maintains a closed model strategy. By potentially closing Spark, Meta is signaling its intention to compete head-to-head in the high-stakes, closed-model API market, a space where it has previously ceded ground. However, this comes with significant risk to its developer mindshare. The open-source community that rallied around Llama may feel alienated if Meta's best models become walled gardens.

This development follows Meta's open-weight Llama 3.1 release in July 2024. If Spark is indeed closed, it creates a clear bifurcation in Meta's AI lineup: the open, accessible Llama lineage for the ecosystem, and a closed, potentially more powerful Spark lineage for direct product competition. The long-term success of this strategy hinges on whether Spark can genuinely match or exceed the capabilities of its closed-source rivals. If it cannot, Meta risks losing the open-source leadership that has been its unique strategic advantage and defined its AI identity for the past three years.

Frequently Asked Questions

Is Meta's Spark model officially announced?

No. As of now, information about the "Spark" model comes from what appears to be a leak or early-access material that a third-party researcher commented on. Meta has not made any official announcement regarding a model by this name or its release strategy.

What does 'not open weights' mean?

"Not open weights" means the model's parameters (the learned numerical values that encode the AI's knowledge and capabilities) would not be publicly released for anyone to download, run, modify, or study. Instead, access would likely be restricted to an API (Application Programming Interface), where users can send queries but cannot see or control the underlying model. This is the approach used by OpenAI's GPT-4 and Anthropic's Claude.
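The difference in access can be illustrated with a toy sketch. Nothing here reflects Meta's actual models or any real API: `OpenWeightModel`, `make_closed_endpoint`, and the two-number "weights" are hypothetical names and values invented purely for illustration, and a real closed model is separated from its users by a network boundary rather than a Python closure.

```python
from typing import Callable, List


class OpenWeightModel:
    """Toy stand-in for an open-weight release: parameters are public."""

    def __init__(self, weights: List[float]) -> None:
        # Anyone holding the model can read or edit these values.
        self.weights = list(weights)

    def predict(self, features: List[float]) -> float:
        # A single linear layer keeps the illustration small.
        return sum(w * x for w, x in zip(self.weights, features))


def make_closed_endpoint(weights: List[float]) -> Callable[[List[float]], float]:
    """Toy stand-in for an API-only release: callers get outputs, not parameters."""
    private = list(weights)  # hypothetical hidden parameters

    def query(features: List[float]) -> float:
        return sum(w * x for w, x in zip(private, features))

    return query


open_model = OpenWeightModel([0.5, -1.0])
print(open_model.weights)              # parameters can be inspected
open_model.weights[1] = 1.0            # and modified, as fine-tuning would do
print(open_model.predict([2.0, 1.0]))

spark_like = make_closed_endpoint([0.5, -1.0])
print(spark_like([2.0, 1.0]))          # only the output is observable
```

With the open object, a user can audit and change the parameters directly; with the closed endpoint, the only thing available is the answer to each query, which is why independent validation becomes harder.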

Why was Meta's open-weight strategy so important?

Meta's decision to release models like Llama 2 and Llama 3 with open weights democratized access to powerful AI. It allowed startups, academics, and independent developers to run state-of-the-art models on their own hardware, fine-tune them for specific tasks, audit them for safety and bias, and integrate them into products without relying on a corporate API. This fostered massive innovation, created a standard, and gave Meta enormous influence in the AI ecosystem.

Will Meta still release open-weight models like Llama?

Based on the current leak, it is unclear. The most plausible interpretation is that Meta is adopting a dual-track strategy: continuing the open-weight Llama series for broad ecosystem development, while developing a separate, more advanced (and closed) "Spark" series to compete directly with the leading proprietary models. The future of the Llama line remains a critical question for the open-source community.