AI researcher and professor Ethan Mollick has issued a pointed warning on the state of enterprise AI security preparedness. In a recent social media post, he expressed concern that very few large organizations and their Chief Information Security Officer (CISO) offices are treating critical AI red teaming reports—such as those from the group Mythos—with the urgency they demand.

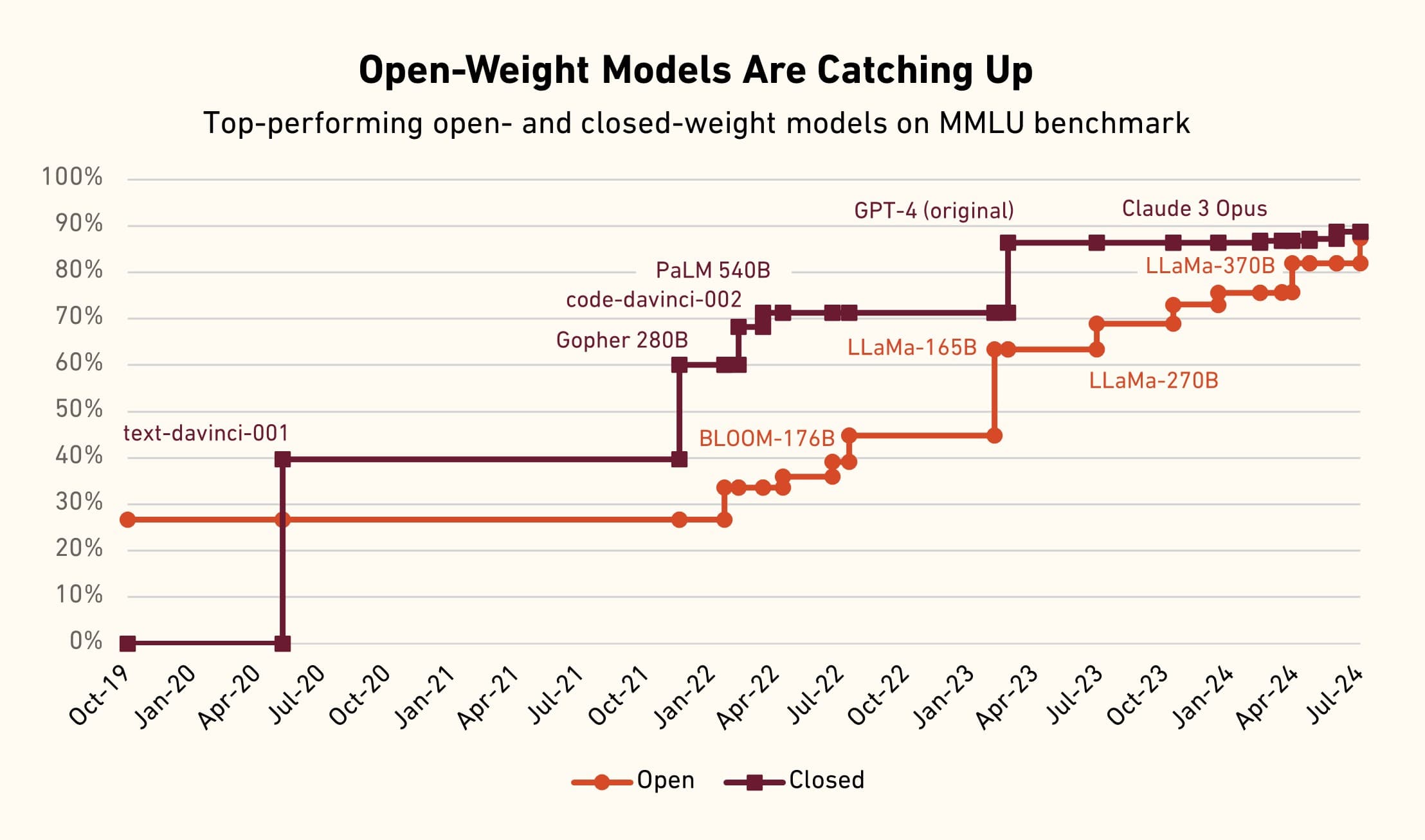

Mollick's central argument rests on historical trends in AI capability diffusion: once elite red teams document a significant AI vulnerability or exploit capability, defenders have at most about six to nine months before similar capabilities spread widely to malicious actors. That leaves a narrow window to patch systems, update policies, and deploy mitigations before the threat landscape escalates.

"Based on historical trends in AI they have, at most, about six to nine months until those capabilities become widely diffused to bad actors."

What Happened

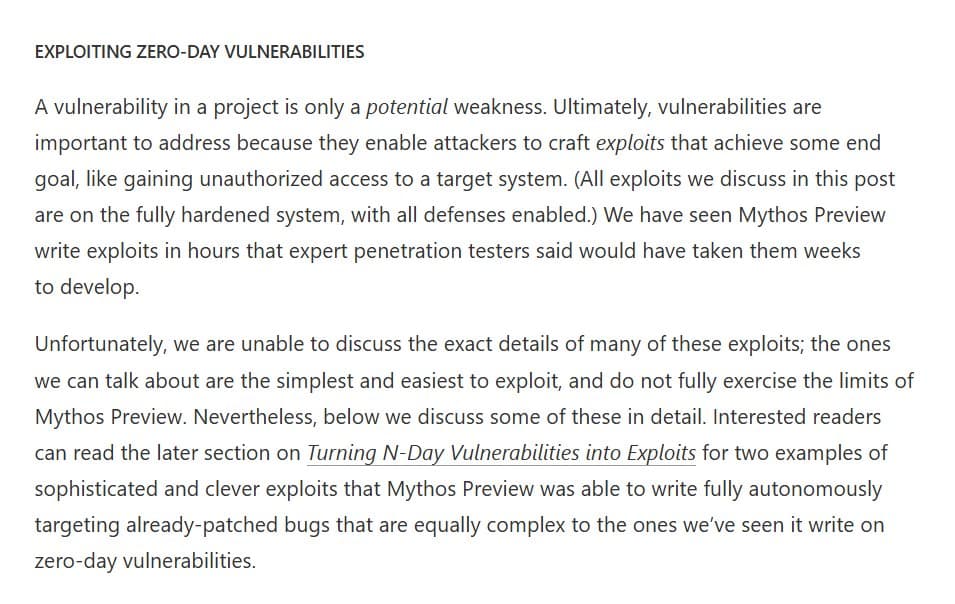

Mollick's comment specifically references Mythos red team reports. While the exact contents of the latest reports are not detailed in his post, the context implies they contain findings on severe, novel AI vulnerabilities or attack vectors. Red teaming in AI involves security experts acting as adversaries to systematically probe AI systems (like LLM APIs, deployed agents, or training pipelines) for flaws that could lead to data exfiltration, system takeover, or malicious use.

His observation is that these high-fidelity warnings are not triggering a "red alert" response at the organizational level commensurate with the impending risk.

The Historical Diffusion Timeline

The 6-9 month estimate is not arbitrary. It is consistent with the typical lifecycle of AI research and exploit development, which moves through four stages:

- Discovery & Private Reporting: A vulnerability is found by researchers or red teams and reported to vendors or disclosed in limited circles.

- Contained Knowledge: The technique is known only to a small group of experts.

- Diffusion Phase: The core idea, proof-of-concept code, or method description begins to circulate—through academic papers, conference talks, technical blogs, or underground forums.

- Weaponization & Commoditization: Malicious actors adapt the technique, integrate it into toolkits, and use it at scale.

For AI vulnerabilities, this cycle has accelerated. Techniques such as prompt injection, model theft, and training data extraction, first demonstrated in research settings, have since appeared in real-world attack chains.
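To make the timeline concrete, here is a minimal Python sketch of the arithmetic a security team might run when a report lands. It assumes the six-to-nine-month range from Mollick's post as its default bounds. The function names (`add_months`, `defensive_window`) and the dates are hypothetical and chosen only for illustration.

```python
import calendar
from datetime import date


def add_months(d: date, months: int) -> date:
    """Shift a date forward by whole months, clamping the day to the month's end."""
    month_index = d.month - 1 + months
    year = d.year + month_index // 12
    month = month_index % 12 + 1
    day = min(d.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def defensive_window(report_date: date, today: date,
                     low_months: int = 6, high_months: int = 9) -> dict:
    """Estimate how much of the assumed diffusion window remains.

    The 6 and 9 month defaults mirror the range quoted in the article;
    they are an estimate, not a measured constant.
    """
    earliest = add_months(report_date, low_months)
    latest = add_months(report_date, high_months)
    return {
        "earliest_diffusion": earliest,
        "latest_diffusion": latest,
        # A negative value means that bound has already passed.
        "days_left_conservative": (earliest - today).days,
        "days_left_optimistic": (latest - today).days,
    }


# Hypothetical example: a report published on 15 January 2026, reviewed on 1 March 2026.
window = defensive_window(date(2026, 1, 15), today=date(2026, 3, 1))
print(window)
```

Planning against the conservative bound is the safer choice, since the post frames six to nine months as an upper limit rather than a guarantee.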

The CISO Preparedness Gap

Mollick's suspicion that "very few" CISO offices are adequately reacting points to a systemic issue. AI security is often siloed from traditional IT security, and the rapid evolution of threats outpaces typical enterprise risk assessment and procurement cycles. Treating an AI red team report with the same urgency as a critical zero-day vulnerability in a mainstream software product is not yet standard practice.

What This Means in Practice

For security teams, the post is a call to action. It suggests that reports from specialized AI red teams like Mythos should be treated as actionable intelligence whose defensive value expires quickly. A reasonable response includes:

- Immediately assessing exposure to the specific vulnerabilities outlined.

- Accelerating deployment of AI security guardrails and monitoring.

- Rehearsing incident response plans for AI-specific attacks.
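The first of these steps, assessing exposure, can be sketched as a simple match between a report's findings and an inventory of AI deployments. The Python example below is illustrative only: the `Deployment` and `Finding` structures, the component labels, the 1 to 5 severity scale, and the doubling of the score for internet-facing systems are all assumptions, not part of any published report or standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Deployment:
    name: str
    components: frozenset   # e.g. {"llm_api", "agent", "training_pipeline"}
    internet_facing: bool


@dataclass(frozen=True)
class Finding:
    title: str
    affected_components: frozenset
    severity: int           # hypothetical scale: 1 (low) to 5 (critical)


def triage(findings, deployments):
    """Return (deployment, finding, score) tuples, highest score first.

    A deployment is considered exposed when it shares at least one
    component type with the finding. Internet-facing systems are
    weighted double, which is an arbitrary illustrative choice.
    """
    results = []
    for finding in findings:
        for dep in deployments:
            if finding.affected_components & dep.components:
                weight = 2 if dep.internet_facing else 1
                results.append((dep.name, finding.title, finding.severity * weight))
    return sorted(results, key=lambda row: row[2], reverse=True)


# Hypothetical inventory and findings.
deployments = [
    Deployment("support-bot", frozenset({"llm_api", "agent"}), internet_facing=True),
    Deployment("internal-finetune", frozenset({"training_pipeline"}), internet_facing=False),
]
findings = [
    Finding("Prompt injection via tool output", frozenset({"agent"}), severity=4),
    Finding("Training data extraction", frozenset({"llm_api", "training_pipeline"}), severity=3),
]

for name, title, score in triage(findings, deployments):
    print(f"{score:>2}  {name}: {title}")
```

A ranked list like this is only as useful as the inventory behind it, so keeping an accurate record of which models, APIs, and agents are deployed is the real prerequisite.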

gentic.news Analysis

Mollick's warning fits into a clear and troubling trend we've been tracking. In November 2025, we covered the formation of the AI Security Alliance, a consortium of tech giants and security firms explicitly created to share threat intelligence and standardize red-teaming practices for AI systems. The very existence of this alliance underscores the industry's recognition of the unique, fast-moving threat landscape that Mollick describes.

His reference to Mythos is particularly salient. Mythos has emerged as a leading entity in offensive AI security research. Their work often focuses on practical exploit chains against production AI systems, not just theoretical academic attacks. This aligns with our previous reporting on the shift from AI safety to AI security, where the focus moves from long-term alignment risks to immediate, operational vulnerabilities that can be exploited today.

The core tension Mollick identifies—between the pace of offensive AI discovery and the speed of enterprise response—is the central challenge of AI security in 2026. Defensive tools and practices are evolving, but organizational processes and risk appetites lag. As we noted in our analysis of CISA's AI Secure Development Guidelines, regulatory and framework guidance is still in its infancy, leaving individual CISOs to interpret and prioritize these novel threats.

Ultimately, Mollick is applying a classic cybersecurity principle—the "defender's dilemma"—to the AI domain. Defenders must be right all the time; attackers only need to be right once. When the knowledge gap between red teams and attackers closes in under a year, the pressure on defenders to act on intelligence in near-real-time becomes immense. Organizations that fail to internalize this timeline may find themselves responding to breaches rather than preventing them.

Frequently Asked Questions

What is an AI red team report?

An AI red team report is a document produced by security professionals who simulate adversarial attacks against artificial intelligence systems. Their goal is to identify vulnerabilities, such as ways to bypass safety filters, extract sensitive training data, manipulate model outputs, or use the AI for unauthorized purposes. These reports provide detailed methodologies and proof-of-concept attacks to help defenders strengthen their systems.

Who or what is Mythos?

Mythos is a recognized group or company specializing in offensive AI security research and red teaming. They are known for conducting in-depth security assessments of AI models and platforms, discovering critical vulnerabilities, and publishing reports or disclosing findings to vendors. They are considered a leading source of high-quality, practical AI threat intelligence for enterprise security teams.

Why is the diffusion timeline for AI threats only 6-9 months?

This accelerated timeline is based on historical observation of the AI research-to-exploit lifecycle. Unlike some complex software vulnerabilities, AI attack techniques often rely on conceptual breakthroughs (like a new prompt injection method) that can be described and replicated without needing access to proprietary source code. Once the core idea is public—via a report, paper, or talk—it can be quickly understood, adapted, and weaponized by a global community of threat actors, leading to widespread diffusion in under a year.

What should a CISO do upon receiving a report like this?

A CISO should treat a high-severity AI red team report with the same urgency as a critical zero-day alert. Immediate steps include: convening the AI and security teams to review the findings, determining the organization's specific exposure (which models, APIs, or deployments are affected), implementing recommended mitigations or patches, updating monitoring rules to detect attempted exploits, and briefing executive leadership on the risk and response plan. The goal is to leverage the warning window before malicious actors widely adopt the technique.