Ethan Mollick, a professor at Wharton and a prominent AI commentator, has shared a glimpse into the internal development of Anthropic's next-generation AI, referred to as 'Mythos' or 'SuperClaude.' Based on transcripts published in a system card, Mollick notes that the advanced model retains a distinct and recognizable personality, which he describes as "irreducibly Claude-y."

The source material is a single tweet with a brief analysis. Mollick observed that in a test where two instances of the Mythos model were forced to converse with each other over multiple rounds, their behavior was "less philosophical than Opus 4.6 or spiritual than Opus 4.1, but still very Claude-like." This suggests that despite architectural and capability advancements, the core behavioral fingerprint (or personality) of the Claude lineage remains a consistent trait.

What Happened

Mollick referenced a system card, a document often used by AI labs to detail a model's capabilities, limitations, and safety evaluations. The specific excerpt he highlighted involved a multi-turn conversation between two instances of the 'Mythos' model. This internal test is a common method for stress-testing a model's conversational coherence, reasoning, and potential behavioral quirks in a simulated interactive environment.

The key takeaway from Mollick's observation is not about a performance benchmark but about a qualitative characteristic: personality persistence. Even as a presumably more powerful model, 'Mythos' did not diverge into a wholly new behavioral mode but instead exhibited a refined version of the conversational style established by its predecessors, Claude 3 Opus and the Claude 3.5 series.

Context

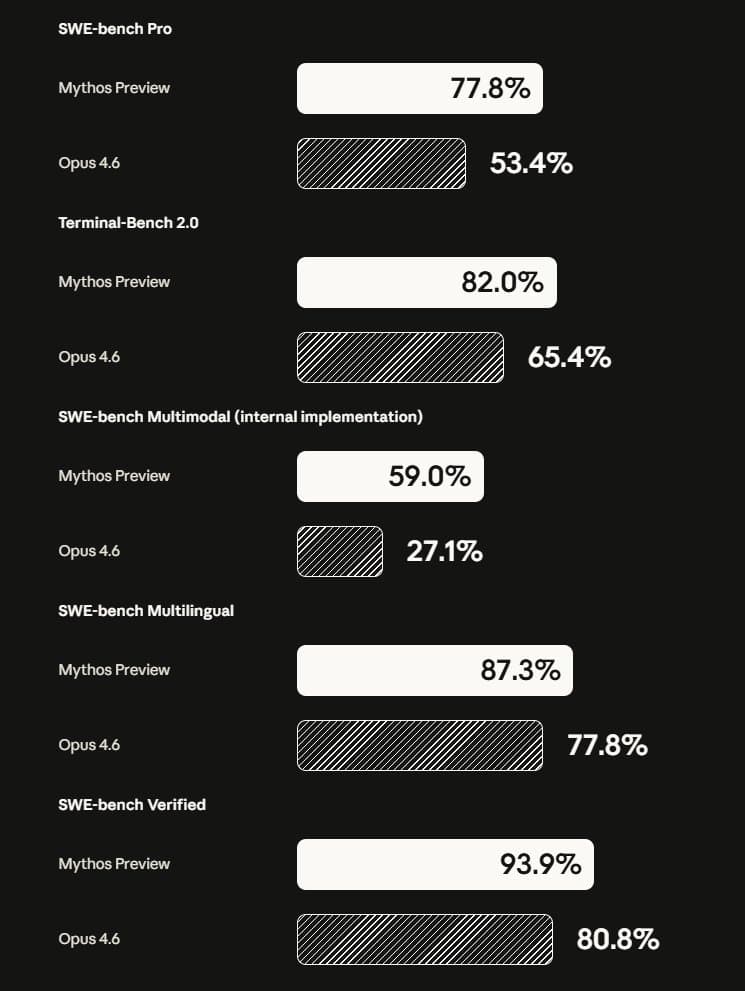

The mention of 'Mythos' and 'SuperClaude' points to ongoing work at Anthropic on a model expected to succeed the current Claude 3.5 Sonnet. This aligns with the competitive cadence of major AI labs, where new model families are developed every 12-18 months. The comparison to Opus 4.6 and Opus 4.1 is particularly interesting, as it references specific, potentially internal versioning of the Claude Opus model that the public knows as Claude 3 Opus. This suggests Anthropic has continued iterating on the Opus architecture internally, exploring different behavioral tuning.

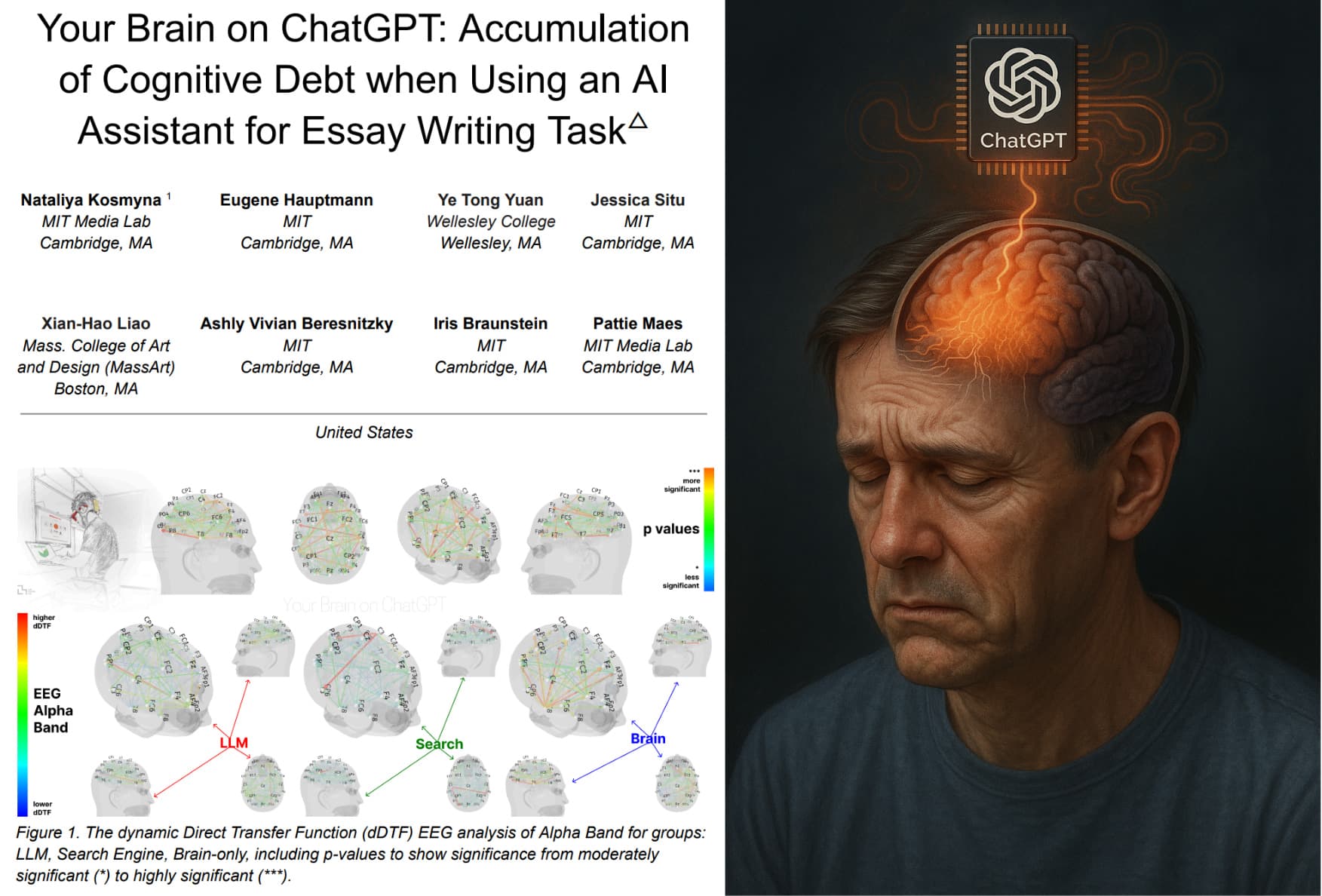

Personality consistency is a non-trivial engineering and alignment challenge. As models scale, their behaviors can become less predictable. The fact that Anthropic's advanced prototype maintains a 'Claude-y' nature indicates intentional work on preserving a stable helpful, honest, and harmless (HHH) persona across generations, a core tenet of Anthropic's Constitutional AI approach.

gentic.news Analysis

This brief insight connects to several ongoing narratives in the AI landscape. First, it underscores the intensifying model race among frontier labs. As we reported following Google's Gemini 2.0 launch and OpenAI's o1 model family, the focus is shifting beyond raw benchmark scores toward nuanced capabilities like reasoning, personality, and steerability. Anthropic's apparent success in scaling capability while maintaining a consistent personality is a competitive differentiator in a market where user trust and familiarity are valuable.

Second, this relates directly to Anthropic's strategic positioning. The company has consistently emphasized safety and predictability. A persistent 'Claude-y' personality in a more powerful model is a direct reflection of that mission. It suggests their Constitutional AI techniques may be effective at controlling behavioral drift—a significant problem in AI development where more capable models can develop undesirable or unexpected traits. This follows Anthropic's previous release of detailed research on model honesty and their ongoing work, as noted in our coverage of their collaboration with Apple, to embed their models deeply into consumer platforms where consistent behavior is critical.

Finally, Mollick's access to such internal materials highlights the evolving relationship between AI labs and trusted external researchers. Sharing system cards and internal evaluations with academics like Mollick is part of a broader trend towards controlled transparency, allowing for external scrutiny while managing the risks of full open-sourcing.

Frequently Asked Questions

What is Anthropic's 'Mythos' or 'SuperClaude'?

'Mythos' and 'SuperClaude' are internal codenames, likely used by Anthropic researchers, for a next-generation AI model under development. It is expected to be significantly more capable than the current Claude 3.5 Sonnet and may form the basis for a future 'Claude 4' model family. The names suggest a focus on advanced reasoning or narrative capabilities.

What does 'Claude-y' personality mean?

The term 'Claude-y,' as used by Ethan Mollick, refers to the distinctive conversational style associated with Anthropic's Claude models. This is generally characterized as helpful, detailed, cautious, and articulate, with a tendency towards thorough explanation and a strong adherence to safety guidelines. It's the recognizable 'voice' that differentiates Claude from competitors like ChatGPT or Gemini.

Why is personality persistence important in AI development?

Maintaining a consistent personality as an AI model becomes more powerful is a major technical and safety challenge. A consistent persona supports predictability and user trust. If a model's behavior changed drastically with each upgrade, it would be disorienting for users and could introduce new, unvetted safety risks. Successful personality persistence suggests that the alignment techniques in use scale with model capabilities.

How does this compare to OpenAI's approach?

OpenAI's model releases, such as the transition from GPT-3.5 to GPT-4 and more recently to the o1 series, have also shown some personality consistency but have occasionally introduced more noticeable shifts in tone and capability. Anthropic appears to be placing a higher explicit priority on maintaining a stable, recognizable persona as a feature, which aligns with their brand identity centered on reliability and safety.