A significant software supply chain attack affecting the popular JavaScript HTTP client library axios began not with a technical exploit but with a highly sophisticated social engineering campaign directed at one of its developers. The incident, which led to malicious versions of the package being published to the npm registry, is a stark warning to open source maintainers about an evolving threat landscape in which human factors are increasingly the primary target.

What Happened

According to a warning shared by developer Simon Willison, the compromise of the axios package on npm started when an attacker successfully used social engineering against a member of the axios development team. The attacker gained sufficient access to publish trojanized versions of the library (axios@1.7.7 and axios@1.7.8), which contained obfuscated malware designed to steal environment variables and sensitive data from affected systems.

The malicious packages were live for a brief period before being identified and removed. Based on what has been disclosed, the attack did not rely on a direct breach of the axios GitHub repository or on stolen npm credentials alone. Instead, the initial vector was a personalized, convincing interaction that tricked the developer into granting access.

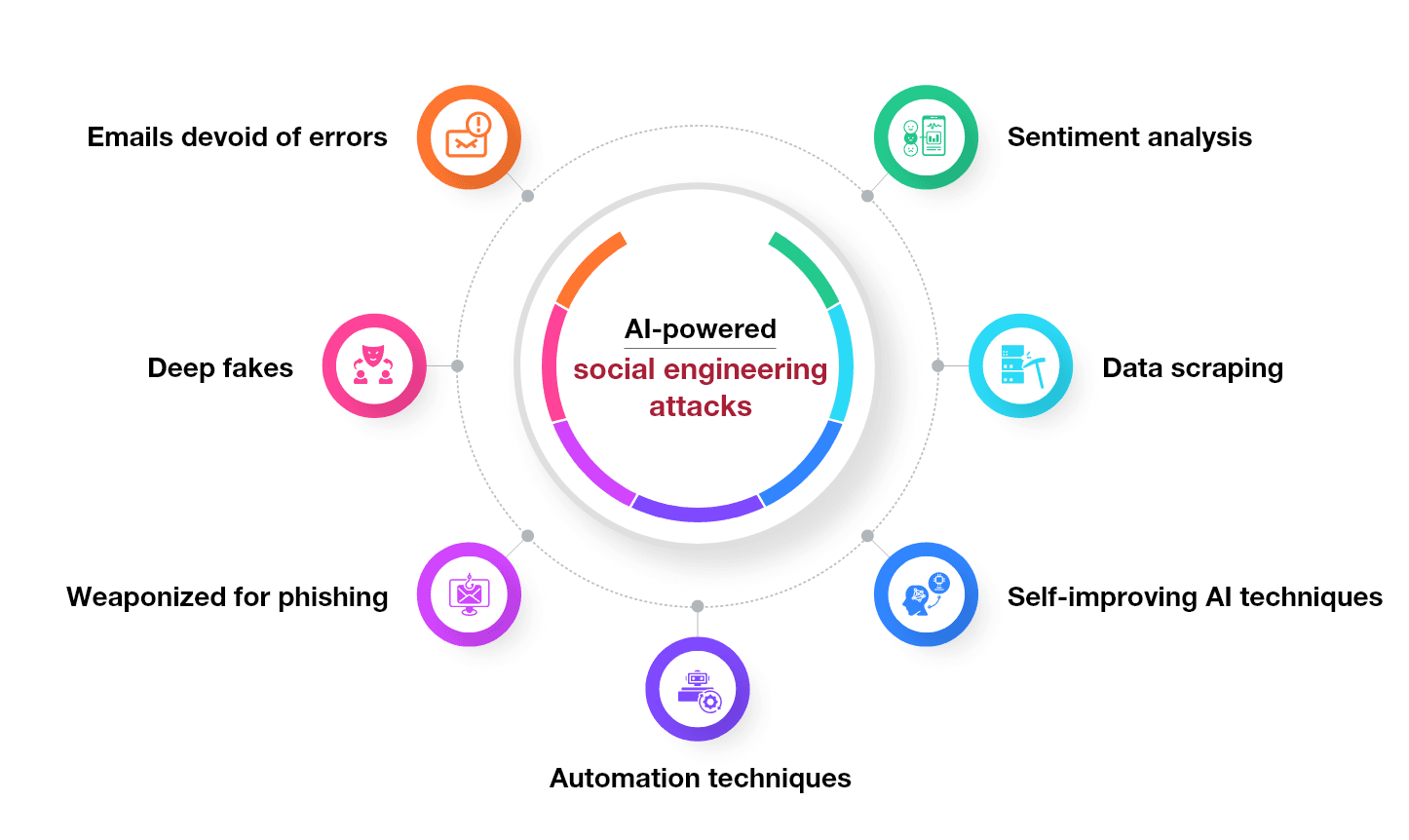

The Rising Threat of AI-Augmented Social Engineering

While the specific details of the social engineering tactic are not public, security experts note that such attacks are becoming increasingly sophisticated. Generic phishing emails are giving way to highly targeted campaigns known as spear-phishing (or "whaling" when the target is a particularly high-value individual). Generative AI tools can dramatically enhance these attacks by:

- Crafting Flawless Communication: Generating perfectly grammatical, context-aware messages in the style of a colleague or community member.

- Creating Fake Context: Fabricating convincing fake issues, pull requests, or support tickets that reference real project details.

- Impersonation: Mimicking the writing style and knowledge of trusted individuals within a project's ecosystem based on their public communications.

For an open source maintainer, a message that accurately references a recent commit, a specific project pain point, or a known community discussion is far more likely to bypass skepticism.

Implications for Open Source Security

This attack pattern represents a fundamental shift. The security focus for many projects has been on technical measures: strong passwords, 2FA, secure CI/CD pipelines, and code signing. The axios incident underscores that the human maintainer is now the critical attack surface.

- Trust is the Vulnerability: The open source model relies on collaboration and trust. Attackers are exploiting this very principle.

- Beyond Technical Hardening: While 2FA on npm and GitHub is essential, it can be circumvented if a developer is tricked into approving a session or access request.

- Supply Chain Amplification: Compromising a widely used library like axios (which sees millions of weekly downloads) creates a massive blast radius, potentially affecting countless downstream applications and services.

Recommended Defensive Posture for Maintainers

- Zero-Trust for Access Requests: Institute a formal, out-of-band verification process for any request related to account access, repository permissions, or publishing rights. A quick confirmation via a separate, pre-established channel (such as a Signal or Telegram group for core maintainers) can stop many of these attacks.

- Mandatory Security Awareness: Maintainer teams should discuss these specific, sophisticated social engineering threats. Training should include examples of AI-aided impersonation.

- Require Multi-Party Approval for Publishes: For critical packages, consider requiring a second maintainer's approval (via a pull request review or a registry-specific feature) before a new version can be published to the public registry.

- Monitor for Anomalous Activity: Use tools that alert on package publishes from new IP addresses, unusual times, or changes in publishing patterns.
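As a rough illustration of the monitoring idea, the sketch below flags a publish whose publisher has never published before, whose IP address has not been seen for that publisher, or that lands in a team-defined quiet window. It assumes you already collect publish events somewhere, for example from your own CI or audit logs. The PublishEvent type, its fields, and the quiet-hours default are hypothetical. Depending on your setup, the registry may not expose publisher IP addresses to you at all.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class PublishEvent:
    """One publish of one version. Hypothetical record, not an npm API type."""
    package: str
    version: str
    publisher: str
    ip: str
    published_at: datetime  # must be timezone-aware


def find_anomalies(history, event, quiet_hours_utc=range(0, 6)):
    """Return reasons why `event` deviates from past, trusted publishes.

    history: earlier PublishEvent records considered legitimate.
    quiet_hours_utc: hours (UTC) in which this team normally never
    publishes; an assumed policy knob to tune against your own data.
    """
    reasons = []
    known_publishers = {e.publisher for e in history}
    known_ips = {e.ip for e in history if e.publisher == event.publisher}

    # An unknown publisher is the strongest signal; only check IPs otherwise.
    if event.publisher not in known_publishers:
        reasons.append(f"first publish by {event.publisher}")
    elif event.ip not in known_ips:
        reasons.append(f"new IP {event.ip} for {event.publisher}")

    # Compare in UTC so the policy does not depend on the server's timezone.
    if event.published_at.astimezone(timezone.utc).hour in quiet_hours_utc:
        reasons.append("published during quiet hours (UTC)")

    return reasons
```

A non-empty result would be a reason to page a second maintainer, not proof of compromise. Real alerting would also weigh other signals, such as a publish that did not come from the project's usual CI pipeline.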

gentic.news Analysis

This axios incident is not an isolated event but part of a dangerous and accelerating trend in the software supply chain. It follows earlier attacks such as the 2021 compromise of the ua-parser-js library, in which an attacker hijacked the maintainer's npm account and published malicious versions that installed a cryptominer and a password-stealing trojan. However, the explicit shift to social engineering as the primary vector marks a tactical evolution. Attackers have realized that breaching the human layer is often cheaper and more effective than finding a zero-day in tooling.

This trend dovetails with the proliferation of accessible generative AI. Tools that can scrape a developer's public comments on GitHub and Stack Overflow to create a convincing persona are a force multiplier for attackers. We are moving from an era of automated credential stuffing to one of personalized, AI-generated persuasion campaigns. For the open source ecosystem, which runs on voluntary labor and informal trust, this is an existential challenge. It places a new burden on maintainers who are already often overworked and under-resourced.

Furthermore, this attack highlights the critical importance of software bill of materials (SBOM) and real-time vulnerability scanning. While those tools can't prevent the initial compromise, they are essential for rapid detection and response once a malicious package is released. The speed at which the axios packages were identified and flagged by the community and security firms likely limited the damage, demonstrating that defensive monitoring at the consumer level is just as vital as hardening at the source.
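On the consumer side, this kind of detection can start with a check of an SBOM against known-bad versions. The minimal sketch below assumes a CycloneDX-format JSON SBOM: a top-level components array whose entries carry name and version, and optionally group and nested components. It also uses a hand-maintained denylist seeded with the two versions reported in this article. In real use the denylist would come from an advisory feed, and a dedicated scanner would do this job more thoroughly.

```python
import json

# Seeded with the versions this article reports as compromised (assumption:
# replace with data from an advisory feed in real use).
KNOWN_BAD = {("axios", "1.7.7"), ("axios", "1.7.8")}


def iter_components(components):
    """Yield every component, descending into nested CycloneDX components."""
    for comp in components:
        yield comp
        yield from iter_components(comp.get("components", []))


def flag_compromised(sbom_text, known_bad=KNOWN_BAD):
    """Return sorted "name@version" strings for denylisted components."""
    sbom = json.loads(sbom_text)
    hits = set()
    for comp in iter_components(sbom.get("components", [])):
        name, version = comp.get("name"), comp.get("version")
        # Scoped npm packages typically carry the scope (e.g. "@babel") in "group".
        if comp.get("group"):
            name = f"{comp['group']}/{name}"
        if (name, version) in known_bad:
            hits.add(f"{name}@{version}")
    return sorted(hits)
```

Because SBOMs can be generated at build time and stored, the same check can be rerun against old builds when a new advisory lands. That is where the rapid-response value comes from.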

Frequently Asked Questions

What was the Axios supply chain attack?

The Axios supply chain attack occurred when a malicious actor used sophisticated social engineering to trick an Axios developer into granting them access. The attacker then published compromised versions (axios@1.7.7, axios@1.7.8) of the popular HTTP client library to the npm registry. These versions contained obfuscated code designed to steal environment variables and sensitive data from any application that installed them.

How can open source maintainers protect against such social engineering attacks?

Maintainers should adopt a zero-trust approach to access requests, verifying them through a separate, pre-established communication channel. Enforcing multi-factor authentication (MFA) everywhere is table stakes. For high-impact packages, implementing a multi-approver system for publishing new versions can add a critical safety check. Regular security awareness discussions focusing on these advanced, personalized phishing tactics are also essential.
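The multi-approver idea can be sketched as a simple policy check. A release goes out only when enough maintainers other than the requester have approved it, so a single tricked person or hijacked account cannot publish alone. ReleaseRequest and can_publish are hypothetical names for illustration, not npm or GitHub APIs. In practice a similar rule is often enforced through CI features such as required reviewers on a GitHub Actions deployment environment.

```python
from dataclasses import dataclass, field


@dataclass
class ReleaseRequest:
    """Hypothetical release request; not a feature of npm or GitHub."""
    package: str
    version: str
    requested_by: str
    approvals: set[str] = field(default_factory=set)


def can_publish(request, maintainers, required_approvals=1):
    """Allow publishing only if enough *other* maintainers approved.

    Approvals from non-maintainers are ignored, and the requester's own
    approval never counts, so one compromised account cannot ship alone.
    """
    if request.requested_by not in maintainers:
        return False
    independent = (request.approvals & set(maintainers)) - {request.requested_by}
    return len(independent) >= required_approvals
```

The key design choice is excluding self-approval. Without it, an attacker who controls one maintainer account could satisfy the rule by approving their own release.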

Is using axios safe now?

Yes, the malicious versions (1.7.7 and 1.7.8) have been removed from the npm registry and flagged as compromised. The axios maintainers and npm security teams acted swiftly. You should ensure your projects are using a clean, official version (e.g., 1.7.5 or earlier, or a patched later version once released). Always verify your installed version and run npm audit or use a dependency scanning tool to check for known vulnerabilities.
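Running npm ls axios shows which axios versions are installed and where they sit in the dependency tree. For a scriptable check, for example as a CI step, the minimal sketch below scans a package-lock.json for the versions this article reports as compromised. It assumes npm's lockfile layout: a flat packages map keyed by install path in lockfileVersion 2 and 3, and a nested dependencies tree in lockfileVersion 1. The COMPROMISED table is hand-written and should be replaced with an authoritative advisory source.

```python
import json

# Versions reported as compromised in this article (assumption: keep this in
# sync with an authoritative advisory source).
COMPROMISED = {"axios": {"1.7.7", "1.7.8"}}


def _walk_v1(deps, prefix=""):
    """Walk the nested "dependencies" tree used by lockfileVersion 1."""
    for name, meta in deps.items():
        path = f"{prefix}node_modules/{name}"
        if "version" in meta:
            yield name, meta["version"], path
        yield from _walk_v1(meta.get("dependencies", {}), path + "/")


def installed_versions(lock):
    """Yield (name, version, install_path) for every locked package."""
    if "packages" in lock:
        # lockfileVersion 2/3: keys are install paths; "" is the root project,
        # and paths without node_modules/ are local workspaces.
        for path, meta in lock["packages"].items():
            if path and "version" in meta and "node_modules/" in path:
                name = path.rsplit("node_modules/", 1)[-1]  # keeps "@scope/pkg"
                yield name, meta["version"], path
    else:
        yield from _walk_v1(lock.get("dependencies", {}))


def find_compromised(lock_text, compromised=COMPROMISED):
    """Return (name, version, path) tuples for every compromised install."""
    lock = json.loads(lock_text)
    return [
        (name, version, path)
        for name, version, path in installed_versions(lock)
        if version in compromised.get(name, ())
    ]
```

A CI job could read the lockfile, call find_compromised on its contents, and fail the build whenever the returned list is non-empty.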

Could AI have been used in this attack?

While not confirmed in this specific case, the description of "very sophisticated social engineering" is consistent with capabilities that generative AI enhances. AI can be used to create highly personalized, context-aware messages, impersonate trusted individuals, and generate fake supporting materials, making such attacks more scalable and convincing. The open source community should assume that adversaries are already leveraging these tools.