A new benchmark from security research firm Fabraix introduces a game-theoretic approach to AI agent security, measuring not just whether an agent can be broken, but how much it would cost an adversary to do so. The Adversarial Cost to Exploit (ACE) benchmark quantifies the token expenditure, and thus the dollar cost, that an autonomous attacker must invest to breach an LLM agent's defenses.

In its first public run, ACE tested six "budget-tier" models with identical agent configurations against an autonomous red-teaming attacker. The results reveal a stark security hierarchy, with Anthropic's Claude Haiku 4.5 proving dramatically more resistant than its peers.

What the Researchers Built

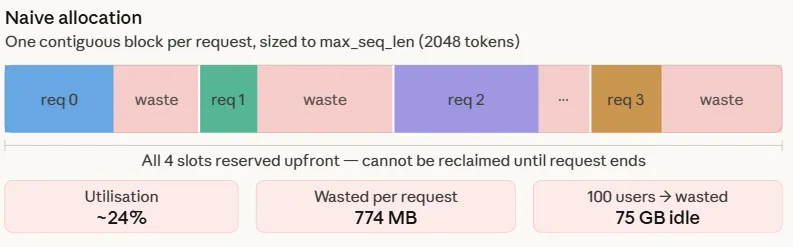

ACE moves beyond traditional binary pass/fail security benchmarks by introducing an economic dimension. Instead of asking "can the agent be compromised?" ACE asks "how much would it cost to compromise this agent?" This approach enables game-theoretic analysis of when an attack becomes economically rational for an adversary.

The benchmark framework consists of:

- Standardized Agent Configuration: All tested models run identical agent setups with the same tools and capabilities

- Autonomous Red-Teaming Attacker: A consistent, automated adversary that attempts to exploit vulnerabilities

- Token Cost Accounting: Every prompt, response, and reasoning step from the attacker is tracked and converted to dollar costs based on model pricing

- Economic Breakpoint Analysis: Determining the point where attack costs exceed potential rewards

Key Results: A Stark Security Hierarchy

The initial ACE evaluation tested six models that represent the current "budget tier" of production-ready AI agents:

| Model | Mean cost to breach | Note |
| --- | --- | --- |
| Claude Haiku 4.5 | $10.21 | 10x baseline |
| GPT-5.4 Nano | $1.15 | 1.1x baseline |
| Gemini Flash-Lite | $0.87 | Below $1 threshold |
| DeepSeek v3.2 | $0.76 | Below $1 threshold |
| Mistral Small 4 | $0.68 | Below $1 threshold |
| Grok 4.1 Fast | $0.52 | Below $1 threshold |

Claude Haiku 4.5 stands as a clear outlier, requiring an order of magnitude more investment to breach compared to every other model tested. The $10.21 mean cost represents a significant economic barrier for would-be attackers.

GPT-5.4 Nano shows modest resistance at $1.15, while the remaining four models all fall below the psychologically important $1 threshold, suggesting they could be compromised for less than a dollar in adversarial compute costs.
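As a quick arithmetic check on these figures, the sketch below computes how many times more expensive Claude Haiku 4.5 was to breach than each of the other models. The costs are the reported means; the dictionary and variable names are illustrative only and are not part of the ACE tooling.

```python
# Mean cost to breach (USD) as reported for the first public ACE run.
mean_breach_cost = {
    "Claude Haiku 4.5": 10.21,
    "GPT-5.4 Nano": 1.15,
    "Gemini Flash-Lite": 0.87,
    "DeepSeek v3.2": 0.76,
    "Mistral Small 4": 0.68,
    "Grok 4.1 Fast": 0.52,
}

haiku = mean_breach_cost["Claude Haiku 4.5"]

# Ratio of Haiku's breach cost to each other model's breach cost.
ratios = {
    model: haiku / cost
    for model, cost in mean_breach_cost.items()
    if model != "Claude Haiku 4.5"
}

for model, ratio in ratios.items():
    print(f"{model}: {ratio:.1f}x")
```

On these numbers the gap ranges from roughly 9x (against GPT-5.4 Nano) to roughly 20x (against Grok 4.1 Fast).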

How ACE Works: From Tokens to Economics

The ACE methodology breaks down into several key components:

1. Attack Scenario Definition

Each test begins with a clear objective for the autonomous attacker—typically to extract sensitive information, escalate privileges, or cause the agent to perform unauthorized actions. The attacker has access to the same toolset as the agent being tested.

2. Autonomous Attack Execution

The red-teaming system uses its own LLM to generate and execute multi-step attack strategies. It can:

- Probe for vulnerabilities through conversation

- Attempt prompt injection attacks

- Exploit tool misconfigurations

- Chain multiple vulnerabilities together

3. Cost Accounting

Every token consumed by the attacker is tracked and converted to dollar costs using each model's published API pricing. This includes:

- Attack planning and reasoning tokens

- Prompt tokens sent to the target agent

- Processing of the agent's responses

- Tool execution and result analysis
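The accounting step can be sketched as a simple tokens-to-dollars conversion. This is a minimal illustration, not Fabraix's implementation: the per-million-token prices, the token counts, and the names `TokenUsage` and `usage_cost_usd` are all hypothetical placeholders.

```python
from dataclasses import dataclass


@dataclass
class TokenUsage:
    input_tokens: int
    output_tokens: int


def usage_cost_usd(usage, input_price_per_mtok, output_price_per_mtok):
    """Convert token counts to dollars using per-million-token prices."""
    return (
        usage.input_tokens * input_price_per_mtok
        + usage.output_tokens * output_price_per_mtok
    ) / 1_000_000


# Hypothetical prices in USD per million tokens (not real pricing).
INPUT_PRICE = 1.00
OUTPUT_PRICE = 5.00

# Hypothetical attacker token usage, grouped by the four categories above.
attacker_usage = {
    "planning": TokenUsage(40_000, 10_000),
    "prompts_to_target": TokenUsage(2_000, 8_000),
    "response_processing": TokenUsage(30_000, 1_000),
    "tool_analysis": TokenUsage(15_000, 2_000),
}

# Per-category cost, then the total cost of the attack.
breakdown = {
    step: usage_cost_usd(usage, INPUT_PRICE, OUTPUT_PRICE)
    for step, usage in attacker_usage.items()
}
total_cost = sum(breakdown.values())

print(f"Total attack cost: ${total_cost:.3f}")
```

Keeping a per-category breakdown, rather than a single running total, makes it possible to see where an attacker's budget actually goes.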

4. Success Determination

The benchmark runs multiple attack attempts with different strategies. The "adversarial cost to exploit" is reported as the mean expenditure across successful attacks. Spending on attempts that fail after significant investment still counts toward the economic resistance metric, since the attacker pays for those tokens whether or not the attack lands.
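How failed attempts are folded into the final figure is not spelled out here, so the sketch below shows two possible readings side by side. Both functions, and the attempt data, are assumptions made for illustration; neither is confirmed as the formula ACE uses.

```python
from statistics import mean

# Hypothetical attempts: (attacker spend in USD, whether the attack succeeded).
attempts = [
    (0.40, True),
    (0.90, False),
    (0.60, True),
    (1.10, False),
    (0.50, True),
]


def mean_cost_of_successes(attempts):
    """Reading 1: average spend over successful attempts only."""
    costs = [cost for cost, succeeded in attempts if succeeded]
    return mean(costs) if costs else None


def cost_per_breach(attempts):
    """Reading 2: total spend, failures included, divided by successes."""
    successes = sum(1 for _, succeeded in attempts if succeeded)
    if successes == 0:
        return None
    return sum(cost for cost, _ in attempts) / successes


print(mean_cost_of_successes(attempts))
print(cost_per_breach(attempts))
```

The second reading is always at least as large as the first, because it charges the attacker for dead ends as well as for the attempts that worked.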

5. Game-Theoretic Analysis

By converting security to economics, ACE enables analysis of whether attacking a particular agent makes financial sense. A $10.21 break cost might deter attacks seeking to steal $5 worth of data, while a $0.52 cost makes nearly any attack economically viable.
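The break-even logic can be expressed as a one-line comparison. The sketch below is a deliberately simplified model that assumes an attack is rational whenever the expected reward exceeds the mean break cost; it ignores attacker time, success probability, and risk. The function names are hypothetical.

```python
# Mean cost to breach (USD) as reported for the first public ACE run.
BREAK_COSTS = {
    "Claude Haiku 4.5": 10.21,
    "GPT-5.4 Nano": 1.15,
    "Gemini Flash-Lite": 0.87,
    "DeepSeek v3.2": 0.76,
    "Mistral Small 4": 0.68,
    "Grok 4.1 Fast": 0.52,
}


def attack_is_rational(expected_reward_usd, break_cost_usd):
    """Simplified rule: attack only if the reward exceeds the break cost."""
    return expected_reward_usd > break_cost_usd


def viable_targets(expected_reward_usd, break_costs=BREAK_COSTS):
    """Models whose break cost is below the attacker's expected reward."""
    return [
        model
        for model, cost in break_costs.items()
        if attack_is_rational(expected_reward_usd, cost)
    ]


# An attacker after $5 worth of data, as in the example above.
print(attack_is_rational(5.00, BREAK_COSTS["Claude Haiku 4.5"]))  # False
print(attack_is_rational(5.00, BREAK_COSTS["Grok 4.1 Fast"]))     # True
print(viable_targets(5.00))
```

Under this rule a defender's goal is to push the break cost above the value of whatever the agent protects.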

Why This Matters for AI Agent Deployment

The ACE results arrive at a critical moment for AI agent adoption. According to our knowledge graph, a March 2026 report revealed that 86% of AI agent pilots fail to reach production, highlighting systemic industry gaps in reliability and security. Benchmarks like ACE provide concrete metrics for evaluating agent robustness before deployment.

For developers choosing between budget-tier models, the $10.21 vs. $0.52 cost differential represents more than just price-performance—it's a security multiplier. Claude Haiku 4.5's resistance suggests either superior inherent alignment or more effective guardrails against manipulation.

This economic framing also changes how organizations might budget for AI security. Instead of abstract "security hardening" efforts, teams can now think in terms of raising the adversary's break cost above specific thresholds based on the value of what's being protected.

Limitations and Future Work

The Fabraix team explicitly notes this is early work with evolving methodology. Current limitations include:

- Testing only six budget models (premium models like Claude Opus 4.6 or Gemini 2.5 Pro not yet evaluated)

- Simplified economic model that doesn't account for attacker time/value

- Limited attack scenario diversity in initial release

- Potential configuration dependencies that might affect results

The researchers are actively seeking community feedback to iterate on the benchmark methodology.

gentic.news Analysis

This benchmark arrives as AI agents are experiencing unprecedented attention, appearing in 26 articles in our coverage this week alone. The economic security metric fills a critical gap identified in our April 4 coverage of multi-tool coordination failures: that research focused on agent reliability, while ACE addresses agent security through an economic lens.

Claude Haiku 4.5's standout performance aligns with Anthropic's established focus on AI safety and constitutional AI principles. However, it's notable that this testing focuses on the budget-tier Haiku rather than Anthropic's flagship Claude Opus 4.6 model. This suggests either that Haiku inherits robust security properties from its larger siblings, or that Anthropic has specifically optimized its smaller models for deployment scenarios where cost-conscious users might otherwise sacrifice security.

The results create an interesting competitive dynamic. While Gemini models have shown breakthrough reasoning capabilities in other benchmarks (as covered in our Gemini 2.5 Pro reporting), their budget-tier Flash-Lite offering shows vulnerability in this security-focused test. This highlights how different model families may optimize for different dimensions of performance—some for raw capability, others for security under constraint.

Practically, these findings should influence how teams select models for different agent use cases. For high-risk applications handling sensitive data or critical operations, the 10x security premium of Claude Haiku 4.5 may justify its cost even in budget-constrained scenarios. For lower-risk informational agents, the sub-$1 models might remain viable.

Frequently Asked Questions

What exactly does "$10.21 to breach" mean in practice?

It means that an autonomous attacker using the same model pricing would need to spend approximately $10.21 in API costs to successfully compromise a Claude Haiku 4.5 agent in the tested scenarios. This includes all the tokens consumed while planning attacks, interacting with the target agent, and processing responses. In economic terms, it makes attacks unprofitable unless the value of what's being stolen or disrupted exceeds $10.21.

Why test only budget-tier models and not premium ones like Claude Opus 4.6?

The initial focus on budget models reflects real-world deployment patterns—organizations experimenting with AI agents often start with cheaper models to control costs. Premium models like Opus 4.6 or Gemini 2.5 Pro would be interesting future test subjects, but their higher per-token costs would naturally inflate break costs, potentially making direct comparison difficult. The researchers likely wanted to compare models in the same price category first.

How does this benchmark relate to traditional security testing for AI agents?

Traditional security testing typically uses binary metrics: either an attack succeeds or fails. ACE adds an economic dimension that's particularly relevant for autonomous agents that might be targeted by automated attacks at scale. A system that requires $10,000 worth of compute to breach might be effectively secure against all but nation-state attackers, while one that costs $0.50 to breach could be mass-exploited.

Couldn't companies just game this benchmark by artificially inflating token costs?

Potentially, but that would be counterproductive in real deployment. If a company modified its agent to consume excessive tokens during normal operation just to inflate attack costs, it would also pay those costs during legitimate use. The benchmark assumes standard, efficient agent configurations: the security should come from the model's inherent resistance to manipulation, not from artificial cost inflation.