A community developer has ported Google's recently released Gemma 4 language model to the MLX-Swift framework, enabling local execution on Apple Silicon devices. The port, created by developer @adrgrondin, is now available for testing through the LocallyAI application, bringing a 27-billion-parameter open-weight model directly to Macs and iOS devices.

What Happened

Developer Adrian Grondin (@adrgrondin) has adapted the Gemma 4 model weights to run within the MLX-Swift ecosystem. MLX-Swift is the Swift API for MLX, Apple's open-source machine learning framework for Apple Silicon, which lets models run efficiently on the unified memory architecture of M-series chips. The port makes the model immediately accessible through the LocallyAI app, a platform focused on running large language models locally on consumer hardware.

This represents one of the first community-driven ports of Gemma 4 to a non-Google inference stack, significantly expanding the model's accessibility beyond its official Hugging Face and Google Cloud implementations.

Technical Context

Gemma 4 is Google's latest open-weight language model family, released in late 2025. The 27B parameter variant represents a significant architectural advancement over previous Gemma models, featuring improved reasoning capabilities and multilingual support. Unlike closed models, its open-weight nature allows for community modifications and ports to various inference frameworks.

MLX-Swift is Apple's answer to efficient on-device AI. It executes models on the Apple Silicon GPU through Metal and takes advantage of unified memory, which the CPU and GPU share, to run models that would traditionally require discrete GPUs. The framework has gained traction among developers looking to deploy LLMs on Apple hardware without cloud dependencies.

The LocallyAI app serves as a user-friendly interface for these local models, abstracting away command-line complexity and providing a chat-based interface similar to cloud-based AI assistants.

What This Means in Practice

For developers and researchers with Apple hardware, this port provides:

- Local inference without API costs or network latency

- Privacy-preserving AI with all processing occurring on-device

- Experimental access to Gemma 4's capabilities without Google Cloud dependencies

- Benchmarking opportunities comparing Gemma 4's performance across different hardware and frameworks
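
For the benchmarking point above, a small framework-neutral harness is enough to get comparable throughput numbers across runtimes. The sketch below is illustrative only: `tokens_per_second` and `fake_generate` are hypothetical names, and `fake_generate` stands in for whatever streaming call a given runtime exposes. Nothing here is the LocallyAI or MLX-Swift API.

```python
import time
from typing import Callable, Iterable


def tokens_per_second(generate: Callable[[str], Iterable[str]], prompt: str) -> float:
    """Time one streaming generation call and return tokens per second.

    The timer covers the whole call, so prompt processing (time to first
    token) is included in the result.
    """
    start = time.perf_counter()
    count = sum(1 for _ in generate(prompt))  # consume the stream, counting tokens
    elapsed = time.perf_counter() - start
    return count / elapsed if elapsed > 0 else 0.0


def fake_generate(prompt: str) -> Iterable[str]:
    # Hypothetical stand-in for a real model: echoes the prompt word by word
    # with a small delay. Replace with the runtime's own streaming call.
    for word in prompt.split():
        time.sleep(0.001)
        yield word


if __name__ == "__main__":
    rate = tokens_per_second(fake_generate, "the quick brown fox jumps")
    print(f"{rate:.1f} tokens/s")
```

For a fair comparison, the same prompt, output length, and quantization level should be used on every framework, and each measurement repeated a few times after a warm-up run.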

Early users report the model runs efficiently on M3 Max and M4 Pro chips with 32GB+ of unified memory, though performance on base M-series chips with less memory may be limited.

gentic.news Analysis

This development continues two significant trends we've been tracking: the democratization of frontier models through community ports, and Apple's growing ecosystem for on-device AI. As we covered in our February 2026 analysis, "MLX 2.0 Expands Apple's On-Device AI Ambitions," Apple has been aggressively positioning MLX as the framework of choice for local AI development on its hardware. This Gemma 4 port represents exactly the type of community adoption Apple needs to build a viable alternative to CUDA-based ecosystems.

The timing is particularly notable given Google's recent emphasis on Gemma 4 as their flagship open model. While Google provides official support through Hugging Face and their cloud platform, community ports like this one extend the model's reach into ecosystems where Google has less presence. This creates an interesting dynamic: Google benefits from wider adoption of their architecture, while Apple gains access to state-of-the-art models without developing them in-house.

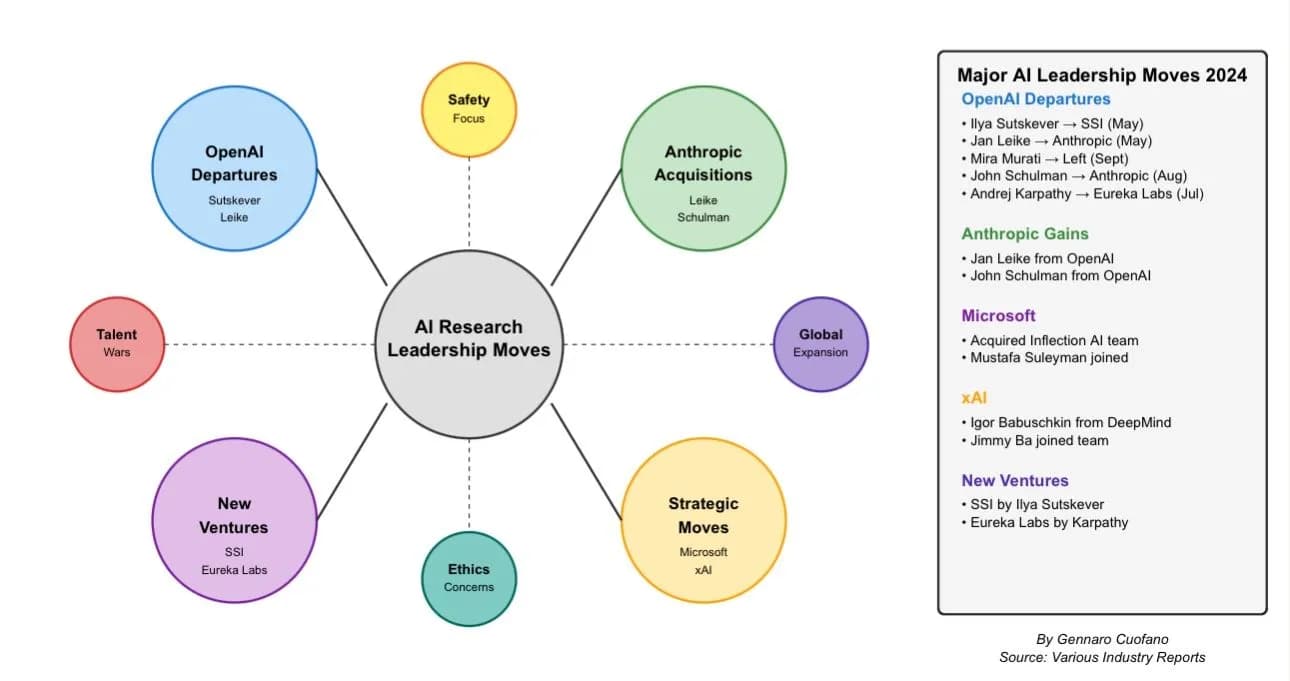

Looking at the competitive landscape, this follows similar community efforts with other models. Meta's Llama 3.2 saw extensive MLX ports within weeks of release, and Mistral's models have strong MLX support. The rapid porting of Gemma 4, likely within days of its official release, suggests the MLX developer community is maturing and can quickly adapt new architectures.

For practitioners, the key takeaway is the acceleration of model accessibility. Where previously trying a new model like Gemma 4 required cloud credits or specialized Linux setups, developers can now experiment locally on their MacBooks. This lowers the barrier to entry for model evaluation and application development, though with the tradeoff of hardware limitations compared to cloud GPU instances.

Frequently Asked Questions

What is Gemma 4?

Gemma 4 is Google's latest family of open-weight language models, released in late 2025. The 27B parameter version offers strong reasoning capabilities and multilingual support while being small enough to run on consumer hardware with sufficient memory.

How do I run Gemma 4 on my Mac?

You can download the LocallyAI app from the developer's repository and load the MLX-Swift port of Gemma 4. The model requires Apple Silicon (M1 or later) with at least 16GB of unified memory for basic functionality, though 32GB+ is recommended for optimal performance.
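
The memory figures above are easier to judge with some back-of-the-envelope arithmetic. The sketch below estimates the space taken by the weights alone for a 27B-parameter model at several precisions. It is an assumption-laden estimate: the article does not say which quantization the port uses, `weight_memory_gib` is a hypothetical helper, and the KV cache, activations, and the operating system all need additional memory on top.

```python
def weight_memory_gib(params_billion: float, bits_per_weight: float) -> float:
    """Approximate memory for model weights alone, in GiB.

    Ignores the KV cache, activations, and quantization metadata
    (scales and zero points), so real usage will be higher.
    """
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 2**30


if __name__ == "__main__":
    # The bit widths are assumptions for illustration, not the port's settings.
    for bits in (16, 8, 4):
        print(f"{bits:>2}-bit weights: {weight_memory_gib(27, bits):.1f} GiB")
```

Under these assumptions, 16-bit weights need roughly 50 GiB, 8-bit about 25 GiB, and 4-bit about 12.6 GiB. That suggests a 16GB machine would only be workable with aggressive quantization and little headroom, which is consistent with the 32GB+ recommendation.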

How does MLX-Swift compare to other frameworks for local AI?

MLX-Swift is specifically optimized for Apple Silicon's architecture, which can give it better memory efficiency and performance on M-series chips than general-purpose frameworks such as PyTorch or Hugging Face Transformers. However, it's limited to Apple hardware, whereas other frameworks support wider hardware ecosystems.

Is this an official Google release?

No, this is a community port by independent developer Adrian Grondin. Google provides official Gemma 4 implementations through Hugging Face and Google Cloud, but this MLX-Swift adaptation is unofficial and community-maintained.