What Happened

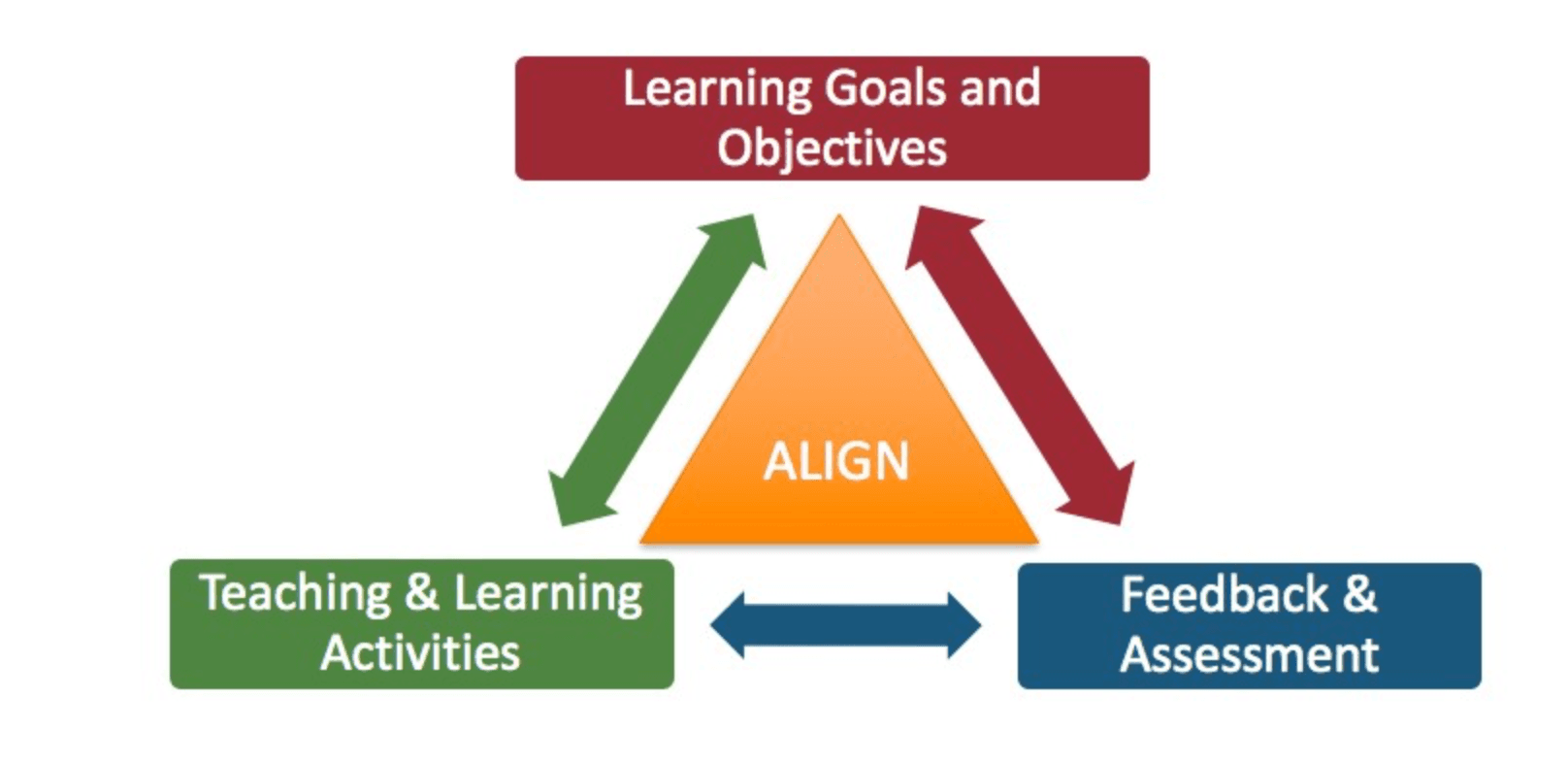

The source article from the Data Science Collective introduces the concept of Goal-Aligned Recommendation Systems, drawing lessons from a specific model architecture called the Return-Aligned Decision Transformer (RADT). The core argument is that traditional recommendation engines often fail to optimize for the true, long-term business goals they are designed to support. Instead, they become proficient at maximizing short-term, proxy metrics like clicks or engagement, which may not correlate with ultimate objectives such as customer lifetime value (LTV) or sustained revenue.

The article posits that target signals—the explicit business goals—are frequently "ignored" by models during training. RADT is presented as a framework to fix this misalignment by directly incorporating a notion of "return" or cumulative reward into the decision-making process of the transformer-based recommender. This shifts the paradigm from predicting the next likely interaction to generating a sequence of actions (recommendations) that are explicitly aligned with maximizing a defined long-term outcome.

Technical Details

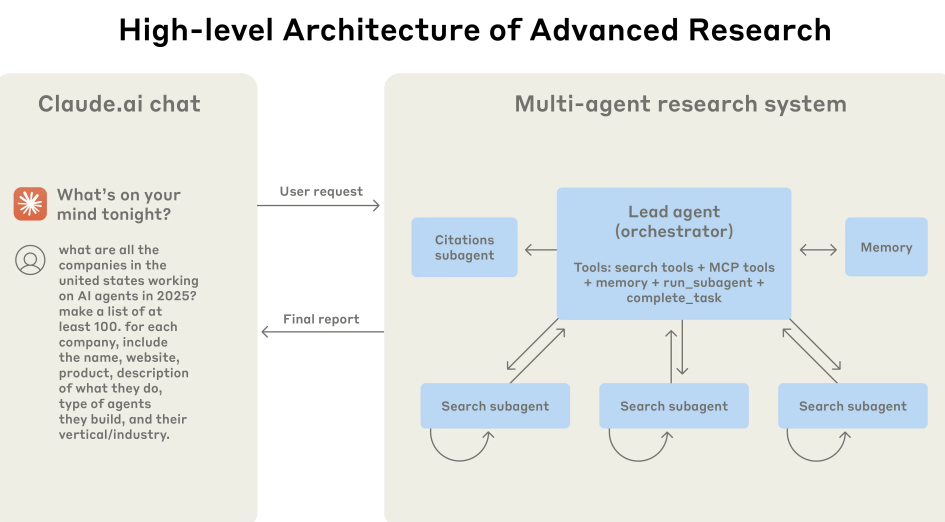

While the source is a blog post and not a formal research paper, it explains the high-level mechanism of RADT. It builds upon the Decision Transformer architecture—a model that treats sequential decision-making as a conditional sequence modeling problem. In a standard setup, the model is conditioned on a desired return (the goal) and past states/actions to generate the next optimal action.

RADT applies this principle to recommendations. The "state" could be the user's historical interaction sequence. The "actions" are the items recommended. The critical innovation is the explicit conditioning on a return-to-go signal. This signal represents the cumulative reward the system should aim to achieve from the current point in the user journey onward. By training the model to generate recommendation trajectories that achieve specific return targets, it theoretically learns to make suggestions that are directly instrumental to the business objective, whether that's driving a purchase, increasing basket size, or reducing churn.

This approach contrasts with standard methods that use implicit feedback (clicks) or final outcomes (purchases) as isolated labels. RADT frames the entire user session as a trajectory where each recommendation contributes to a cumulative score, forcing the model to understand the delayed impact of its suggestions.

Retail & Luxury Implications

The implications for retail and luxury are significant, though the technology is in a research-oriented phase. The fundamental problem RADT aims to solve is endemic to e-commerce: recommendation systems that are brilliant at generating endless scroll but poor at guiding a customer toward a high-value purchase or a brand-strengthening experience.

For a luxury brand, the long-term goal is rarely a single transaction. It's about cultivating a lasting relationship, building brand affinity, and guiding a client through a curated journey—from discovery to first purchase, then to complementary items and eventually to high-margin, exclusive products. A standard "users who bought this also bought" engine does not have this strategic lens.

A goal-aligned system like RADT could, in theory, be trained with a return signal defined as Customer Lifetime Value (CLV). The model would then learn to recommend items that not only have a high probability of being purchased but also those that increase the likelihood of future high-value engagements. For example, it might learn that recommending a classic handbag (a high-consideration item) early in a new customer's journey, even if the immediate conversion rate is lower, leads to a higher CLV because those customers become brand loyalists. Conversely, it might deprioritize repeatedly recommending low-margin accessories that drive quick clicks but do not build relationship equity.

In practice, this means moving beyond optimizing for “Add to Cart” and toward optimizing for “Client Portfolio Value.” It aligns the AI's objective with the strategic goals of the Maison: driving full-price sales, introducing customers to new categories, and reinforcing brand aesthetics over time.

Implementation Approach & Challenges

Implementing a RADT-inspired system is non-trivial and sits at the cutting edge of applied AI research. The primary requirement is a well-defined, measurable long-term goal. For retail, this could be 6-month CLV, but modeling and attributing value accurately is a massive challenge in itself.

The technical stack requires expertise in sequential modeling with transformers, as covered in our prior articles on Transformer Architectures. Training requires rich, longitudinal user interaction data to model complete trajectories. The model must also deal with the extreme sparsity of positive outcomes (a user makes only a few purchases among thousands of impressions) and the long time horizons between recommendation and realized value.

Furthermore, this is not a plug-and-play solution. It would likely involve significant custom development, starting with a robust offline reinforcement learning (RL) framework to train the policy on historical data before any live deployment. The computational cost is higher than traditional two-tower retrieval models.

Governance & Risk Assessment

Maturity Level: Medium-Low (Academic/Proof-of-Concept). The core ideas are published in reinforcement learning and recommender systems research (as noted in our KG, significant papers on agent-driven reports and personalization were published in early March 2026). RADT is a specific instantiation of these broader trends. Production deployments in complex retail environments are likely years away for most.

Key Risks:

- Goal Specification Risk: The system is only as good as the goal you define. A poorly specified return signal (e.g., over-emphasizing short-term revenue) could lead to exploitative recommendations that damage brand trust.

- Bias Amplification: If historical data reflects biases (e.g., only marketing certain products to certain demographics), a policy trained to maximize long-term value could perpetuate and even amplify these patterns.

- Explainability: Transformer-based sequential models are complex. Explaining why a particular item was recommended as part of a long-term strategy is far more difficult than explaining a similarity-based recommendation.

- Data Dependency: The model's success is wholly dependent on the quality and scope of historical behavioral data. For new customers or new products (the "cold start" problem), it may have little to offer.

gentic.news Analysis

This discussion on goal alignment is part of a clear and accelerating trend in recommender systems research toward more sophisticated, causal, and long-horizon optimization. Our Knowledge Graph shows Recommender Systems as a frequently covered topic, with recent articles on frameworks for multi-behavior recommendation (MCLMR) and continual distillation (DIET). These all address different facets of moving beyond simple collaborative filtering.

The mention of RADT's foundation in Transformer Architectures connects it directly to the dominant paradigm in AI. However, as we covered in "MMM4Rec," there is also growing interest in more efficient architectures like Mamba for sequential tasks. The key insight for luxury retail leaders is that the field is rapidly evolving from "what is most similar" to "what action best achieves a strategic outcome."

For now, the immediate practical step is not to build a RADT system but to rigorously audit existing recommendation engines. Ask: What short-term metric are they optimizing for, and how misaligned is it with our true business goals? Begin the work of defining and modeling long-term customer value. The algorithms like RADT are emerging to solve this problem, but they require the foundational strategic and data groundwork to be laid first. This article is a signal that the tools for truly strategic, brand-aligned AI curation are on the horizon.