Meta is preparing to release its first large language model developed under the technical leadership of Scale AI founder Alexandr Wang, according to industry sources. The company reportedly plans to eventually open-source versions of the new model family, though not all components will be available initially.

What Happened

Meta has been developing a new family of large language models under the guidance of Alexandr Wang, who joined Meta in a senior technical role after founding and leading Scale AI. The models are reportedly nearing release, though exact timing remains unspecified.

According to sources familiar with the development, Meta acknowledges that these models won't outperform those of leading competitors such as OpenAI and Anthropic across all benchmarks. Instead, the company is focusing on specific areas of consumer application strength to maintain relevance in the increasingly competitive AI landscape.

Context

Alexandr Wang founded Scale AI in 2016, building it into one of the most prominent AI data labeling and evaluation platforms. His move to Meta represents a significant talent acquisition for the social media giant as it seeks to compete in the foundation model space against well-funded rivals.

Meta has been aggressively pursuing open-source AI strategies with its Llama family of models, which have gained significant traction in the developer community. The company's decision to eventually open-source versions of this new model family aligns with this broader strategic direction.

The Competitive Landscape

The AI model space has become increasingly crowded, with OpenAI's GPT series, Anthropic's Claude models, Google's Gemini, and various open-source alternatives all vying for developer mindshare and market position. Meta's approach appears to be one of strategic differentiation rather than attempting to outperform competitors on every benchmark.

By focusing on specific consumer application strengths, Meta may be targeting integration points across its existing product ecosystem, including Facebook, Instagram, WhatsApp, and its Reality Labs division working on AR/VR technologies.

What to Watch

Key questions remain unanswered:

- What specific consumer applications is Meta targeting?

- How will the eventual open-source release be structured?

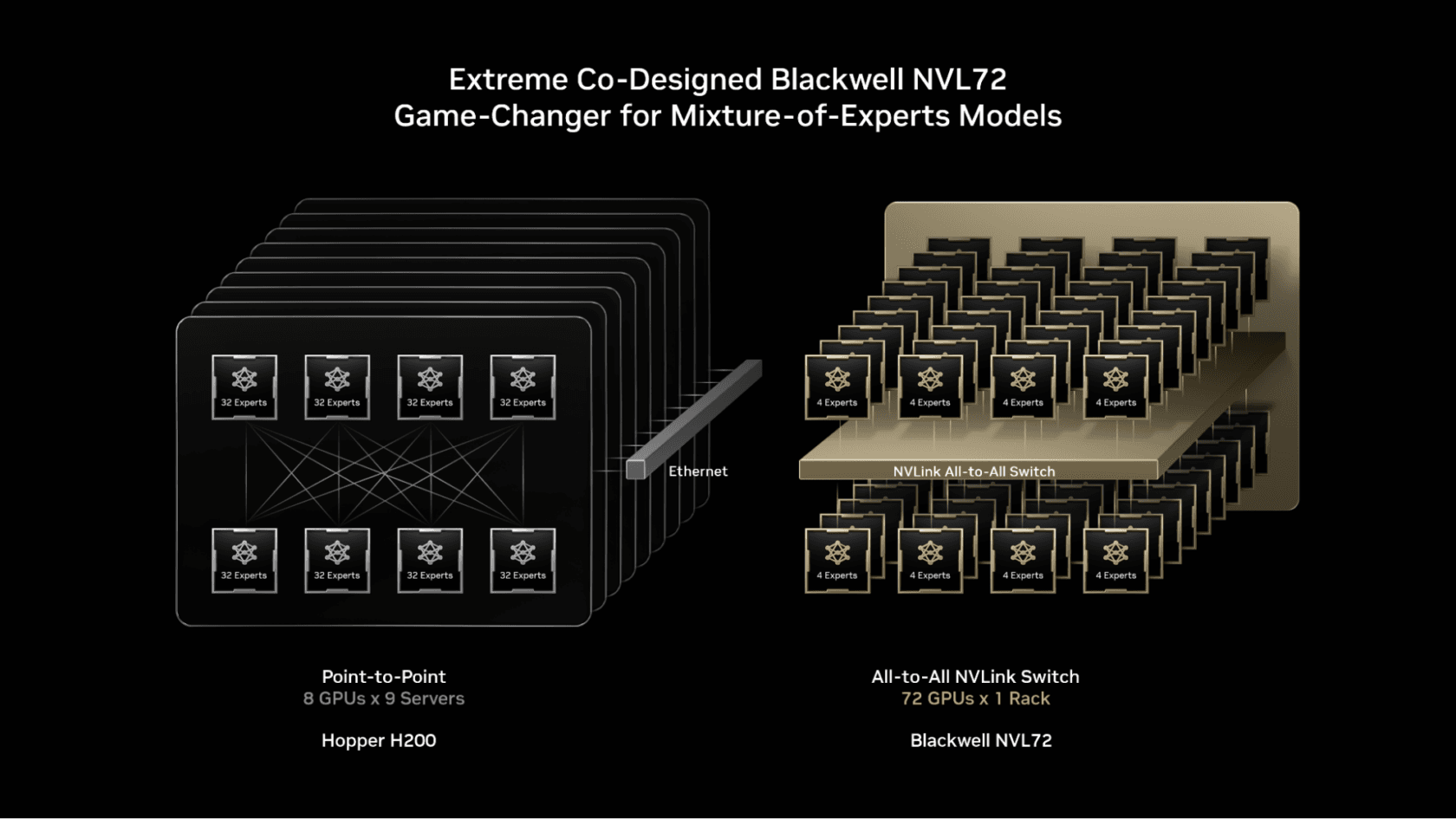

- What architectural innovations might Wang's team have introduced?

- How will this model family relate to or differ from the existing Llama series?

Until Meta makes an official announcement with technical details and benchmarks, the actual capabilities and positioning of these models remain speculative.

gentic.news Analysis

This development represents Meta's continued doubling down on its open-source AI strategy, but with a notable shift in technical leadership. Bringing in Alexandr Wang, whose company Scale AI has been instrumental in evaluating and benchmarking AI models for years, signals that Meta is serious about improving model quality and evaluation rigor. Wang's expertise in data labeling, evaluation, and the practical challenges of deploying AI at scale could address some of the weaknesses critics have identified in previous Meta releases.

This move follows Meta's pattern of acquiring top AI talent from across the industry. Wang's hiring is particularly significant given Scale AI's role as a neutral third-party evaluator for many AI companies, potentially including Meta's competitors. His insider knowledge of evaluation methodologies and benchmark design could give Meta an edge in preparing models that perform well on industry-standard tests.

The acknowledgment that these models won't beat OpenAI or Anthropic "across the board" is a rare moment of public realism from a major AI player. It suggests Meta is adopting a more pragmatic, application-focused approach rather than engaging in a pure benchmark arms race. This aligns with trends we've observed across the industry as companies shift from chasing leaderboard positions to building models optimized for specific use cases and deployment environments.

Frequently Asked Questions

Who is Alexandr Wang?

Alexandr Wang is the founder and former CEO of Scale AI, a company that provides data labeling and AI evaluation services. He joined Meta in a senior technical role overseeing LLM development. Scale AI has worked with numerous AI companies and government agencies, making Wang one of the most knowledgeable figures in practical AI deployment and evaluation.

How does this relate to Meta's existing Llama models?

Details are scarce, but this appears to be a new model family developed under different technical leadership. It may represent a next-generation architecture or a specialized variant targeting different use cases than the general-purpose Llama models. The relationship between this new family and existing Llama releases will become clearer when Meta releases technical details.

When will these models be released?

The source indicates that release is coming "very soon," but no specific date is provided. Given typical development cycles and the need for extensive testing, a reasonable estimate would be within the next one to three months, though this is speculative. Meta will likely announce the models at a developer conference or through a technical blog post.

Will these models be completely open source?

According to the source, "not all of it will be open," but Meta plans to "eventually open-source versions of the new family." This suggests a similar approach to Llama, where certain model weights are released with usage restrictions, while some components (possibly training data, evaluation suites, or specialized modules) remain proprietary.