A major investigative report from The New Yorker, highlighted by AI commentator Rohan Paul, has surfaced explosive new details about OpenAI's internal governance, historical safety commitments, and the 2023 leadership crisis. The report draws on over 200 pages of private notes from former OpenAI safety lead Dario Amodei and previously undisclosed "Ilya Memos," providing a granular look at the tensions that have defined the company.

What the Investigation Reveals

The report paints a picture of an organization where structural contradictions—between its original non-profit mission and its capped-profit ambitions, and between safety commitments and product velocity—created persistent internal fault lines.

The 'Merge and Assist' Clause: Perhaps the most startling revelation is that OpenAI's original governing documents once contained a "merge and assist" clause. This provision stipulated that if a rival organization (like Google) were to achieve safe AGI first, OpenAI would cease competition and assist that rival in building it safely. This stands in stark contrast to standard competitive behavior in the technology industry and underscores the foundational, almost altruistic, safety ethos that some founders initially envisioned.

The 2023 Crisis as a 'Case File': The November 2023 board coup against CEO Sam Altman is reframed not as a sudden mutiny but as the culmination of a secret internal investigation. According to the report, Chief Scientist Ilya Sutskever compiled a dossier of approximately 70 pages of evidence, including Slack messages, HR materials, and photos from company systems. He reportedly sent these to board members via disappearing messages, building a case that led to Altman's brief ouster.

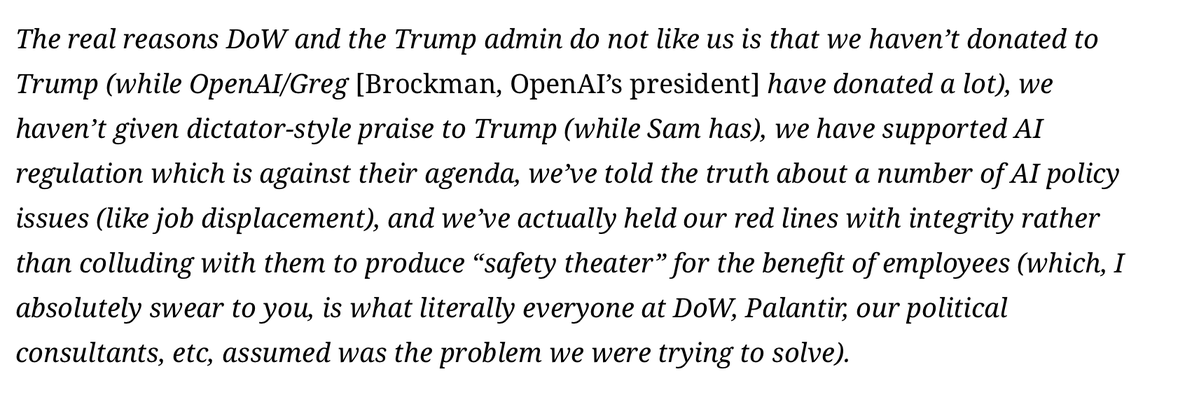

Long-Running Trust Erosion: The investigation suggests the trust crisis predates 2023. Dario Amodei, who left OpenAI in 2021 to found rival AI safety company Anthropic, had for years kept private notes documenting his concerns, which eventually ran to more than 200 pages. This indicates the board drama was a "late-stage eruption of a long-running internal concern."

The Powerless Non-Profit Board: The report details how the original non-profit board's legal authority was rendered moot by financial realities. Once Microsoft's involvement, a Thrive Capital-led tender offer valuing the company at roughly $86 billion, and employee liquidity events were at stake, the board was trapped between reversing its decision and watching the company disintegrate. During the comeback, Altman was directly texting Microsoft CEO Satya Nadella with proposed replacement board lineups.

Safety Promises vs. Operational Reality

The investigation alleges significant gaps between public safety commitments and internal resource allocation.

- Compute Allocation: While OpenAI publicly promised its Superalignment team 20% of secured compute, sources close to the team claimed the real figure was closer to 1-2%, often on older chips, before the effort was effectively shut down in May 2024.

- Product Governance Bypasses: A similar pattern emerged in product releases. The report cites a disputed internal approval for a GPT-4 feature and a claimed release in India that bypassed a required safety review, framing the core conflict as "speed vs. safeguards."

Unconventional AGI Fundraising and Strategic Pivot

The report traces ambitious and unorthodox fundraising ideas entertained by leadership years ago, including a "countries plan" to pressure governments into backing OpenAI as part of a global AGI race. It also mentions pitches to wealthy individuals in Bel-Air for a crypto token tied to future AGI access.

The investigation concludes that OpenAI has fundamentally transformed from a research lab with a governance problem into a "future $1T strategic machine" deeply entangled with government contracts, defense-adjacent systems, and geopolitics, raising questions about control that extend far beyond Sam Altman.

gentic.news Analysis

This investigation provides crucial documentary heft to narratives that have circulated in the AI community since the November 2023 crisis. The "merge and assist" clause is a bombshell that validates long-held suspicions about the almost religious dedication to AI safety that characterized OpenAI's earliest days, an ethos that has visibly strained under the pressures of scaling a leading AI product company. This deepens the context around the departures of key safety-focused researchers like Dario Amodei, who went on to found Anthropic with an explicit focus on reliable, steerable AI, a move that now reads as a direct response to the internal conflicts documented in his notes.

The timeline is critical. The internal documentation from Sutskever and Amodei didn't emerge in a vacuum. Its publication follows a period of intense scrutiny of AI lab governance, including the collaboration between the U.S. AI Safety Institute (AISI) and OpenAI on frontier model testing and ongoing congressional hearings. The report's allegation that safety compute allocations were a fraction of what was promised directly contradicts OpenAI's public-facing safety rhetoric and will fuel existing regulatory arguments for mandatory external audits of safety claims, a topic we explored in our analysis "AI Safety Institutes Emerge as De Facto Auditors for Frontier AI Labs."

Furthermore, the depiction of a board neutered by financial dependencies on Microsoft ($MSFT) underscores a central tension in the "capped-profit" model. It demonstrates that non-profit governance structures are vulnerable when a subsidiary's commercial success becomes critical to the valuation and liquidity of major partners. This aligns with broader industry trends where technical capability and compute resources, often concentrated in a handful of cloud providers and chipmakers like NVIDIA ($NVDA), create deep structural dependencies that can override formal governance.

Frequently Asked Questions

What was OpenAI's "merge and assist" clause?

The clause, found in OpenAI's early governing documents, was a promise that if a competitor like Google achieved safe Artificial General Intelligence (AGI) first, OpenAI would stop competing and instead help that rival ensure its AGI was developed safely. It reflects an extreme, mission-driven prioritization of safe outcomes over commercial victory.

What are the "Ilya Memos" mentioned in the New Yorker report?

The "Ilya Memos" refer to a roughly 70-page dossier of evidence compiled by OpenAI co-founder and former Chief Scientist Ilya Sutskever ahead of the November 2023 board coup. The dossier reportedly included Slack messages, HR materials, and photos from company systems, which Sutskever sent to board members as disappearing messages to build a case for Sam Altman's removal.

How does this report change the view of the November 2023 OpenAI crisis?

The report reframes the crisis from a sudden board rebellion to the planned culmination of a secret internal investigation. It also shows the crisis was a symptom of years-long internal safety and trust conflicts, documented by figures like Dario Amodei, rather than a spontaneous rupture.

What does the report say about OpenAI's safety resource allocation?

The investigation alleges a significant discrepancy between public promises and internal reality. While OpenAI publicly committed 20% of its secured compute to its Superalignment safety team, internal sources claimed the actual allocation was only 1-2%, often on older hardware, before the team was disbanded.