A new analysis circulating in technical circles highlights the sheer scale of Google's artificial intelligence infrastructure. According to an estimate shared by AI analyst @kimmonismus, Google now possesses the computational equivalent of roughly 5 million Nvidia H100 GPUs. This colossal resource is positioned as the engine behind the rapid scaling and distribution of models from leading AI lab Anthropic.

The core argument is straightforward: in the modern AI race, algorithms and data are necessary but insufficient without unprecedented compute. Google, with its established revenue streams, custom Tensor Processing Units (TPUs), and global distribution channels (Google Cloud, Search, Workspace), provides the "shovels" for the generative AI "gold rush." Anthropic, in turn, is leveraging this infrastructure to deploy and scale its Claude models, moving from pure research to a platform with broad enterprise reach.

What This Means for the AI Infrastructure Race

The 5 million H100-equivalent figure, while an estimate, underscores a critical and often underappreciated dimension of the AI competition: raw computational horsepower. For context, a single Nvidia H100 (SXM) GPU has a peak theoretical performance of roughly 1 petaFLOPS for dense FP16/BF16 math, or about 2 petaFLOPS with structured sparsity; the often-quoted figure of nearly 4 petaFLOPS applies to FP8 with sparsity. A fleet of this magnitude represents an enormous concentration of training and inference capability, likely spread across Google's global data centers and encompassing its latest TPU v5p and TPU v4 systems.
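To put the headline number in perspective, the aggregate peak throughput of such a fleet can be sketched in a few lines. This is a rough illustration only: it assumes Nvidia's published dense BF16 peak of about 989 teraFLOPS per H100 SXM and takes the unverified 5 million figure at face value. Real sustained throughput would be well below this theoretical peak.

```python
# Peak dense BF16 throughput of one H100 SXM per Nvidia's published spec (~989 TFLOPS).
# Assumption: dense (non-sparse) math; the sparsity and FP8 figures are higher.
H100_DENSE_BF16_FLOPS = 989e12

# The unverified estimate quoted in the article.
FLEET_H100_EQUIV = 5_000_000


def fleet_peak_flops(num_chips: int, flops_per_chip: float) -> float:
    """Theoretical peak FLOPS for a fleet, ignoring utilization and networking losses."""
    return num_chips * flops_per_chip


peak = fleet_peak_flops(FLEET_H100_EQUIV, H100_DENSE_BF16_FLOPS)
print(f"Fleet peak: {peak / 1e21:.1f} zettaFLOPS (dense BF16, theoretical)")
```

Under these assumptions the fleet lands in the low single digits of zettaFLOPS, which is a ceiling rather than a measurement of what any workload would actually achieve.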

This scale directly benefits Anthropic's operational needs. Training frontier models like Claude 3 Opus requires tens of thousands of chips running for months. Serving these models to millions of users via API and consumer-facing products demands even more distributed inference capacity. By partnering deeply with Google Cloud—Anthropic's preferred cloud provider—the lab can access this infrastructure on demand, avoiding the capital expenditure and logistical nightmare of building and maintaining its own comparable fleet.
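The "tens of thousands of chips running for months" claim can be sanity-checked with the widely used approximation that training a dense transformer costs about 6 FLOPs per parameter per token. The sketch below is illustrative only: Anthropic has not disclosed parameter counts, token counts, or cluster sizes, so every input here is a hypothetical placeholder, as is the 40% utilization figure.

```python
SECONDS_PER_DAY = 86_400


def training_days(params: float, tokens: float, num_chips: int,
                  peak_flops_per_chip: float, utilization: float) -> float:
    """Estimate wall-clock training time using the ~6 * N * D FLOPs rule of thumb."""
    total_flops = 6 * params * tokens                      # approximate training cost
    effective_flops_per_sec = num_chips * peak_flops_per_chip * utilization
    return total_flops / effective_flops_per_sec / SECONDS_PER_DAY


# Hypothetical inputs, NOT figures for any real Claude model.
days = training_days(
    params=1e12,                  # assumed 1 trillion parameters
    tokens=10e12,                 # assumed 10 trillion training tokens
    num_chips=20_000,             # assumed cluster size in H100-equivalents
    peak_flops_per_chip=989e12,   # H100 SXM dense BF16 peak
    utilization=0.4,              # assumed fraction of peak actually sustained
)
print(f"Estimated training time: {days:.0f} days")
```

With these placeholder values the run takes on the order of three months, which is consistent with the article's characterization; changing any input shifts the answer proportionally.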

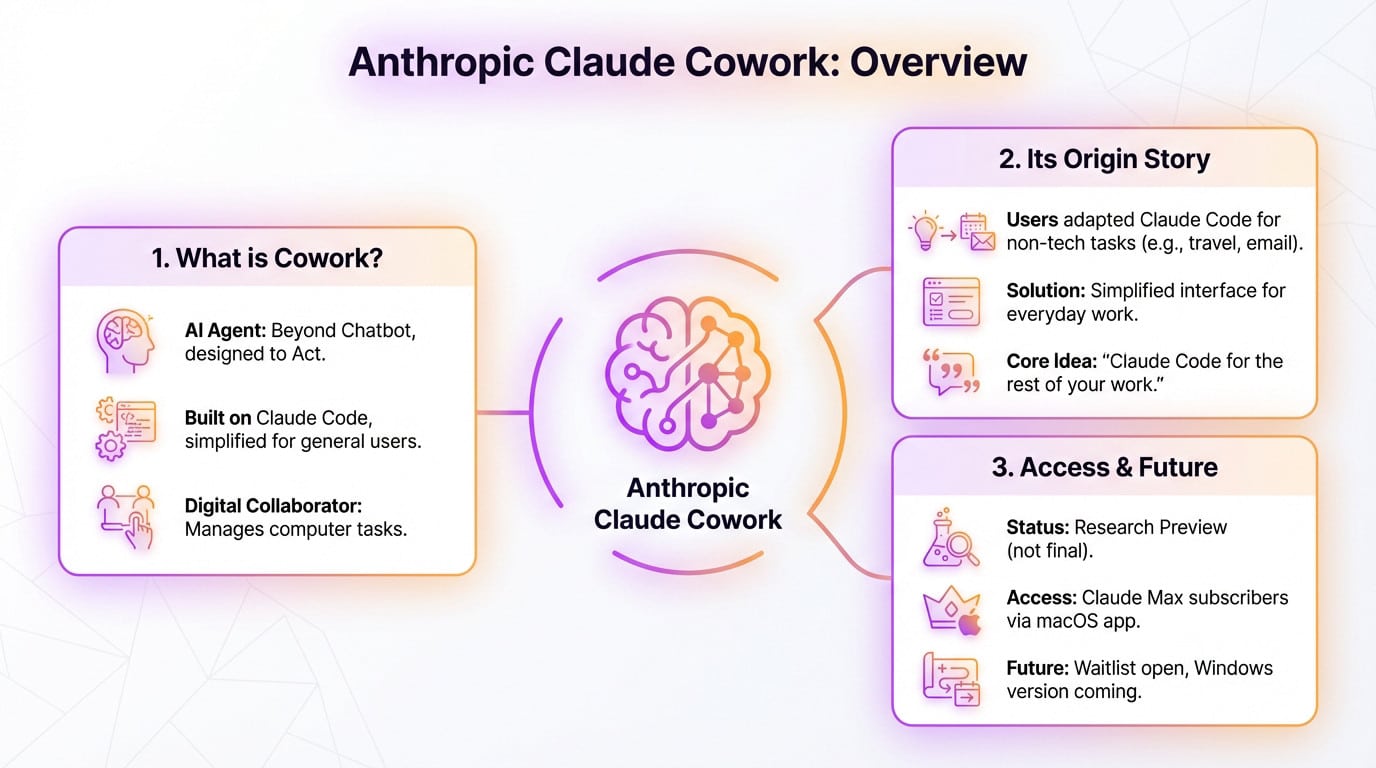

The Strategic Shift: From Lab to Platform

The analysis frames Anthropic as now "selling shovels." This marks a strategic evolution. Initially a pure research organization focused on AI safety, Anthropic has successfully productized its work. The Claude API and consumer chat interface are its primary shovels. However, to sell them effectively, it needs a reliable, scalable, and performant foundation—Google's infrastructure.

The analysis describes Google as "exceptionally well-positioned" thanks to a triple advantage:

- Strong Revenue Streams: Profits from search and advertising fund massive, sustained capital investment in AI infrastructure.

- Its Own Chips: The TPU lineage, now in its fifth generation, provides cost and performance efficiency for large-scale training and reduces reliance on the constrained Nvidia supply chain.

- Distribution: Google Cloud provides the enterprise sales channel, while products like Search (via the Search Generative Experience) and Workspace offer potential integration points for billions of users.

This creates a symbiotic, yet asymmetric, relationship. Anthropic gains instant, global scale. Google Cloud gains a flagship, best-in-class AI model (Claude) to offer alongside its own Gemini models and to compete directly with Microsoft Azure's partnership with OpenAI.

The Competitive Landscape

This dynamic intensifies the infrastructure layer of AI competition. The key players are now:

| Provider | Key AI labs and partners | Primary compute | Strategic advantage |
| --- | --- | --- | --- |
| Google Cloud | Anthropic (Primary), Google DeepMind (Gemini) | TPU v5p/v4, ~5M H100-equiv. estimated fleet | Scale, vertical integration, distribution |
| Microsoft Azure | OpenAI (Primary), Mistral AI, others | Nvidia H100/A100 clusters, custom Maia chips | First-mover via OpenAI, enterprise entrenchment |
| Amazon AWS | Anthropic (Minority stake), Stability AI, Cohere | Custom Trainium & Inferentia chips, Nvidia clusters | Breadth of AI partners, dominant cloud market share |
| CoreWeave / Lambda | (Infrastructure Provider) | Dense Nvidia H100 clusters | Specialized, high-performance compute for training |

The estimate of Google's capacity suggests it may hold a raw compute advantage, or is at least competitive with Microsoft's massive investments. The race is no longer just about the next model release, but about who can build and operate the most efficient, largest-scale "AI factory."

gentic.news Analysis

This estimate, while unverified, aligns with the opaque but staggering infrastructure build-out we've tracked since the transformer explosion. If accurate, a 5 million H100-equivalent fleet would represent a multi-hundred-billion-dollar investment over several years, a scale only accessible to the largest tech conglomerates. This creates an almost insurmountable moat for training frontier models, directly impacting the strategies of all other AI labs.

Anthropic's deepening reliance on Google Cloud, alongside the minority investment it has taken from Amazon, exemplifies the complex, multi-cloud alliances forming. Labs are hedging infrastructure risk while aligning with cloud providers for distribution. This is a repeat of the pattern we saw with OpenAI and Microsoft, but with a key difference: Google is also a leading AI lab itself (DeepMind). This creates an inherent tension: Google is both Anthropic's primary infrastructure provider and its direct competitor in the model market with Gemini.

For practitioners, the implication is clear: the center of gravity for cutting-edge AI is consolidating around a few hyperscale compute platforms. Access to state-of-the-art models will increasingly be mediated by these platforms' APIs and marketplaces. The independence of a research lab is fundamentally constrained by its access to scale, making partnerships like Anthropic-Google and OpenAI-Microsoft not just convenient, but existential.

Frequently Asked Questions

How was the "5 million H100-equivalent" estimate calculated?

The source tweet does not detail the calculation methodology. Such estimates typically extrapolate from publicly disclosed capital expenditure (CapEx) figures from Alphabet/Google, known costs of H100 clusters, and inferred efficiency ratios between Google's custom TPUs and Nvidia's H100 GPUs. It is a back-of-the-envelope industry estimate meant to illustrate scale, not a verified audited number.
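A back-of-the-envelope calculation of the kind described might look like the sketch below. It is not the method behind the quoted estimate, which the source does not disclose. The function name, the structure, and every input value are hypothetical placeholders chosen for illustration, not Alphabet's reported figures.

```python
def h100_equivalents(capex_usd: float, accelerator_share: float,
                     cost_per_accelerator_usd: float,
                     h100_equiv_per_accelerator: float) -> float:
    """Convert a capital budget into an H100-equivalent accelerator count.

    capex_usd: cumulative capital expenditure considered
    accelerator_share: fraction of CapEx assumed to go to AI accelerators
    cost_per_accelerator_usd: assumed all-in cost per deployed accelerator
    h100_equiv_per_accelerator: assumed throughput ratio versus one H100
    """
    accelerators = capex_usd * accelerator_share / cost_per_accelerator_usd
    return accelerators * h100_equiv_per_accelerator


# Placeholder inputs for illustration only.
estimate = h100_equivalents(
    capex_usd=100e9,                  # assumed $100B cumulative CapEx
    accelerator_share=0.5,            # assumed half spent on accelerators
    cost_per_accelerator_usd=20_000,  # assumed cost per custom chip
    h100_equiv_per_accelerator=0.8,   # assumed efficiency ratio vs. H100
)
print(f"Estimated fleet: {estimate / 1e6:.1f}M H100-equivalents")
```

The result is highly sensitive to the efficiency ratio and the accelerator share, which is why such figures are best read as order-of-magnitude illustrations.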

Does Google actually use 5 million Nvidia H100s?

No. The estimate is for "H100-equivalent" compute. Google's infrastructure is predominantly based on its custom Tensor Processing Units (TPUs). The figure translates Google's total available AI-accelerated compute into the equivalent number of today's leading data center GPU (the H100) to provide an intuitive sense of scale for industry observers.

Why does Anthropic partner with Google instead of building its own infrastructure?

Building a global AI infrastructure fleet at this scale requires tens of billions of dollars in continuous capital investment, years of lead time, and expertise in data center construction, energy procurement, and chip-level engineering. As a lab, Anthropic's comparative advantage is in model research and development. Partnering with Google Cloud allows it to instantly access world-class infrastructure, convert capital expenditure to operational expenditure, and leverage Google's distribution channels to reach customers.

How does this affect the availability of GPUs for other AI companies?

The massive procurement by hyperscalers like Google, Microsoft, and Amazon for their own clusters and partner needs is a major driver of the ongoing GPU shortage. It concentrates the most advanced chips (H100, B100, etc.) within these few companies, raising costs and extending lead times for smaller startups and research institutions trying to buy hardware directly.