A research team from Carnegie Mellon University reports that 14 leading large language models, including models from OpenAI's GPT and Anthropic's Claude families and presumably Google's Gemini, fail a fundamental test of logical reasoning. The study, which appears to be pre-publication work shared in a tweet thread by researcher Guri Singh, finds that these models cannot reliably identify simple contradictions in text, a core capability for any system claiming to perform reasoning.

What the Researchers Tested

The researchers designed a straightforward evaluation: present models with pairs of statements and ask whether the second statement contradicts the first. These are not complex philosophical puzzles but basic logical inconsistencies that a human child could identify. Examples likely include simple factual mismatches like "The sky is blue" followed by "The sky is green."
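
The setup described above can be sketched in a few lines of Python. This is a minimal illustration, not the CMU team's harness, which has not been published: the example pairs, the prompt wording, and the `ask_model` callable (a stand-in for whatever API client you use) are all assumptions.

```python
from typing import Callable, List, Tuple

# Hypothetical labelled pairs: (first statement, second statement, contradicts?)
PAIRS: List[Tuple[str, str, bool]] = [
    ("The sky is blue.", "The sky is green.", True),
    ("The meeting is on Monday.", "The meeting is in room 4.", False),
    ("The door is locked.", "The door is not locked.", True),
]


def build_prompt(first: str, second: str) -> str:
    """Format one pair as a yes/no question (wording is an assumption)."""
    return (
        f"Statement A: {first}\n"
        f"Statement B: {second}\n"
        "Does statement B contradict statement A? Answer yes or no."
    )


def parse_answer(reply: str) -> bool:
    """Treat any reply that starts with 'yes' as a 'contradiction' verdict."""
    return reply.strip().lower().startswith("yes")


def accuracy(ask_model: Callable[[str], str],
             pairs: List[Tuple[str, str, bool]]) -> float:
    """Fraction of pairs on which the model's verdict matches the label."""
    correct = 0
    for first, second, label in pairs:
        reply = ask_model(build_prompt(first, second))
        if parse_answer(reply) == label:
            correct += 1
    return correct / len(pairs)


def always_no(prompt: str) -> str:
    """Stand-in for a real model call; it always answers 'no'."""
    return "No."


if __name__ == "__main__":
    print(f"accuracy of the stub model: {accuracy(always_no, PAIRS):.2f}")
```

To try this against a real model, replace `always_no` with a function that sends the prompt to your provider and returns the text of the reply.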

According to the tweet thread, the team tested 14 models spanning the current market leaders. While the full paper and complete list of models are not yet available from the source, the mention of "GPT, Claude..." strongly indicates the inclusion of the GPT-4 family, the Claude 3 series (Sonnet, Opus), and presumably Google's Gemini Pro/Ultra. Other likely candidates are Meta's Llama 3, Mistral's Mixtral, and possibly other open-weight models such as Qwen or DeepSeek.

The Key Result: Universal Failure

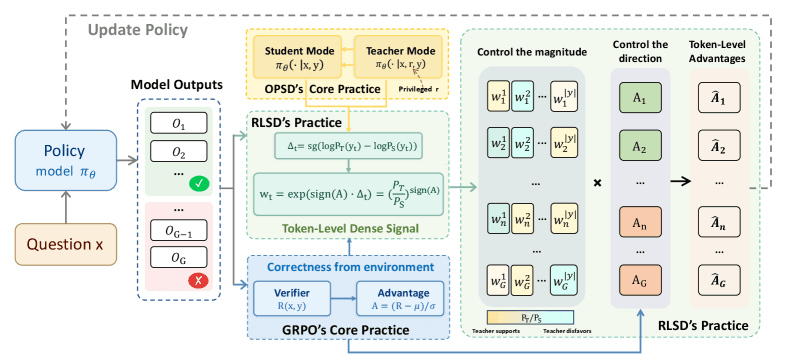

The central, striking finding is that all 14 models failed the contradiction test: they could not consistently and correctly identify when one statement negated another. This failure mode persisted across different prompting strategies and model sizes. The result directly challenges the narrative, often advanced in model cards and technical reports, that these systems possess robust reasoning or "chain-of-thought" capabilities. Strong performance on curated reasoning benchmarks (like GSM8K or MATH) does not appear to generalize to this basic, unstructured logical task.

Why This Matters: The Benchmark Illusion

This research highlights a critical issue in AI evaluation: benchmark saturation does not equate to genuine reasoning. Models are extensively trained and fine-tuned on specific datasets to excel at standardized tests, but this can lead to "benchmark hacking," that is, optimizing for the metric rather than the underlying capability. The CMU test acts as a simple, out-of-distribution probe, suggesting that the models' performance on complex reasoning benchmarks may rest on a fragile foundation of pattern matching rather than robust logical understanding.

For developers and companies building applications on top of these APIs, the implication is clear: you cannot assume logical consistency from current LLMs. Applications in legal tech, compliance, factual verification, or any domain requiring reliable logical deduction are exposed to fundamental risk. An LLM might solve a calculus problem but fail to see that two sentences in a contract contradict each other.

gentic.news Analysis

This CMU finding is a significant data point in an ongoing trend of research deconstructing LLM reasoning claims. It aligns with our previous coverage of the "WizardLM-2 Debacle" in February 2026, where a model's top benchmark scores were found to be the result of test-set contamination rather than superior reasoning. Both stories underscore a growing "credibility crisis" in LLM evaluation, where headline-grabbing benchmark numbers are increasingly distrusted by the research community.

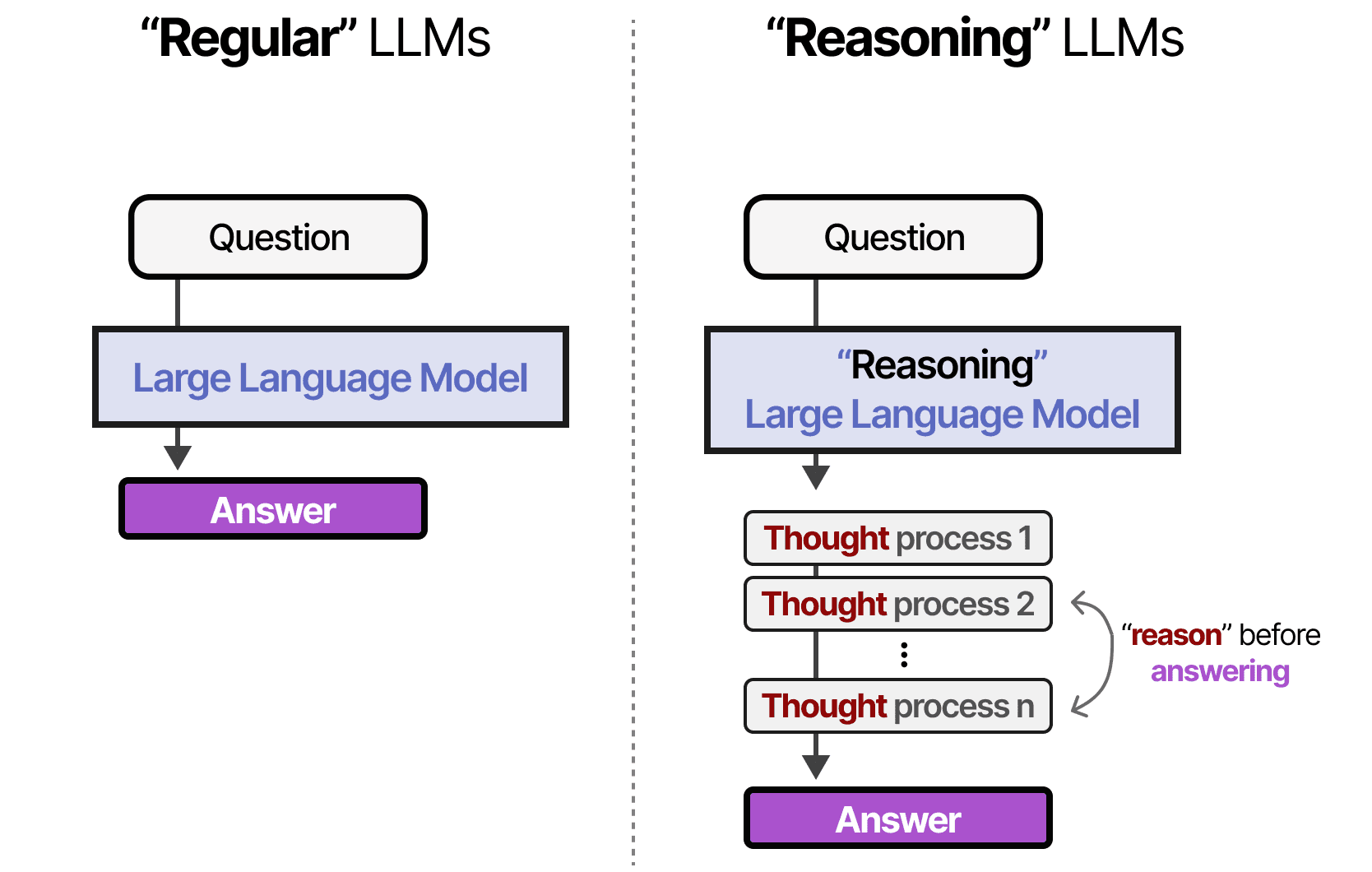

The result also directly contradicts the narrative pushed by several major AI labs. For instance, Anthropic's extensive technical reports on Claude 3 Opus emphasize its "advanced reasoning," while OpenAI has consistently discussed GPT-4's reasoning capabilities as a key differentiator. This CMU work provides concrete, adversarial evidence against those claims. It suggests that the massive scale and next-token prediction objective of current LLMs may have inherent limitations for achieving true, reliable deductive reasoning.

Looking at the competitive landscape, this creates an opportunity for alternative approaches. Neuro-symbolic methods, which combine neural networks with formal logic engines, and research into Yann LeCun's proposed world-model architectures may receive renewed interest as paths to more reliable reasoning. For practitioners, the takeaway is to implement rigorous, application-specific validation and not to outsource critical logical checks to a general-purpose LLM without a robust safety net.

Frequently Asked Questions

Which specific LLMs failed the CMU contradiction test?

The source mentions 14 top models and explicitly names only GPT and Claude. The full list will presumably appear in a forthcoming paper. It likely includes the leading proprietary models (GPT-4 Turbo, Claude 3 Sonnet/Opus, Gemini Pro/Ultra) and top-tier open-weight models (Llama 3 70B/400B, Mixtral 8x22B, Qwen 2.5 72B), though none of these specific versions is confirmed. The key finding is that the failure was universal across the group tested.

Does this mean LLMs are useless for reasoning tasks?

No, but it sharply defines their limitations. LLMs can perform impressively on many reasoning benchmarks through pattern recognition and vast training data. However, this study shows they lack a general, reliable ability to perform core logical operations like contradiction detection. They are useful tools but not trustworthy deductive reasoners.

How did the researchers test for contradictions?

While the full methodology isn't detailed in the tweet, standard approaches involve creating minimal pairs of sentences: a premise and a hypothesis. The model is asked if the hypothesis contradicts the premise. To avoid simple keyword matching, researchers use diverse phrasing and neutral language. The models' consistent failure across these variations suggests a deep lack of comprehension of truth conditions.
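
One way to make "consistent across variations" concrete is to credit a model for an item only when it is right on every phrasing of that item. The sketch below is an assumption about how such a score could be computed, not the study's actual metric. The items are invented, and `keyword_judge` is a deliberately naive stand-in that flags a contradiction whenever the hypothesis contains the word "not".

```python
from typing import Callable, Dict, List

# Hypothetical items: several phrasings of one premise/hypothesis pair,
# all sharing a single gold label (True means "contradiction").
ITEMS: List[Dict] = [
    {"label": True, "variants": [
        ("The door is locked.", "The door is not locked."),
        ("The door is locked.", "The door is open and unlocked."),
    ]},
    {"label": False, "variants": [
        ("The report is finished.", "The report has ten pages."),
        ("The report is finished.", "The report is not short."),
    ]},
    {"label": True, "variants": [
        ("The shop opens at 9.", "The shop does not open at 9."),
        ("The shop opens at 9.", "It is not true that the shop opens at 9."),
    ]},
]

Judge = Callable[[str, str], bool]


def keyword_judge(premise: str, hypothesis: str) -> bool:
    """Naive baseline: says 'contradiction' if the hypothesis contains 'not'."""
    return "not" in hypothesis.lower().split()


def variant_accuracy(judge: Judge, items: List[Dict]) -> float:
    """Accuracy over every individual phrasing."""
    results = [judge(p, h) == item["label"]
               for item in items for p, h in item["variants"]]
    return sum(results) / len(results)


def consistent_accuracy(judge: Judge, items: List[Dict]) -> float:
    """An item counts only if the judge is right on all of its phrasings."""
    passed = sum(
        all(judge(p, h) == item["label"] for p, h in item["variants"])
        for item in items
    )
    return passed / len(items)


if __name__ == "__main__":
    print(f"per-variant accuracy: {variant_accuracy(keyword_judge, ITEMS):.2f}")
    print(f"consistent accuracy:  {consistent_accuracy(keyword_judge, ITEMS):.2f}")
```

On these toy items the keyword baseline gets four of six phrasings right but is fully consistent on only one of three items, which is the kind of gap that varied phrasing is meant to expose.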

What should developers do in response to this research?

Developers building systems that require logical consistency should:

- Never rely solely on an LLM's output for a logical deduction.

- Implement external verification layers, such as rule-based checkers or formal logic validators, for critical workflows.

- Conduct extensive stress-testing on their own domain-specific contradiction cases, as general benchmarks are poor predictors of real-world performance.

- Monitor for research on neuro-symbolic or hybrid AI systems that may offer more robust solutions.
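
As a rough illustration of the external verification layer suggested in the list above, the sketch below runs a deterministic conflict check alongside an LLM verdict and escalates when the two disagree. It assumes the facts have already been extracted into (subject, attribute, value) triples by some upstream step. The triple format, the example data, and the function names are all hypothetical.

```python
from typing import Dict, List, Tuple

Fact = Tuple[str, str, str]  # (subject, attribute, value); hypothetical format

# Hypothetical facts pulled from a contract by an upstream extraction step.
FACTS: List[Fact] = [
    ("payment", "due_days", "30"),
    ("payment", "due_days", "45"),
    ("contract", "governing_law", "Delaware"),
]


def find_conflicts(facts: List[Fact]) -> List[Tuple[Fact, Fact]]:
    """Return pairs of facts that give one (subject, attribute) two values."""
    seen: Dict[Tuple[str, str], Fact] = {}
    conflicts: List[Tuple[Fact, Fact]] = []
    for fact in facts:
        key = (fact[0], fact[1])
        if key in seen and seen[key][2] != fact[2]:
            conflicts.append((seen[key], fact))
        else:
            # First sighting of this key (or an exact repeat): remember it.
            seen.setdefault(key, fact)
    return conflicts


def needs_review(llm_says_consistent: bool, facts: List[Fact]) -> bool:
    """Escalate to a human when the LLM and the rule-based check disagree."""
    rules_say_consistent = not find_conflicts(facts)
    return llm_says_consistent != rules_say_consistent


if __name__ == "__main__":
    for first, second in find_conflicts(FACTS):
        print(f"conflict: {first} vs {second}")
    print("needs review:", needs_review(True, FACTS))
```

A check like this only catches conflicts that fit the triple format, so it complements rather than replaces domain-specific stress tests.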