A research claim circulating in a social media thread asserts that researchers have built an AI system that uses one-hundredth the energy of current models while also being more accurate. The thread describes this as a development that could rewrite the rules of the AI industry, though it links to a detailed analysis rather than to a primary source such as a research paper.

What Happened

The announcement, made by a technical commentator, states that researchers have built an AI system with a dramatic improvement in computational efficiency. The core claim is twofold:

- 100x Reduction in Energy Use: The system purportedly consumes one-hundredth the energy of comparable contemporary AI models. The post does not specify whether this figure applies to training, inference, or both.

- Increased Accuracy: Contrary to the typical trade-off between efficiency and performance, the system is claimed to be more accurate than the models it is compared to.

The source material is a high-level summary pointing to a deeper technical thread. As of this writing, the specific architectural details, training methods, benchmark datasets, and precise accuracy metrics are not provided in the initial post. The claim's impact hinges on the validation of these efficiency and accuracy numbers against standard industry benchmarks.

Context

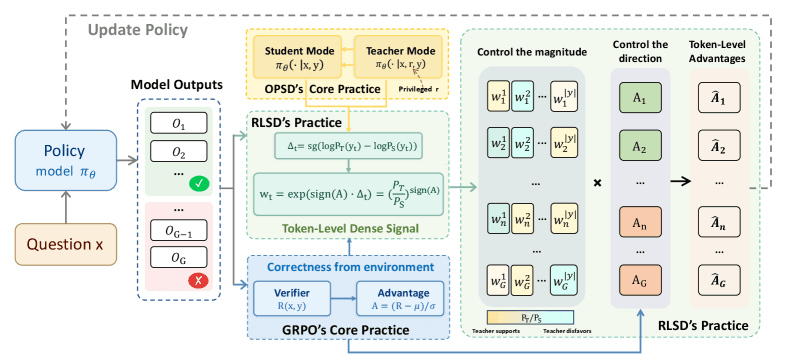

The pursuit of energy-efficient AI is a dominant theme in machine learning research, driven by the soaring computational costs and environmental footprint of training and running large models. Techniques like model pruning, quantization, knowledge distillation, and the development of novel, sparser architectures (e.g., Mixture-of-Experts) are actively explored to improve performance per watt (often reported as FLOPS per watt).

A claimed 100x efficiency gain is a two-order-of-magnitude leap, far beyond most published incremental improvements. For context, a typical efficiency-focused paper might report a 2x to 5x reduction in energy or latency with minimal accuracy loss. A 100x gain, if realized and generalizable, would represent a paradigm shift in the feasible scale and accessibility of powerful AI models.
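To put a 100x figure next to the typical 2x to 5x results, a rough back-of-the-envelope sketch can count how many successive improvements would have to compound to reach it. The function names `orders_of_magnitude` and `gains_needed` are hypothetical, and the calculation assumes each improvement multiplies cleanly with the others, which real techniques often do not.

```python
import math


def orders_of_magnitude(gain: float) -> float:
    """Express a multiplicative gain as a base-10 order of magnitude."""
    if gain <= 0:
        raise ValueError("gain must be positive")
    return math.log10(gain)


def gains_needed(target_gain: float, per_step_gain: float) -> int:
    """Smallest number of compounding steps of size per_step_gain
    whose product reaches at least target_gain.

    Assumption (illustrative only): the steps stack multiplicatively.
    """
    if target_gain <= 1 or per_step_gain <= 1:
        raise ValueError("both gains must be greater than 1")
    return math.ceil(math.log(target_gain) / math.log(per_step_gain))


if __name__ == "__main__":
    print(orders_of_magnitude(100.0))  # 2.0
    print(gains_needed(100, 2))        # 7 successive 2x improvements
    print(gains_needed(100, 5))        # 3 successive 5x improvements
```

Under that idealized assumption, a 100x gain is comparable to stacking three to seven independent results of the size a typical efficiency paper reports.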

The Critical Need for Validation

While the claim is extraordinary, the AI engineering community treats such announcements with caution until full methodological disclosure and independent replication. Key questions remain unanswered by the initial source:

- Baseline: Which "current models" is it being compared to (e.g., GPT-4, Claude 3, Llama 3 70B)?

- Task & Metric: On what specific tasks (MMLU, GSM8K, HumanEval) and using what accuracy metrics is it "more accurate"?

- Measurement: How is "energy use" measured (e.g., joules per inference on specific hardware, total training energy in kWh, or a proxy such as total FLOPs)?

- Architecture: What is the fundamental technical innovation enabling this gain? Is it a new model architecture, a training algorithm, or a hardware-software co-design?
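The measurement question can be made concrete with a minimal sketch of one common approach: sample device power at a fixed interval while a workload runs, integrate to get energy, and divide by the number of queries served. Everything here is an assumption for illustration, including the function names, the optional idle-power subtraction, and the made-up power readings; nothing is taken from the claimed system.

```python
from typing import Sequence


def energy_joules(power_samples_w: Sequence[float], interval_s: float) -> float:
    """Integrate evenly spaced power readings (watts) into energy (joules)
    using the trapezoidal rule."""
    if len(power_samples_w) < 2:
        raise ValueError("need at least two power samples")
    total = 0.0
    for a, b in zip(power_samples_w, power_samples_w[1:]):
        total += 0.5 * (a + b) * interval_s
    return total


def joules_per_query(
    power_samples_w: Sequence[float],
    interval_s: float,
    num_queries: int,
    idle_power_w: float = 0.0,
) -> float:
    """Energy per query, optionally net of an idle-power baseline."""
    if num_queries <= 0:
        raise ValueError("num_queries must be positive")
    duration_s = interval_s * (len(power_samples_w) - 1)
    net = energy_joules(power_samples_w, interval_s) - idle_power_w * duration_s
    return net / num_queries


# Made-up readings, one sample per second, for illustration only.
baseline = joules_per_query([300.0, 320.0, 310.0, 300.0], 1.0, num_queries=10)
candidate = joules_per_query([30.0, 32.0, 31.0, 30.0], 1.0, num_queries=100)
ratio = baseline / candidate  # how many times less energy per query
```

The ratio is only meaningful if both systems run the same queries on disclosed hardware. Changing the workload, batch size, or what counts as idle power can move the number substantially, which is why the measurement protocol matters as much as the headline figure.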

The promise is immense: democratizing high-performance AI by slashing its cost and carbon footprint. However, the burden of proof is on the researchers to provide the technical details that would allow the community to assess the claim's validity and scope.

gentic.news Analysis

This announcement lands in a period of intense focus on AI efficiency. Our previous coverage, such as "Mamba-2 State Space Model Claims 5x Training Speed-Up Over Transformers" (December 2025), highlighted architectural innovations targeting the fundamental computational bottlenecks of the Transformer. The 100x claim, if rooted in a similar architectural breakthrough, would represent a logical but vastly more aggressive progression along that trendline.

The claim also directly confronts the central economic tension in AI today. As we noted in "Cloud AI Inference Costs Are Stalling Startup Innovation" (February 2026), the operational expense of running large models is a major barrier. A verified 100x efficiency improvement would not just be a technical curiosity; it would be an existential threat to current cloud pricing models and a massive boon for on-device AI applications. It aligns with the commercial goals of entities like Apple, which has consistently prioritized on-device performance and efficiency for its ML features, and could disrupt the market leverage of cloud providers whose business relies on high compute throughput.

However, extraordinary claims require extraordinary evidence. The history of AI research is littered with claims of "breakthrough efficiency" that did not generalize beyond narrow, cherry-picked benchmarks. The community will be watching closely for a preprint on arXiv or a presentation at a major conference like NeurIPS that provides the necessary rigor for evaluation. Until then, it represents a highly ambitious target for the field rather than a confirmed new state-of-the-art.

Frequently Asked Questions

What does "100x less energy" mean in practice?

It likely means the new system consumes 1% of the electrical energy required by a baseline model to perform the same task (e.g., generate a 500-word article or answer 100 quiz questions). This could translate to running a model on a laptop that currently requires a server-grade GPU, or reducing a data center's AI compute power bill from $10 million to $100,000.
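The arithmetic behind that example is simple enough to check directly. The sketch below uses a hypothetical helper, `scaled_cost`, and assumes the bill scales linearly with energy consumed, which ignores fixed costs such as hardware and facilities.

```python
def scaled_cost(baseline_cost_usd: float, efficiency_gain: float) -> float:
    """Cost after an efficiency gain, assuming cost is proportional to energy."""
    if efficiency_gain <= 0:
        raise ValueError("efficiency_gain must be positive")
    return baseline_cost_usd / efficiency_gain


if __name__ == "__main__":
    # The article's illustrative figures: a $10 million bill and a 100x gain.
    print(scaled_cost(10_000_000, 100))  # 100000.0
```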

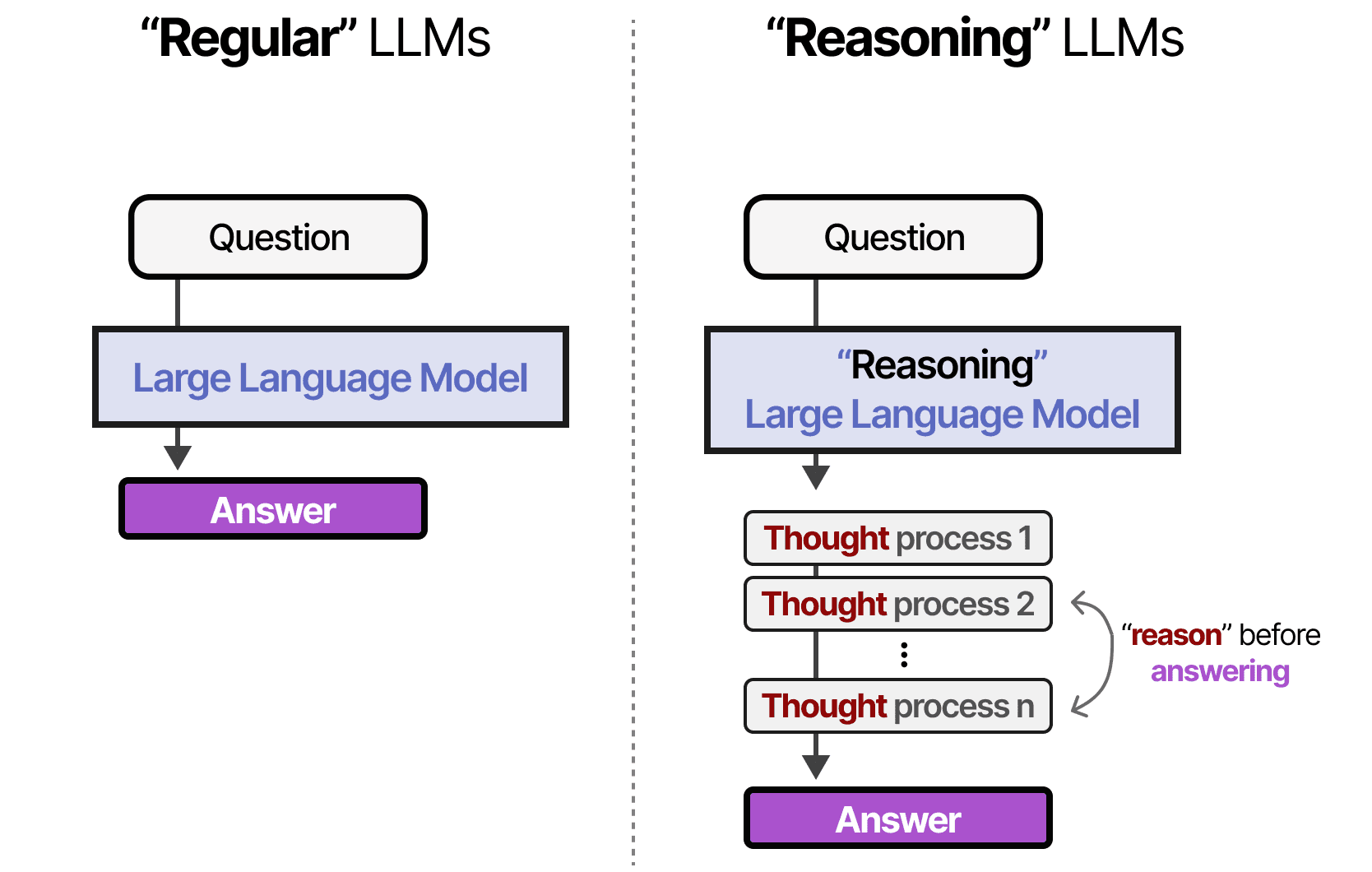

How can an AI model be more accurate while using less energy?

This challenges the common accuracy-efficiency trade-off. Possible methods include a fundamentally more efficient algorithm that requires fewer, more meaningful computations (e.g., selective state spaces, improved sparse attention), or a novel training regimen that produces a vastly more compact yet capable model representation. The claim implies a discovery that decouples parameter count and FLOPs from predictive performance.

Who are the researchers behind this claim?

The initial social media post does not identify the research team or their affiliation. Validating this claim requires knowing the authors and their institution to assess their credibility and track record in efficient ML research.

When will we know if this claim is real?

The claim will move from speculation to credible fact once a peer-reviewed paper or a detailed technical report is published with full methodology, code, and reproducible benchmarks. The community typically expects this within weeks of such a prominent announcement. Follow-up posts from the original thread author or citations in other technical discussions may provide earlier clues.