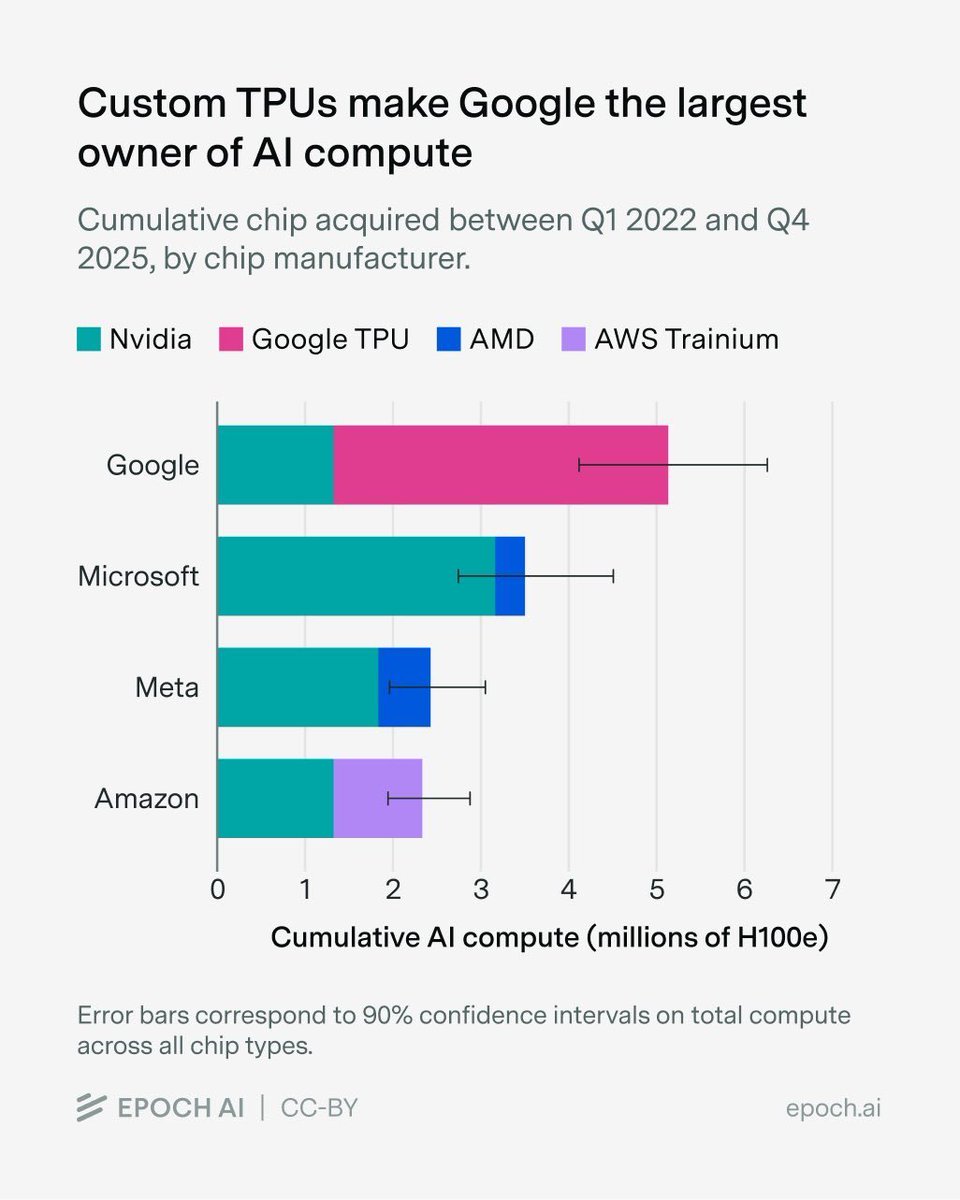

Anthropic has announced a major infrastructure partnership, signing an agreement with Google and Broadcom to secure "multiple gigawatts" of next-generation Tensor Processing Unit (TPU) capacity. This capacity is slated to come online starting in 2027 and will be dedicated to training and serving future frontier iterations of the Claude AI model family.

The announcement, made in a post on X, signals a significant long-term commitment to Google's custom AI accelerator hardware. Financial terms, exact power figures, and technical specifications of the "next-generation" TPUs were not disclosed, but the scale implied by "multiple gigawatts" is substantial. For context, a single gigawatt could plausibly power several hundred thousand to more than a million high-end AI accelerators running concurrently. The exact number depends on each chip's power draw and on the facility's overhead for host servers, networking, and cooling.

The Deal

The core of the announcement is a tripartite agreement:

- Anthropic: The AI safety and research company is the end user and will use the compute to train and serve its Claude models.

- Google: Provides the cloud infrastructure and the next-generation TPU systems.

- Broadcom: Likely involved as the co-design partner and supplier of the TPU chips themselves, continuing its long-standing work with Google on custom silicon.

The deal locks in supply for Anthropic years in advance, a critical move in an industry where advanced AI hardware is a scarce and fiercely contested resource. The 2027 start date indicates these are not currently deployed systems but a future generation of Google's AI accelerator roadmap.

Strategic Context: The Compute Arms Race

This agreement deepens the existing strategic partnership between Anthropic and Google. In late 2023, Google reportedly agreed to invest up to $2 billion in Anthropic, with $500 million upfront and the remainder over time. A core component of that partnership was Anthropic's agreement to use Google Cloud's TPU v5e and v5p accelerators, in addition to NVIDIA GPUs, for model training and inference.

The new multi-gigawatt deal for next-gen hardware extends this collaboration far into the future. It provides Anthropic with a guaranteed, massive-scale compute pathway independent of the volatile GPU market, dominated by NVIDIA. For Google, it secures a flagship, frontier AI lab as a marquee customer for its proprietary silicon, validating its TPU roadmap and cloud AI strategy against competitors like Microsoft Azure (closely tied to OpenAI) and AWS.

What This Means for Anthropic's Roadmap

Specialized compute at this scale directly supports Anthropic's pursuit of "frontier" models. The company has consistently pushed toward larger training runs and more capable models, from Claude 3 Opus to Claude 3.5 Sonnet. Securing next-generation TPU capacity for 2027 and beyond is a prerequisite for the still larger training runs expected for future model generations.

It also suggests a continued bet on hardware-software co-design. Anthropic will likely work closely with Google and Broadcom to ensure that its model architectures and internal training frameworks are optimized for the upcoming TPU generation, which could yield efficiency gains over more general-purpose hardware.

gentic.news Analysis

This is both a defensive and an offensive maneuver in the high-stakes AI infrastructure war. Defensively, it guarantees Anthropic a seat at the table for the most advanced AI chips coming out of the Google-Broadcom pipeline, insulating it from supply shortages. Offensively, it provides the raw computational horsepower needed to compete with OpenAI's massive Azure-backed supercomputers and xAI's growing clusters of NVIDIA H100s and newer GPUs.

The 2027 timeline is particularly telling. It suggests that the planning horizon for frontier models is now measured in years, not months. This capacity is unlikely to train the models Anthropic ships in the near term; it is more plausibly aimed at generations several releases out. It reflects a maturing industry in which strategic capital allocation matters as much as algorithmic breakthroughs.

This move further cements the emerging oligopoly in frontier AI. The prohibitive cost and scarcity of cutting-edge compute mean that only companies with deep-pocketed hyperscaler partnerships—OpenAI with Microsoft, Anthropic with Google, and potentially xAI with Oracle/AWS—can realistically compete at the very largest scales. This agreement makes Anthropic's position in that top tier more secure, but also highlights the increasing barriers to entry for new players without similar partnerships.

Frequently Asked Questions

What are TPUs?

Tensor Processing Units (TPUs) are application-specific integrated circuits (ASICs) developed by Google specifically to accelerate machine learning workloads. They are optimized for the large matrix operations fundamental to neural network training and inference and are a key alternative to NVIDIA's general-purpose GPUs in the AI hardware landscape.
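To make "large matrix operations" concrete, here is a minimal pure-Python sketch (not TPU code) showing that a fully connected neural-network layer reduces to a matrix multiply, and that its cost grows as roughly 2·m·k·n operations. The helper names `matmul`, `dense_layer`, and `matmul_flops` are hypothetical. Real TPU workloads are written in frameworks such as JAX or TensorFlow and compiled by XLA, which maps these multiplies onto the chip's dedicated matrix units.

```python
# Illustrative sketch only: hypothetical helper names, pure Python, no TPU involved.


def matmul(a: list[list[float]], b: list[list[float]]) -> list[list[float]]:
    """Naive (m x k) @ (k x n) matrix multiply."""
    m, k, n = len(a), len(b), len(b[0])
    if any(len(row) != k for row in a):
        raise ValueError("inner dimensions must match")
    return [
        [sum(a[i][p] * b[p][j] for p in range(k)) for j in range(n)]
        for i in range(m)
    ]


def dense_layer(x: list[list[float]], w: list[list[float]]) -> list[list[float]]:
    """A fully connected layer without bias or activation is just x @ w."""
    return matmul(x, w)


def matmul_flops(m: int, k: int, n: int) -> int:
    """Approximate operation count: each of the m*n outputs needs k multiplies and about k adds."""
    return 2 * m * k * n


# A batch of one input with 2 features, projected to 3 outputs.
x = [[1.0, 2.0]]
w = [[1.0, 0.0, 2.0],
     [0.0, 1.0, 3.0]]
print(dense_layer(x, w))
print(matmul_flops(4096, 4096, 4096))
```

The second figure is the point: a single multiply of two 4096 × 4096 matrices already takes about 137 billion arithmetic operations, and training repeats such multiplies an enormous number of times, which is why hardware built around matrix units pays off.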

Why is "multiple gigawatts" a significant scale?

In high-performance computing, power consumption is a rough proxy for computational capacity. A single modern AI accelerator chip can draw roughly 500 to 1,000 watts, before counting its share of host servers, networking, and cooling. "Multiple gigawatts" (one gigawatt is 1 billion watts) therefore implies infrastructure capable of running on the order of a million or more such chips simultaneously. Capacity at this scale is intended for very large training runs and for serving models to a large user base.
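The arithmetic behind this estimate is simple enough to sketch. The function below is a hypothetical back-of-envelope helper. It assumes an all-in overhead factor of 1.5× on each chip's own power draw to cover host servers, networking, and cooling; that factor is an illustrative assumption, not a figure from the announcement.

```python
# Back-of-envelope sketch: how many accelerators a given power budget can feed.
# The default overhead factor is an illustrative assumption, not a disclosed figure.

GIGAWATT = 1_000_000_000  # watts


def estimate_accelerators(facility_watts: float, chip_watts: float,
                          overhead_factor: float = 1.5) -> int:
    """Return a rough accelerator count for a facility power budget.

    overhead_factor scales each chip's own draw to cover its share of
    host CPUs, networking, and cooling (assumed; real sites vary).
    """
    if chip_watts <= 0 or overhead_factor < 1:
        raise ValueError("chip_watts must be positive and overhead_factor >= 1")
    watts_per_chip_all_in = chip_watts * overhead_factor
    return int(facility_watts // watts_per_chip_all_in)


for gigawatts in (1, 2):
    for chip_watts in (500, 1000):
        count = estimate_accelerators(gigawatts * GIGAWATT, chip_watts)
        print(f"{gigawatts} GW, {chip_watts} W chips: ~{count:,} accelerators")
```

Under these assumptions, each gigawatt supports roughly 670,000 to 1.3 million chips, so two gigawatts lands in the low millions. Changing `chip_watts` or `overhead_factor` shifts the result proportionally; the point is the order of magnitude, not a precise count.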

How does this affect the competition between Anthropic and OpenAI?

It helps level the playing field in long-term compute access. OpenAI has long relied on massive supercomputers that Microsoft built for it on Azure; this deal gives Anthropic a comparably scaled, dedicated pipeline via Google Cloud. The competition will increasingly hinge on which team can use this raw compute more effectively through better algorithms, data, and training techniques.

Does this mean Anthropic will stop using NVIDIA GPUs?

Not necessarily. The announcement states that this TPU capacity is for training and serving "frontier" Claude models. Anthropic's current infrastructure is almost certainly a heterogeneous mix of accelerators, including Google TPUs, Amazon's Trainium chips, and NVIDIA GPUs such as the H100, and the company will likely keep matching hardware to specific workloads. This deal ensures that it has a leading-edge, non-NVIDIA option for its largest training runs.