A new investigation from The New Yorker magazine has shed light on the internal dynamics and pivotal moments at OpenAI, focusing on the relationship between CEO Sam Altman and co-founder Ilya Sutskever. The report, highlighted by AI commentator Rohan Pandey, provides previously undisclosed context surrounding Sutskever's departure from the company in mid-2024.

What the Investigation Reveals

The core of the investigation appears to center on the divergent philosophies between Altman and Sutskever regarding the pace of AI development versus safety considerations. While specific quotes from the full article were not included in the social media post, the framing suggests the report details a significant clash over OpenAI's direction following the release of its most powerful models.

Ilya Sutskever, who co-founded OpenAI in 2015 and served as its Chief Scientist, was long considered the spiritual and technical leader of the company's research efforts, particularly in alignment and safety. His departure marked a major shift in OpenAI's leadership and signaled a potential change in how the company balances aggressive product development with its original mission to ensure artificial general intelligence (AGI) benefits all of humanity.

Context of the Departure

Sutskever's exit followed a period of intense scrutiny and internal turmoil at OpenAI, including the November 2023 board saga where Sutskever initially voted to oust Altman before later expressing regret. He subsequently took a less public role before formally leaving the company in 2024 to launch his own AI safety-focused venture, Safe Superintelligence Inc. (SSI).

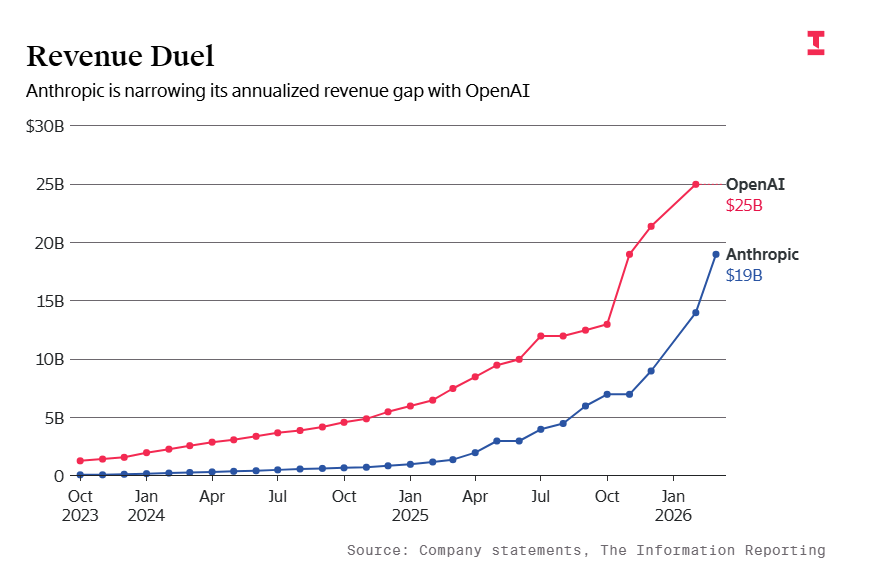

The New Yorker investigation likely connects these public events to deeper, ongoing tensions about resource allocation, research priorities, and the commercial pressures facing OpenAI as it competes with rivals like Google, Anthropic, and xAI.

Broader Implications for AI Governance

This investigation enters a crowded field of narratives about OpenAI's internal culture. It follows other major profiles and books that have attempted to dissect the unique pressures on a company trying to commercialize cutting-edge AI while remaining under nonprofit governance, with the board of the nonprofit OpenAI, Inc. controlling the for-profit subsidiary OpenAI Global, LLC.

The details about Sutskever's exit are particularly relevant for the AI safety community, which has watched key researchers depart major labs over concerns that safety is becoming a secondary priority to capability and product milestones. Sutskever's subsequent founding of SSI, with its explicit "one goal, one product" mission to build safe superintelligence, is a direct continuation of this philosophical stance.

gentic.news Analysis

This investigation, while journalistic rather than technical, touches on the most critical governance question in modern AI: who controls the trajectory of the most powerful models? The reported clash between Altman and Sutskever is a microcosm of the field's central tension. As we covered in our analysis of Sutskever's SSI launch, his departure from OpenAI was not just a personnel change but a split in the company's original DNA: the pragmatic product builder versus the cautious alignment researcher.

The timeline here is crucial. Sutskever's reduced role began after the November 2023 board crisis, a period we analyzed as a failed governance correction. The New Yorker report likely provides the connective tissue between that public drama and the private disagreements over technical direction. This aligns with reporting from other outlets suggesting that following GPT-4's release, internal debates intensified about how quickly to push toward GPT-5 and what safety benchmarks were necessary.

For technical leaders building with OpenAI's APIs, this is more than corporate gossip. The philosophical leanings of a lab's leadership directly influence model behavior, release thresholds, and safety mitigations. A lab prioritizing caution might release more heavily restricted models with stricter usage policies. Sutskever's exit, and the ascendancy of a more product-focused leadership, may partially explain the aggressive rollout of features like GPT-4o's real-time voice and the rapid iteration of ChatGPT. The market has rewarded this pace, and OpenAI's valuation has skyrocketed, but the safety community continues to voice concerns, a dynamic we explored in our piece on AI scientist departures.

Frequently Asked Questions

What was Ilya Sutskever's role at OpenAI?

Ilya Sutskever was a co-founder of OpenAI and served as its Chief Scientist. He was one of the most influential figures in deep learning, having co-authored the seminal AlexNet paper and worked under Geoffrey Hinton. At OpenAI, he led key research efforts, particularly on AI alignment and safety, and was instrumental in the development of models like GPT-3 and GPT-4.

Why did Ilya Sutskever leave OpenAI?

While the full details are complex, the core reason suggested by earlier reports and by the framing of this new investigation is a fundamental disagreement with CEO Sam Altman over the balance between rapid AI development and safety precautions. Sutskever reportedly advocated for a more cautious approach that prioritized alignment research, while Altman pushed for faster product deployment and capability scaling. This philosophical clash culminated in Sutskever's departure in 2024.

What is Ilya Sutskever working on now?

Following his exit from OpenAI, Ilya Sutskever co-founded a new company called Safe Superintelligence Inc. (SSI). The company's stated mission is singular: to build a safe superintelligent AI. It aims to avoid productization distractions and focus entirely on the technical challenge of alignment, positioning itself as a direct counterpoint to the product-focused roadmaps of labs like OpenAI and Anthropic.

How does this affect OpenAI's current direction?

Sutskever's departure solidified a shift in OpenAI's leadership toward a more aggressive product and commercialization strategy. The company has since released iterative model updates like GPT-4 Turbo and GPT-4o, expanded enterprise offerings, and pursued partnerships with media and tech firms. The internal safety team's influence appears to have been restructured, with oversight moving to a new Safety and Security Committee led by board members, including Altman.