In a recent interview with Axios, OpenAI CEO Sam Altman made a direct, quantifiable claim about the current impact of AI on software development: "On the kind of knowledge-work side, I suspect that current models are maybe making people twice as productive, or 3 times as productive, as they used to be as coders."

This statement moves beyond the common hype of "AI will change everything" and provides a specific multiplier for the productivity gains he's observing. Altman extrapolated this trend to a near-future scenario where the scaling of individual capability meets abundant compute: "And maybe you'll start to hear people say, 'I'm able to do the work of a whole team with these tools.' So if it's me and x hundred GPUs, we can do the work of a whole software team."

He summarized this shift with a concise phrase: "AI is moving from 'copilot' to 'company.'" The vision is one person, powerful models, and significant compute resources producing output equivalent to an entire team.

What This Means in Practice

Altman's comments point to two distinct phases:

- The Current State (2-3x Multiplier): This aligns with widespread anecdotal reports from developers using tools like GitHub Copilot, Cursor, or ChatGPT for coding. The gain isn't just in writing boilerplate faster, but in debugging, documentation, refactoring, and exploring new libraries or frameworks.

- The Emerging Frontier ('Company' Scale): This suggests a more autonomous future where a single developer can orchestrate AI agents to handle discrete, complex components of a project—frontend, backend, testing, deployment—effectively acting as a project manager and architect for a team of AI "workers."

The Competitive and Economic Context

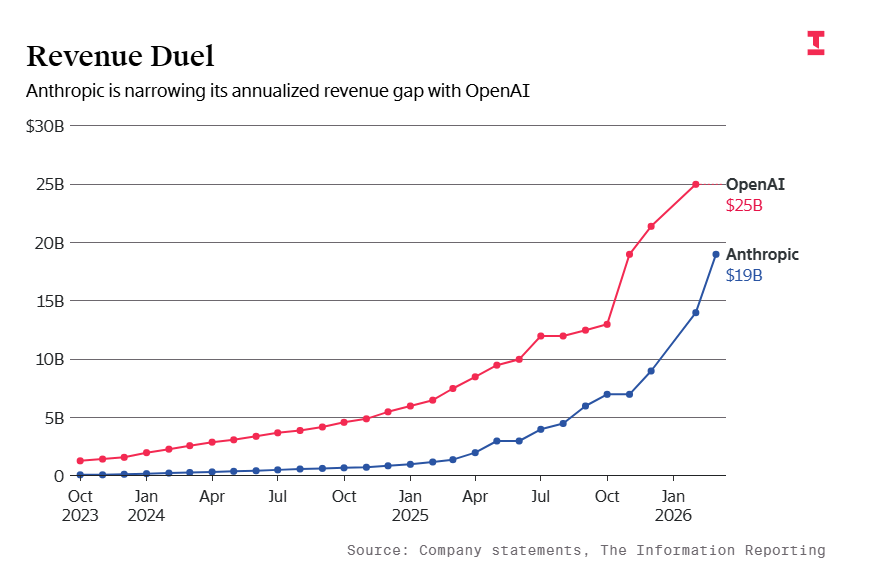

This vision directly impacts the business case for AI adoption. A 2-3x productivity gain fundamentally alters project timelines, staffing requirements, and startup economics. It also raises immediate questions about the valuation and role of traditional software engineering teams. If one developer with AI tools can match the output of two or three developers, as the 2-3x figure implies, the economic incentive for companies to adopt these tools at scale becomes overwhelming.

The phrase "me and x hundred GPUs" also underscores the shifting cost structure. Spending may increasingly shift from human payroll to compute budgets, a trend already visible in leading AI labs and a core thesis behind the massive investment in GPU infrastructure by cloud providers and private companies.

gentic.news Analysis

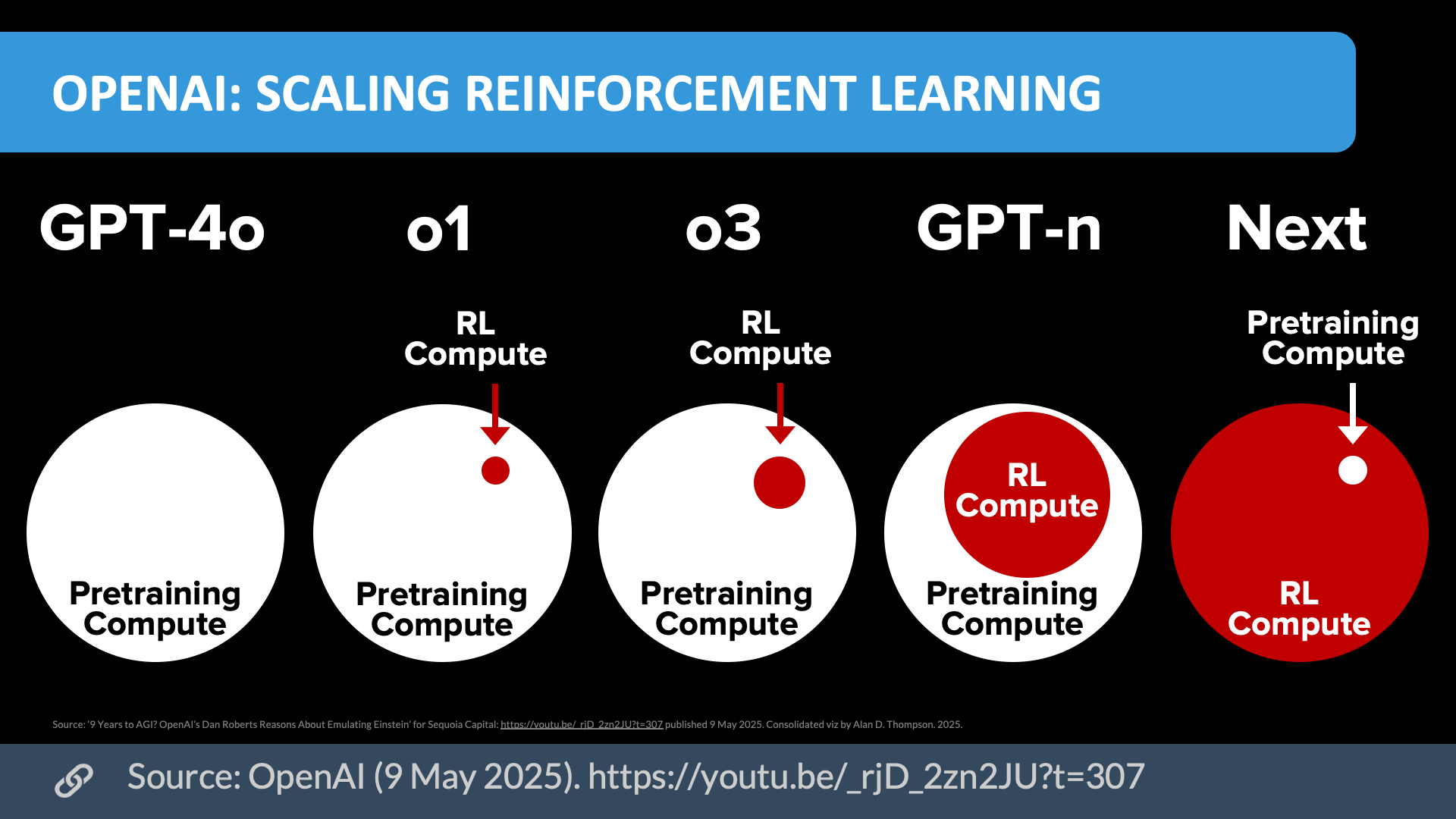

Altman's 2-3x productivity claim is notable for its specificity and its source. As OpenAI's CEO, his perspective is informed by both internal use of OpenAI's own models, such as GPT-4, for coding, and by feedback from millions of developers using ChatGPT and the API. Although he hedged it as something he "suspects," the claim reads less as a prediction and more as a report from the field, suggesting these multipliers are already being realized by advanced users.

This interview follows a clear pattern in Altman's recent public commentary, where he has consistently emphasized AI as a force multiplier for individual capability rather than just a corporate efficiency tool. This aligns with the thesis behind tools like Devin from Cognition AI, an autonomous AI software engineer we covered in March 2025, which aims to handle entire development workflows from scratch. While Devin targets full autonomy, Altman's "copilot to company" framework describes a spectrum where human oversight remains central but scope expands dramatically.

The economic implication, substituting compute costs for human team costs, also connects directly to the ongoing scarcity and geopolitical tension around high-end GPUs. If Altman's vision holds, demand for Nvidia's H100/B100 and equivalent chips will extend far beyond model training labs and into every software company and independent developer looking to leverage this "company-in-a-box" productivity. This could further strain supply and intensify the competition between cloud providers (AWS, Google Cloud, Microsoft Azure) and sovereign AI initiatives to secure capacity.

Finally, this shifts the competitive moat for software companies. The barrier to building a product may lower significantly (one founder with AI), but the barrier to building a great, scalable, and maintainable product may become even more dependent on high-quality data, robust evaluation frameworks, and sophisticated AI orchestration—areas where established tech giants still hold a considerable advantage.

Frequently Asked Questions

How does Sam Altman's 2-3x productivity claim compare to actual research?

Independent academic and industry studies on AI pair programmers have shown a wide range of results, typically between a 10% and 55% increase in task completion speed or code quality. Altman's 2-3x figure corresponds to a 100-200% increase, which sits well above that range rather than at its upper end. It likely reflects the productivity of expert users leveraging the most advanced models (like GPT-4o or Claude 3.5 Sonnet) across an entire development workflow, not just within a controlled study for a single task.
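To make the comparison concrete, the short sketch below converts between "N times as productive" multipliers and percentage increases. The function names are illustrative only, and the inputs are simply the figures quoted above (2x and 3x from Altman, 10% and 55% from the studies), not new data.

```python
def multiplier_to_percent_increase(multiplier: float) -> float:
    """Convert an 'N times as productive' multiplier to a percent increase."""
    return (multiplier - 1.0) * 100.0


def percent_increase_to_multiplier(percent: float) -> float:
    """Convert a percent increase to an equivalent multiplier."""
    return 1.0 + percent / 100.0


# Altman's quoted multipliers, expressed as percent increases.
altman_percent = [multiplier_to_percent_increase(m) for m in (2.0, 3.0)]

# The study range quoted above, expressed as multipliers (rounded for display).
study_multipliers = [round(percent_increase_to_multiplier(p), 2) for p in (10.0, 55.0)]

print("Altman, percent increase:", altman_percent)
print("Studies, multiplier:", study_multipliers)
```

Read this way, the upper study figure of 55% is roughly a 1.55x multiplier, still short of the 2x lower bound in Altman's statement.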

What does "AI is moving from 'copilot' to 'company'" actually mean?

"Copilot" implies an AI assistant that helps a human with specific tasks (completing a line, suggesting a fix). "Company" implies an AI-augmented system that can take on the breadth of responsibilities typically distributed across a team—planning, coding, reviewing, testing, and deploying. The human transitions from a hands-on coder to a strategic director and prompt engineer for a suite of AI agents.

What tools exist today that enable this "company" level of productivity?

No single tool fully realizes this vision yet. However, developers are combining several: AI-powered IDEs (Cursor, Windsurf), autonomous coding agents (Devin, SWE-agent), cloud development environments (GitHub Codespaces, Gitpod), and orchestration frameworks (LangChain, LlamaIndex) to automate larger parts of the stack. The trend is toward tighter integration of these capabilities.

Does this mean software engineering jobs are at immediate risk?

Not immediately, but the role is evolving rapidly. The demand is shifting from pure code-writing proficiency towards skills in AI prompt engineering, system architecture, code review for AI-generated output, and managing AI development workflows. Engineers who leverage AI to amplify their impact will be highly valued; those whose skills are limited to tasks AI can now reliably automate may face pressure.