OpenAI CEO Sam Altman has issued a stark warning in a new interview, stating that artificial superintelligence (ASI) is "so close" and its potential impact so "mind-bending" and disruptive that the United States must forge a new social contract to manage the transition. The remarks, made in a half-hour interview with Axios, frame the coming AI era as a societal shift on par with the Progressive Era of the early 1900s and the New Deal during the Great Depression.

The Core Warnings

Altman's central thesis is that the leap to superintelligence—AI systems that surpass human intelligence across all domains—is not a distant science-fiction scenario but a near-term reality with profound, immediate consequences. He outlined several critical risks:

- Widespread Job Loss: The automation of cognitive labor is expected to be far more extensive than in previous industrial revolutions, potentially displacing vast swaths of the workforce.

- Unprecedented Cyber Threats: Altman specifically warned that "soon-to-be-released AI models could enable a world-shaking cyberattack this year." He stated, "I think that's totally possible. I suspect in the next year, we will see significant threats we have to mitigate from cyber."

- Social Upheaval and Loss of Control: The interview highlighted risks of societal instability and the potential for creating autonomous machines that humans cannot reliably control.

The Proposed Solution: A New Social Contract

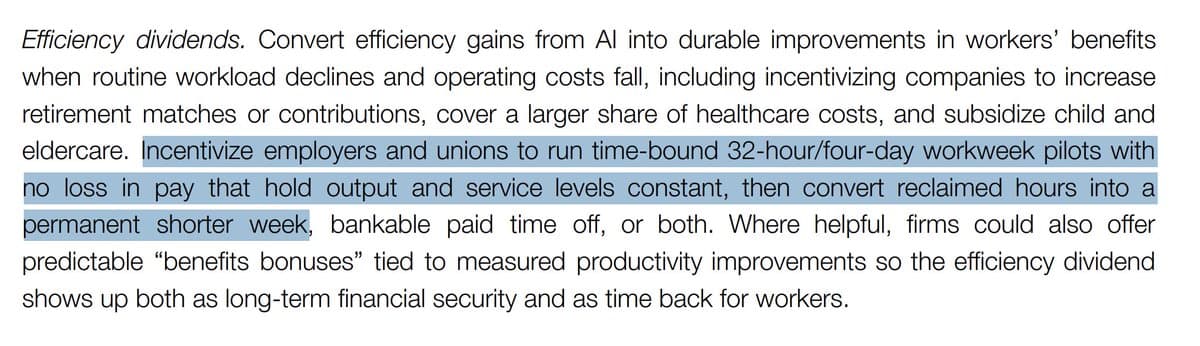

Facing these systemic risks, Altman argues that incremental policy adjustments will be insufficient. Instead, he advocates a comprehensive reimagining of the relationship between citizens, the economy, and the state: a "new social contract." The quoted comments do not spell out the details, but the historical analogies suggest the policies could involve major reforms to economic redistribution, job retraining, social safety nets, and governance structures, all aimed at distributing the benefits of AI and mitigating its harms.

The call echoes themes Altman has explored previously, including his advocacy for universal basic income (UBI) experiments and his involvement in initiatives like Worldcoin, which aims to create a global identity and financial network.

The Timeline: "This Year" and "The Next Year"

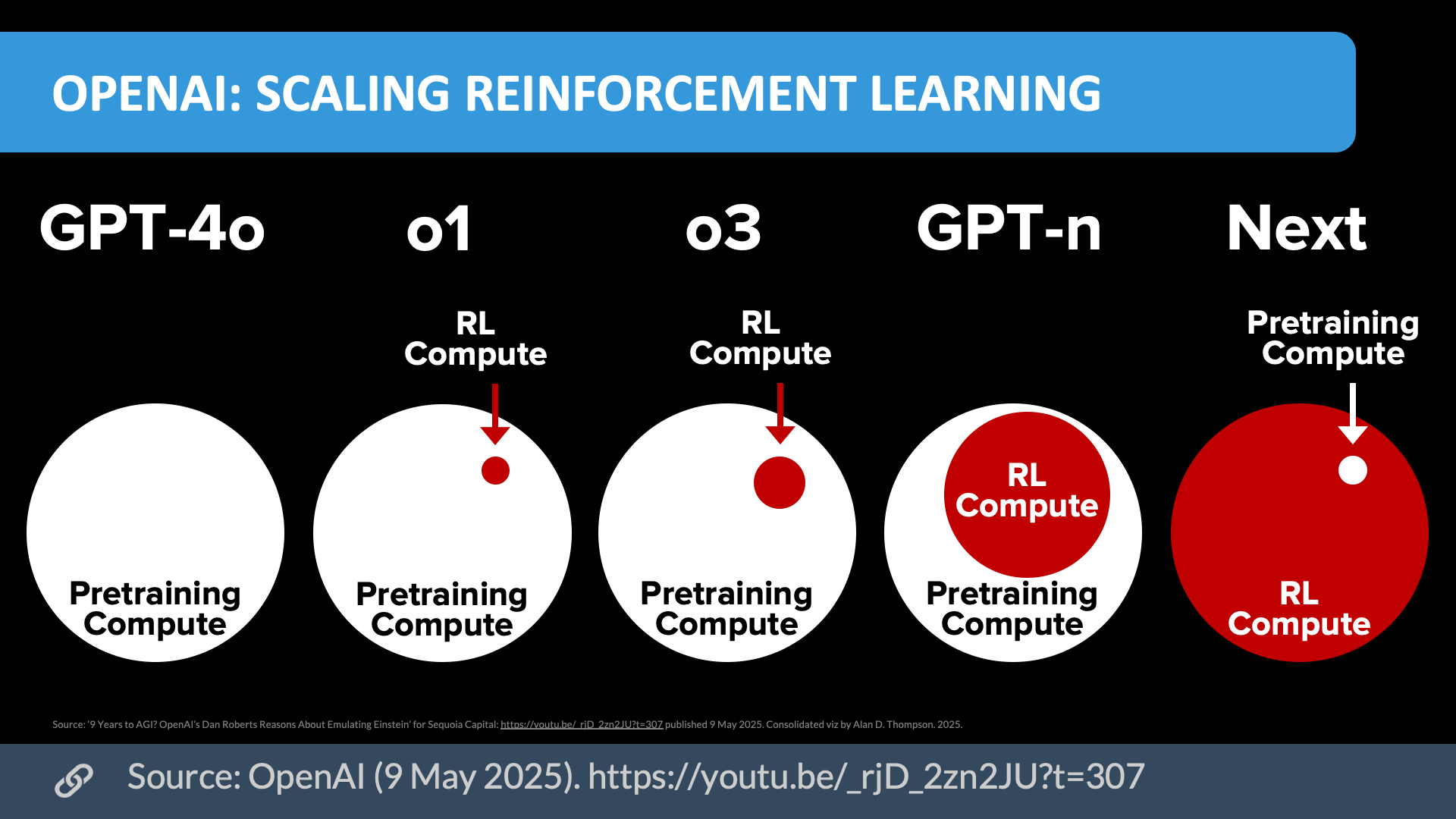

The most concrete and urgent part of Altman's warning concerns cybersecurity. His prediction that new AI models could enable a catastrophic cyberattack within the year ties the abstract risk of superintelligence directly to a tangible, near-term danger. It also implies that he expects models on the immediate horizon, potentially including OpenAI's own next-generation systems, to represent a qualitative leap in offensive cyber capabilities.

gentic.news Analysis

Altman's public shift towards emphasizing near-term, high-stakes disruption is a significant rhetorical escalation. For years, the AI safety debate has oscillated between focusing on long-term existential risk and near-term ethical issues like bias. Altman is now squarely placing a high-probability, high-impact disruption timeline within the current business and political cycle.

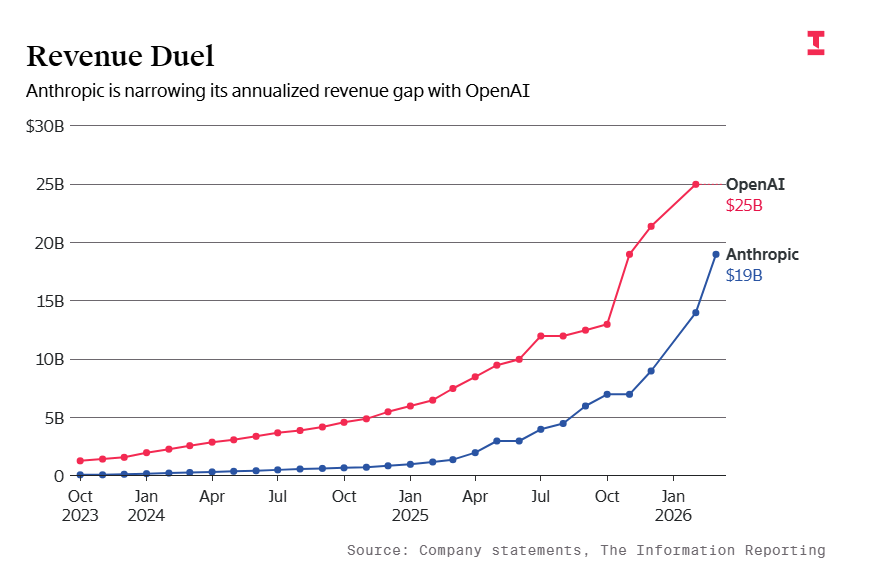

This aligns with a broader trend of escalating statements from AI lab leaders. In late 2025, Anthropic CEO Dario Amodei testified before Congress about national security risks posed by advanced AI, and Google DeepMind's Demis Hassabis has frequently discussed the accelerated pace of capability gains. Altman's interview, however, is notable for its direct invocation of historical social reforms and its specific warning about the next year.

The cyberattack warning is particularly credible given the trajectory of large language models (LLMs). As we covered in our analysis of Claude 3.5 Sonnet's coding capabilities, state-of-the-art models are already proficient at writing code, including exploit code. The next step (models that can autonomously chain exploits, perform advanced reconnaissance, and adapt in real time to cyber defenses) would indeed lower the barrier to entry for sophisticated attacks. This creates a paradox: the same AI capabilities being developed to defend systems, such as automated threat detection and patch generation, could also be weaponized by attackers.

Altman's call for a new social contract is ambitious but faces immense political hurdles. It raises immediate questions: Is he advocating for a government-led initiative, a private-public partnership, or a broader cultural movement? How does this align with OpenAI's own corporate structure and profit motives? The interview seems designed to provoke a policy response, shifting the Overton window on what constitutes adequate preparation for AGI/ASI.

Frequently Asked Questions

What does Sam Altman mean by a "new social contract"?

Altman did not spell out specifics in the quoted remarks, but the term points to a fundamental restructuring of societal systems (taxation, wealth distribution, employment, and social safety nets) to manage the economic displacement and concentration of power expected from artificial superintelligence. He compares its scale to the Progressive Era (which brought antitrust laws and labor reforms) and the New Deal (which created Social Security and major public works programs).

What AI models is Altman referring to that could enable a cyberattack?

While not named, the "soon-to-be-released" models likely refer to the next generation of large language models and agentic AI systems from leading labs like OpenAI, Anthropic, and Google. These models are expected to have significantly improved reasoning, planning, and tool-use capabilities, which could be directed towards discovering software vulnerabilities, writing malicious code, and orchestrating complex cyber operations with minimal human guidance.

Is this a change from OpenAI's previous messaging?

Yes, in tone and immediacy. While OpenAI's charter mentions managing AGI's societal impact, public communications have more often balanced optimism about AI's benefits with abstract long-term safety concerns. This interview emphasizes disruptive, near-term concrete risks (job loss, cyberattacks) and frames the response as a historic societal overhaul, marking a more urgent and politically charged stance.

How likely is a "world-shaking" AI-enabled cyberattack in the next year?

Expert opinion is divided. Many cybersecurity researchers agree that AI is already lowering the barrier for certain attacks (phishing, malware generation). A "world-shaking" attack—perhaps on critical infrastructure like the power grid or financial systems—would require not just AI capability but also access and intent. Altman's warning suggests he believes the capability threshold for such an attack will be crossed by upcoming models, making the risk significantly higher in 2026-2027.