A Yale University professor has instituted a stark policy shift in response to the pervasive influence of generative AI on student work: all writing must now be done by hand, in person, under direct supervision.

The decision, highlighted in a social media post, stems from the professor's observation that student submissions had become homogenized. "Everyone sounds polished. No one sounds original," the professor noted, identifying the hallmarks of AI-assisted or AI-generated text. To re-establish a baseline of authentic, individual thought, the professor now requires students to complete assignments using pen and paper while being watched, effectively removing the possibility of AI intervention.

This policy is a direct, low-tech response to a high-tech problem that is consuming academic institutions globally. While tools like GPT-4, Claude, and Gemini offer powerful assistance, they also enable the production of competent but generic prose that lacks a distinct student voice or original critical engagement. The professor's solution bypasses the arms race of AI detection software—which has proven unreliable and prone to false accusations—in favor of a physical guarantee of authorship.

The Core Problem: The Loss of the "Original Voice"

The professor's primary concern isn't merely plagiarism in the traditional sense, but the erosion of intellectual development. The process of struggling with syntax, structuring an argument, and finding one's own voice is a core component of learning. When AI generates a first draft (or a final submission), that foundational cognitive struggle is outsourced. The result, as observed at Yale, is a classroom where every paper is competent, polished, and eerily similar—a scenario that makes meaningful evaluation and personalized feedback nearly impossible.

A Micro-Solution with Macro Problems

While the in-person handwriting method solves the verification problem for this single classroom, it highlights a systemic crisis. As one observer of the policy mused, "I wonder what happens to the millions of classrooms that don't have him as a professor."

Most large lecture courses, online degree programs, and under-resourced schools cannot implement such labor-intensive, proctored writing sessions. The solution does not scale. It creates a two-tier system: small seminars where authenticity can be physically enforced, and everything else, where the provenance of work remains in doubt. Furthermore, it rolls back the clock on accessibility, disadvantaging students with learning differences who rely on digital tools.

The Failing Arms Race of AI Detection

This move also serves as an indictment of current technological solutions. Universities have invested in AI detection tools like Turnitin's AI writing indicator. However, these tools have been widely criticized for low accuracy, especially with non-native English writing, leading to student disputes and a breakdown of trust. When detection fails, professors are left with only a stylistic suspicion—a feeling that the work is "too polished" or lacks a personal voice—which is insufficient grounds for academic sanction. The Yale professor's policy is a retreat from this unreliable technological battlefield to the pre-digital certainty of physical observation.

gentic.news Analysis

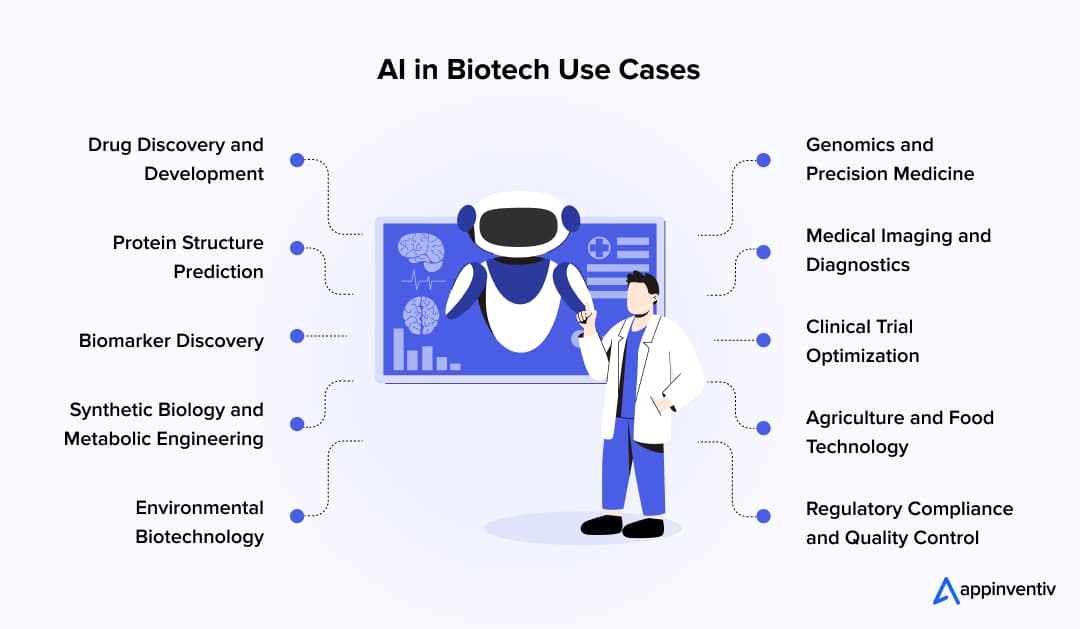

This incident is not an isolated classroom policy but a symptom of the profound disruption generative AI has caused to foundational assessment models in education. It directly follows the widespread integration of LLMs like OpenAI's GPT-4 and Anthropic's Claude into student workflows since 2023, a trend we documented in our analysis of the "Homework Apocalypse". The failure of detection tools has created a vacuum of trust, forcing educators to devise their own, often extreme, countermeasures.

This aligns with a broader trend of institutions grappling with policy formulation. Harvard, for instance, has taken a decentralized approach, letting individual schools set rules, leading to a patchwork of permissions and bans. The Yale case represents the logical endpoint of a restrictive policy: if you cannot trust the digital submission, you must witness the physical act of creation. This development contradicts the optimistic narrative pushed by some EdTech startups that AI will seamlessly become a "co-pilot" for learning. In reality, as this shows, it is often treated as an unauthorized proxy.

Looking ahead, this forces a critical question: will education bifurcate? One path leads toward a surveillance-heavy model of locked-down browsers, keystroke biometrics, and AI proctoring to preserve traditional assessment forms. The other, more transformative path, which some forward-looking departments are exploring, involves fundamentally reimagining assessment—shifting from graded essays to evaluated processes, oral defenses, in-person discussions, and project-based work that inherently verifies understanding. The Yale professor's handwriting mandate is a stopgap in this turbulent transition, highlighting that the sector is still far from a stable, scalable solution.

Frequently Asked Questions

Why can't AI detection tools solve this problem?

Current AI detectors analyze text for statistical patterns like "perplexity" and "burstiness." However, these patterns can be obscured by lightly editing AI output or using newer AI models specifically trained to evade detection. More critically, these tools have high false positive rates, especially for non-native English speakers, making them ethically and legally problematic for penalizing students. Their unreliability has eroded trust, making professors hesitant to rely on them alone.
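To make those two terms concrete, here is a toy Python sketch. It is not how Turnitin or any commercial detector works: real tools score text with a large language model, whereas this example assumes a simple add-one-smoothed unigram model as a stand-in. "Burstiness" has no single agreed definition, so the sketch assumes one informal proxy, the variation in sentence length. The function names (`unigram_perplexity`, `burstiness`) are hypothetical and chosen only for illustration.

```python
import math
import re
from collections import Counter
from statistics import mean, pstdev


def tokenize(text):
    """Lowercase word tokenizer (illustrative only)."""
    return re.findall(r"[a-z']+", text.lower())


def unigram_perplexity(text, reference_text):
    """Perplexity of `text` under an add-one-smoothed unigram model
    fit on `reference_text`. Lower values mean more predictable text."""
    ref_counts = Counter(tokenize(reference_text))
    total = sum(ref_counts.values())
    vocab_size = len(ref_counts) + 1  # +1 reserves probability for unseen words
    tokens = tokenize(text)
    if not tokens:
        raise ValueError("text contains no tokens")
    log_prob = sum(
        math.log((ref_counts[tok] + 1) / (total + vocab_size)) for tok in tokens
    )
    # Perplexity is exp of the average negative log-probability per token.
    return math.exp(-log_prob / len(tokens))


def burstiness(text):
    """Assumed proxy: coefficient of variation of sentence lengths in words.
    Uniform sentence lengths give 0.0; mixed short and long sentences score higher."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    lengths = [len(tokenize(s)) for s in sentences]
    if len(lengths) < 2 or mean(lengths) == 0:
        return 0.0
    return pstdev(lengths) / mean(lengths)
```

Under these assumptions, a detector-style heuristic would treat low perplexity combined with low burstiness as a weak hint of machine-generated text. The sketch also suggests why light editing can defeat such signals: changing a few word choices or sentence lengths shifts both numbers.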

Is requiring handwritten work a fair solution?

While it guarantees authenticity, it has significant drawbacks. It is not scalable for large classes. It disadvantages students with disabilities or conditions that make handwriting difficult. It also rejects the potential benefits of digital tools for drafting and editing. It is best viewed as a temporary, last-resort measure for specific, high-stakes assignments in small settings rather than a universal solution.

What are universities doing at a policy level?

University policies are currently in flux. Most have updated academic honesty policies to explicitly prohibit submitting AI-generated work as one's own. Enforcement, however, remains the challenge. Some departments are creating "AI-permissive" assignments where use is allowed but must be documented, while others are designing "AI-proof" assessments like in-class essays or oral exams. There is no consensus, leading to the patchwork of approaches seen today.

How might assessments need to change long-term?

Long-term, many educators argue assessments must shift from evaluating a final product (the essay) to evaluating the process and the student's demonstrable understanding. This could mean more:

- In-person presentations and oral examinations (vivas)
- Staged assignments where students submit outlines, drafts, and reflections
- Project-based work with unique, personal outputs
- Collaborative, in-class problem-solving sessions

The goal is to design tasks where the journey of thinking is as important as the destination, making unauthorized AI assistance less relevant or easily spotted.