NVIDIA has spotlighted a series of major technical advances aimed at pushing AI-powered robotics from research labs into real-world deployment. The core strategy emphasizes a simulation-first training paradigm, leveraging synthetic environments to teach robots complex tasks before they ever touch physical hardware. This approach, powered by NVIDIA's Isaac and Jetson platforms, is now seeing scalable application in critical industries including agriculture, energy, and residential settings.

The development signals a maturation of AI robotics, moving beyond controlled demos to systems that must learn faster, adapt to unstructured environments, and perform autonomously. Breakthroughs in foundation models for robotics, high-fidelity synthetic data generation, and efficient edge AI inference are converging to make this possible.

What's New: From Sim to Field

The highlighted advances represent a full-stack approach to AI robotics:

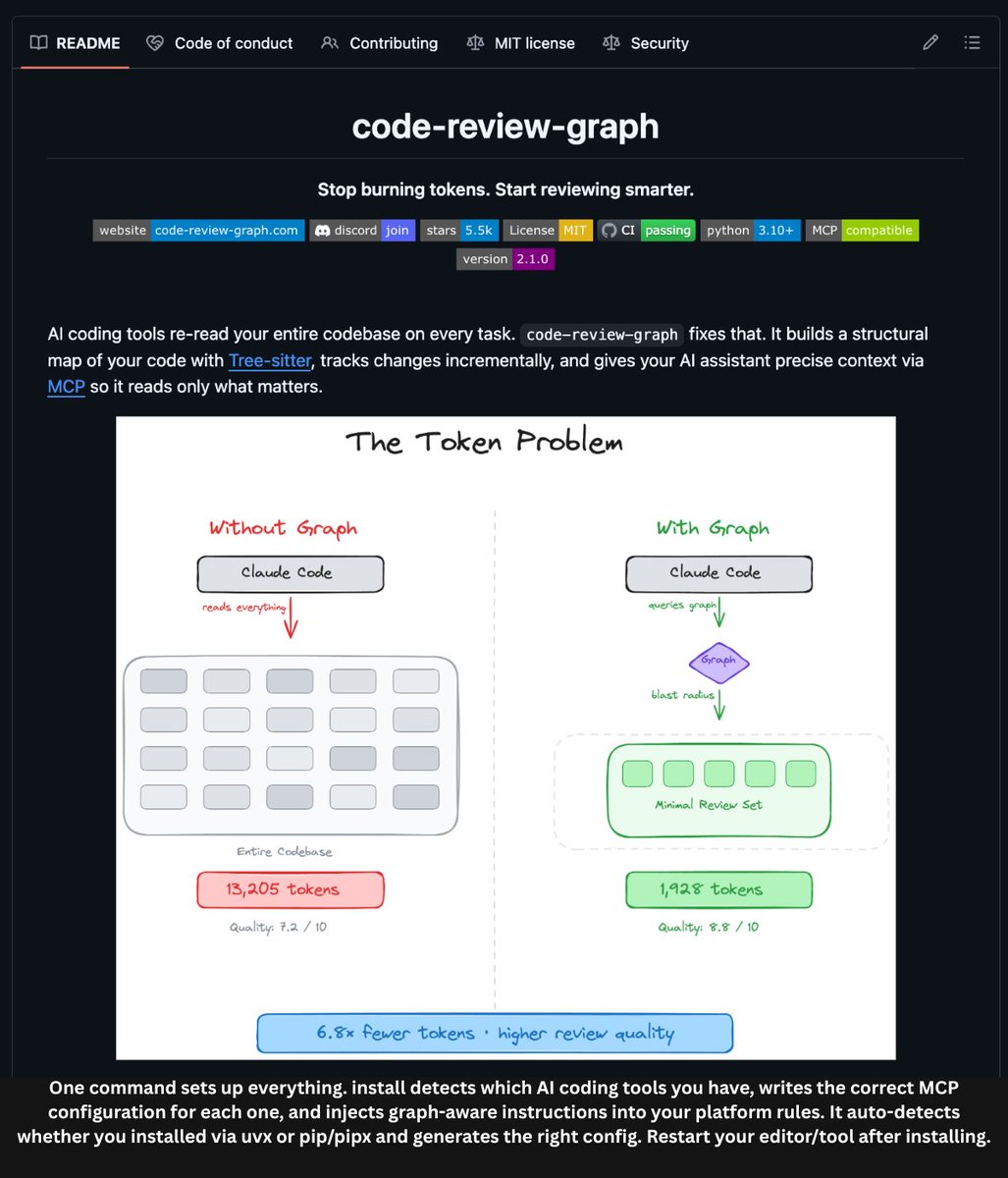

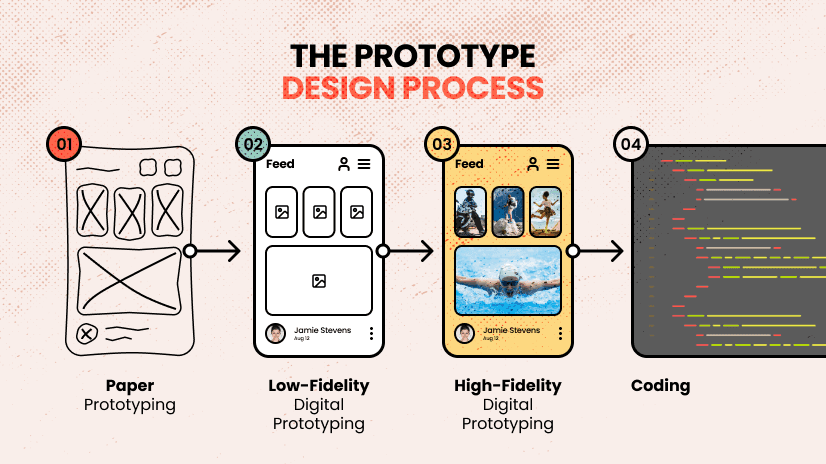

- Simulation-First Training: Instead of training solely on expensive, slow, and potentially dangerous real-world data, robots are primarily trained in NVIDIA's Isaac Sim, a scalable, photorealistic virtual environment. Here, they can practice tasks millions of times, learning physics, perception, and control in an accelerated, parallelized digital twin of the world.

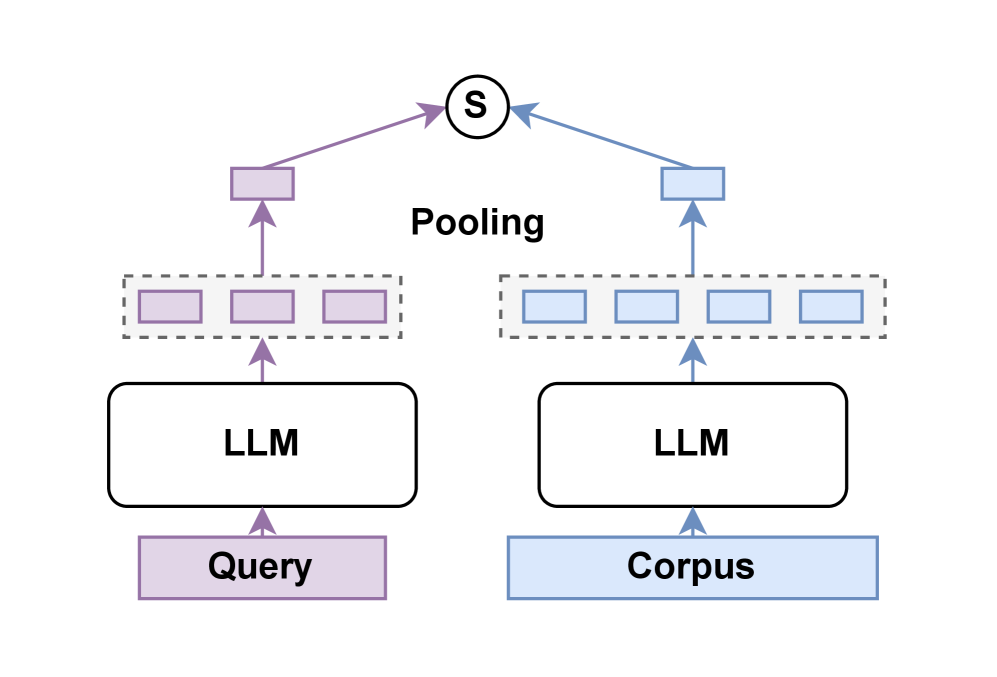

- Foundation Models for Robotics: Large, pre-trained AI models are being adapted to understand robotics tasks. These models bring in prior knowledge about objects, physics, and language, allowing robots to learn new tasks with less task-specific training data. This is a form of transfer learning, and it complements the transfer of skills from simulation to reality (Sim2Real).

- Scalable Edge Deployment: The trained AI models are deployed not in a distant cloud but directly on the robots themselves using NVIDIA's Jetson platform. These edge AI computers provide the necessary processing power for real-time perception and decision-making without constant, high-bandwidth connectivity.

Technical Details: The Isaac & Jetson Stack

NVIDIA's push is underpinned by its integrated software and hardware stack:

- NVIDIA Isaac Sim: Built on Omniverse, this is a robotics simulation toolchain. It generates synthetic training data, provides realistic sensor simulations (e.g., cameras, lidar), and runs massively parallel reinforcement learning training sessions. It's the core of the "simulation-first" doctrine.

- NVIDIA Jetson: A series of compact, power-efficient system-on-modules (SoMs) designed for edge AI and robotics. They run the complete AI software stack, from perception models (often trained in Isaac Sim) to control policies, enabling autonomous operation.

- NVIDIA Isaac ROS & Isaac SDK: These software frameworks provide developers with tools, libraries, and pre-built capabilities (like navigation or manipulation) to build and deploy robotics applications.
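The on-robot side of this stack can be pictured as a sense-infer-act loop in which every inference runs locally and must fit a time budget. The sketch below is a minimal illustration in plain Python, not NVIDIA's API: `run_control_loop`, `proportional_policy`, and the `budget_s` parameter are hypothetical names, and the toy policy stands in for a trained model.

```python
import time
from typing import Callable, Iterable, List, Tuple


def run_control_loop(
    observations: Iterable[float],
    policy: Callable[[float], float],
    budget_s: float,
    clock: Callable[[], float] = time.perf_counter,
) -> Tuple[List[float], int]:
    """Run the policy locally on each observation and count missed deadlines.

    Returns the list of actions and the number of steps whose inference
    took longer than budget_s seconds.
    """
    actions: List[float] = []
    missed = 0
    for obs in observations:
        start = clock()
        action = policy(obs)  # inference happens on the robot, not in the cloud
        elapsed = clock() - start
        if elapsed > budget_s:
            missed += 1  # a real system might fall back to a safe action here
        actions.append(action)
    return actions, missed


def proportional_policy(distance_error: float) -> float:
    """Toy stand-in for a trained model: steer proportionally to the error."""
    return -0.5 * distance_error
```

Calling `run_control_loop([2.0, -4.0], proportional_policy, budget_s=0.05)` returns the two actions plus a count of deadline misses. The `clock` argument is injectable so the loop can be exercised deterministically without real hardware timing.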

Real-World Applications: Agriculture and Energy

NVIDIA's tweet specifically called out two socially beneficial applications:

- Building Solar Autonomously: AI robots, trained in simulation to handle varied terrain and panel assembly, can be deployed for solar farm construction and maintenance. This increases deployment speed and reduces costs for renewable energy infrastructure.

- Increasing Productivity in Agriculture: Robots can perform precise tasks like selective harvesting, weeding, or crop monitoring. Trained in simulated farms with endless variations of crop types, growth stages, and weather conditions, these robots can adapt to real fields, aiming to boost yield and reduce resource use.

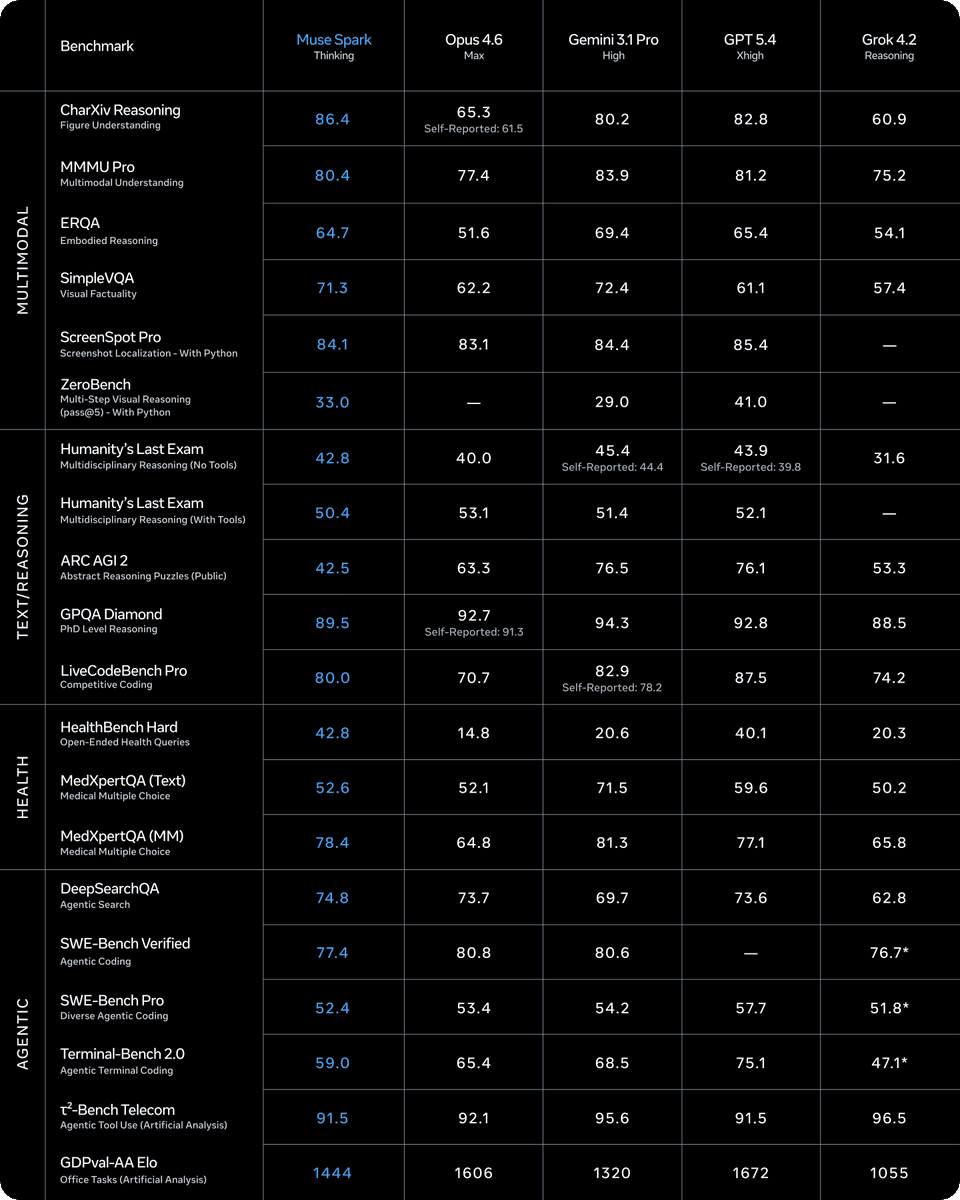

How It Compares

This NVIDIA-centric approach contrasts with and complements other industry efforts:

| Approach | Key Players | Focus |
| --- | --- | --- |
| Simulation-First Training | NVIDIA (Isaac), Google (Robotics Transformer), OpenAI (early work) | Scalable, safe training in digital twins before real-world deployment. |
| Large-Scale Real-World Data | Boston Dynamics, Tesla (Optimus) | Leveraging data from physical robot fleets to train models. |
| Embodied AI Research | Academic Labs (Stanford, Berkeley, CMU), FAIR | Fundamental algorithms for robot learning and interaction. |

NVIDIA's strength is providing the infrastructure layer (the simulation engines and hardware) that other companies and researchers use to build their specific robotic solutions.

What to Watch: The Sim2Real Gap and Scalability

The primary technical hurdle remains the simulation-to-reality (Sim2Real) gap. No simulation is perfect; differences in physics, lighting, and material properties can cause a policy that excels in sim to fail in the real world. Advances in domain randomization (varying sim parameters during training) and foundation models are directly attacking this problem.
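Domain randomization, as described above, amounts to drawing a fresh set of simulation parameters for every training episode so the policy never overfits to one version of the world. The following is a minimal sketch using only the Python standard library. The parameter names and ranges are illustrative assumptions, not values from NVIDIA or Isaac Sim.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class SimParams:
    """Physical and visual parameters for one simulated episode (hypothetical)."""
    friction: float          # surface friction coefficient
    light_intensity: float   # relative scene brightness
    sensor_noise_std: float  # standard deviation of additive sensor noise


# Illustrative ranges only; real ranges would be chosen from the target hardware.
PARAM_RANGES = {
    "friction": (0.4, 1.2),
    "light_intensity": (0.3, 1.5),
    "sensor_noise_std": (0.0, 0.05),
}


def sample_params(rng: random.Random) -> SimParams:
    """Draw one randomized parameter set, uniformly within each range."""
    return SimParams(
        **{name: rng.uniform(lo, hi) for name, (lo, hi) in PARAM_RANGES.items()}
    )


def randomized_episodes(num_episodes: int, seed: int = 0) -> list[SimParams]:
    """Return the parameters for a batch of episodes, one fresh draw per episode."""
    rng = random.Random(seed)
    return [sample_params(rng) for _ in range(num_episodes)]
```

In a training loop, each `SimParams` instance would configure the simulator before an episode starts. Seeding the generator keeps a training run reproducible while still covering the whole parameter range.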

Scalability of deployment is the other key challenge. The promise of NVIDIA's stack is to move from one-off research projects to fleets of robots. Success will be measured by the volume and reliability of real-world deployments in the highlighted sectors over the next 12-24 months.

gentic.news Analysis

This spotlight from NVIDIA is less about a single new product and more about a validation of a strategic trajectory the company has been building for nearly a decade. It follows NVIDIA's consistent pattern of identifying a computationally intensive domain (initially graphics, then AI training, now robotics simulation), building a full-stack platform (CUDA, DGX, now Isaac/Jetson), and cultivating an ecosystem. This aligns with our previous coverage on NVIDIA's Omniverse platform becoming a critical tool for industrial digital twins and synthetic data generation.

The emphasis on agriculture and energy is strategically significant. These sectors represent massive, addressable markets with clear ROI for automation—labor shortages in farming and the global push for renewable energy infrastructure. By targeting these areas, NVIDIA is positioning its robotics AI not as a general-purpose humanoid play (like Tesla or Figure), but as a vertical-specific efficiency tool. This pragmatic focus may lead to faster commercial adoption.

Technically, the integration of foundation models is the most consequential trend here. As discussed in our analysis of Google's RT-2, large vision-language-action models are dramatically reducing the amount of task-specific training data needed. Combined with NVIDIA's synthetic data generation capabilities, this creates a powerful flywheel: use foundation models to bootstrap understanding, then use simulation to generate vast amounts of targeted training data for refinement. The division of roles is key: NVIDIA provides the synthetic data engine and deployment hardware that make these large models operable on physical robots at the edge.

Frequently Asked Questions

What is simulation-first training in robotics?

Simulation-first training is a paradigm where AI models for robot perception and control are trained primarily within high-fidelity virtual environments before being transferred to physical robots. This allows for safe, scalable, and massively parallel training that would be too time-consuming, expensive, or dangerous to conduct in the real world. NVIDIA's Isaac Sim is a leading platform for this approach.

What is the difference between NVIDIA Isaac and Jetson?

NVIDIA Isaac is a suite of software tools for robot simulation (Isaac Sim) and development (Isaac SDK/ROS). It's used to train and program robots. NVIDIA Jetson is a family of hardware modules (edge AI computers) that are physically embedded into robots to run the AI models and software in real time, enabling autonomy without relying on cloud connectivity.

How does AI help in building solar farms autonomously?

AI robots can be trained in simulation to perform tasks like surveying land, transporting and positioning solar panels, and connecting electrical components. In the real world, these robots, often autonomous vehicles or robotic arms, can execute these plans, working in difficult terrain and harsh weather. This accelerates construction timelines, reduces labor costs, and improves worker safety for large-scale solar deployments.

What is the simulation-to-reality (Sim2Real) gap?

The Sim2Real gap refers to the performance drop that often occurs when an AI model that performs well in simulation is deployed on a physical robot. This is caused by inevitable differences between the simulated and real worlds, such as friction, lighting, sensor noise, and material properties. Closing this gap is a major focus of robotics research, using techniques like domain randomization and adaptive control.
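One simple way to put a number on that drop is to compare task success rates for the same policy in simulation and on hardware. The sketch below assumes each trial is recorded as a pass/fail boolean. The function names are hypothetical, and the trial data in the usage note is made up purely for illustration.

```python
from typing import Sequence


def success_rate(outcomes: Sequence[bool]) -> float:
    """Fraction of trials that succeeded."""
    if not outcomes:
        raise ValueError("need at least one trial")
    return sum(outcomes) / len(outcomes)


def sim2real_gap(sim_outcomes: Sequence[bool], real_outcomes: Sequence[bool]) -> float:
    """Drop in success rate from simulation to reality (positive means worse on hardware)."""
    return success_rate(sim_outcomes) - success_rate(real_outcomes)
```

For example, a policy that succeeds in 4 of 4 simulated trials but only 3 of 4 physical trials has a gap of 0.25, or 25 percentage points. Tracking this figure before and after applying a technique such as domain randomization shows whether the technique is actually helping.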