A recent performance test has quantified a significant advantage for Qualcomm's dedicated Neural Processing Unit (NPU) over its traditional CPU when handling a common AI task. In Optical Character Recognition (OCR) workloads, the NPU demonstrated processing speeds 6 to 8 times faster than the CPU.

What the Benchmark Shows

The test, shared by developer and leaker Max Weinbach, directly compares the execution time for OCR tasks—converting images of text into machine-encoded text—on a Qualcomm-powered device. The result is a straightforward performance multiplier: the specialized NPU hardware completes the same AI inference work in roughly one-sixth to one-eighth of the time required by the general-purpose CPU cores.

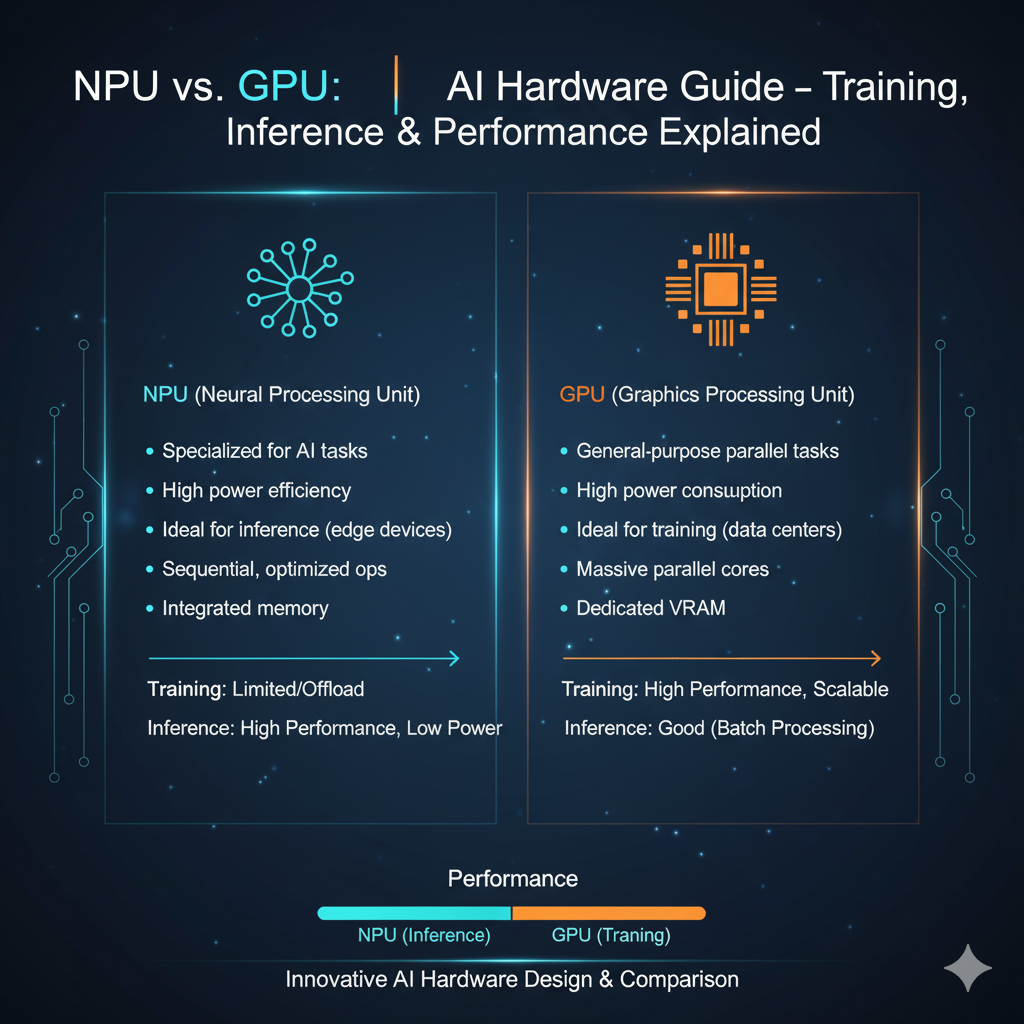

This gap underscores the fundamental architectural efficiency of dedicated AI accelerators. While CPUs are designed for sequential, general-purpose computation, NPUs are built with massively parallel operations and lower-precision math (like INT8 or FP16) that are optimal for neural network inference.

The Technical Context for On-Device AI

OCR is a foundational on-device AI workload, critical for features like live translation through a camera, document scanning in productivity apps, and extracting text from images for search. As AI models move from the cloud to the smartphone (a trend called on-device AI or edge AI), the performance and power efficiency of the NPU become primary differentiators.

A 6-8x speed-up translates directly to user experience: near-instant text recognition versus a perceptible delay, and significantly better battery life since the task completes faster and the specialized hardware is more power-efficient per operation.

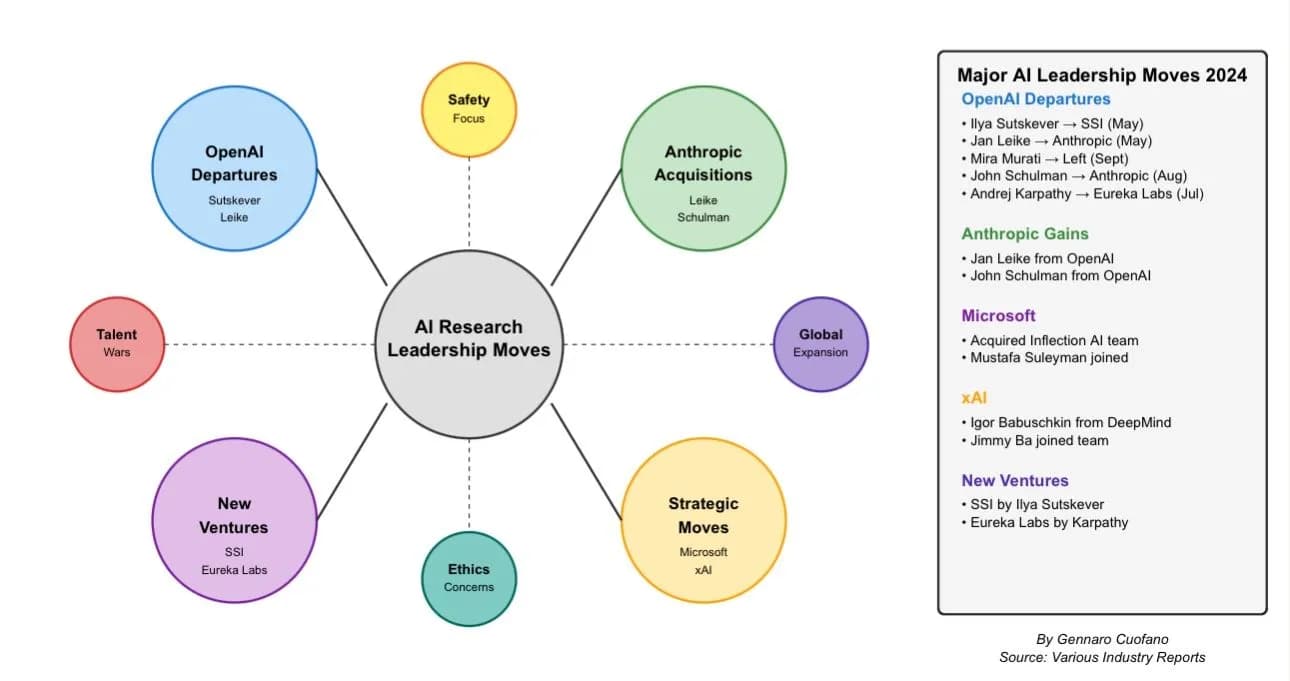

The Competitive Landscape for Mobile NPUs

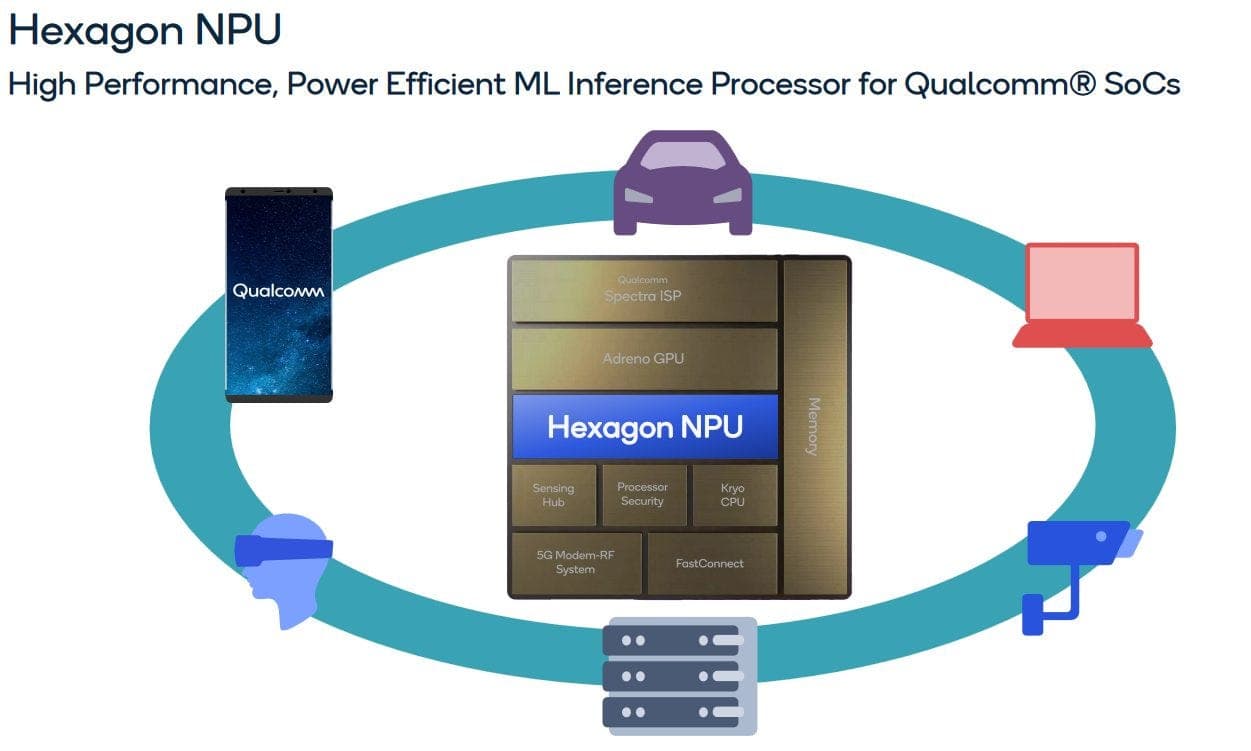

Qualcomm has been integrating NPUs into its Snapdragon platforms for several generations, with its Hexagon processor being a central component. This benchmark arrives as competition in mobile AI silicon intensifies:

- Apple has long emphasized the performance of its Neural Engine, a key selling point for features like Live Text and computational photography.

- MediaTek and Google (with its Tensor chips) are also pushing on-device AI capabilities in the Android ecosystem.

- Samsung is reportedly developing its own AI-optimized chip for future Galaxy devices.

Performance leads in specific, common workloads like OCR are crucial marketing and technical ammunition in this race.

What This Means for Developers

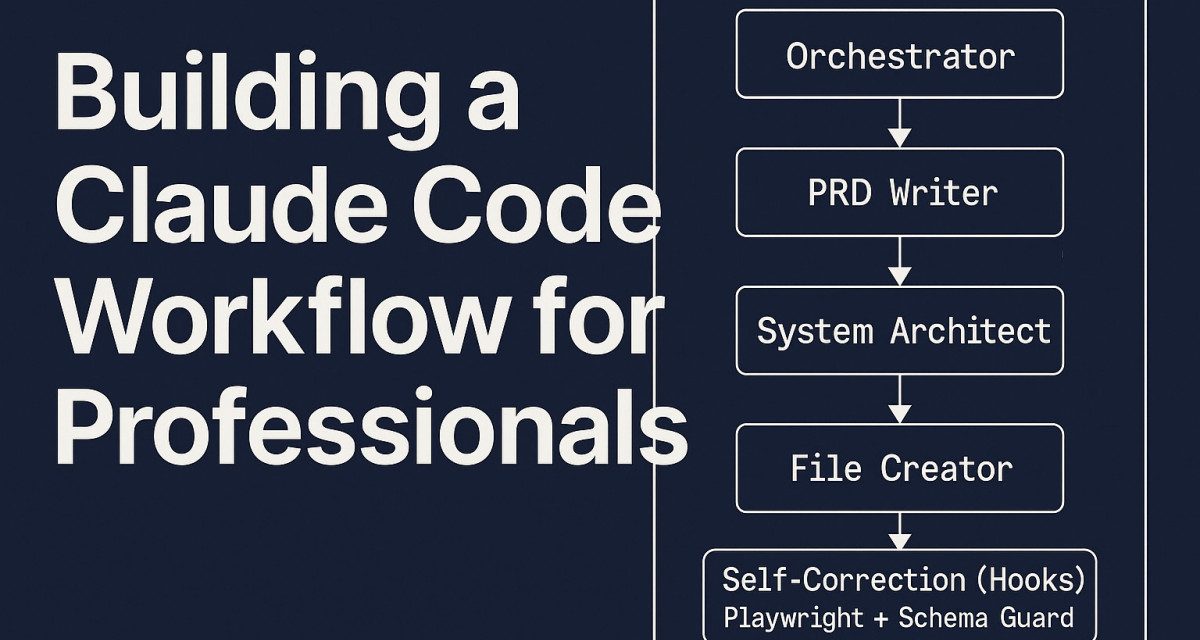

For AI engineers and application developers, this benchmark reinforces a critical directive: offload AI inference to the NPU. To achieve this, developers must use frameworks and APIs that support hardware acceleration, such as:

- Android NNAPI (Neural Networks API)

- Qualcomm's SNPE (Snapdragon Neural Processing Engine) SDK

- TensorFlow Lite or PyTorch Mobile with appropriate delegates

Failure to utilize the NPU means leaving a massive performance and efficiency gain on the table, resulting in a slower, more power-hungry app.

Limitations and Caveats

The shared result is a high-level benchmark. It does not specify:

- The exact Qualcomm Snapdragon platform (e.g., Snapdragon 8 Gen 3, 8s Gen 3, or 7-series).

- The specific OCR model architecture or size.

- The precise latency measurements in milliseconds for CPU vs. NPU.

- Power consumption measurements, which are equally important for battery life.

Real-world performance can vary based on model optimization, thermal conditions, and system load.

gentic.news Analysis

This benchmark is a data point in a long-running trend we've tracked: the relentless specialization of silicon for AI. As we covered in our analysis of MediaTek's Dimensity 9300, the industry is moving beyond simply adding an "AI core" to radically rethinking chip architecture around heterogeneous computing. The CPU is increasingly becoming a traffic controller, directing specialized tasks to optimized blocks like the NPU, GPU, and DSP.

This performance delta also validates the strategic pivot of companies like Qualcomm. Facing slowing growth in traditional smartphone markets, Qualcomm has aggressively positioned its Snapdragon platforms as the premier on-device AI hub, a narrative central to its launch of the Snapdragon 8 Gen 3. Demonstrating a 6-8x lead in a ubiquitous task like OCR is a powerful proof point for OEMs and developers.

However, raw throughput is only part of the story. The next frontier is model support and software. Apple's Neural Engine benefits from deep, framework-level integration with Core ML. Qualcomm's challenge is to ensure its SNPE SDK and support for models like Meta's Llama or Google's Gemma are as seamless for developers, turning this hardware advantage into a ubiquitous ecosystem advantage. If the software stack is cumbersome, developers may not fully harness this speed-up, blunting its market impact.

Frequently Asked Questions

What is an NPU?

An NPU, or Neural Processing Unit, is a specialized piece of hardware within a system-on-a-chip (SoC) designed specifically to accelerate the mathematical operations used in artificial neural networks. It is more efficient at these tasks than a general-purpose CPU or even a GPU for many inference workloads.

Why is OCR a good benchmark for an NPU?

Optical Character Recognition is a classic computer vision task powered by neural networks (like CNNs or Transformers). It has a clear, measurable output (text accuracy and speed), is a common user-facing feature, and represents the kind of sustained, moderate-complexity inference workload that highlights the efficiency of dedicated AI hardware versus a CPU.

Does a faster NPU mean better AI features on my phone?

Yes, but with a caveat. A more powerful and efficient NPU enables faster and more responsive AI features (like live photo search or real-time translation) and allows them to run without draining the battery quickly. However, the phone's software and apps must be explicitly built to use the NPU to unlock these benefits.

How does Qualcomm's NPU compare to Apple's Neural Engine?

Direct, apples-to-apples comparisons are difficult due to different architectures, software stacks, and benchmark methodologies. Both are industry-leading dedicated AI accelerators. Qualcomm often leads in peak TOPS (Trillions of Operations Per Second) specs, while Apple's strength is its tight vertical integration of hardware, software (Core ML), and model optimization. Real-world performance depends heavily on the specific task and software implementation.