A new video generation model, Seedance 2, is now accessible via the Lovart AI platform. The launch was highlighted by a user demonstration showing the creation of a cinematic spy transformation video from a single text prompt.

What Happened

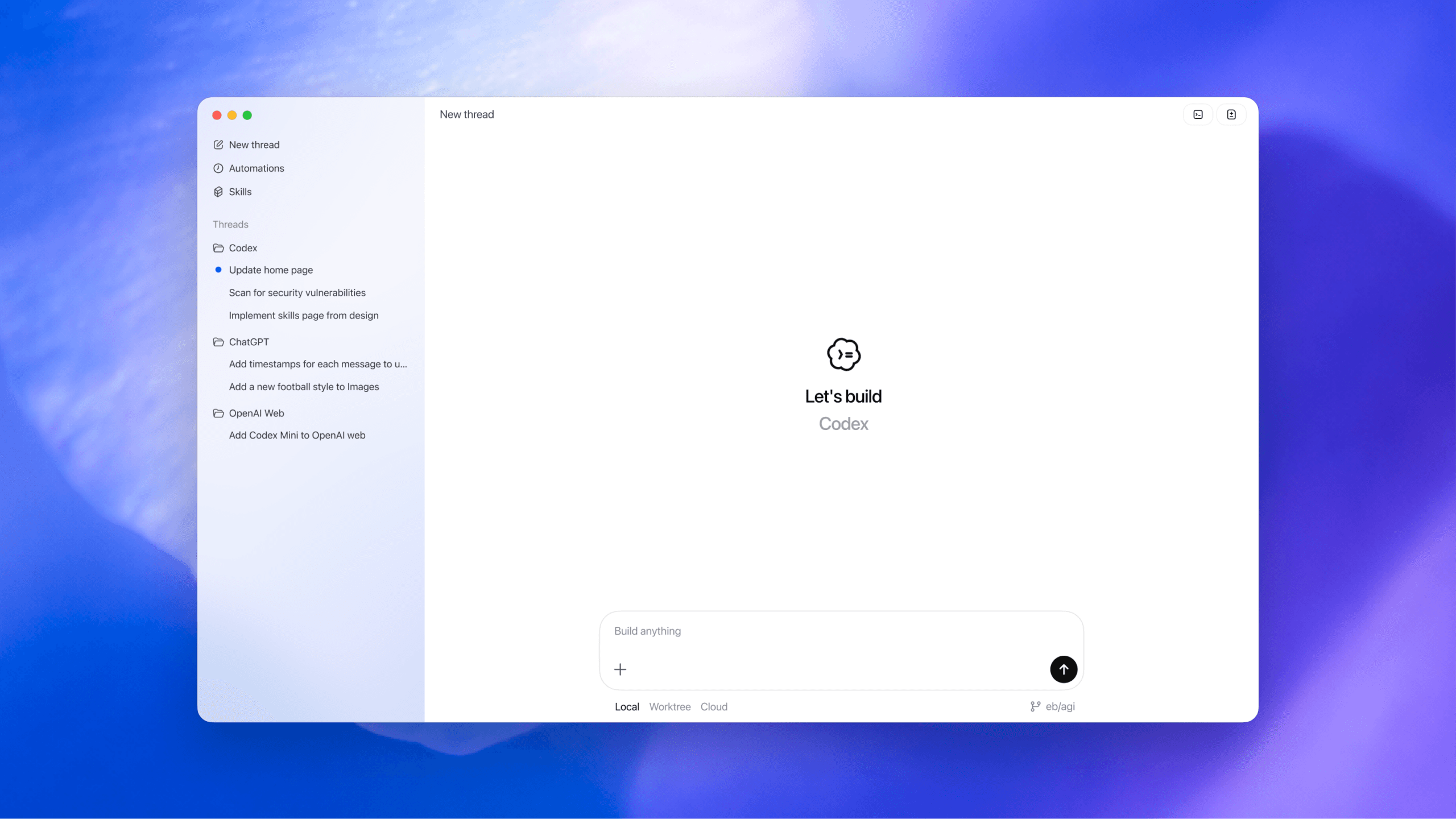

Lovart AI has integrated Seedance 2 into its platform, making the video generation model available to its users. The announcement was made via social media, accompanied by a user-generated example. The showcased video, a "cinematic spy transformation," was reportedly created using a single, detailed prompt, suggesting a streamlined workflow for complex video concepts.

Context

Seedance 2 appears to be the successor to an earlier Seedance model. Its integration into Lovart AI's platform reflects the ongoing trend of AI video generation tools becoming more accessible and user-friendly, moving away from complex, multi-stage editing pipelines toward prompt-driven creation. If the demonstration is representative, the ability to generate a coherent, multi-scene narrative such as a transformation sequence from one prompt points to a focus on temporal progression and visual consistency.

gentic.news Analysis

The launch of Seedance 2 on Lovart AI is a direct move in the highly competitive text-to-video space. This follows the pattern of specialized AI model providers partnering with or launching on application platforms to reach broader audiences, similar to how Stability AI's models are distributed. For Lovart AI, adding a capable video model like Seedance 2 is a strategic necessity to keep pace with platforms like Runway, Pika Labs, and Luma AI, which have been aggressively iterating on their own video generation features.

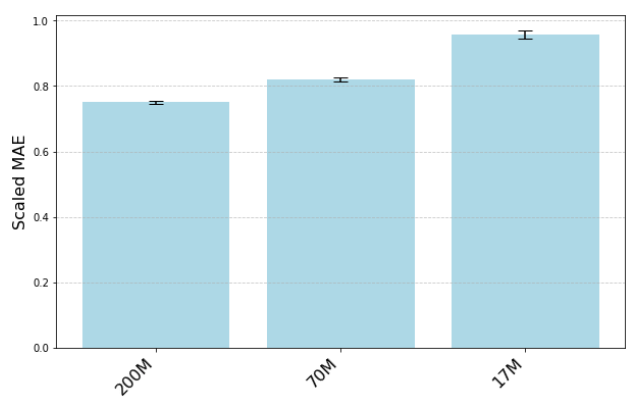

The user's claim of creating a "cinematic spy transformation" in one prompt is the key detail for practitioners. If validated, it suggests Seedance 2 may have strengths in narrative coherence and character consistency across frames, two of the most significant technical hurdles in current video generation. The major open question is benchmark performance: how does it compare to frontrunners such as OpenAI's Sora and Google DeepMind's Veo, or to open-source models such as Stable Video Diffusion? Metrics on temporal consistency, prompt adherence, and resolution are needed to gauge its true competitive position.
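To make "temporal consistency" concrete, here is a minimal Python sketch of one crude proxy: the average cosine similarity between consecutive frames. This is an illustrative assumption, not a metric published for Seedance 2 or used by any benchmark named above. The function names are hypothetical, and frames are assumed to be already decoded and flattened into lists of pixel intensities. Serious evaluations generally compare learned image features or motion estimates rather than raw pixels.

```python
import math
from typing import Sequence

# Assumption: each frame is a flat sequence of pixel intensities of equal length.
Frame = Sequence[float]


def cosine_similarity(a: Frame, b: Frame) -> float:
    """Cosine similarity between two flattened frames (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def temporal_consistency(frames: Sequence[Frame]) -> float:
    """Mean cosine similarity over all pairs of consecutive frames."""
    if len(frames) < 2:
        raise ValueError("need at least two frames")
    sims = [
        cosine_similarity(frames[i], frames[i + 1])
        for i in range(len(frames) - 1)
    ]
    return sum(sims) / len(sims)
```

A score near 1 means adjacent frames are nearly identical, which a static video would also achieve, so a proxy like this only makes sense alongside measures of motion and prompt adherence.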

For AI engineers, the architecture and training data behind Seedance 2 will be of primary interest. Is it a diffusion-based model, a transformer, or a hybrid approach? What scale of video data was it trained on? The integration into Lovart AI may also point to eventual API access, although the source announcement does not mention one. If an API does arrive, its pricing, latency, and output limits will determine its viability for commercial applications compared with models that remain in closed beta, such as Sora.

Frequently Asked Questions

What is Seedance 2?

Seedance 2 is an AI model designed to generate video from text prompts. Its name indicates that it is the second iteration of the Seedance model, and it is now available to users on the Lovart AI platform.

How do I use Seedance 2?

Based on the announcement, users can access Seedance 2 through the Lovart AI platform. The process involves inputting a text description (prompt) to generate a video, as demonstrated by the creation of a cinematic spy transformation sequence.

How does Seedance 2 compare to other AI video generators?

A direct technical comparison is not yet possible because no official benchmarks or model cards have been published. Seedance 2 enters a competitive field that includes OpenAI's Sora, Runway Gen-2, and Pika. The user demonstration suggests a focus on generating coherent multi-scene narratives from a single prompt.

Is there a waitlist or cost for Seedance 2 on Lovart AI?

The source announcement does not specify access details, costs, or potential waitlists. Users would need to check the Lovart AI platform directly for the current terms of service, pricing, and availability.