A viral social media post from AI researcher Guri Singh has highlighted a startling development: AI models can now reconstruct speech by analyzing data from smartphone sensors that capture subtle facial movements, effectively "reading" silent speech without using a microphone.

The core claim is that an AI system, trained on synchronized sensor data and audio, can learn to correlate imperceptible muscle tremors, vibrations, and skin deformations around the mouth and throat with specific phonemes and words. When the microphone is later disabled, the model can infer the spoken words solely from the sensor data.

What Happened

The post references research into using the existing suite of sensors in a smartphone—including the accelerometer, gyroscope, magnetometer, and proximity sensor—as a surrogate for audio input. These sensors can detect minute vibrations and movements caused by speech, even when no sound is intentionally produced (subvocalization) or when a user is mouthing words silently.

The technique is not a "microphone hack" but a form of sensor fusion and inference. The model is trained end-to-end on paired data: sensor readings during speech are the input, and the corresponding audio waveform or text transcript is the target output.

How It Works (Technically)

The proposed method likely involves the following steps:

- Data Collection: Recording simultaneous high-frequency streams from multiple phone sensors while a user speaks naturally, with the microphone providing the ground-truth audio.

- Feature Extraction: Processing the raw sensor signals to isolate components correlated with vocal tract activity, filtering out noise from hand movements, walking, or environmental vibrations.

- Model Training: Using a sequence-to-sequence model architecture (like a Transformer or a Temporal Convolutional Network) to map the temporal sequence of sensor features to a sequence of audio features or directly to text tokens.

- Inference: In deployment, with the microphone muted or absent, the model takes live sensor data and generates a probable speech transcript.

The key technical challenge is the extremely low signal-to-noise ratio; the speech-related movements are dwarfed by other motions. This requires sophisticated noise-invariant training and likely benefits from personalized calibration.

Context and Precedents

This concept builds upon established research areas:

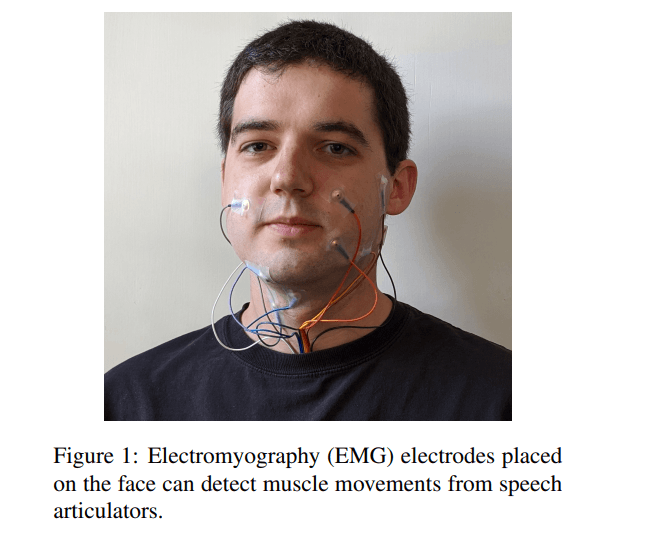

- Subvocal Speech Recognition: DARPA and research labs have long experimented with electromyography (EMG) sensors on the throat to detect nerve signals for silent communication.

- Accelerometer-based Speech Detection: Prior work has shown accelerometers pressed against the throat can capture speech vibrations.

- Motion Magnification: Computer vision techniques can amplify subtle motions in video; this applies a similar principle to inertial measurement unit (IMU) data.

The breakthrough implied here is the ability to perform this inference using the standard, non-specialized sensors already in billions of smartphones, without requiring skin contact or external hardware.

Implications and Concerns

The immediate implications are dual-use:

- Accessibility: Could enable communication for individuals who cannot vocalize.

- Privacy: Represents a profound new privacy vector. An app with sensor permissions, but not microphone permissions, could potentially eavesdrop on conversations. Standard privacy guardrails ("app X wants to use your microphone") would be circumvented.

This development forces a re-evaluation of smartphone permission models. Sensor data, often considered low-risk for privacy, may need to be guarded as stringently as microphone or camera access.

gentic.news Analysis

This report, while sensational in presentation, points to a tangible and accelerating trend in on-device AI: the extraction of high-value information from seemingly low-fidelity, multi-modal sensor streams. We've moved beyond models that understand clear audio to models that can create audio from non-audio correlates. This aligns with our previous coverage of Meta's Project Astra and Apple's advancements in on-device processing, where the fusion of camera, LiDAR, and IMU data creates a rich contextual model of the user's environment.

The privacy implications cannot be overstated. The mobile app ecosystem's permission model is built on a compartmentalized view of sensors. The microphone is sacred; the accelerometer is not. This research shatters that assumption. It creates a scenario where a seemingly innocuous fitness or game app, with permission to use the "motion & fitness" sensors, could theoretically run a silent speech inference model in the background. This would be a fundamental bypass of user intent and existing platform security paradigms.

For the AI engineering community, the technical takeaway is the continued blurring of modality boundaries. A model is no longer an "audio model" or a "vision model"; it is a sensor fusion engine. The training paradigm—using a high-quality modality (audio) to supervise learning from a low-quality modality (IMU vibrations)—is a powerful template. We should expect similar cross-modal supervision to emerge elsewhere, such as training visual activity recognition models from Wi-Fi signal perturbations or inferring typed text from subtle device motions.

Frequently Asked Questions

Can my phone really read my mind?

No. The described technology infers speech from physical muscle movements associated with subvocalization or silent mouthing of words. It is not reading thoughts or neural activity. It is an advanced form of speech recognition that uses vibration and motion sensors instead of a microphone.

Which smartphone sensors could be used for this?

The primary candidates are the accelerometer and gyroscope, which measure linear acceleration and rotational motion, respectively. These are sensitive enough to detect tiny vibrations from vocal cords and facial muscles. The magnetometer and barometer could provide additional contextual noise reduction.

How can I protect myself from this kind of privacy invasion?

Currently, be highly cautious about granting "Motion & Fitness" or sensor permissions to apps that don't have a clear, necessary use for them. On iOS and Android, you can review and revoke these permissions in Settings. In the future, operating systems may need to provide more granular, real-time control over sensor access, similar to the microphone and camera indicators.

Is this technology available in consumer apps today?

There is no public evidence that this specific capability is deployed in any mainstream consumer application. The research is likely in late-stage academic or private industry labs. However, the underlying sensor fusion and on-device AI capabilities required to build it are now commonplace in smartphones.

Source: Discussion prompted by AI researcher Guri Singh (@heygurisingh) on X, referencing emerging capabilities in sensor-based speech inference.