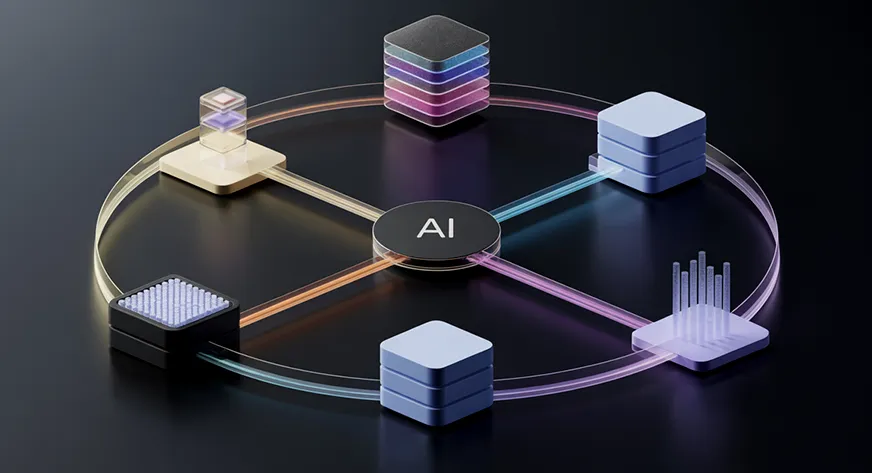

A new research paper from Stanford University presents a counterintuitive finding that challenges a foundational premise in multi-agent artificial intelligence: adding more AI agents to a system does not guarantee better performance and can actively degrade it.

The work, highlighted by researcher Omar Sanseviero, directly questions the "more agents, better results" heuristic that has driven much of the recent explosion in multi-agent frameworks and applications. Instead of linear scaling, the research identifies coordination breakdowns and performance cliffs as agent counts increase.

What the Paper Found: The Scaling Problem

The core finding is that performance in multi-agent systems often peaks at a specific number of agents and then declines with additional agents. This contradicts the intuitive assumption that throwing more computational units (agents) at a problem should monotonically improve outcomes through parallel processing, diverse perspectives, or specialized division of labor.

The researchers systematically tested multi-agent configurations across several benchmark tasks, measuring how overall system performance changed as they scaled from 2-3 agents to 10+ agents. In many configurations, they observed:

- An initial improvement phase with the first few additional agents

- A performance plateau

- A subsequent decline in accuracy, coherence, or task completion rates as more agents were added

This degradation appears linked to coordination overhead, communication bottlenecks, and conflicting outputs that current aggregation methods fail to reconcile effectively.

Why This Happens: The Coordination Bottleneck

The paper suggests several mechanisms behind this inverse scaling:

Communication Overhead: As agent count increases, the number of potential communication channels grows quadratically: n fully connected agents have n(n-1)/2 pairwise links, which is on the order of n². Most current architectures don't scale efficiently to handle this complexity.
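
To make that growth concrete, the sketch below counts the distinct pairwise channels in a fully connected group of agents. The function name `pairwise_channels` is our own illustration, not something taken from the paper, and it assumes every agent can talk to every other agent.

```python
def pairwise_channels(n_agents: int) -> int:
    """Distinct undirected links in a fully connected group of agents."""
    if n_agents < 0:
        raise ValueError("n_agents must be non-negative")
    # Each of the n agents can pair with n - 1 others; halve to avoid
    # counting the same pair twice.
    return n_agents * (n_agents - 1) // 2


for n in (3, 10, 20):
    print(n, pairwise_channels(n))
# 3 agents -> 3 channels, 10 agents -> 45, 20 agents -> 190
```

Going from 3 to 10 agents roughly triples the team but multiplies the possible channels by fifteen.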

Consensus Breakdown: With more agents comes more diverse (and potentially contradictory) outputs. Simple voting or averaging mechanisms often fail to synthesize these effectively, leading to degraded final decisions.
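
As a rough illustration of how simple voting can hide weak agreement, the sketch below runs a plurality vote and also reports what share of agents backed the winner. The agent outputs are invented for this example and are not results from the paper; `majority_vote` is a hypothetical helper.

```python
from collections import Counter


def majority_vote(answers):
    """Return (winning answer, share of agents that gave it)."""
    if not answers:
        raise ValueError("need at least one answer")
    counts = Counter(answers)
    winner, votes = counts.most_common(1)[0]
    return winner, votes / len(answers)


# Hypothetical outputs, made up purely for illustration.
small_team = ["A", "A", "B"]
large_team = ["A", "A", "B", "B", "C", "C", "D", "E", "A", "F"]

print(majority_vote(small_team))  # "A" wins with two of three votes
print(majority_vote(large_team))  # "A" still wins, but with only 3 of 10 votes
```

In both cases the vote returns "A", yet in the larger team 70% of the agents disagreed with the final answer. Tracking the winning share alongside the winner is one inexpensive way to notice when consensus is thinning.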

Resource Contention: In practical deployments, additional agents compete for finite computational resources (memory, bandwidth, API calls), creating bottlenecks that weren't present with fewer agents.

Emergent Conflicts: Specialized agents may develop conflicting sub-goals or strategies that undermine overall system objectives when not properly coordinated.

Technical Implications for Multi-Agent Design

This research has immediate implications for how engineers and researchers design multi-agent systems:

- Optimal Agent Count Exists: Teams should empirically determine the optimal number of agents for their specific task rather than assuming "more is better."

- Coordination Architecture Matters More at Scale: Simple master-slave or broadcast architectures may work for 2-3 agents but fail completely at 10+ agents. More sophisticated coordination mechanisms (market-based, hierarchical, or learned coordination) become essential.

- Benchmarking Must Include Scaling Curves: Future multi-agent evaluations should report performance across different agent counts, not just at a single configuration.
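
A minimal sketch of what reporting a scaling curve could look like in practice follows. All names here (`scaling_curve`, `best_agent_count`, `toy_evaluate`) are hypothetical, and `toy_evaluate` is a made-up placeholder score, not data or a model from the paper; in a real evaluation you would replace it with a function that runs your benchmark with `n` agents.

```python
from typing import Callable, Dict, Iterable, Tuple


def scaling_curve(evaluate: Callable[[int], float],
                  agent_counts: Iterable[int]) -> Dict[int, float]:
    """Run the user-supplied evaluation once per agent count."""
    return {n: evaluate(n) for n in agent_counts}


def best_agent_count(curve: Dict[int, float]) -> Tuple[int, float]:
    """Return the best-scoring agent count; ties go to the smaller team."""
    if not curve:
        raise ValueError("curve is empty")
    n = min(curve, key=lambda k: (-curve[k], k))
    return n, curve[n]


def toy_evaluate(n: int) -> float:
    """Placeholder score, NOT measured data.

    Assumes diminishing gains per agent and a penalty proportional
    to the number of pairwise channels.
    """
    benefit = 1.0 - 0.5 ** n
    penalty = 0.01 * n * (n - 1) / 2
    return benefit - penalty


curve = scaling_curve(toy_evaluate, range(1, 11))
for n, score in curve.items():
    print(f"{n:2d} agents: {score:.3f}")
print("best:", best_agent_count(curve))
```

With this toy scoring function the curve rises, peaks at 4 agents, and then falls. The specific peak is an artifact of the invented constants; the point is that the whole curve, not a single configuration, is what gets reported.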

The Broader Context: Multi-Agent AI's Rapid Growth

This paper arrives during a period of intense interest in multi-agent AI systems. In recent months, frameworks like CrewAI, AutoGen from Microsoft, and LangGraph have gained popularity for building teams of LLM-powered agents. The dominant narrative has emphasized that complex tasks benefit from decomposition among specialized agents—a surgeon, a radiologist, and an anesthesiologist working together should outperform a single general practitioner.

Stanford's findings suggest this medical team analogy has limits: without perfect coordination, adding more specialists can lead to miscommunication, duplicated efforts, or contradictory diagnoses that harm the patient.

gentic.news Analysis

This research provides crucial empirical grounding to what many practitioners have observed anecdotally: multi-agent systems often become unstable and unpredictable as they scale. We've covered several related developments that contextualize this finding.

First, this aligns with our December 2025 coverage of Google's "Sparrow" multi-agent framework, which emphasized "orchestration layers" as the critical component for managing agent interactions. Google's approach used a dedicated "conductor" agent whose sole purpose was managing inter-agent communication—essentially an admission that naive peer-to-peer communication doesn't scale.

Second, this research contradicts some optimistic projections from the multi-agent startup ecosystem. Several venture-backed companies (including MultiOn and Adept) have built their valuation narratives around the premise that agent teams scale linearly with task complexity. Stanford's findings suggest these companies may face fundamental architectural challenges as they move from demos to production systems.

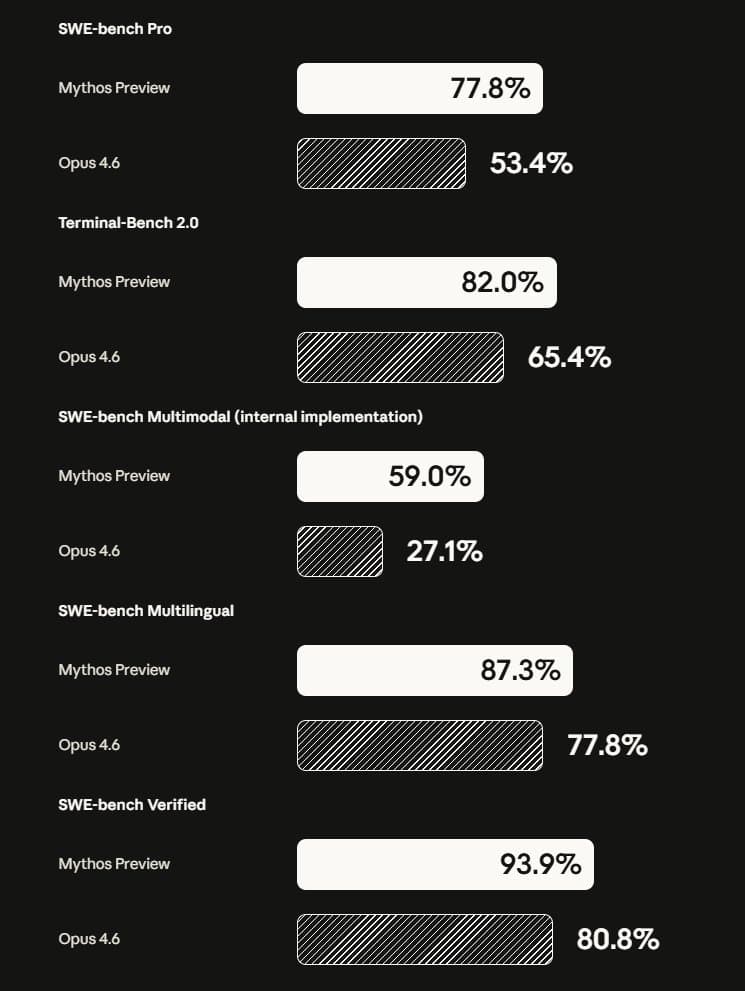

Third, this connects to the broader trend of AI systems hitting scaling limits. We've seen similar plateaus in model size (beyond which additional parameters yield diminishing returns) and training data (the "data scaling laws" discussion). Now we're seeing it in multi-agent coordination—another dimension where simple scaling hits fundamental bottlenecks.

For practitioners, the key takeaway is that architectural sophistication matters more than agent count. A well-coordinated team of 3-4 specialized agents will likely outperform a poorly coordinated team of 10+ agents. The field needs to shift focus from "how many agents" to "how well they work together."

Frequently Asked Questions

What is a multi-agent AI system?

A multi-agent AI system uses multiple AI agents (often LLM-powered) working together to solve problems. Each agent might have specialized capabilities (coding, research, analysis) or different perspectives (debating different solutions). The system coordinates their outputs to produce a final result.

Why did researchers think more agents would always help?

The intuition came from several sources: human teams often benefit from more specialists, parallel computing scales with more processors, and ensemble methods in machine learning often improve with more models. However, these analogies break down once the coordination cost of an additional agent exceeds the marginal benefit it brings.

What tasks are most vulnerable to this inverse scaling?

Tasks requiring high coherence or consistency in the final output are most vulnerable. For example, writing a cohesive document with 10+ contributing agents is challenging. Tasks with independent sub-tasks (like analyzing separate documents) might scale better, but even there, aggregation becomes problematic.

How can developers design better multi-agent systems?

Developers should: 1) Test performance across different agent counts to find the optimum, 2) Implement sophisticated coordination mechanisms beyond simple voting, 3) Consider hierarchical architectures where meta-agents manage sub-teams, and 4) Monitor for consensus breakdown as agents are added.
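
To illustrate point 3, the sketch below compares the number of communication channels in a flat, fully connected team with a two-level hierarchy. The topology is our assumption, not the paper's design: workers talk only within their own sub-team, each worker has one link to its sub-team's meta-agent, and the meta-agents are fully connected with each other.

```python
def flat_channels(n_agents: int) -> int:
    """Pairwise links when every agent can talk to every other agent."""
    return n_agents * (n_agents - 1) // 2


def hierarchical_channels(n_workers: int, team_size: int) -> int:
    """Links in the assumed two-level hierarchy described above."""
    if team_size < 1:
        raise ValueError("team_size must be at least 1")
    full_teams, remainder = divmod(n_workers, team_size)
    sizes = [team_size] * full_teams + ([remainder] if remainder else [])
    within_teams = sum(flat_channels(size) for size in sizes)
    worker_to_meta = n_workers          # one link per worker to its meta-agent
    between_metas = flat_channels(len(sizes))
    return within_teams + worker_to_meta + between_metas


print(flat_channels(12))             # 66 links in a flat team of 12
print(hierarchical_channels(12, 4))  # 33 links with three sub-teams of 4
```

The hierarchy adds three meta-agents but still halves the number of channels for 12 workers, and the gap widens as the team grows. Whether fewer channels translates into better task performance is an empirical question that this count alone does not answer.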

Does this mean multi-agent AI is flawed?

No—it means current simple implementations have scaling limits. The research points toward needed architectural innovations in agent coordination, communication protocols, and conflict resolution. Multi-agent systems remain promising but require more sophisticated engineering than initially assumed.