A new report from the US-based newsletter Interconnects AI reveals a seismic shift in the open-source AI landscape: Alibaba Cloud's Qwen family of large language models now accounts for over 50% of all global open-source model downloads. As of March 2026, the Qwen series has reached nearly 1 billion cumulative downloads on the developer platform Hugging Face, far surpassing rivals like Meta's Llama and DeepSeek.

The Download Dominance

The data, cited from Hugging Face, shows the scale of Qwen's lead. In February 2026 alone, Qwen models generated 153.6 million downloads. This figure was more than double the combined total of the next eight major players, which included Meta, DeepSeek, and OpenAI.
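The "more than double" comparison implies a ceiling on what the next eight players downloaded together. The sketch below works through that arithmetic using only the 153.6 million figure quoted above; the function name and the `multiple` parameter are illustrative assumptions, not something taken from the report.

```python
# Figure quoted in the report: Qwen downloads on Hugging Face, February 2026.
QWEN_FEB_2026_DOWNLOADS_M = 153.6  # millions


def max_combined_rivals(leader_downloads_m: float, multiple: float = 2.0) -> float:
    """Upper bound on the rivals' combined downloads (in millions).

    If the leader has more than `multiple` times the rivals' combined total,
    that total must be below leader / multiple.
    """
    return leader_downloads_m / multiple


bound = max_combined_rivals(QWEN_FEB_2026_DOWNLOADS_M)
print(f"Next eight players combined: under {bound:.1f} million downloads")
```

Put differently, if the report's comparison holds, Meta, DeepSeek, OpenAI, and the five other players together accounted for fewer than 76.8 million downloads that month.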

This milestone follows the February 2026 release of Qwen 3.5, Alibaba Cloud's latest flagship model series, which the company positioned as on par with leading proprietary US models from OpenAI and Anthropic. The shift away from popular US models like Llama began in September 2024 with the release of Qwen 2.5, and the report notes that Chinese models first overtook their US counterparts in total downloads on Hugging Face in the summer of 2025.

Market Context and Strategic Open-Sourcing

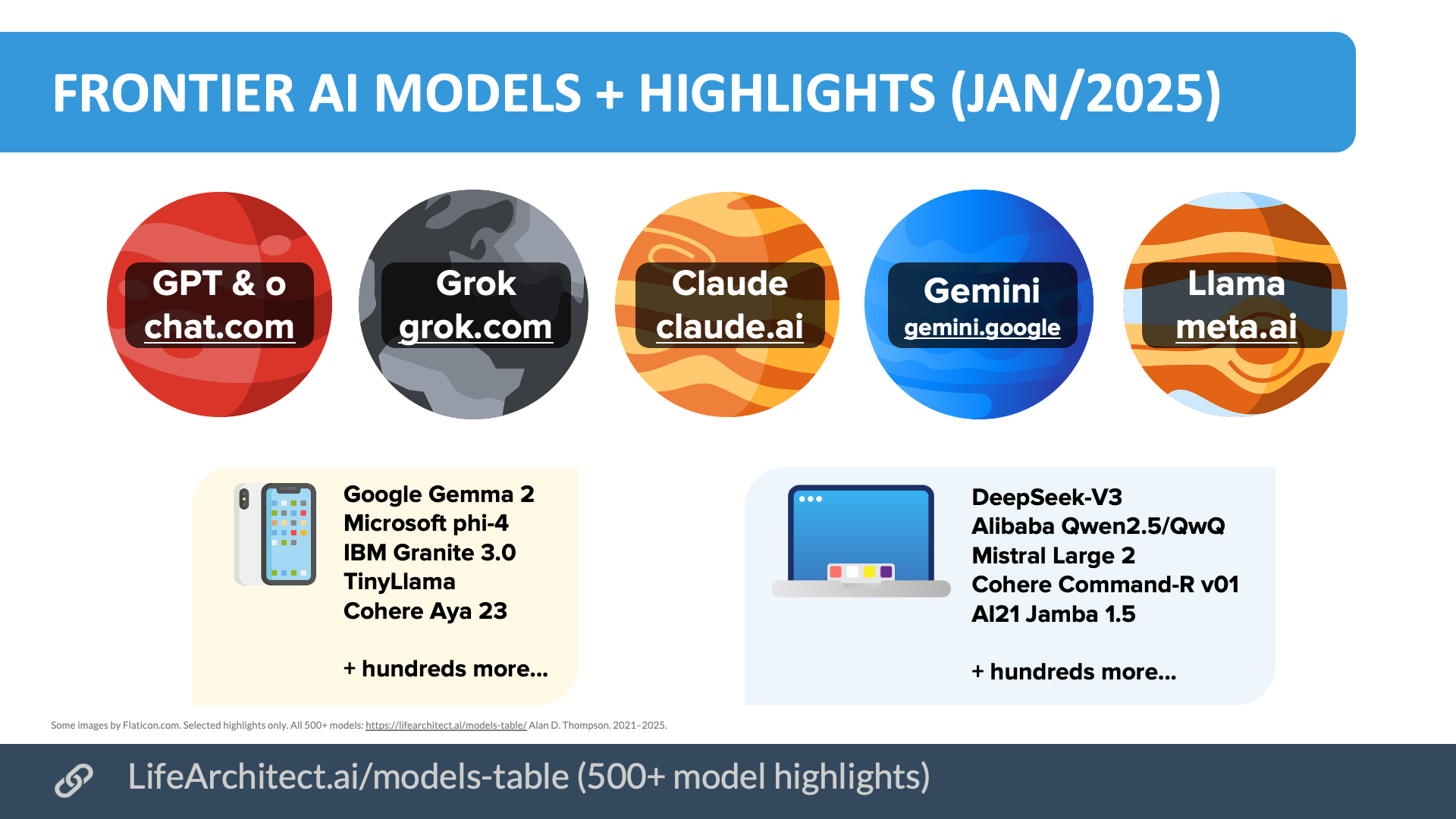

Alibaba Cloud, the AI and cloud computing unit of Alibaba Group, has aggressively pursued an open-source strategy for its Qwen models. This approach stands in contrast to the primarily closed or heavily restricted models from leading US AI labs like OpenAI (GPT series) and Anthropic (Claude).

The report underscores the dominance of Chinese models in the global open-source AI landscape. While US companies like OpenAI and Nvidia are noted to be making early gains in other areas, the download metrics for freely accessible, capable models are decisively in China's favor.

What This Means for Developers

For the global developer community, this figure matters because Hugging Face is the de facto hub for open-source AI model distribution. A download share above 50% suggests that Qwen has become a default starting point for many of the projects, experiments, and commercial applications built on open-source LLMs.

This dominance gives Alibaba significant influence over open-source AI development patterns, the model architectures that gain traction, and the ecosystem of tools and fine-tunes that grows up around a popular model family.

gentic.news Analysis

This report confirms a trend we've been tracking since late 2024: the strategic open-sourcing by Chinese tech giants is fundamentally reshaping global AI adoption. Alibaba's move mirrors a pattern seen with other Chinese entities, where releasing powerful base models for free drives ecosystem lock-in and cloud revenue—a playbook previously executed by Meta with Llama.

The timing is significant. The surge to nearly 1 billion downloads follows the Qwen 3.5 release, which we covered in February 2026 for its competitive performance on benchmarks like MMLU and its strong coding capabilities. The combination of free access and strong performance is key: developers flock to models that are both free and capable. The data suggests Qwen 3.5 successfully converted its technical parity claims into massive developer adoption.

However, this "download dominance" must be contextualized. A download does not equal a sustained deployment or a commercial contract. Many downloads are for evaluation, benchmarking, or prototyping. The real battle for enterprise AI revenue—often fought on cloud platforms like Alibaba Cloud, AWS, and Azure—is a separate, though related, front. Alibaba is clearly using open-source leadership as a top-of-funnel strategy to pull developers into its broader cloud and services ecosystem.

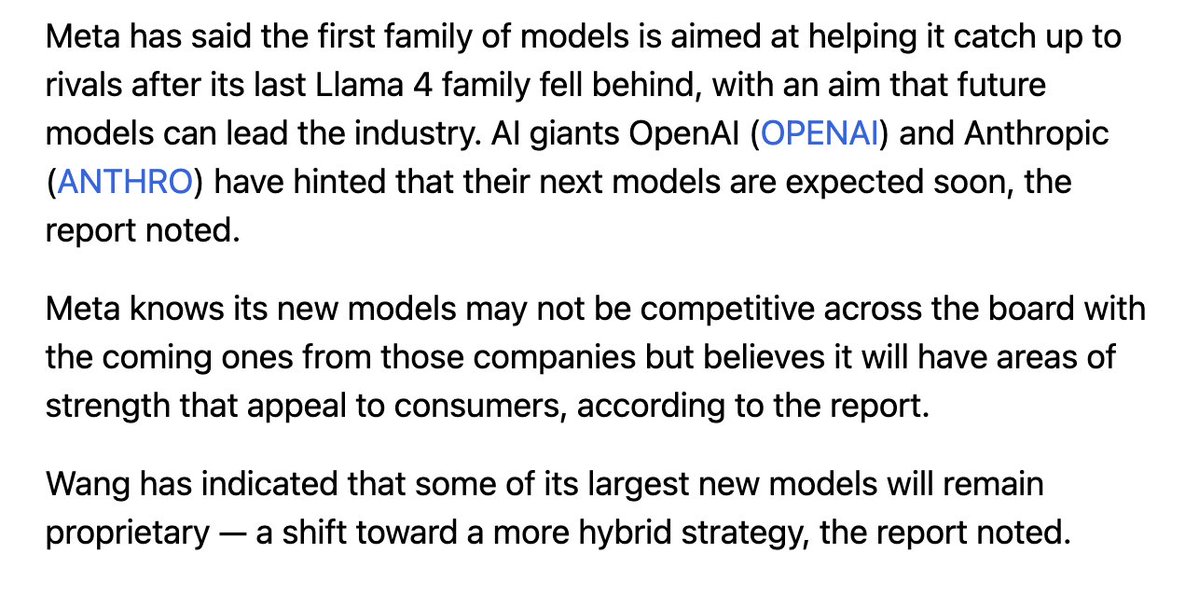

Looking at the competitive landscape, Meta's Llama, once the undisputed open-source leader, has been decisively overtaken in terms of raw download velocity. This puts pressure on Meta and other Western players to reconsider their open-source licensing and release strategies. Will they respond with even more permissive licenses or more powerful releases to win back developer mindshare? The next Llama release will be a critical test.

Frequently Asked Questions

What is the Qwen model family?

Qwen is a series of large language models developed and open-sourced by Alibaba Cloud. It includes models of various sizes (e.g., 0.5B, 7B, 14B, 72B parameters) and specialized variants for coding (Qwen-Coder) and mathematics (Qwen-Math). The latest flagship series is Qwen 3.5, released in February 2026.

Where are these download figures from?

The download data is sourced from Hugging Face, the leading platform for sharing and discovering machine learning models and datasets. The specific analysis comes from a report by Interconnects AI, a US-based newsletter that tracks the open-source AI landscape.
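Readers who want to inspect download counts themselves can query Hugging Face directly. The following is a minimal sketch, not the method Interconnects AI used: it assumes the third-party `huggingface_hub` package is installed, that Alibaba's models are published under the `Qwen` organization, and that each returned record exposes a `downloads` attribute. As far as we understand, that field covers a recent window rather than cumulative downloads, so it will not reproduce the report's totals. The helper names are hypothetical.

```python
from types import SimpleNamespace
from typing import Iterable


def total_downloads(models: Iterable) -> int:
    """Sum the `downloads` attribute over model records, counting missing values as 0."""
    return sum(getattr(m, "downloads", None) or 0 for m in models)


def fetch_org_models(org: str):
    """Return an iterable of model records for one organization.

    Needs network access and the `huggingface_hub` package (imported lazily
    so the rest of this sketch runs without it).
    """
    from huggingface_hub import HfApi

    return HfApi().list_models(author=org)


# Offline demonstration with stand-in records (made-up numbers, not real data).
sample = [
    SimpleNamespace(downloads=120),
    SimpleNamespace(downloads=None),
    SimpleNamespace(downloads=30),
]
print(total_downloads(sample))  # 150
```

With network access, `total_downloads(fetch_org_models("Qwen"))` would give a rough figure for that organization's own repositories. It would not count community fine-tunes or quantized copies hosted under other accounts, which an ecosystem-wide analysis may treat differently.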

Does this mean Chinese AI is ahead of US AI?

Not necessarily in all dimensions. The report specifically highlights dominance in open-source model downloads. The US still leads in several areas, including the most advanced frontier proprietary models (e.g., OpenAI's GPT-5, Anthropic's Claude 3.7) and key hardware (Nvidia's GPUs). The landscape is bifurcating: Chinese leadership in open-source adoption, and intense competition at the proprietary frontier.

Why does Alibaba open-source its models?

The strategy serves multiple purposes: it drives developer adoption and familiarity, builds a community that creates tools and fine-tunes (enhancing the model's value), establishes Alibaba as a thought leader, and ultimately aims to attract users to Alibaba Cloud's paid services for training, deployment, and inference.