What Happened

A new research paper, "Align then Train: Efficient Retrieval Adapter Learning," introduces a framework designed to solve a fundamental tension in modern dense retrieval systems. The core problem is a retrieval mismatch: users increasingly express search intent through long, complex instructions or task-specific descriptions, while the target documents (product pages, articles, knowledge base entries) remain relatively simple and static. Understanding these nuanced queries requires powerful, large embedding models with strong reasoning capabilities. However, for production systems that must index millions or billions of documents and serve results in milliseconds, using such large models for document encoding is computationally prohibitive and operationally burdensome.

Current solutions often involve fine-tuning a single large embedding model for the entire task, which is expensive and requires massive labeled datasets. The proposed Efficient Retrieval Adapter (ERA) framework takes a cheaper route: it trains lightweight adapters instead of fine-tuning a full embedding model.

Technical Details

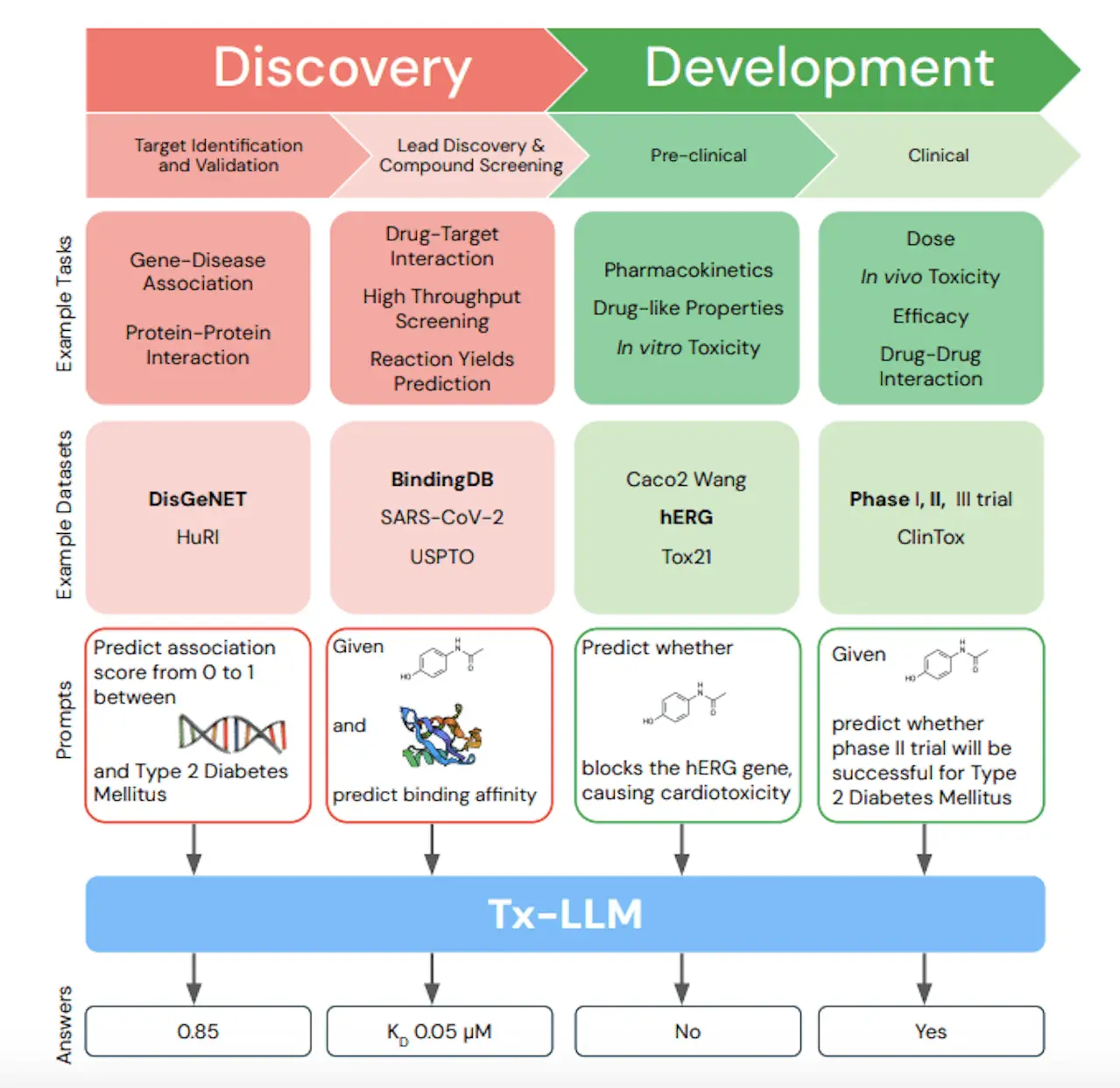

ERA is a label-efficient framework that trains lightweight "retrieval adapters" in two distinct stages, mirroring the pre-training and fine-tuning paradigm of large language models (LLMs).

- Self-Supervised Alignment: In this first stage, ERA aligns the embedding spaces of two separate models: a large, powerful query embedder (e.g., a state-of-the-art LLM) and a small, efficient document embedder. This is done without any task-specific labels, using contrastive learning on unpaired query and document data. The goal is to project the representations from both models into a shared semantic space where they are directly comparable.

- Supervised Adaptation: Once the spaces are aligned, the second stage uses a limited amount of labeled query-document relevance data to adapt only the query-side representation. A small adapter network is fine-tuned to bridge the remaining semantic gap between the complex queries and the simple documents. Crucially, the document embeddings and index are never updated, eliminating the need for costly, full-corpus re-indexing.
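The two stages above can be sketched on synthetic data. This is a minimal illustration under stated assumptions, not the paper's implementation. Random vectors stand in for both embedders, and the adapter is assumed to be a single linear map from the query embedder's space into the document embedder's space. Stage 1 swaps in an ordinary least-squares fit on embeddings of the same unlabeled texts in place of the contrastive objective described above. Stage 2 uses a ridge-regularized refit on a handful of labeled pairs. The names `hidden_map`, `gap`, `adapt`, and `objective` are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)
D_LARGE, D_SMALL = 32, 8  # hypothetical embedding widths

# Synthetic stand-ins for the two frozen embedders (assumption: a real system
# would call actual models). The small embedder's view of a text is modelled
# as a noisy linear image of the large embedder's view.
hidden_map = rng.normal(size=(D_LARGE, D_SMALL)) / np.sqrt(D_LARGE)

def embed_large(n):
    return rng.normal(size=(n, D_LARGE))

def embed_small_from_large(x_large):
    noise = 0.01 * rng.normal(size=(len(x_large), D_SMALL))
    return x_large @ hidden_map + noise

# Stage 1: label-free alignment. The same unlabeled texts are embedded by
# both models, and a linear adapter is fit so the large model's output lands
# near the small model's output. No relevance labels are used.
texts_large = embed_large(500)
texts_small = embed_small_from_large(texts_large)
W_align, *_ = np.linalg.lstsq(texts_large, texts_small, rcond=None)

# Stage 2: supervised adaptation with few labels. Relevant documents sit at a
# shifted location (`gap`) that alignment alone cannot know about.
gap = np.eye(D_SMALL) + 0.3 * rng.normal(size=(D_SMALL, D_SMALL))
query_large = embed_large(20)                      # 20 labeled queries
relevant_doc_small = embed_small_from_large(query_large) @ gap
relevant_doc_frozen = relevant_doc_small.copy()    # to show docs never change

def adapt(W_prior, X, Y, lam=1.0):
    """Ridge refit: argmin_W ||XW - Y||^2 + lam * ||W - W_prior||^2."""
    A = X.T @ X + lam * np.eye(X.shape[1])
    return np.linalg.solve(A, X.T @ Y + lam * W_prior)

def objective(W, W_prior, X, Y, lam=1.0):
    return np.sum((X @ W - Y) ** 2) + lam * np.sum((W - W_prior) ** 2)

# Only the query-side adapter is updated; document embeddings are read-only.
W_adapted = adapt(W_align, query_large, relevant_doc_small)
```

The regularization term keeps the adapted map close to the aligned one, which is one simple way to avoid overfitting when there are fewer labeled pairs than adapter parameters.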

The key innovation is decoupling the model requirements for queries and documents. ERA allows a system to leverage the latest, most capable LLMs for understanding user intent while maintaining a lean, fast-serving infrastructure for document retrieval.
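At serving time, this decoupling might look like the sketch below: a document index built once with the small embedder, and a query path that passes the large embedder's output through an adapter before scoring. The class `AsymmetricRetriever`, its methods, and the toy vectors are hypothetical, and the adapter is again assumed to be a plain matrix.

```python
import numpy as np

def l2_normalize(x):
    """Scale vectors to unit length so dot products equal cosine similarity."""
    return x / np.linalg.norm(x, axis=-1, keepdims=True)

class AsymmetricRetriever:
    """Hypothetical wrapper: frozen document index plus a swappable query adapter."""

    def __init__(self, doc_embeddings, adapter):
        # Built once from the small document embedder's output.
        self.index = l2_normalize(np.asarray(doc_embeddings, dtype=float))
        self.adapter = adapter  # matrix of shape (d_large, d_small)

    def search(self, query_embedding, k=3):
        # Project the large-model query embedding into the document space.
        q = l2_normalize(np.asarray(query_embedding, dtype=float) @ self.adapter)
        scores = self.index @ q
        top = np.argsort(-scores)[:k]
        return [(int(i), float(scores[i])) for i in top]

    def swap_adapter(self, new_adapter):
        # Upgrading query understanding touches only the adapter, not the index.
        self.adapter = new_adapter

# Toy data: four documents in a 3-d space, queries from a 5-d "large" embedder.
doc_embeddings = np.array([[1.0, 0.0, 0.0],
                           [0.0, 1.0, 0.0],
                           [0.0, 0.0, 1.0],
                           [1.0, 1.0, 0.0]])
adapter_v1 = np.eye(5)[:, :3]          # keeps the first three query dimensions
retriever = AsymmetricRetriever(doc_embeddings, adapter_v1)
index_before = retriever.index.copy()

query_embedding = np.array([0.9, 0.1, 0.0, 5.0, 5.0])
top_v1 = [doc_id for doc_id, _ in retriever.search(query_embedding, k=2)]

adapter_v2 = np.eye(5)[:, [2, 1, 0]]   # a retrained adapter, here just a reordering
retriever.swap_adapter(adapter_v2)
top_v2 = [doc_id for doc_id, _ in retriever.search(query_embedding, k=1)]
```

Swapping `adapter_v1` for `adapter_v2` changes which documents rank first while `retriever.index` stays byte-for-byte the same, which is the property that avoids re-indexing.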

The paper validates ERA on the MAIR benchmark, which spans 126 diverse retrieval tasks across 6 domains. Results show that ERA:

- Improves retrieval performance in low-label data settings.

- Can outperform methods that require larger amounts of labeled data.

- Effectively combines stronger query embedders with weaker document embedders across different domains.

Retail & Luxury Implications

This research has direct, practical implications for AI-powered search and discovery in retail and luxury. The "retrieval mismatch" it describes is endemic to the industry.

Complex Queries Meet Simple Product Catalogs: A luxury shopper doesn't just search for "black dress." They might ask, "Find me a cocktail dress for a garden wedding in May that has a vintage-inspired silhouette but feels modern, in a sustainable fabric." Interpreting this requires the nuanced understanding of a large language model. However, the product catalog contains millions of items with structured attributes (SKU, color, material, category) and unstructured descriptions that are far simpler. ERA provides a blueprint for building a system that uses a powerful LLM to comprehend the query while relying on an existing, optimized product embedding index for low-latency retrieval.

Efficiency and Cost: For global retailers with massive, constantly changing product catalogs, continuously re-embedding and re-indexing every item with a large model is costly and operationally heavy. Because ERA leaves the existing document index untouched while improving query understanding, search improvements can be iterated on and deployed without re-indexing the catalog or overhauling the backend.

Personalized Discovery & Customer Service: Beyond simple search, this framework can enhance personalized recommendation systems and AI customer service agents. An agent using Retrieval-Augmented Generation (RAG) to answer customer questions about products, policies, or styling advice must retrieve the correct information from a knowledge base. ERA could allow the agent to use a sophisticated LLM to parse the customer's complex, multi-turn dialogue while efficiently pulling facts from a lightweight, pre-indexed corporate knowledge base.

gentic.news Analysis

This paper arrives amidst a clear trend on arXiv and in industry toward making advanced AI, particularly LLMs, more efficient and practical for production systems. The Knowledge Graph shows arXiv has been a hub for 30 related articles just this week, with a strong focus on Retrieval-Augmented Generation (RAG) and Recommender Systems. This work directly connects those two themes, offering a method to make RAG architectures more efficient, which is a constant concern for engineering teams.

The framework's two-stage approach of alignment followed by adaptation is conceptually aligned with other recent efficiency research. For instance, our coverage of FAERec (2026-04-07) also focused on fusing LLM knowledge with existing signals in a computationally sensible way for recommendations. ERA tackles a similar fusion problem but from the infrastructure perspective of embedding model asymmetry.

Furthermore, the emphasis on operating in low-label settings is critical for luxury retail. Creating large datasets of perfectly labeled query-product pairs for niche, high-value items is difficult and expensive. A method that improves performance with limited labeled data lowers the barrier to implementing sophisticated AI search.

However, this is a research preprint. The MAIR benchmark, while comprehensive, is not a retail-specific dataset. The real test will be in production environments where document schemas are messy, query distributions shift, and latency requirements are extreme. The principle is sound and highly applicable, but teams should anticipate the need for careful adaptation and validation on their own data and infrastructure before considering deployment.
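One way to run that validation is to compare recall@k with and without the adapter on an in-house set of labeled queries. The sketch below assumes rankings are already available as lists of document ids per query. The function name `recall_at_k` and all ids and rankings are made-up toy values for illustration, not results from the paper.

```python
def recall_at_k(ranked, relevant, k):
    """Mean over queries of (relevant ids found in the top k) / (relevant ids).

    ranked:   dict of query id -> list of document ids, best first
    relevant: dict of query id -> non-empty set of relevant document ids
    """
    total = 0.0
    for query_id, relevant_ids in relevant.items():
        top_k = set(ranked.get(query_id, [])[:k])
        total += len(top_k & set(relevant_ids)) / len(relevant_ids)
    return total / len(relevant)

# Toy labeled set (hypothetical): two queries with known relevant products.
relevant = {"q1": {"a"}, "q2": {"b", "c"}}

# Toy rankings (hypothetical) from the existing system and from the same
# system with a query adapter added.
baseline = {"q1": ["x", "a", "y"], "q2": ["b", "x", "y"]}
adapted = {"q1": ["a", "x", "y"], "q2": ["b", "c", "x"]}

baseline_r1 = recall_at_k(baseline, relevant, k=1)
adapted_r1 = recall_at_k(adapted, relevant, k=1)
baseline_r2 = recall_at_k(baseline, relevant, k=2)
adapted_r2 = recall_at_k(adapted, relevant, k=2)
```

Running the same comparison on held-out queries, rather than the pairs used for adapter training, gives a fairer picture of whether the adapter generalizes.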

Business Impact: For technical leaders, ERA represents a potential path to significantly upgrading customer-facing search and discovery without a corresponding explosion in compute costs or a complex, risky migration of core indexing infrastructure. It enables a more agile approach to leveraging the latest LLMs for query understanding.

Implementation Approach: Adopting this would require ML engineering teams to manage two model pipelines: the large query embedder (likely hosted via an API or on dedicated inference hardware) and the lightweight document embedder with its static index. The adapter training itself is the new component, requiring MLOps for the two-stage training process. The complexity is moderate but focused on the ML layer, not the data infrastructure layer.

Governance & Risk: The primary risk is the potential introduction of bias or error through the alignment and adaptation stages if the training data is not representative. The use of a powerful LLM for queries also inherits that model's biases and limitations. Governance must ensure the adapter training data reflects brand values and desired retrieval behavior. The maturity level is early-stage research, so pilot projects in controlled environments are the recommended next step.