A notable trend is emerging in the AI industry: the velocity of shipping practical, enterprise-grade AI products is increasing, with Anthropic appearing as a primary driver. According to observations by Ethan Mollick, a professor at Wharton and a prominent AI commentator, the market is now facing a release cadence that outpaces its ability to track and integrate new information.

What's Happening

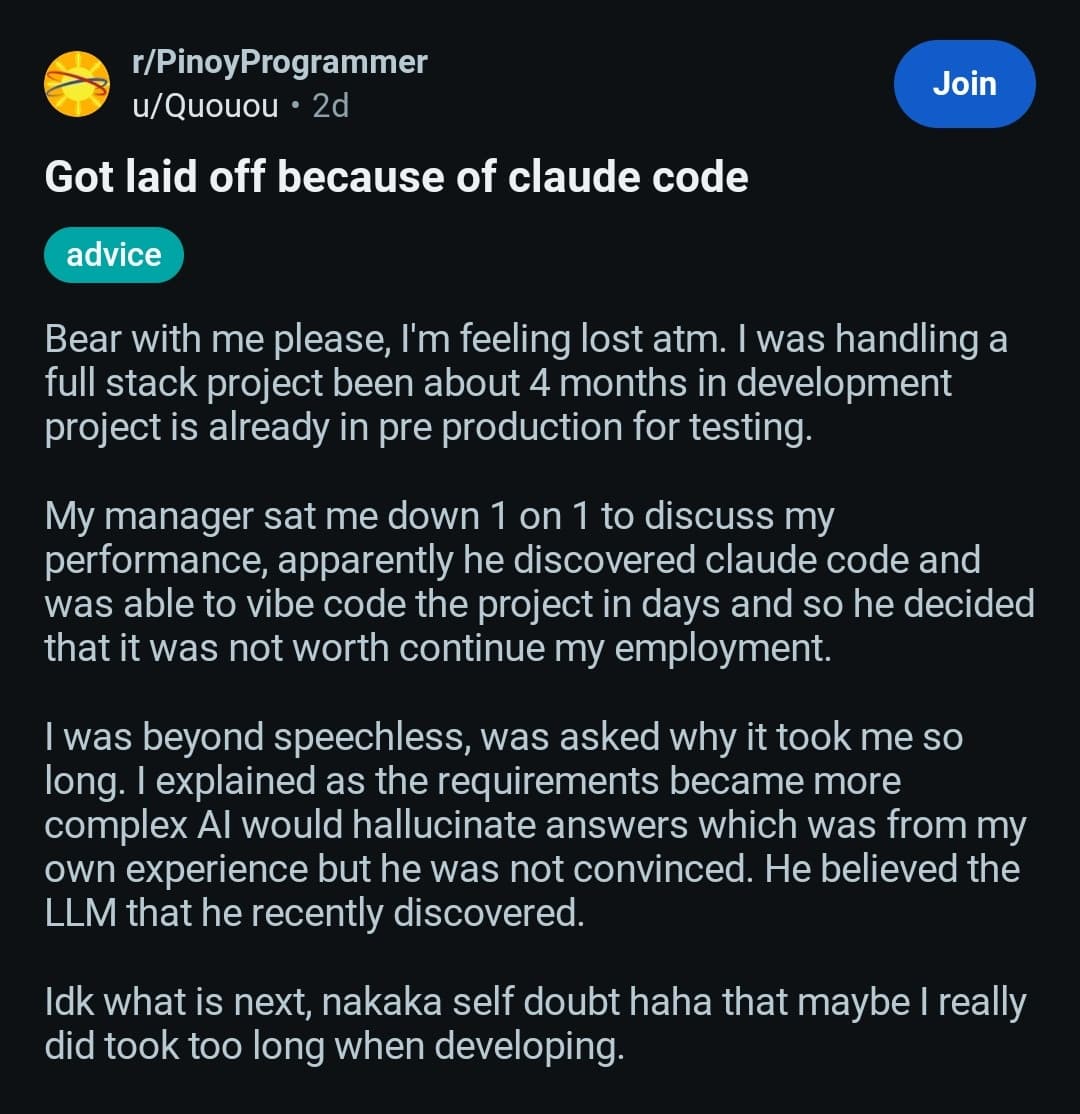

The core observation is twofold. First, the frequency of new foundation model releases from all major labs continues to accelerate, a trend well documented since 2024. Second, and more consequential for businesses, the release of "significant application and enterprise products" is accelerating in parallel. These are not just research demos or API updates, but finished tools and platforms designed for direct business use.

Mollick singles out Anthropic as a particularly active player in this second category. While his tweet does not name specific products, the acceleration suggests a strategic shift from pure model development toward aggressive productization and market capture.

The Market Absorption Challenge

The concluding note—"Almost certainly faster than the market can track or absorb information"—highlights a critical friction point. For enterprise CTOs and AI integration teams, this creates a continuous evaluation burden. The risk of adopting a product that may be superseded or deprecated within a short timeframe increases, potentially slowing overall adoption despite the increased supply.

gentic.news Analysis

This observation aligns with a strategic pattern we've tracked since late 2025. Following the release of Claude 3.5 Sonnet and the subsequent Claude 3.7 models, Anthropic's public roadmap shifted emphasis toward "enterprise-ready toolchains" and vertical solutions. This is a classic scaling move: having established competitive model capabilities (notably in coding and reasoning benchmarks), the focus turns to monetization and locking in enterprise customers through integrated workflows, not just API calls.

The acceleration also reflects intensified competition. As we covered in our analysis of Google's Gemini 2.0 rollout and Microsoft's Copilot Stack expansions, the platform war has moved decisively to the application layer. Anthropic, lacking the entrenched cloud infrastructure of its rivals, must compete on velocity and specificity—releasing tailored products for finance, legal, and R&D sectors to build its moat. The risk for the market is fragmentation and decision fatigue, where the cost of continuous evaluation begins to offset the benefits of new tools.

For practitioners, this means procurement and vendor evaluation must become a continuous process, not a quarterly project. The most useful new product for a given task may now come from an unexpected player and with little fanfare. Staying informed requires monitoring not just model papers, but GitHub repositories, enterprise blog announcements, and niche integration channels.

Frequently Asked Questions

What kind of enterprise products is Anthropic releasing?

The source doesn't specify. Based on Anthropic's 2025-2026 trajectory, they likely include specialized agents for code review and security auditing, structured data extraction pipelines for legal and financial documents, and internal knowledge base copilots with enhanced governance controls, all built atop its Claude 3.7 model family.

How can businesses keep up with this accelerated release pace?

Businesses should shift from episodic technology reviews to continuous scanning, potentially dedicating a small team or using curated intelligence services. The focus should be on solving specific, high-value pain points rather than adopting technology for its own sake. Piloting new tools in bounded, non-critical environments allows for rapid assessment without major disruption.

Does this acceleration mean model quality is improving at the same rate?

Not necessarily. Product release velocity and underlying model capability are related but distinct. A faster product cycle often means better packaging, integration, and UX of existing model capabilities. Major leaps in core reasoning or efficiency (which would be reflected in benchmarks like MMLU or GPQA) still follow a slower, more deliberate research timeline, though incremental improvements are continuous.

Is this trend unique to Anthropic?

No, but Anthropic is highlighted as a current leader in execution speed. All major AI labs (OpenAI, Google DeepMind, xAI) are pushing to productize their research. Anthropic's perceived acceleration likely stems from its need to capture enterprise market share more aggressively as a relatively pure-play AI company without a massive existing cloud or consumer product suite to leverage.