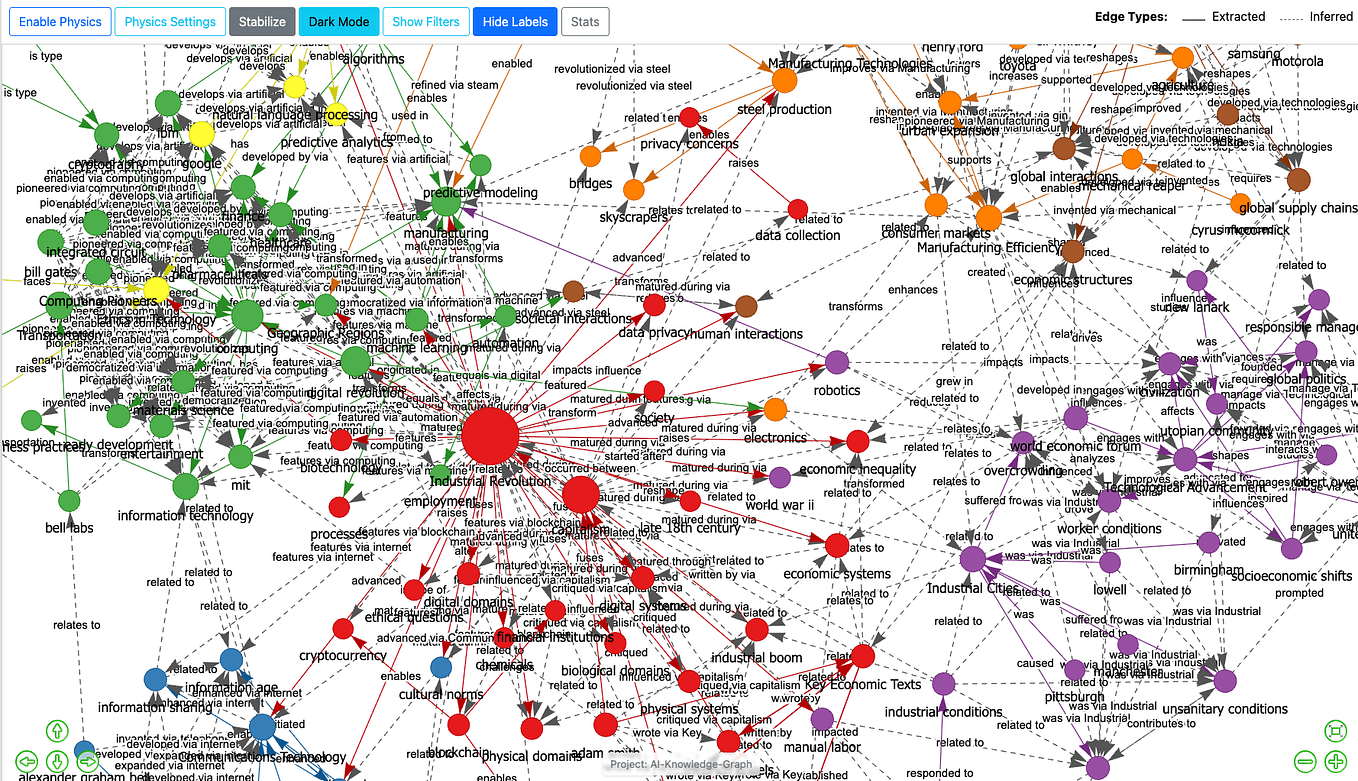

A developer has rapidly built and released a functional tool for creating LLM-powered knowledge graphs, shipping it on GitHub just 48 hours after former Tesla AI director and OpenAI founding member Andrej Karpathy tweeted about wanting such a system.

The source is a social media post highlighting the speed of this open-source development cycle. No specific details about the tool's name, architecture, or capabilities are provided in the source material. The event underscores the reactive and fast-paced nature of the AI developer community, where a high-profile figure's expressed need can catalyze immediate, public implementation.

What Happened

On April 15, 2026, Andrej Karpathy tweeted about his interest in LLM-powered knowledge graphs. Within two days, an unidentified developer had created a working version and published it on GitHub. The source does not specify the repository name, the programming language used, or the specific LLMs integrated.

Context

Knowledge graphs structure information as entities (nodes) and relationships (edges), creating a semantic network. Large Language Models (LLMs) excel at parsing and generating natural language. Combining them—using an LLM to populate, query, or reason over a knowledge graph—is an active area of research and tooling aimed at making AI systems more factual, interpretable, and connected.
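To make the node-and-edge idea concrete, here is a minimal sketch of a knowledge graph stored as (subject, relation, object) triples. It is an illustration only and is not taken from the unnamed GitHub project. The `KnowledgeGraph` class, its method names, and the relation labels `created` and `worked_at` are all hypothetical; the facts themselves come from this article.

```python
from collections import defaultdict


class KnowledgeGraph:
    """Tiny in-memory graph: entities are nodes, labeled relationships are directed edges.

    Hypothetical illustration; not the API of any real library.
    """

    def __init__(self):
        # Maps each subject entity to a list of (relation, object) pairs.
        self._edges = defaultdict(list)

    def add(self, subject, relation, obj):
        """Store one fact as a directed, labeled edge, skipping exact duplicates."""
        pair = (relation, obj)
        if pair not in self._edges[subject]:
            self._edges[subject].append(pair)

    def neighbors(self, subject, relation=None):
        """Return objects linked from `subject`, optionally filtered by relation label."""
        return [
            obj
            for rel, obj in self._edges.get(subject, [])
            if relation is None or rel == relation
        ]

    def triples(self):
        """Return every stored fact as a (subject, relation, object) tuple."""
        return [(s, r, o) for s, pairs in self._edges.items() for r, o in pairs]


kg = KnowledgeGraph()
kg.add("Andrej Karpathy", "created", "llm.c")
kg.add("Andrej Karpathy", "worked_at", "Tesla")
kg.add("Andrej Karpathy", "worked_at", "OpenAI")

print(kg.neighbors("Andrej Karpathy", "worked_at"))
```

A real system would more likely sit on a graph database or a dedicated graph library, but the triple shape is the part that an LLM would be asked to produce or query.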

Karpathy, a prominent figure in AI education and development through his YouTube lectures and projects like llm.c, often discusses architectural ideas and tooling gaps. His public musings frequently attract attention from developers who then attempt to build proposed systems.

gentic.news Analysis

This rapid build-and-ship event is a microcosm of the current open-source AI ecosystem, where the distance between a conceptual tweet and a functional repository can be as short as two days. It highlights a development culture prioritizing fast prototyping and public sharing over prolonged, closed-door research cycles. For practitioners, it signals that tooling for hybrid AI architectures, such as LLMs coupled with symbolic knowledge bases, is becoming a hotbed of grassroots innovation.

The trend aligns with increased activity we've noted around making LLMs more reliable and grounded. As covered in our February 2026 analysis of Google's Gemini 2.0 Pro with Fact-Checking API, major labs are investing heavily in retrieval and verification pipelines to combat hallucination. This community-built tool represents a parallel, bottom-up approach to the same core problem: enhancing LLMs with structured knowledge.

However, the lack of details in the announcement is telling. Building a robust, scalable LLM-powered knowledge graph is a significant engineering challenge involving entity resolution, consistent relationship mapping, and efficient graph traversal. A weekend project likely demonstrates a compelling proof-of-concept rather than a production-ready system. The real test will be whether this tool gains traction, sees commits from other developers, and evolves beyond an initial spike of interest. Its existence, though, adds to the growing toolkit for developers experimenting with graph-based AI augmentation.
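Entity resolution, one of the challenges named above, means mapping different surface forms of the same thing to a single node. The sketch below shows a deliberately naive version based on string normalization plus a hand-written alias table. The `normalize` function and `EntityResolver` class are hypothetical names, and nothing here is known to reflect how the released tool works. Production systems typically need fuzzy matching, embeddings, or human review on top of this.

```python
import unicodedata


def normalize(name: str) -> str:
    """Reduce a mention to a comparison key: Unicode-normalize, casefold,
    replace punctuation with spaces, and collapse runs of whitespace."""
    text = unicodedata.normalize("NFKC", name).casefold()
    cleaned = "".join(ch if ch.isalnum() or ch.isspace() else " " for ch in text)
    return " ".join(cleaned.split())


class EntityResolver:
    """Maps mentions to canonical entity names (hypothetical helper)."""

    def __init__(self):
        # normalized key -> canonical entity name
        self._canonical = {}

    def add_alias(self, alias: str, canonical: str) -> None:
        """Declare that `alias` refers to the entity named `canonical`."""
        self._canonical[normalize(alias)] = canonical

    def resolve(self, mention: str) -> str:
        """Return the canonical name for a mention. An unseen mention
        becomes the canonical name for its own normalized key."""
        return self._canonical.setdefault(normalize(mention), mention.strip())


resolver = EntityResolver()
resolver.add_alias("Large Language Model", "LLM")
resolver.add_alias("LLMs", "LLM")

mentions = ["LLM", "llms", "  Large language model ", "Knowledge Graph", "knowledge-graph"]
resolved = [resolver.resolve(m) for m in mentions]
print(resolved)
```

Without a step like this, the same entity ends up as several disconnected nodes, which is one reason a quick prototype can look complete while still being far from production quality.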

Frequently Asked Questions

What is an LLM-powered knowledge graph?

An LLM-powered knowledge graph is a system that uses a Large Language Model to assist in creating, populating, querying, or reasoning over a knowledge graph. The LLM might interpret natural language to extract entities and relationships for the graph, or it might answer questions by retrieving and synthesizing information from the graph's structured data.
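The extraction path described above can be sketched roughly as follows. This is a sketch under stated assumptions, not the released tool's implementation: the prompt wording, the JSON output format, and the `extract_triples` and `facts_about` helpers are all hypothetical, and `fake_llm` is a canned stand-in for a real model call so the example runs without any API.

```python
import json
from typing import Callable, List, Tuple

Triple = Tuple[str, str, str]

# Hypothetical prompt; a real system would tune this and pin an output schema.
EXTRACTION_PROMPT = (
    "Extract facts from the text as a JSON list of "
    "[subject, relation, object] triples. Text: {text}"
)


def extract_triples(text: str, llm: Callable[[str], str]) -> List[Triple]:
    """Ask the model for triples and keep only well-formed ones.

    Model output is untrusted, so anything that is not valid JSON or not a
    list of three non-empty strings is dropped rather than stored.
    """
    raw = llm(EXTRACTION_PROMPT.format(text=text))
    try:
        items = json.loads(raw)
    except json.JSONDecodeError:
        return []
    if not isinstance(items, list):
        return []
    triples: List[Triple] = []
    for item in items:
        if (
            isinstance(item, list)
            and len(item) == 3
            and all(isinstance(part, str) and part.strip() for part in item)
        ):
            triples.append((item[0].strip(), item[1].strip(), item[2].strip()))
    return triples


def facts_about(entity: str, triples: List[Triple]) -> List[Triple]:
    """Retrieve every stored fact that mentions `entity` as subject or object."""
    return [t for t in triples if entity in (t[0], t[2])]


def fake_llm(prompt: str) -> str:
    """Stand-in for a real model call (assumption): returns a canned response
    containing one valid triple and one malformed entry."""
    return json.dumps([
        ["Andrej Karpathy", "created", "llm.c"],
        ["Andrej Karpathy", "worked_at"],  # malformed: only two elements
    ])


graph = extract_triples("Andrej Karpathy created llm.c.", fake_llm)
print(facts_about("llm.c", graph))
```

For the question-answering path, the facts returned by a lookup like `facts_about` would be passed back to the model as context, so that its answer is tied to stored, checkable triples rather than to its own recall.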

Why did Andrej Karpathy want one?

While his exact tweet isn't quoted in the source, Karpathy has consistently advocated for AI architectures that combine the strengths of different approaches. LLMs have broad knowledge and language understanding but can be imprecise or hallucinate. Knowledge graphs offer precise, structured, and verifiable relationships. A hybrid system could leverage the LLM's flexibility to interact with the rigor of a knowledge graph, potentially leading to more reliable and explainable AI systems.

Where can I find this GitHub project?

The original source does not name the specific GitHub repository. Finding it would likely require searching GitHub for recent projects related to "knowledge graph" and "LLM" or monitoring social media threads stemming from Karpathy's original tweet. The speed of development suggests it is a relatively new repository.

Is this a common way for AI tools to be created?

Yes, increasingly so. The open-source AI community is highly reactive. A blog post, research paper, or tweet from a respected figure often sparks a wave of implementations, forks, and derivatives. This accelerates innovation and democratizes access to new ideas but can also lead to a proliferation of similar, minimally-maintained projects.