AI video generation platform HeyGen has launched what it calls its "most complete" platform update of 2026, introducing three significant technical features: a new avatar engine, an open-source video renderer, and 175-language dubbing capabilities. The announcement, made via social media, positions HeyGen to compete more directly with established players like Synthesia and Runway in the enterprise and creator AI video markets.

What's New: Three Core Technical Features

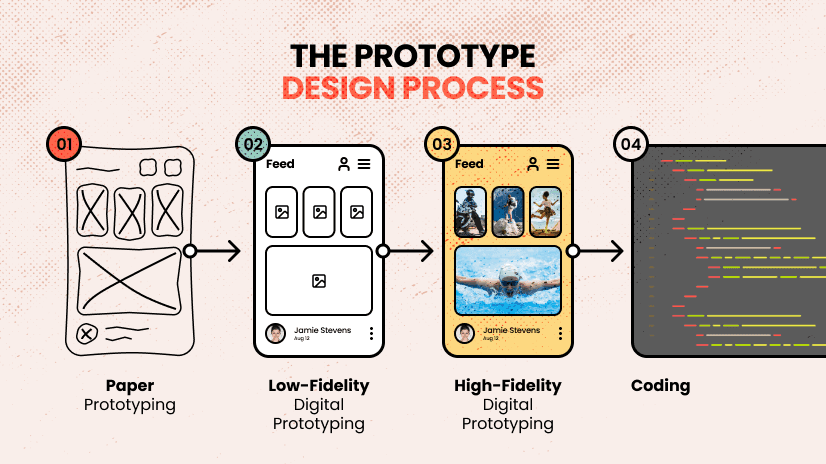

1. New Avatar Engine

HeyGen's updated avatar engine is the core of its synthetic media offering. The initial announcement did not disclose architectural details. The engine plausibly relies on diffusion- or GAN-based generation, two widely used approaches for photorealistic talking-head avatars. The update appears to target lip-sync accuracy, range of emotional expression, and rendering quality. These factors are critical for enterprise training videos, marketing content, and personalized communications.

2. Open-Source Video Renderer

In a notable move toward developer adoption, HeyGen has released an open-source video renderer. This component handles the final composition and output of generated video content. By open-sourcing this renderer, HeyGen enables technical teams to integrate, customize, and potentially contribute to the rendering pipeline, addressing a common pain point where proprietary rendering engines create vendor lock-in and limit customization.
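The announcement does not describe the renderer's interface. The sketch below uses ffmpeg as a stand-in to show what "final composition and output" typically involves: overlaying a generated avatar clip on a background video and muxing in an audio track. The function name, file names, and overlay position are illustrative assumptions, and nothing here reflects HeyGen's code.

```python
from typing import List


def build_compose_command(
    background: str,
    avatar: str,
    audio: str,
    output: str,
    x: int = 0,
    y: int = 0,
) -> List[str]:
    """Return an ffmpeg argv that overlays an avatar clip on a background
    video and muxes in a separate audio track.

    Hypothetical helper: ffmpeg stands in for a renderer here; this is NOT
    HeyGen's open-source renderer.
    """
    filter_graph = f"[0:v][1:v]overlay={x}:{y}[v]"  # draw input 1 on top of input 0
    return [
        "ffmpeg",
        "-y",                    # overwrite the output file without prompting
        "-i", background,        # input 0: background video
        "-i", avatar,            # input 1: avatar clip (ideally with an alpha channel)
        "-i", audio,             # input 2: original or dubbed audio
        "-filter_complex", filter_graph,
        "-map", "[v]",           # video comes from the overlay output
        "-map", "2:a",           # audio comes from input 2
        "-c:v", "libx264",
        "-c:a", "aac",
        "-shortest",             # stop when the shortest stream ends
        output,
    ]


if __name__ == "__main__":
    cmd = build_compose_command("bg.mp4", "avatar.mov", "voice.wav", "out.mp4", x=640, y=120)
    print(" ".join(cmd))
    # To actually render (requires ffmpeg on PATH):
    # import subprocess; subprocess.run(cmd, check=True)
```

Returning an argument list rather than a shell string avoids quoting problems with the filter graph when the command is passed to `subprocess.run`.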

3. 175-Language Dubbing

The platform now supports dubbing into 175 languages, a substantial expansion from previous capabilities. This feature likely combines several AI subsystems:

- Speech-to-Text for transcribing original audio

- Machine Translation for converting scripts

- Text-to-Speech with voice cloning to maintain speaker identity

- Prosody and timing adjustment to match lip movements
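The four stages above can be chained as in the following minimal sketch. The stage functions are hypothetical placeholders supplied by the caller, since real systems would wrap ASR, translation, and voice-cloning TTS models. The timing step is reduced to a clamped playback-rate factor, and its 0.8 to 1.25 bounds are arbitrary. The sketch illustrates the general pattern, not HeyGen's pipeline.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Segment:
    start: float  # seconds into the source audio
    end: float
    text: str     # transcribed source-language text


# Hypothetical stage signatures supplied by the caller.
Transcribe = Callable[[str], List[Segment]]   # audio path -> timed segments
Translate = Callable[[str, str], str]         # (text, target lang) -> translation
# (text, target lang) -> duration in seconds of the synthesized clip; a real
# voice-cloning TTS call would also take a speaker reference and return audio.
Synthesize = Callable[[str, str], float]

PlanRow = Tuple[float, float, str, float]     # (start, end, translation, rate)


def fit_rate(source_dur: float, synth_dur: float,
             lo: float = 0.8, hi: float = 1.25) -> float:
    """Playback-rate factor that fits synthesized speech into the original
    segment's time slot (>1 means speed up), clamped to [lo, hi] so the
    voice stays natural."""
    return max(lo, min(hi, synth_dur / source_dur))


def dub(audio_path: str, target_lang: str, transcribe: Transcribe,
        translate: Translate, synthesize: Synthesize) -> List[PlanRow]:
    plan: List[PlanRow] = []
    for seg in transcribe(audio_path):                   # 1. speech-to-text
        translated = translate(seg.text, target_lang)    # 2. machine translation
        synth_dur = synthesize(translated, target_lang)  # 3. TTS with cloned voice
        rate = fit_rate(seg.end - seg.start, synth_dur)  # 4. timing adjustment
        plan.append((seg.start, seg.end, translated, rate))
    return plan


if __name__ == "__main__":
    def fake_asr(path: str) -> List[Segment]:
        return [Segment(0.0, 2.0, "Hello everyone"),
                Segment(2.0, 5.0, "Welcome to the demo")]

    def fake_mt(text: str, lang: str) -> str:
        return f"[{lang}] {text}"

    def fake_tts(text: str, lang: str) -> float:
        return 0.12 * len(text)  # pretend duration grows with text length

    for row in dub("talk.wav", "de", fake_asr, fake_mt, fake_tts):
        print(row)
```

Clamping is a design choice. Stretching speech too far sounds unnatural, so segments that still overrun their slot would typically be handled by re-translating with shorter phrasing rather than by speeding up further.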

The 175-language coverage suggests HeyGen is leveraging large multilingual models, such as Meta's NLLB for translation or Google's Universal Speech Model for speech recognition, rather than building custom models for each language pair.
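A quick count shows why per-pair models would not scale. The count assumes any-to-any dubbing among all supported languages, with one model per translation direction:

```latex
N = 175, \qquad N(N-1) = 175 \times 174 = 30{,}450 \ \text{directional language pairs}
```

A single multilingual model would replace all of these. Even with a fixed source language, the per-pair approach would still need $N - 1 = 174$ separate models.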

Technical Implementation & Access

While the announcement tweet provides high-level feature descriptions, the linked breakdown offers more practical implementation details:

Avatar Creation Workflow: Users can reportedly create custom avatars with as little as a 2-minute video sample, suggesting improvements in few-shot learning approaches for voice and visual cloning.
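The helper below puts "as little as a 2-minute video sample" in perspective by estimating how much raw signal such a clip contains. It is a hypothetical pre-upload check, not a HeyGen API. The 120-second minimum comes from the reported figure. The 30 fps video and 16 kHz mono audio settings are assumptions.

```python
from dataclasses import dataclass

# Assumption: 120 s is the reported minimum; 30 fps video and 16 kHz mono audio
# are common capture/ASR settings, not figures HeyGen has published.
MIN_SAMPLE_SECONDS = 120.0


@dataclass(frozen=True)
class SampleBudget:
    frames: int          # video frames available for visual cloning
    audio_samples: int   # audio samples available for voice cloning
    meets_minimum: bool  # whether the clip reaches the reported 2-minute floor


def sample_budget(duration_s: float, fps: float = 30.0,
                  sample_rate: int = 16_000) -> SampleBudget:
    """Estimate the raw training signal a reference clip provides."""
    return SampleBudget(
        frames=int(duration_s * fps),
        audio_samples=int(duration_s * sample_rate),
        meets_minimum=duration_s >= MIN_SAMPLE_SECONDS,
    )


if __name__ == "__main__":
    print(sample_budget(120))
```

Under these assumptions, `sample_budget(120)` reports 3,600 frames and 1,920,000 audio samples, which is small by the standards of training a model from scratch. This is why few-shot adaptation of a pretrained model is the plausible explanation.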

Renderer Integration: The open-source renderer appears designed for both cloud API and on-premise deployment, with Docker containers and Kubernetes configurations mentioned in community discussions.
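The Docker and Kubernetes configurations are only reported from community discussions, and the announcement gives no specifics. Under that uncertainty, the function below is a minimal sketch that builds a standard Kubernetes `apps/v1` Deployment for a self-hosted renderer container. The image name, labels, and GPU request are placeholders, not values from HeyGen's repository.

```python
import json


def renderer_deployment(image: str, replicas: int = 1, gpus: int = 1) -> dict:
    """Build a Kubernetes apps/v1 Deployment manifest for a self-hosted renderer.

    Hypothetical: HeyGen's actual container image, ports, and resource
    requirements are not given in the announcement.
    """
    labels = {"app": "video-renderer"}  # placeholder label used by the selector
    container = {"name": "renderer", "image": image}
    if gpus > 0:
        # GPU scheduling requires the NVIDIA device plugin on the cluster.
        container["resources"] = {"limits": {"nvidia.com/gpu": gpus}}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": "video-renderer", "labels": labels},
        "spec": {
            "replicas": replicas,
            "selector": {"matchLabels": labels},  # must match the pod template labels
            "template": {
                "metadata": {"labels": labels},
                "spec": {"containers": [container]},
            },
        },
    }


if __name__ == "__main__":
    # Pipe into kubectl, e.g.: python renderer_manifest.py | kubectl apply -f -
    manifest = renderer_deployment("registry.example.com/video-renderer:latest", replicas=2)
    print(json.dumps(manifest, indent=2))
```

Emitting JSON rather than YAML keeps the sketch dependency-free, and `kubectl apply -f` accepts either format. Setting `gpus=0` drops the GPU limit for CPU-only rendering, which bears directly on the computational-requirements question raised below.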

Dubbing Pipeline: The multilingual dubbing system maintains emotional tone and speaker characteristics across languages, using what HeyGen describes as "context-aware voice transfer" technology.

Competitive Landscape

HeyGen's 2026 update directly addresses several competitive gaps:

| Feature | HeyGen | Synthesia | Runway |
|---|---|---|---|
| Avatar Languages | 175 | 120+ | N/A (focus on editing) |
| Renderer Openness | Open-source | Proprietary | Proprietary |
| Custom Avatar Creation | 2-minute sample | 10+ minutes | Limited avatar focus |
| Primary Use Case | Enterprise video | Enterprise training | Creative professionals |

Platform Strategy & Business Implications

HeyGen appears to be pursuing a hybrid strategy:

- Enterprise SaaS for no-code video creation

- Developer platform via open-source components and APIs

- Global expansion through extensive language support

The open-source renderer is particularly strategic—it lowers adoption barriers for technical teams while potentially creating a contributor community that improves the core technology. The 175-language dubbing targets multinational corporations needing consistent training and marketing materials across regions.

Limitations & Considerations

Initial user reports suggest three areas needing verification:

- Avatar quality consistency across the expanded language set

- Computational requirements for local rendering deployment

- Ethical safeguards for voice and likeness cloning

As with all synthetic media platforms, responsible use policies and content verification mechanisms will be crucial for enterprise adoption.

gentic.news Analysis

HeyGen's 2026 update represents a calculated expansion in the increasingly crowded AI video generation market. The company appears to be borrowing strategic plays from different sectors: the open-source approach mirrors Stability AI's playbook with Stable Diffusion, while the extensive language support follows the localization strategy of global SaaS platforms.

This release follows HeyGen's $5.6 million seed round in late 2024, which the company indicated would fund "platform expansion and research into multimodal generation." The 175-language dubbing capability particularly stands out—it surpasses Synthesia's 120+ languages and suggests HeyGen has made significant investments in multilingual speech models, possibly leveraging recent advances in self-supervised speech representation learning.

The open-source renderer release is noteworthy because it addresses a common enterprise objection to AI video platforms: vendor lock-in. By allowing technical teams to customize the rendering pipeline, HeyGen may gain traction with organizations that have specific compliance, integration, or performance requirements. This aligns with a broader trend we've observed where AI infrastructure companies balance proprietary differentiators with open-source commoditization.

However, the AI video generation space remains fiercely competitive. Just last month, Runway announced Gen-3 Alpha with improved temporal consistency, while Synthesia expanded its avatar emotion controls. HeyGen's success will depend not just on feature parity but on delivering superior quality and workflow integration—particularly for enterprise customers who value reliability over experimental features.

From a technical perspective, the most interesting aspect may be how HeyGen maintains avatar consistency across 175 languages. Most voice cloning systems degrade significantly when applied to languages not present in the training data. If HeyGen has solved this through better multilingual representations or efficient adaptation techniques, that advancement could have applications beyond video generation.
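One common way to quantify voice consistency across languages is speaker similarity. Both the original and the dubbed audio are embedded with a speaker-verification model, and the embeddings are compared by cosine similarity. The sketch assumes the embeddings are already computed, and the vectors in the usage example are made up. The 0.75 threshold is an arbitrary placeholder. This is not a description of how HeyGen evaluates its system.

```python
import math
from typing import Dict, List, Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two equal-length embedding vectors."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    if norm == 0:
        raise ValueError("zero-length embedding")
    return dot / norm


def flag_drift(reference: Sequence[float],
               dubbed_by_lang: Dict[str, Sequence[float]],
               threshold: float = 0.75) -> List[str]:
    """Return languages whose dubbed-voice embedding drifts from the reference.

    The threshold is a placeholder; real cutoffs depend on the speaker-embedding
    model and would be calibrated on labeled same/different-speaker pairs.
    """
    return sorted(
        lang for lang, emb in dubbed_by_lang.items()
        if cosine_similarity(reference, emb) < threshold
    )


if __name__ == "__main__":
    ref = [0.9, 0.1, 0.4]  # made-up embedding of the original speaker
    dubbed = {"de": [0.85, 0.15, 0.42], "yo": [0.1, 0.9, 0.2]}
    print(flag_drift(ref, dubbed))
```

Running such a check per language would show whether quality holds up in languages that are poorly represented in training data, which is exactly where degradation is expected.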

Frequently Asked Questions

What is HeyGen's new avatar engine?

HeyGen's updated avatar engine generates photorealistic human avatars for video content. The announcement gives few technical details. The engine likely uses diffusion models or GANs, and it can reportedly create a custom avatar from as little as 2 minutes of video. It handles lip-sync and emotional expression and is intended to keep avatars consistent across languages and contexts, although that consistency has yet to be independently verified.

Is HeyGen's video renderer truly open-source?

According to the announcement, yes. HeyGen has released a video renderer as open-source software, which means developers can access the source code, modify it for their needs, and potentially contribute improvements back to the project. The renderer handles the final composition and output of AI-generated videos. Its open-source status allows greater customization and integration flexibility than proprietary alternatives, though the specific license determines what commercial use and redistribution are permitted.

How does the 175-language dubbing work technically?

The dubbing system combines several AI components: speech recognition transcribes the original audio, machine translation converts the text to target languages, and text-to-speech with voice cloning generates the new audio while preserving the speaker's vocal characteristics. The system likely uses large multilingual models trained on diverse speech data, with additional processing to match lip movements and emotional tone across languages.

How does HeyGen compare to Synthesia and Runway?

HeyGen now competes more directly with Synthesia in enterprise AI video, offering more languages (175 vs. 120+) and an open-source renderer. Unlike Runway, which focuses on creative professionals and video editing tools, HeyGen targets business use cases like training videos, marketing content, and personalized communications. Each platform has different strengths: Synthesia has deeper enterprise integrations, Runway offers more creative control, while HeyGen emphasizes language support and customization.