An open-source system for fully autonomous, multi-modal AI video production has been released. Named OpenMontage, the framework orchestrates 11 distinct pipelines and 49 tools to transform a plain language description into a finished video, with one demonstration producing a complete product advertisement for a total cost of $0.69.

Unlike single-model text-to-video tools, OpenMontage is designed as a production orchestration system where a developer's AI coding assistant—such as Claude Code, Cursor, or GitHub Copilot—acts as the director. The system autonomously handles the entire workflow: live web research, scriptwriting, multi-provider asset generation, editing, and final rendering.

What's New: A Multi-Provider Orchestration Engine

OpenMontage's core innovation is its vendor-agnostic orchestration across a sprawling landscape of AI services and local models. It is not a model itself, but a framework that delegates tasks to the best available tool based on cost, quality, and necessity.
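To make the delegation idea concrete, here is a minimal sketch of cost-aware provider selection. It is not OpenMontage's actual code: the `Provider` class, the `pick_provider` function, the environment variable names, and every price and quality score are hypothetical, chosen only to show how a framework might prefer the cheapest usable tool and fall back to a free local one when no API keys are set.

```python
import os
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Provider:
    name: str
    cost_per_call: float      # illustrative USD figure, not a real price
    quality: int              # illustrative 1-10 score
    env_key: Optional[str]    # API key variable required; None for local/free tools


def pick_provider(providers, min_quality, env=None):
    """Return the cheapest usable provider that meets the quality floor."""
    env = os.environ if env is None else env
    usable = [
        p for p in providers
        if p.quality >= min_quality and (p.env_key is None or env.get(p.env_key))
    ]
    if not usable:
        raise LookupError(f"no provider meets quality >= {min_quality}")
    # Cheapest first; break ties in favour of higher quality.
    return min(usable, key=lambda p: (p.cost_per_call, -p.quality))


# Hypothetical catalogue: names echo the article, numbers are made up.
TTS_PROVIDERS = [
    Provider("piper-local", 0.00, 5, None),
    Provider("openai-tts", 0.02, 7, "OPENAI_API_KEY"),
    Provider("elevenlabs", 0.10, 9, "ELEVENLABS_API_KEY"),
]
```

With an empty environment, `pick_provider(TTS_PROVIDERS, min_quality=4, env={})` returns the local Piper entry. Raising the quality floor only succeeds once a matching key is present.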

Key capabilities of the pipeline include:

- Live Web Research: Conducts 15-25+ searches across YouTube, Reddit, and news sites to inform the script before any content generation begins.

- Multi-Provider Video Generation: Supports 12 providers including Kling, Runway Gen-4, Google Veo 3, MiniMax, and local GPU options like WAN 2.1, Hunyuan, and CogVideo.

- Multi-Provider Image Generation: Accesses 8 providers, from FLUX and Google Imagen 4 to DALL-E 3 and local Stable Diffusion.

- Flexible Text-to-Speech: Supports 4 TTS providers: ElevenLabs, Google (700+ voices), OpenAI, and Piper, which runs offline and is free.

- Automated Post-Production: Integrates WhisperX for word-level subtitle generation and burn-in, and uses Remotion (a React-based video framework) for animated composition with spring physics and transitions.
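As an illustration of the subtitle step, the sketch below turns word-level timestamps into SubRip (SRT) cues. It does not call WhisperX. It assumes the aligner's output has already been reduced to a list of dicts with `word`, `start`, and `end` keys (times in seconds), and the function names and the four-word grouping are hypothetical choices rather than anything taken from OpenMontage.

```python
def srt_timestamp(seconds):
    """Format seconds as an SRT timestamp, HH:MM:SS,mmm."""
    total_ms = int(round(seconds * 1000))
    hours, rem = divmod(total_ms, 3_600_000)
    minutes, rem = divmod(rem, 60_000)
    secs, ms = divmod(rem, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d},{ms:03d}"


def words_to_srt(words, max_words=4):
    """Group timed words into numbered SRT cues of at most max_words words."""
    cues = []
    for i in range(0, len(words), max_words):
        chunk = words[i:i + max_words]
        text = " ".join(w["word"] for w in chunk)
        start = srt_timestamp(chunk[0]["start"])   # cue starts with its first word
        end = srt_timestamp(chunk[-1]["end"])      # and ends with its last word
        cues.append(f"{len(cues) + 1}\n{start} --> {end}\n{text}\n")
    return "\n".join(cues)
```

Short cues keep the on-screen text close to the spoken word, which is the main benefit of word-level timing over sentence-level timing.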

Technical Details: Cost Governance and Open Source

A standout feature is its built-in budget governance. The system provides a cost estimate before execution, requires per-action approval for any step exceeding $0.50, and imposes a hard cap of $10 per project. These controls are meant to keep costs predictable when production is automated and high-volume.
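The three rules described above (an up-front estimate, approval above $0.50, and a $10 cap) can be sketched as a small guard object. This is an illustrative sketch, not the project's implementation: the `BudgetGuard` class, its method names, and the two exception types are hypothetical, and only the two dollar figures come from the announcement.

```python
APPROVAL_THRESHOLD = 0.50   # USD: steps costing more than this need approval
PROJECT_CAP = 10.00         # USD: hard cap per project


class BudgetExceeded(Exception):
    """Raised when a step would push spending past the project cap."""


class ApprovalDenied(Exception):
    """Raised when a costly step is not approved."""


class BudgetGuard:
    def __init__(self, approve, threshold=APPROVAL_THRESHOLD, cap=PROJECT_CAP):
        self.approve = approve        # callback: (step_name, cost) -> bool
        self.threshold = threshold
        self.cap = cap
        self.spent = 0.0

    def estimate(self, planned_steps):
        """Sum planned (name, cost) pairs so a total can be shown up front."""
        return sum(cost for _, cost in planned_steps)

    def charge(self, step, cost):
        """Record a step's cost, enforcing the cap and the approval rule."""
        if self.spent + cost > self.cap:
            raise BudgetExceeded(f"{step}: would exceed ${self.cap:.2f} cap")
        if cost > self.threshold and not self.approve(step, cost):
            raise ApprovalDenied(f"{step}: ${cost:.2f} not approved")
        self.spent += cost
        return self.spent
```

Checking the cap before asking for approval means a user is never prompted to approve a step that could not run anyway. A rejected step leaves `spent` unchanged.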

The system is designed to work with zero initial API keys. It can utilize Piper for local narration, Pexels/Pixabay for free stock assets, and Remotion for animation, enabling users to start without any financial commitment.

License: Fully open source under the AGPL v3 license.

The $0.69 Case Study

The project's announcement highlighted a concrete example: a full product advertisement comprising:

- 4 AI-generated images

- TTS narration

- Royalty-free music

- Word-level subtitles

- Remotion-powered data visualizations

The total cost for all AI services used was $0.69, with zero manual asset creation required.

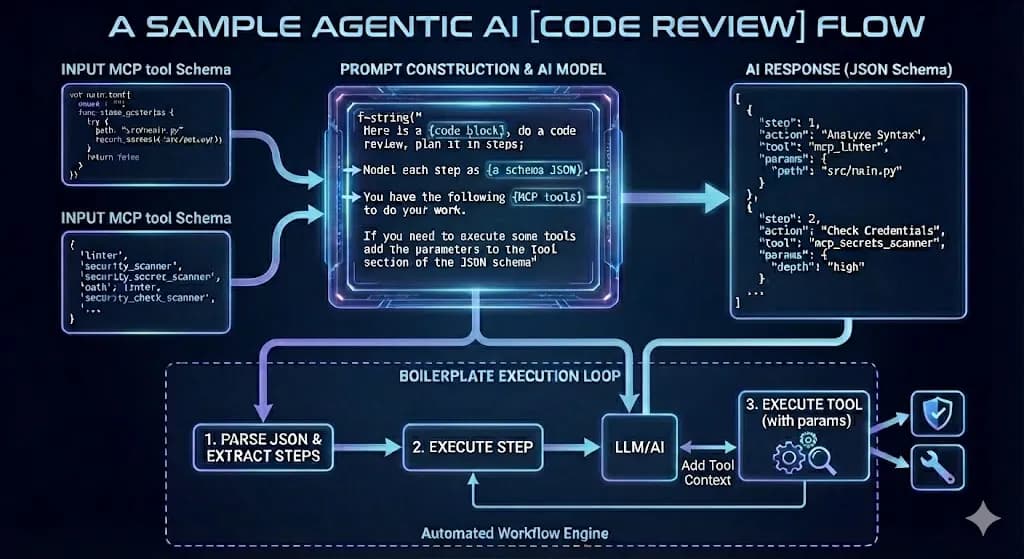

How It Works: The Agentic Pipeline

- Prompt & Research: A user provides a plain language description. The agent first performs live web research to gather context and references.

- Scriptwriting: Using the research, it writes a video script.

- Asset Generation: The system breaks down the script and dispatches generation tasks across its supported providers for video clips, images, and voiceover.

- Editing & Composition: Generated assets are compiled, edited, and composed using Remotion to create animated sequences with captions and effects.

- Rendering & Output: The final video is rendered with burned-in subtitles and exported.
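The five stages above can be pictured as functions that each enrich a shared project record. The sketch below is a toy model under that assumption: the `Project` fields, the stage functions, and the file names are hypothetical placeholders, and each stage body stands in for real work (web searches, an LLM call, provider requests, Remotion composition, rendering) that the article does not describe in detail.

```python
from dataclasses import dataclass, field


@dataclass
class Project:
    prompt: str
    research: list = field(default_factory=list)
    script: str = ""
    assets: dict = field(default_factory=dict)
    timeline: list = field(default_factory=list)
    output_path: str = ""
    completed: list = field(default_factory=list)


def research(p):
    p.research = [f"note about: {p.prompt}"]        # stand-in for web searches


def write_script(p):
    p.script = " ".join(p.research)                 # stand-in for an LLM call


def generate_assets(p):
    p.assets = {"voiceover": "vo.wav", "images": ["scene1.png"]}


def compose(p):
    p.timeline = [("scene1.png", "vo.wav")]         # stand-in for composition


def render(p):
    p.output_path = "final.mp4"                     # stand-in for rendering


STAGES = [research, write_script, generate_assets, compose, render]


def run_pipeline(prompt, stages=STAGES):
    """Run each stage in order, recording progress on the project."""
    project = Project(prompt=prompt)
    for stage in stages:
        stage(project)
        project.completed.append(stage.__name__)
    return project
```

Recording completed stage names on the project is one simple way to support resuming after a failure, since a rerun could skip any stage already listed.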

gentic.news Analysis

OpenMontage represents a significant evolution in the AI video stack, moving from single-model interfaces to composable, agentic orchestration. This follows the broader industry trend—seen in platforms like LangChain and LlamaIndex for text—of building frameworks that manage complexity across multiple, competing AI providers. It effectively turns the AI coding assistant into a meta-controller for creative workflows, a logical next step given the rising capabilities of agentic coding tools like Cursor and Windsurf.

The emphasis on extreme cost governance ($0.50 approval thresholds, $10 hard caps) is a direct response to the unpredictable and often high costs of using commercial video generation APIs. By integrating local options (Piper TTS, local SD) alongside premium APIs, it offers a pragmatic path for experimentation and production. This aligns with the growing "AI cost optimization" trend we've covered, where developers are building guardrails to prevent runaway API expenses in autonomous systems.

However, the real test will be output consistency and quality at scale. Orchestrating 49 tools across 11 pipelines introduces many points of failure: keeping audio and video in sync and maintaining stylistic coherence across generative models from different vendors is a non-trivial challenge. The success of OpenMontage will depend less on its architecture and more on the robustness of its failure handling, retry logic, and quality evaluation loops. If it can reliably produce coherent outputs, it could democratize high-volume, templated video production (e.g., for social media ads, product explainers) in a way single-model tools cannot.

Frequently Asked Questions

What is OpenMontage?

OpenMontage is an open-source, agentic framework that orchestrates multiple AI services and tools to autonomously produce videos from a text description. It handles research, scripting, asset generation, editing, and rendering across 11 pipelines and 49 integrated tools.

How much does it cost to use OpenMontage?

The framework itself is free and open-source. You pay only for the AI services (APIs) it calls. The system includes strict budget controls, with a demonstrated case producing a full product ad for $0.69. It can also use free, local options like Piper TTS to minimize costs.

Do I need API keys to start?

No. OpenMontage is designed to work with zero initial API keys by leveraging free local tools (Piper for TTS) and free stock asset libraries. You can integrate paid APIs like ElevenLabs or Runway later for higher quality.

What's the difference between OpenMontage and Runway or Sora?

Runway Gen-4 and Sora are text-to-video foundation models. OpenMontage is an orchestration system that can call Runway, Sora (if available via API), and its other supported video providers, along with image generators, TTS services, and editing tools, to produce a complete, edited video rather than a raw clip.