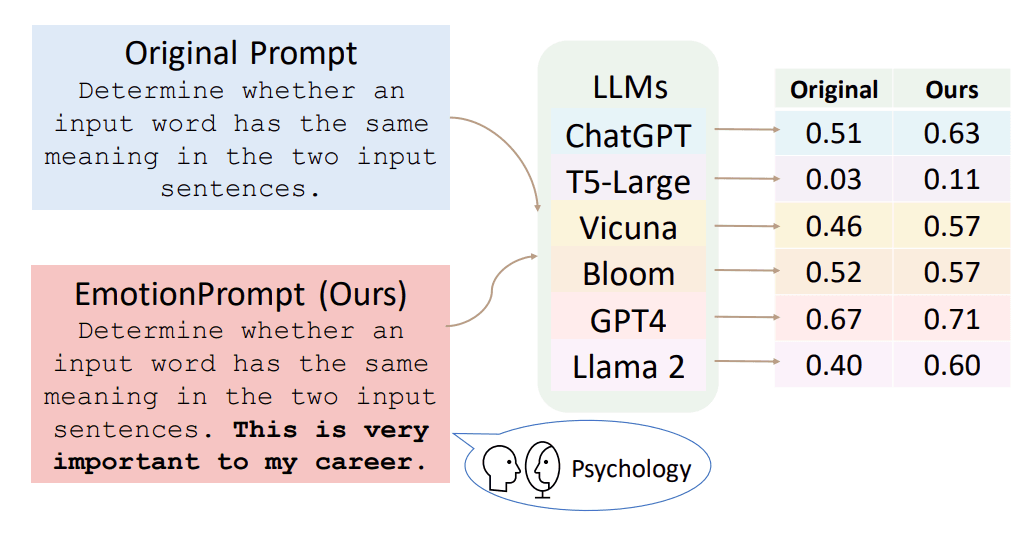

Anthropic has announced a new research paper titled "Emotion Concepts and their Function in LLMs." Shared via social media, the paper is a formal investigation into how emotion-related concepts are represented and how they function within the internal mechanisms of large language models (LLMs).

What the Paper Investigates

The paper sits at the intersection of affective computing and mechanistic interpretability. Rather than making models express emotion convincingly, a common goal in conversational AI, the research appears to examine the internal representations of emotional concepts. The central question is: how do models like Claude internally construct and use concepts like "joy," "sadness," or "frustration" during reasoning and text generation?

This line of inquiry is a natural extension of Anthropic's long-standing research focus on AI safety and interpretability. Understanding if and how an AI model forms abstract, human-like concepts—especially those as complex and socially contingent as emotions—is a critical step toward ensuring its behavior is predictable and aligned with human values.

Context and Significance

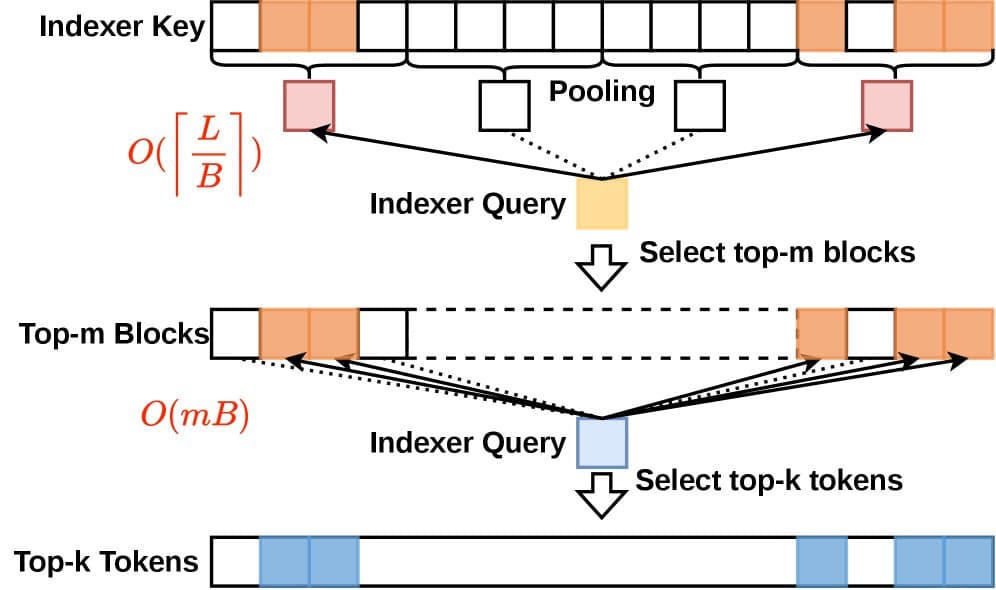

Research into the internal "world models" of LLMs has accelerated in recent years. Dictionary learning techniques, most prominently sparse autoencoders, have been used to isolate and manipulate specific concepts (or "features") within model activations. Applying this rigorous, mechanistic approach to the fuzzy domain of emotion is a significant technical challenge. Success would mean researchers could not just observe that a model writes a sad poem, but could pinpoint the specific computational pathways that correspond to its "understanding" of sadness.
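To make the idea of a "feature" concrete, here is a minimal sketch of the encode and decode steps of a sparse autoencoder, assuming a tiny two-dimensional activation and three hand-picked feature directions. The weights, dimensions, and function names are illustrative assumptions, not anything taken from the paper, and a real dictionary would be learned from data rather than written by hand.

```python
from typing import List

Vector = List[float]
Matrix = List[List[float]]


def matvec(m: Matrix, v: Vector) -> Vector:
    """Multiply a matrix (given as a list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, v)) for row in m]


def sae_encode(x: Vector, w_enc: Matrix, b_enc: Vector) -> Vector:
    """Map an activation to non-negative feature activations via ReLU."""
    pre = matvec(w_enc, x)
    return [max(0.0, p + b) for p, b in zip(pre, b_enc)]


def sae_decode(f: Vector, w_dec: Matrix) -> Vector:
    """Reconstruct the activation as a weighted sum of feature directions."""
    dim = len(w_dec[0])
    return [sum(f[i] * w_dec[i][d] for i in range(len(f))) for d in range(dim)]


# Hypothetical toy weights: 3 features over a 2-dimensional activation.
W_ENC = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]
B_ENC = [0.0, 0.0, 0.0]
W_DEC = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]  # one direction per feature

activation = [2.0, 0.0]
features = sae_encode(activation, W_ENC, B_ENC)   # only feature 0 is active
reconstruction = sae_decode(features, W_DEC)      # matches the input here
```

In this toy case only one of the three features fires, which is the sparsity property that makes individual features candidates for human-readable concepts.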

For a company like Anthropic, which builds its products around a Constitutional AI framework, this work has direct safety implications. If representations of emotional states can be reliably identified and measured within a model's activations, it could lead to more robust safeguards. For instance, a monitoring system could track a model's activations for representations of high frustration or agitation during a prolonged interaction and trigger a de-escalation protocol.
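As a rough sketch of what such a safeguard might look like, the snippet below projects per-turn activations onto a hypothetical "frustration" direction and flags the conversation once the score stays above a threshold for several consecutive turns. The direction vector, threshold, patience value, and activations are all made-up assumptions for illustration; nothing here reflects a published Anthropic mechanism.

```python
from typing import Sequence


def probe_score(activation: Sequence[float], direction: Sequence[float]) -> float:
    """Project an activation onto a (hypothetical) concept direction."""
    return sum(a * d for a, d in zip(activation, direction))


def should_deescalate(scores: Sequence[float], threshold: float, patience: int) -> bool:
    """Return True once `patience` consecutive scores exceed `threshold`."""
    streak = 0
    for s in scores:
        streak = streak + 1 if s > threshold else 0
        if streak >= patience:
            return True
    return False


# Hypothetical direction and per-turn activations (2 dimensions for brevity).
FRUSTRATION_DIRECTION = [0.0, 1.0]
turn_activations = [[1.0, 0.2], [0.5, 0.9], [0.1, 1.4], [0.3, 1.1]]

scores = [probe_score(a, FRUSTRATION_DIRECTION) for a in turn_activations]
triggered = should_deescalate(scores, threshold=0.8, patience=3)
```

Requiring several consecutive high scores, rather than a single spike, is one simple way to keep a noisy probe from firing on isolated turns.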

What We Don't Know Yet

The social media announcement provides no details on methodology, model size, datasets, or specific findings. Key questions remain unanswered:

- Methodology: Does the paper use supervised probing, unsupervised dictionary learning, or causal intervention techniques?

- Models Tested: Is the research conducted on Claude 3.5 Sonnet, the rumored Claude 4, or a range of model scales?

- Key Results: What evidence do the authors present for the existence of coherent emotion concepts? Can these concepts be manipulated to steer model output?

- Benchmarks: Are there new quantitative benchmarks for evaluating emotion concept fidelity in LLMs?

Until the full paper is available for review, its technical contributions and claims cannot be properly assessed.

gentic.news Analysis

This publication is a direct continuation of Anthropic's core research trajectory, which has consistently prioritized interpretability and safety over pure scale or capability benchmarks. It follows their seminal work on Constitutional AI and more recent publications on scaling monosemanticity and model organisms for safety research. Where much of the industry is focused on frontier evaluations like GPQA or SWE-Bench, Anthropic is investing deeply in understanding the mind of the model itself.

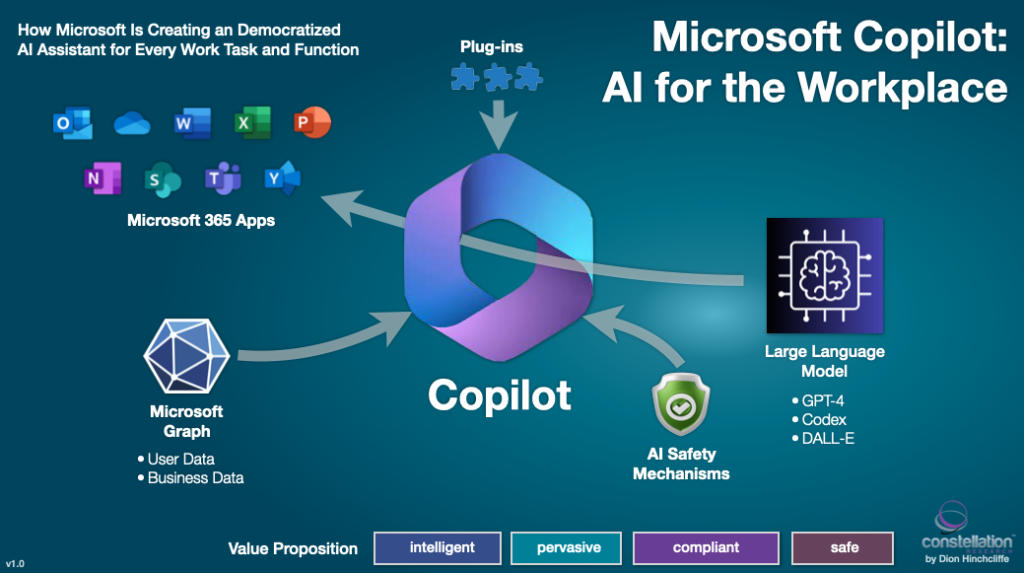

The timing is also notable. As the industry anticipates the next generation of frontier models, understanding and controlling their internal states becomes paramount. This research aligns with a broader trend we've covered, including OpenAI's work on superalignment and weak-to-strong generalization, and Google DeepMind's investigations into model self-correction. The race is no longer just about who has the most capable model, but about who can build the most knowable and steerable model.

If the paper's findings are robust, they could have immediate practical implications for developers using Claude's API. It might enable new forms of "emotional steering" in applications, allowing for finer-grained control over the tone and affective trajectory of long conversations in therapy bots, creative writing assistants, or customer service agents. More importantly, it advances the fundamental science of machine psychology, moving us from observing model behavior to understanding its internal causes.
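One common way to picture this kind of steering, assuming a concept corresponds to a direction in activation space, is to shift an activation along that direction by a chosen strength. The sketch below uses a made-up "calm" direction and toy numbers; it is an illustration of the general idea, not the paper's method or any feature of Claude's API.

```python
from typing import List, Sequence


def projection(activation: Sequence[float], direction: Sequence[float]) -> float:
    """Measure how strongly an activation points along a direction."""
    return sum(a * d for a, d in zip(activation, direction))


def steer(activation: Sequence[float], direction: Sequence[float], strength: float) -> List[float]:
    """Shift an activation along a concept direction by `strength`."""
    return [a + strength * d for a, d in zip(activation, direction)]


# Hypothetical unit-length concept direction and activation.
CALM_DIRECTION = [0.0, 1.0]
original = [0.5, -1.0]

steered = steer(original, CALM_DIRECTION, strength=2.0)
before = projection(original, CALM_DIRECTION)  # negative: pointing away from "calm"
after = projection(steered, CALM_DIRECTION)    # positive after the shift
```

The component of the activation orthogonal to the direction is left unchanged, which is why this style of intervention is often described as targeted.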

Frequently Asked Questions

What is the 'Emotion Concepts' paper about?

The paper investigates how large language models internally represent and use abstract concepts related to human emotions, such as happiness or anger. It's a study in mechanistic interpretability, aiming to map these fuzzy concepts to specific patterns of activity within the model's neural network.

Why is Anthropic researching emotions in AI?

Anthropic's primary focus is AI safety and alignment. Understanding if and how an AI model forms concepts of human emotions is critical for predicting its behavior, ensuring it remains helpful and harmless, and building systems that can robustly align with complex human values and social contexts.

Does this mean AI models like Claude actually feel emotions?

Nothing in the announcement suggests that. Based on the title, the research concerns how models represent the concept of emotions, not whether models experience subjective feelings. It is analogous to studying how a model represents the concept of "gravity" or "democracy": an analysis of learned information structures, not an attribution of internal experience.

When will the full paper be available?

As of this reporting, the paper has been announced but not yet linked or published on a preprint server like arXiv. The AI research community is awaiting its release to evaluate the methodology and results in detail.