Omar Saadoun, founder of the DAIR.AI research institute, has announced a significant new capability for his PaperWiki platform: AI agents that generate personalized survey papers on academic topics. This feature leverages a user's personal, LLM-generated knowledge base of research papers to create tailored, comprehensive overviews of a field.

What Happened

In a post on X, Saadoun said that the AI agents within PaperWiki can now produce personalized survey papers. These surveys are synthesized from a user's own curated collection of papers, which is maintained as a knowledge base generated and updated by large language models.

Saadoun highlighted that survey papers remain one of the most effective ways to track progress in a fast-moving research field. The new agentic capability automates the labor-intensive process of literature review and synthesis, creating a dynamic resource that evolves with the user's interests and the latest publications.

How It Works: Personalized, Self-Improving Knowledge

The core of this feature is a personal paper knowledge base. Users build this base, which is then processed and structured by LLMs. AI agents act on this knowledge base, identifying connections, trends, and key papers within a specified topic to generate a coherent survey.
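Saadoun's post does not describe PaperWiki's internals, so the following is only a minimal sketch of the general idea: filter a personal knowledge base by topic, group the matching papers by theme, and render an outline. The `Paper` fields, the `build_outline` and `render_survey` functions, and the hand-assigned tags are all hypothetical stand-ins for what an LLM-driven pipeline might produce.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class Paper:
    title: str
    year: int
    topics: set[str]  # hypothetical: topic tags an LLM might assign
    themes: set[str] = field(default_factory=set)  # hypothetical sub-themes


def build_outline(knowledge_base: list[Paper], topic: str) -> dict[str, list[Paper]]:
    """Group papers tagged with `topic` by theme, newest first within each theme."""
    outline = defaultdict(list)
    for paper in knowledge_base:
        if topic not in paper.topics:
            continue
        # Papers without any theme land in a catch-all section.
        for theme in sorted(paper.themes) or ["uncategorized"]:
            outline[theme].append(paper)
    for papers in outline.values():
        papers.sort(key=lambda p: p.year, reverse=True)
    # Sort sections alphabetically so the outline is deterministic.
    return dict(sorted(outline.items()))


def render_survey(topic: str, outline: dict[str, list[Paper]]) -> str:
    """Render the outline as plain text; a real agent would write prose here."""
    lines = [f"Survey: {topic}"]
    for theme, papers in outline.items():
        lines.append(f"\n{theme}")
        for paper in papers:
            lines.append(f"- {paper.title} ({paper.year})")
    return "\n".join(lines)
```

In this sketch the "synthesis" step is just grouping and sorting. An agentic system would presumably replace `render_survey` with model calls that summarize each theme and relate the papers to one another.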

Crucially, Saadoun describes these surveys as "self-improving and always up-to-date." As new papers are added to the user's knowledge base or published in the relevant field, the AI agents can incorporate them, refreshing the survey document automatically. This addresses a major pain point in academic research: the rapid obsolescence of static literature reviews.
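One way to picture an "always up-to-date" survey is as an incremental refresh: each run merges only the papers the survey has not yet covered and bumps a revision counter when something changes. The sketch below illustrates that pattern under stated assumptions. The `LivingSurvey` class, its fields, and the dictionary layout of the knowledge base are hypothetical and are not taken from PaperWiki.

```python
from dataclasses import dataclass, field


@dataclass
class LivingSurvey:
    topic: str
    entries: dict[str, str] = field(default_factory=dict)  # paper id -> summary line
    revision: int = 0

    def refresh(self, knowledge_base: dict[str, dict]) -> list[str]:
        """Merge on-topic papers not yet covered; return the ids that were added."""
        added = []
        for paper_id, paper in knowledge_base.items():
            if paper_id in self.entries or self.topic not in paper["topics"]:
                continue
            # Placeholder summary; an LLM call would go here in a real system.
            self.entries[paper_id] = f'{paper["title"]} ({paper["year"]})'
            added.append(paper_id)
        if added:
            self.revision += 1  # only bump when the survey actually changed
        return added


# Usage: the second refresh is a no-op until a new on-topic paper appears.
kb = {"p1": {"title": "Paper A", "year": 2023, "topics": ["peft"]}}
survey = LivingSurvey("peft")
survey.refresh(kb)
kb["p2"] = {"title": "Paper B", "year": 2024, "topics": ["peft"]}
survey.refresh(kb)
```

Tracking covered paper ids keeps each refresh proportional to what is new. A real system would likely also need to revise existing sections when a new paper changes the picture, which this sketch does not attempt.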

Saadoun shared that these dynamically generated topic wiki pages are among his "favorite views" in the evolving PaperWiki platform. He plans to share this capability with students at the DAIR.AI Academy, enabling their own AI agents to "work on the frontier" of research.

Context and Background

PaperWiki is part of Saadoun's broader vision for AI-augmented research, developed under the DAIR.AI institute. The platform aims to transform how researchers interact with the scientific literature, moving from passive reading to active, AI-assisted synthesis and knowledge management.

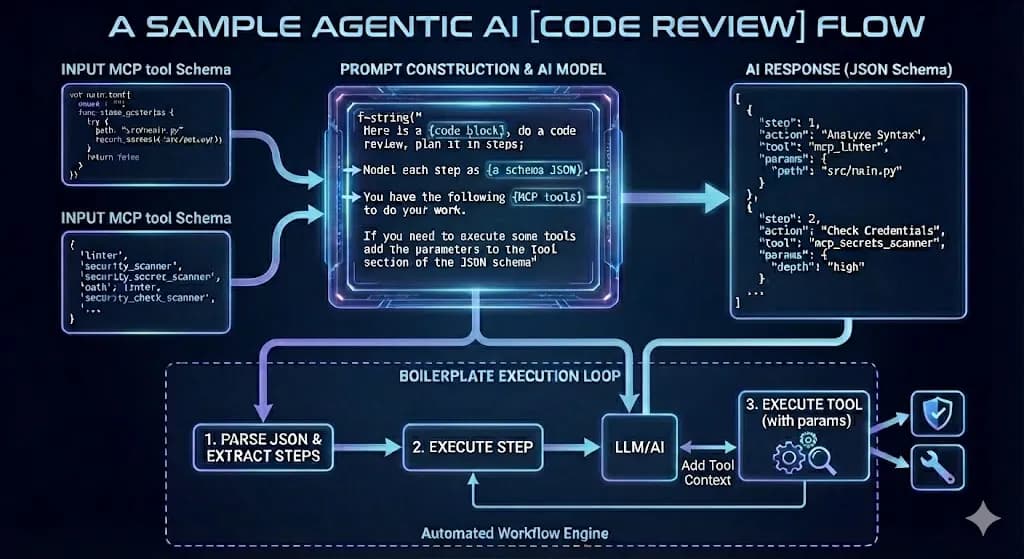

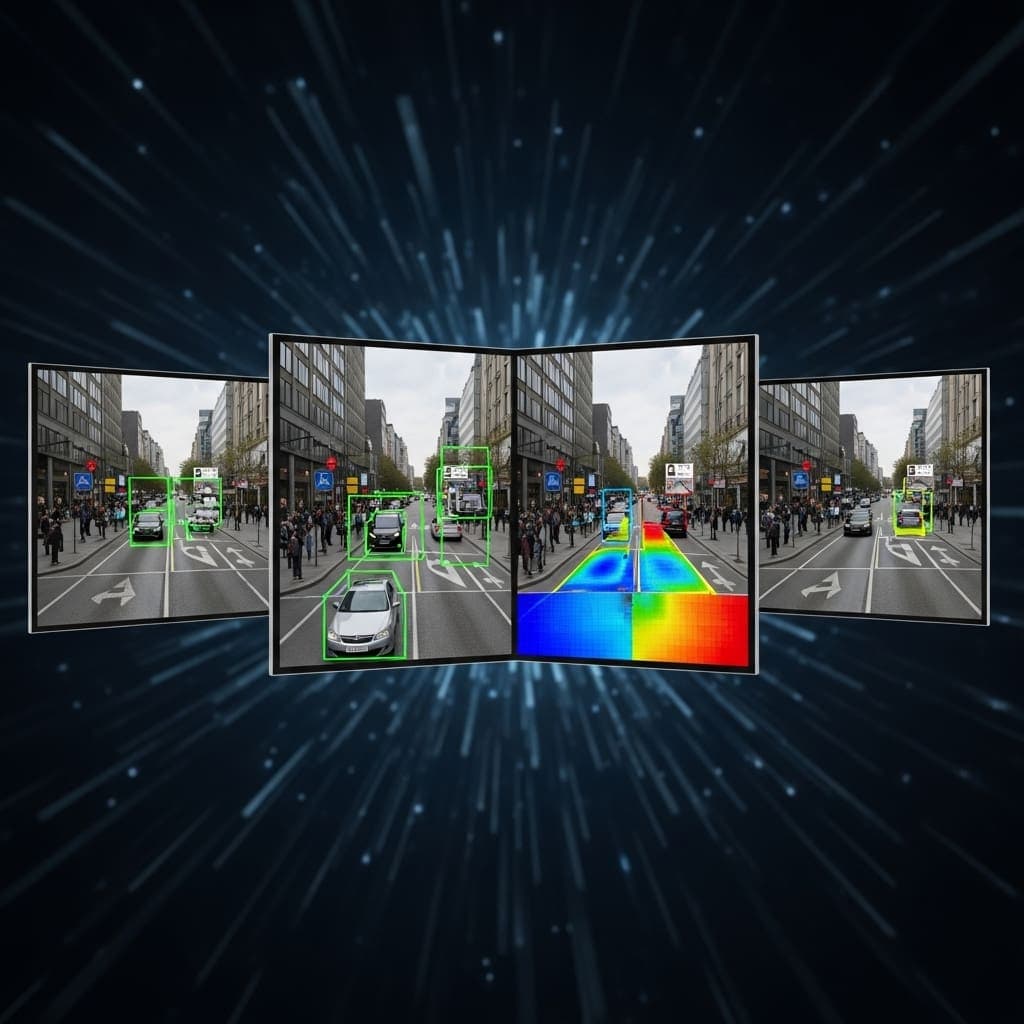

This development follows a clear trend toward agentic AI for research. Instead of merely retrieving papers, systems are now being designed to reason over content, draw connections, and produce novel scholarly outputs like surveys. This positions PaperWiki alongside other research tools exploring AI co-authorship and synthesis.

gentic.news Analysis

This announcement is a logical and significant step in the evolution of AI for Science (AI4S). For over a year, the focus has been on AI that can read papers (e.g., for Q&A or summarization). Saadoun's work pushes into the territory of AI that can write scholarly content, specifically synthesis, which is a higher-order cognitive task. The personalization angle is key: a generic survey on "large language models" is far less valuable than one tailored to a researcher's specific focus on, say, "efficient fine-tuning methods for medical LLMs."

The concept of a "self-improving" survey directly tackles academia's velocity problem. In fields like ML, a traditional survey can be outdated within months. An AI agent that continuously ingests papers from sources such as arxiv-sanity, OpenReview, or a user's Zotero library could maintain a living document, which would be a powerful tool for PhD students and principal investigators alike.

However, the real test will be in quality and trust. Generating a coherent list of papers is one thing; producing a survey with accurate technical descriptions, critical analysis, and insightful commentary on future directions is another. The AI's output will likely require careful human verification, especially for nuanced claims. This move also intensifies the conversation about AI's role in scholarly authorship. If an AI generates a survey that a researcher then publishes, what are the ethical and attribution norms? Saadoun's plan to deploy this at the DAIR.AI Academy makes it a live experiment in next-generation research workflows.

Frequently Asked Questions

What is PaperWiki?

PaperWiki is a platform founded by Omar Saadoun of DAIR.AI that uses AI to help researchers manage, synthesize, and generate insights from academic papers. It goes beyond simple paper search to build personalized knowledge bases and, now, to generate survey papers.

How does the AI generate a personalized survey?

The AI agents within PaperWiki analyze a user's personal knowledge base of research papers, which is built and structured using LLMs. The agents identify the key papers, themes, methodologies, and trends within a user-specified topic area, then synthesize this information into a coherent survey-style document.

What does "self-improving" mean for these AI-generated surveys?

It means the survey document is not static. As new relevant papers are published and added to the system (either automatically or by the user), the AI agents can incorporate this new information, update existing sections, and revise the survey to reflect the latest state of the field without requiring manual rewriting.

Who is Omar Saadoun?

Omar Saadoun is a researcher and the founder of DAIR.AI (Distributed AI Research Institute), an organization focused on open, transparent, and accessible AI research. He is known for his work on practical AI tools and educational resources for the research community.