OpenBMB, a prominent Chinese open-source AI community, has announced the release of VoxCPM 2, a new text-to-speech (TTS) model. The announcement, made via social media, positions the model as standing "shoulder to shoulder" with Qwen3-TTS, the TTS component of Alibaba's Qwen large language model series. This release adds another openly licensed voice synthesis model to the ecosystem, this one from China's rapidly advancing AI research community.

What Happened

On April 15, 2026, the OpenBMB account on X (formerly Twitter) announced that "VoxCPM 2 is live!" The post framed it as "another open-source AI #TTS model from China" and explicitly compared its capabilities to those of Qwen3-TTS. The announcement was subsequently reposted by AI commentator Rohan Paul (@rohanpaul_ai), bringing it to a wider technical audience. The original post did not include detailed technical specifications, benchmark scores, or release artifacts like GitHub repository links or model weights.

Context

OpenBMB (Open Lab for Big Model Base) is a community and toolkit project focused on large-scale pre-trained models, originally launched by researchers from Tsinghua University. The group is known for releasing open-source models and training frameworks, including the original CPM (Chinese Pretrained Models) series and the BMTools plugin system for LLMs. A "VoxCPM" model, presumably a voice-focused variant, was part of their earlier portfolio.

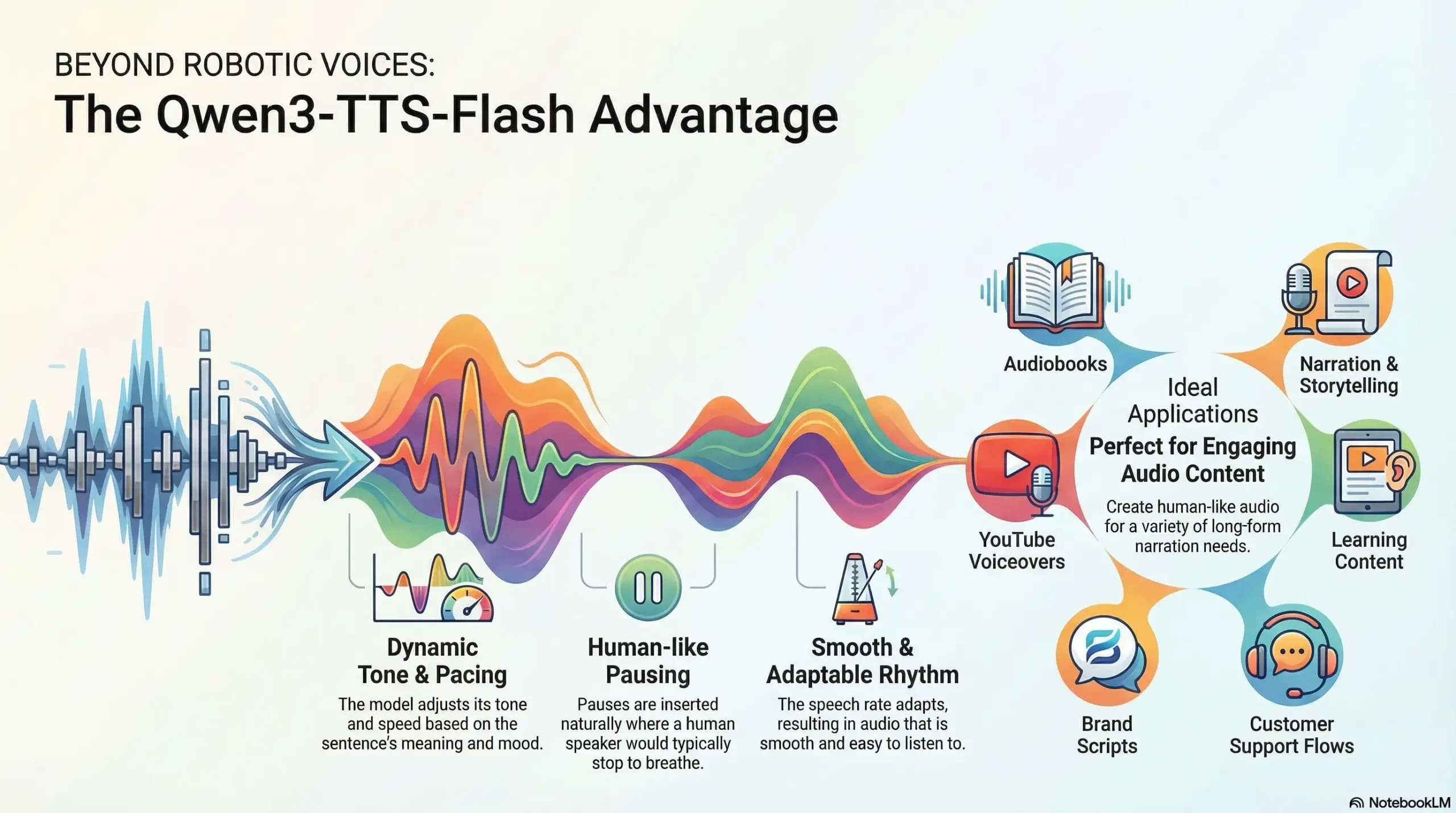

Qwen3-TTS is the text-to-speech system developed by Alibaba's Qwen team, released alongside the Qwen3 series of LLMs in late 2025. It is recognized for high-quality, expressive Mandarin speech synthesis and has been a benchmark for open-source Chinese TTS. The explicit comparison suggests OpenBMB is targeting parity with a leading model in the same language domain.

What We Know (and Don't Know)

The announcement is a launch declaration, not a research paper. Key details typically required for technical evaluation are absent from the source:

- Model Architecture: Unspecified. The post does not say whether the model follows a VITS-style, VALL-E-style, autoregressive diffusion, or other design.

- Training Data: Scale and composition of audio-text pairs are unknown.

- Performance Metrics: No subjective Mean Opinion Score (MOS) and no objective measures such as word error rate (WER) or speaker-similarity scores were provided.

- Voice Cloning & Control: Capabilities for zero-shot voice cloning, emotion control, or prosody adjustment are not detailed.

- Release Format: It is unclear if the model is released as weights, a demo, or through an API.
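
Once weights or a demo are available, the objective metrics named in the list above can be checked independently. The following is a minimal sketch of how such scoring is commonly done, not OpenBMB's or Alibaba's evaluation code. It assumes the synthesized audio has already been transcribed by a speech recognizer of your choice and that speaker embeddings have already been extracted by a separate model; the function names `error_rate`, `word_error_rate`, `char_error_rate`, and `cosine_similarity` are illustrative, not part of any library.

```python
from math import sqrt
from typing import Sequence


def error_rate(ref: Sequence[str], hyp: Sequence[str]) -> float:
    """Token-level edit distance divided by the number of reference tokens."""
    if not ref:
        raise ValueError("reference must contain at least one token")
    # prev[j] is the edit distance between the reference prefix processed
    # so far and the first j hypothesis tokens.
    prev = list(range(len(hyp) + 1))
    for i, ref_tok in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_tok in enumerate(hyp, start=1):
            cost = 0 if ref_tok == hyp_tok else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1] / len(ref)


def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER on whitespace-separated words (suits English text)."""
    return error_rate(reference.split(), hypothesis.split())


def char_error_rate(reference: str, hypothesis: str) -> float:
    """CER on individual characters (common for Mandarin, which has no word spaces)."""
    return error_rate(list(reference), list(hypothesis))


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two speaker-embedding vectors of equal length."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = sqrt(sum(x * x for x in a))
    norm_b = sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("embeddings must be non-zero")
    return dot / (norm_a * norm_b)


if __name__ == "__main__":
    # One substitution ("sat" -> "sit") and one deletion ("the"): 2 errors over 6 words.
    print(word_error_rate("the cat sat on the mat", "the cat sit on mat"))
    # One substituted character out of four.
    print(char_error_rate("你好世界", "你好时界"))
```

Running both models on the same prompt set and comparing these scores gives a like-for-like check of the "shoulder to shoulder" claim. MOS, by contrast, requires human listeners and cannot be computed this way.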

gentic.news Analysis

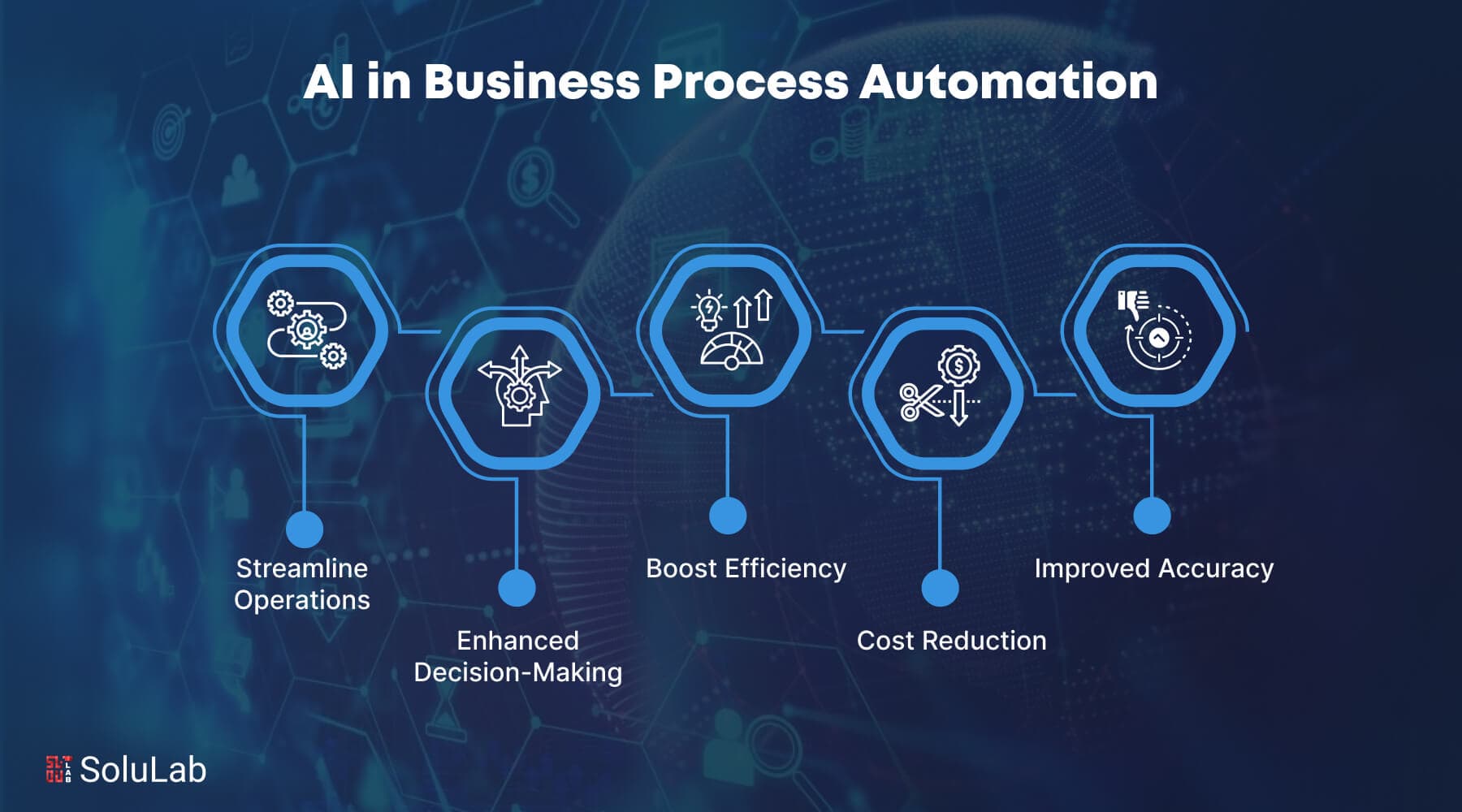

This launch continues two significant trends we've tracked closely. First, it underscores the intense activity in the Chinese open-source AI scene, where groups like OpenBMB, 01.AI, and Qwen are rapidly iterating and releasing capable models across modalities. As we noted in our February 2026 analysis of the Qwen3.5 series launch, the competitive pressure in this ecosystem is driving faster release cycles and direct model-to-model comparisons, as seen with the "shoulder to shoulder" claim for VoxCPM 2.

Second, it highlights the growing specialization within open-source LLM families. The original CPM models were general-purpose text generators. The development of VoxCPM 2 represents a strategic pivot towards a vertical, modality-specific tool (TTS) that can compete with the integrated offerings of larger players like Alibaba. This mirrors a broader industry pattern where foundational model groups spin out specialized models for vision, audio, and reasoning.

For practitioners, the immediate question is whether VoxCPM 2's open-source license and purported quality will make it a viable, more accessible alternative to Qwen3-TTS for Chinese speech synthesis tasks. Without published benchmarks or available weights, however, the parity claim remains unverified. The burden is on OpenBMB to follow this announcement with the technical substantiation the open-source community expects.

Frequently Asked Questions

What is VoxCPM 2?

VoxCPM 2 is a newly announced open-source text-to-speech (TTS) AI model developed by the OpenBMB community in China. It is designed to convert written text into spoken audio, with an implied focus on high-quality Mandarin Chinese synthesis.

How does VoxCPM 2 compare to Qwen3-TTS?

According to its developers, VoxCPM 2 stands "shoulder to shoulder" with Qwen3-TTS, Alibaba's well-regarded TTS model. This suggests they aim for comparable output quality and expressiveness. A definitive technical comparison requires the release of benchmarks and model weights for independent evaluation.

Where can I find the VoxCPM 2 model?

As of this announcement, specific release details such as a GitHub repository, Hugging Face model page, or demo link were not provided in the source. Interested developers should monitor the official OpenBMB channels for the subsequent release of code and weights.

Why is another open-source TTS model significant?

The proliferation of high-quality, open-source TTS models lowers the barrier to entry for developers building voice applications, from assistants to audiobook narration. It also reduces dependency on proprietary, paid APIs and fosters innovation through community iteration and fine-tuning.