A new AI model named Mythos has been described by its creators as "very powerful, and should feel terrifying." The team behind it has released a model card and is taking a cautious approach, previewing the technology with a select group of cyber defenders rather than making it generally available.

What Happened

On April 26, 2026, Boris Cherny, a software engineer and entrepreneur, announced the existence of the Mythos AI model via a social media post. The core message was that the model possesses significant, potentially alarming capabilities. Given those capabilities, the development team has chosen a controlled, responsible disclosure strategy, sharing the model first with cybersecurity professionals who can assess its implications and potential defensive uses.

A model card (a document that describes a machine learning model's performance, limitations, and intended use) has been published, offering a technical glimpse into Mythos.

Context

The announcement reflects a growing trend in the AI industry concerning the responsible deployment of highly capable models. Following high-profile incidents involving earlier models, many developers and researchers are advocating for staged or restricted releases, especially for systems with dual-use potential (capable of both beneficial and harmful applications). Previewing a powerful model with security experts allows for vulnerability assessment and the development of potential countermeasures before a wider audience can access it.

gentic.news Analysis

This cautious rollout for Mythos is a direct response to the industry-wide reckoning on AI safety that intensified throughout 2024 and 2025. It follows a pattern established by other entities working on frontier models, such as Anthropic's structured access programs and OpenAI's preparedness framework. The decision to engage cyber defenders first is particularly strategic; it borrows from coordinated vulnerability disclosure by giving defenders a head start, potentially allowing them to harden systems and develop detection tools before offensive applications become widespread.

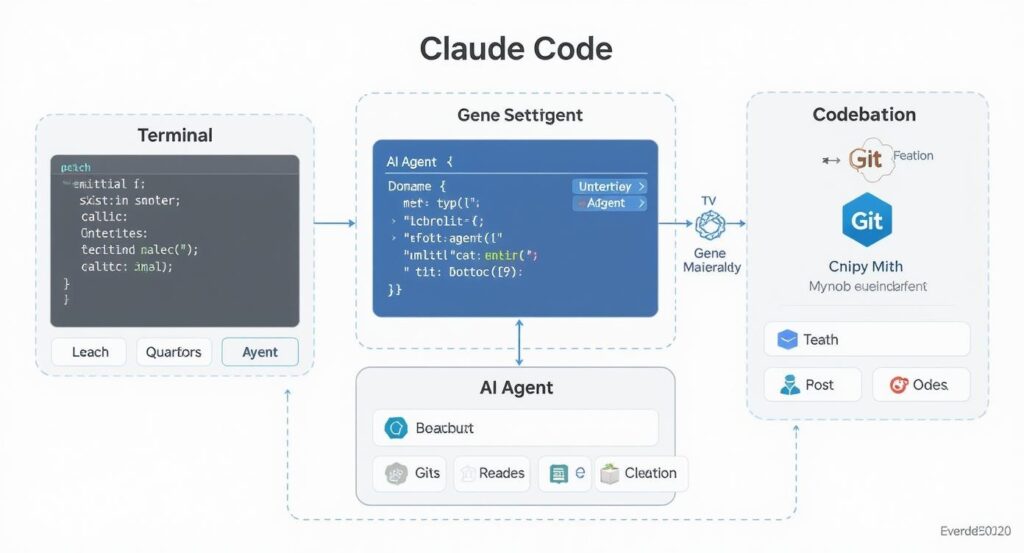

The model's description as "terrifying" aligns with an ongoing trend we've covered, where each new generation of models demonstrates unexpected emergent capabilities. As we reported in our analysis of the GPT-5 post-training process, the scaling laws continue to produce qualitative leaps in reasoning and tool-use that are difficult to predict at smaller scales. If Mythos represents a similar leap, particularly in areas like autonomous system navigation, code generation, or social engineering simulation, its controlled preview is not just prudent but necessary. This approach may become a de facto standard for powerful AI releases, moving beyond voluntary commitments to structured, tiered access models.

Frequently Asked Questions

What is the Mythos AI model?

Mythos is a newly announced artificial intelligence model that its creators describe as "very powerful, and should feel terrifying." Details about the model are provided in its published model card.

Why is Mythos being shown only to cyber defenders?

The developers are previewing Mythos with cybersecurity professionals as a responsible disclosure practice. This allows experts to evaluate the model's potential for misuse, assess its capabilities in offensive security contexts, and develop defensive strategies and tools before any broader release that could be exploited maliciously.

Where can I find the Mythos model card?

The model card has been published and is accessible via the link provided in the original announcement. Model cards typically include information on the model's intended uses, limitations, performance metrics, and training data.

Does this mean Mythos is a malicious AI?

No. The description "terrifying" likely refers to the model's potent capabilities, which could be misused, not to any intrinsic malicious intent. The developers' choice to preview it with defenders first is a safety measure, indicating that the model is powerful enough to warrant careful control over its initial exposure.